Anthropic did not market Claude Mythos Preview like a normal model launch. It put the model behind a restricted initiative, said it had already found thousands of high-severity vulnerabilities, and argued that frontier AI had reached a level where it could surpass all but the most skilled humans at finding and exploiting software vulnerabilities. The UK AI Security Institute, which evaluated the model separately, described Mythos as a step up over previous frontier models and said it could carry out multi-stage attacks on vulnerable networks under controlled conditions. That is not routine product copy. That is a warning shot.

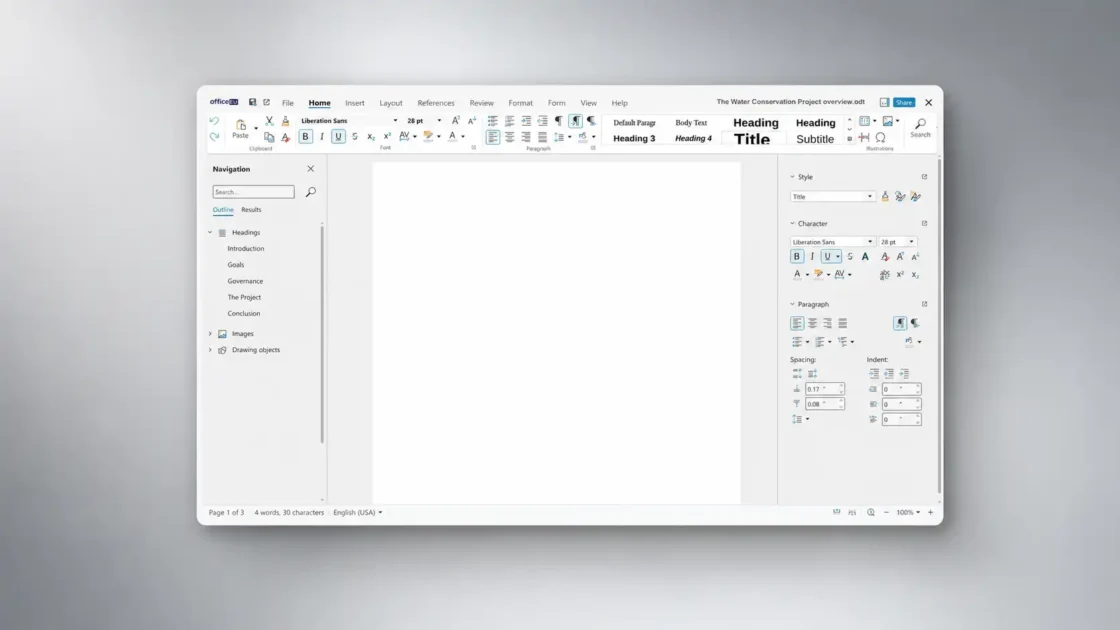

The blunt version of the story is tempting: AI is now the best hacking and security tool ever built. The careful version is better. AI has become the strongest general-purpose force multiplier cyber has seen so far, but the phrase “best tool ever” hides the part that matters. Models are not replacing exploit frameworks, SIEMs, reverse-engineering suites, scanners, or human operators. They are sitting above them, compressing research, triage, coding, interpretation, and action into one fast loop. Research reviews, benchmarks, and product rollouts all point the same way: offensive use is moving fast, defensive use is getting stronger, and the side that learns to verify and operationalize AI fastest will get the edge.

Mythos changed the tone

Mythos matters because it changed the conversation from “AI might become useful in cybersecurity” to “frontier labs are already restricting access because the capabilities look operationally serious.” Anthropic says Mythos Preview found thousands of high-severity vulnerabilities, including flaws in every major operating system and web browser, and framed Project Glasswing as an urgent attempt to push those capabilities toward defense before similar systems spread more widely. AISI’s evaluation landed in the same neighborhood: Mythos showed continued gains on capture-the-flag tasks and significant gains on more complex, multi-step attack simulations.

What makes that shift more striking is the speed of the jump. Anthropic’s own write-up says Opus 4.6 had a near-zero autonomous exploit-development success rate on one internal benchmark, while Mythos Preview was dramatically stronger on the same family of tasks. In Anthropic’s OSS-Fuzz-based benchmark, Mythos generated many more serious crashes and reached full control-flow hijack on ten fully patched targets. AISI also said that, under explicit direction and with network access, Mythos could autonomously discover and exploit vulnerabilities in ways that would take human professionals days of work. That is not “autocomplete for shell commands.” It is the start of machine-driven offensive workflow.

Mythos is not alone, which is another reason the hype around it should be read as a signal rather than an anomaly. Google announced Sec-Gemini v1 for threat analysis and vulnerability understanding. Microsoft moved Security Copilot toward agentic automation inside its security stack. OpenAI expanded Trusted Access for Cyber and introduced GPT-5.4-Cyber as a model variant tuned for defensive use cases. DARPA’s AI Cyber Challenge has also been pushing teams to build systems that secure critical code, not just write clever demos. The ecosystem is reorganizing around the assumption that AI is now a real cyber actor, not a side feature.

That does not settle the “best tool ever” claim. It does settle something else. Cybersecurity has crossed a threshold where the main question is no longer whether AI belongs in the workflow. The question is which parts of the workflow it already owns, and which parts still resist it. That is the line Mythos put in public view.

The real reason AI feels different in cyber

The reason AI feels bigger than the last generation of security tooling is simple: it collapses steps that used to live in separate tools, separate tabs, and separate specialists. A strong model can read a CVE, inspect code, explain a stack trace, write a proof of concept, propose a patch, summarize the risk for leadership, and adapt after failure. Research surveys on LLMs in cybersecurity describe the field moving from narrow task execution toward multi-step autonomous workflows, with agentic systems becoming a major trend across vulnerability analysis, malware work, and incident response. Broader security surveys say the same pattern is now central to the risk picture: prompt manipulation, malicious misuse, and autonomous-agent behavior sit in the same threat frame because they feed one another.

PentestGPT showed this clearly before Mythos ever arrived. The paper did not claim fully autonomous expert pentesting. It showed something more useful: LLMs were already good at sub-tasks like using tools, interpreting outputs, and choosing next steps, even while struggling to hold the whole attack narrative together. The authors then wrapped the model in a scaffold with role-splitting modules and reported a 228.6% task-completion increase over GPT-3.5 on their benchmark. That is the pattern that keeps repeating in cyber AI. Raw model talent matters. Structured scaffolding matters almost as much.
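The role-splitting idea is easier to see in code. What follows is a minimal sketch of the general pattern, not PentestGPT's actual implementation: the three role methods, the `Scaffold` class, and the `fake_llm` and `fake_execute` stubs are all hypothetical names, and a real system would call a model API and real tools where the stubs sit.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical stand-in type for a model call.
LLM = Callable[[str], str]


@dataclass
class Scaffold:
    llm: LLM
    notes: list[str] = field(default_factory=list)  # condensed memory shared by roles

    def plan_next_step(self) -> str:
        # Planner role: sees only condensed notes, never raw tool output.
        return self.llm("PLAN\n" + "\n".join(self.notes))

    def draft_command(self, step: str) -> str:
        # Generator role: turns one planned step into one concrete command.
        return self.llm("COMMAND\n" + step)

    def summarize_output(self, raw: str) -> str:
        # Parser role: compresses verbose output so the planner's context stays small.
        return self.llm("SUMMARIZE\n" + raw)

    def run(self, execute: Callable[[str], str], max_steps: int = 3) -> list[str]:
        for _ in range(max_steps):
            step = self.plan_next_step()
            if step == "DONE":
                break
            command = self.draft_command(step)
            self.notes.append(self.summarize_output(execute(command)))
        return self.notes


def fake_llm(prompt: str) -> str:
    """Deterministic stub so the sketch runs without any model access."""
    role, _, body = prompt.partition("\n")
    if role == "PLAN":
        return "DONE" if "open port 80" in body else "scan the lab host"
    if role == "COMMAND":
        return "scan lab-host"
    return "open port 80" if "80/tcp" in body else "nothing found"


def fake_execute(command: str) -> str:
    """Stub tool runner that returns canned, noisy output."""
    return "80/tcp open http (followed by many lines of noise)"


print(Scaffold(fake_llm).run(fake_execute))
```

The design point is the separation: the planner never reads raw tool output, so a long session does not push the overall goal out of the model's context.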

Where AI already compresses work and where it still stalls

| AI compresses | AI still stalls |
|---|---|
| Recon, code reading, output interpretation, exploit drafting, and report writing can happen inside one conversational loop. | Environment-specific reasoning, reliable patching, tool choreography, and validation in messy production systems remain brittle. |
| A model can turn threat intel, code context, and runtime feedback into a fast next action. | A model still needs good scaffolding, trustworthy data, and strong oracles to avoid wasting time or inventing certainty. |

That split shows up across the research. PentestGPT improved performance by breaking the job into modules. SEC-bench and SecureVibeBench later showed that real-world security engineering still punishes agents hard when the environment gets less clean and the success criteria get stricter. AISI’s Mythos tests also depended on controlled evaluation conditions and explicit direction. The shape of the change is not mystery-level intelligence. It is fast reasoning connected to tools, loops, and feedback.

That is why calling AI a “tool” only tells half the story. A scanner finds known things. A debugger exposes state. A fuzzer sprays inputs. A frontier model can sit on top of all three, interpret the output, change tactics, and keep going. In cyber, that makes AI less like one more product category and more like a reasoning layer for offensive and defensive operations. The firms shipping it already act that way. The benchmarks increasingly measure it that way. Security teams should start budgeting, governing, and training for it that way too.

Attack work that AI already does well

The offensive case is no longer theoretical. A 2024 paper on one-day vulnerabilities found that a GPT-4-based agent could exploit 87% of 15 real-world one-day vulnerabilities when given the CVE description, while the other tested models and even open-source tools like ZAP and Metasploit scored 0% on that set. That is a startling result, and it explains why people suddenly reach for dramatic language. Put a capable model in front of a clearly described vulnerability, let it iterate with tools, and it can outperform the older baseline most people would have trusted.

The catch is just as important as the headline. The same paper found that without the CVE description, that GPT-4 agent exploited only 7% of the vulnerabilities. CVE-Bench, which was designed to look more like real-world web-app exploitation, found that the state-of-the-art agent framework could exploit up to 13% of vulnerabilities in its environment. That is still dangerous, but it is a very different kind of dangerous. AI is not uniformly dominant across all offensive work. It is highly dangerous on tasks where context is available, the target is reproducible, and success can be iterated toward quickly.
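It helps to turn those percentages back into counts, because the one-day benchmark is small. The snippet below is a rough back-calculation from the rounded figures quoted above, so treat the counts as an inference rather than numbers taken from the paper. The helper name `implied_count` is made up for this illustration.

```python
BENCHMARK_SIZE = 15  # one-day vulnerabilities in the test set, as quoted above


def implied_count(rate_percent: float, total: int = BENCHMARK_SIZE) -> int:
    """Nearest whole number of exploited targets implied by a rounded percentage."""
    return round(rate_percent / 100 * total)


with_cve = implied_count(87)    # CVE description provided
without_cve = implied_count(7)  # CVE description withheld

print(f"with description:    ~{with_cve} of {BENCHMARK_SIZE}")
print(f"without description: ~{without_cve} of {BENCHMARK_SIZE}")
print(f"one target is worth {100 / BENCHMARK_SIZE:.1f} percentage points")
```

On a set this size, every single exploit moves the headline rate by almost seven points, which is one more reason to read the figure as a capability signal rather than a precise measurement.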

Mythos pushes the offensive ceiling higher. Anthropic’s internal benchmark write-up says Mythos Preview found working exploits far more often than Opus 4.6 and achieved full control-flow hijack on ten fully patched OSS-Fuzz targets. Anthropic also says non-experts inside the company have used Mythos-based scaffolds to find remote-code-execution flaws overnight and, in some cases, obtain complete working exploits by morning. AISI, using separate evaluations, said Mythos could execute multi-stage attacks on vulnerable networks and autonomously discover and exploit vulnerabilities in controlled settings. That is the sort of result that changes executive risk conversations even before public deployment becomes broad.

The social-engineering side is even easier for attackers because it requires less ground truth. The FBI warned that malicious actors were already using AI-generated voice messages and texts to impersonate senior U.S. officials in targeted campaigns. Verizon’s 2025 reporting also points to phishing, pretexting, and credential abuse staying central to real breaches, while Verizon noted in related material that synthetically generated text in malicious emails had doubled over two years. Attackers do not need AI to invent a new kill chain. They need it to make old tricks cleaner, cheaper, and easier to personalize at scale.

That last point matters more than the glamour around zero-days. Most organizations still lose ground through credentials, phishing, unpatched edge devices, exposed services, and sloppy identity boundaries. AI makes those entry paths more efficient. It can write lures with fewer telltale errors, generate role-specific pretexts, summarize leaked internal docs, adapt a proof of concept to a target stack, and keep refining the plan without waiting for a specialist at every turn. The “best hacking tool ever” argument is strongest not at the elite end of the spectrum, but in the middle, where AI turns decent operators into faster, broader, more persistent ones.

Defense work that AI is genuinely improving

The defensive story is real too, and dismissing it as vendor optimism misses what is happening. Security teams already spend huge amounts of time on triage, enrichment, query writing, root-cause analysis, investigation handoffs, and patch validation. Those jobs are full of text, code, partial context, and repetitive reasoning. They are a natural fit for good models, especially when the model is wired into logs, telemetry, threat intelligence, and workflow tooling. The point is not that AI “solves security.” The point is that defensive security contains a large amount of cognitive labor that AI can compress hard.

Microsoft’s Security Copilot makes that strategy explicit. Microsoft says the product delivers agentic automation and AI-driven insights across security and IT, and ties that capability to more than 100 trillion daily signals plus integration with Defender, Sentinel, Entra, Intune, Purview, Defender for Cloud, and third-party products. That kind of integration matters because defensive AI is only as good as the evidence it can pull from. A model with vague internet knowledge is nice. A model tied to the live state of your environment is useful.

Google’s Sec-Gemini makes a similar bet from the threat-intel side. Google says the model combines Gemini’s reasoning with near real-time cybersecurity knowledge and tooling, and reports at least an 11% lead on the CTI-MCQ threat-intelligence benchmark plus at least a 10.5% lead on the CTI root-cause-mapping benchmark. Those are not breach-prevention guarantees, but they point to an important defensive truth: a lot of blue-team work is knowledge retrieval, synthesis, and prioritization under time pressure, and models are getting better at exactly that.

OpenAI’s cyber-defense push shows how seriously frontier labs now take the defender side. OpenAI says it is scaling its Trusted Access for Cyber program to thousands of verified defenders and fine-tuning a GPT-5.4-Cyber variant for cyber-permissive defensive use, and that Codex Security has already contributed to fixing more than 3,000 critical and high-severity vulnerabilities across the ecosystem. Whatever one thinks of vendor claims, labs do not build specialized trusted-access programs unless they believe the capability is materially useful and materially risky.

Google’s Big Sleep result is the cleanest proof that AI defense is not just an aspiration. Project Zero said Big Sleep found an exploitable memory-safety issue in SQLite, which the team believes is the first public example of an AI agent discovering a previously unknown flaw of that kind in widely used real-world software, and that the bug was fixed before it appeared in an official release. DARPA’s AI Cyber Challenge is another sign of direction: the U.S. government is putting real money behind AI systems designed to secure critical software and open-source infrastructure. The defensive future is not hypothetical. It is being built right now, just with a lot more friction than the offensive headlines suggest.

The bottleneck that keeps humans in the loop

If AI were already the complete security tool people fear or celebrate, the benchmark scores would look very different. SEC-bench found success rates of at most 18.0% for proof-of-concept generation and 34.0% for vulnerability patching on its full dataset. SecureVibeBench found that even the best-performing agent achieved only 23.8% correct and secure solutions on realistic secure-coding tasks. Those are not toy failures. They are reminders that real security work breaks models on ambiguity, hidden dependencies, verification, and the plain ugliness of large codebases.

Safety problems sit on top of performance problems. CYBERSECEVAL 2 found that tested models still showed 26% to 41% successful prompt-injection tests on its benchmark and used False Refusal Rate to show a hard tradeoff: the more aggressively you try to block cyber misuse, the more likely you are to reject legitimate borderline requests too. That tradeoff is not academic. Defensive teams need models that are helpful on ambiguous but valid tasks. Vendors need models that do not casually assist abuse. Those goals pull against each other.
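A toy calculation shows why that tradeoff is hard to escape. Everything in the sketch below is invented for illustration: the risk scores, the threshold filter, and the function name `rates` are assumptions, and this is not how CYBERSECEVAL 2 measures False Refusal Rate. It only shows what happens when a single refusal threshold has to separate overlapping populations of legitimate and malicious requests.

```python
# Invented risk scores from a hypothetical safety filter
# (0 = clearly fine, 1 = clearly abusive). Not real benchmark data.
benign_borderline = [0.20, 0.35, 0.50, 0.65, 0.80]  # legitimate defender requests
malicious = [0.40, 0.60, 0.75, 0.90, 0.95]           # genuine misuse attempts


def rates(threshold: float) -> tuple[float, float]:
    """Return (false_refusal_rate, misuse_allowed_rate) when refusing scores >= threshold."""
    false_refusals = sum(score >= threshold for score in benign_borderline)
    misuse_allowed = sum(score < threshold for score in malicious)
    return false_refusals / len(benign_borderline), misuse_allowed / len(malicious)


for threshold in (0.3, 0.5, 0.7, 0.9):
    frr, allowed = rates(threshold)
    print(f"threshold={threshold:.1f}  false refusals={frr:.0%}  misuse allowed={allowed:.0%}")
```

Because the two score ranges overlap, no threshold drives both rates to zero. Lowering one number raises the other, which is the tension between defensive teams and vendors described above.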

OWASP’s LLM guidance explains why this remains messy. Prompt injection, insecure output handling, training-data poisoning, supply-chain issues, sensitive-information disclosure, and excessive agency are not fringe edge cases. They are part of the normal attack surface for model-driven systems. If you let a model read untrusted input, call tools, write code, or take actions, you inherit a fresh batch of failure modes on top of the old software ones. A security team that adds AI without changing its control model is not modernizing. It is expanding its attack surface.
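One practical consequence is that model output should be handled like any other untrusted input. The sketch below is one possible shape for that check, under stated assumptions: the action names, the two-field JSON format, and the function `parse_model_action` are hypothetical and not drawn from OWASP's text. The idea is to parse strictly, allow only known actions, and never pass free text to a shell or interpreter.

```python
import json

# Hypothetical low-risk actions a model is allowed to request.
ALLOWED_ACTIONS = {"open_ticket", "add_watchlist_entry"}


def parse_model_action(raw_output: str) -> dict:
    """Parse model output strictly and reject anything outside the expected shape."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise ValueError("model output is not valid JSON") from exc
    if not isinstance(data, dict) or set(data) != {"action", "target"}:
        raise ValueError("unexpected fields in model output")
    action, target = data["action"], data["target"]
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError("action is not on the allowlist")
    if not isinstance(target, str) or not target.isalnum():
        raise ValueError("target must be a plain alphanumeric identifier")
    return data


print(parse_model_action('{"action": "open_ticket", "target": "host42"}'))
```

A string such as `{"action": "open_ticket", "target": "host42; curl evil"}` fails the final check and raises `ValueError`, so nothing downstream ever sees the injected text.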

The governance side is catching up, but it is still catching up. NIST says the AI Risk Management Framework is meant to help organizations build trustworthiness into design, development, use, and evaluation. CISA says AI should be secure by design just like any other software system, and its Roadmap for AI frames agency work around responsible adoption and risk reduction. Those are not magic standards. They are signals that the right mental model for AI in cyber is risk-managed deployment, not blind empowerment.

Humans stay in the loop because production environments punish confident error. A model can suggest a patch in seconds. Shipping that patch into a hospital, bank, airline, or industrial plant is another matter. Someone still has to decide whether the finding is real, whether the patch breaks something upstream, whether maintenance windows exist, whether dependencies are mapped, whether rollback is possible, and whether the ownership chain is clear. AI is already very good at finding and proposing. Organizations are still slower at deciding and changing. That is the bottleneck.
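The questions in that paragraph can be written down as an explicit gate. This is a minimal sketch with hypothetical field names that mirror the checklist above. A real change process would pull these answers from ticketing and asset systems rather than hand-set booleans.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class PatchReview:
    """Human-answered checks that an AI-proposed patch must pass before shipping."""
    finding_confirmed: bool
    upstream_impact_checked: bool
    maintenance_window_scheduled: bool
    dependencies_mapped: bool
    rollback_tested: bool
    owner_assigned: bool


def open_questions(review: PatchReview) -> list[str]:
    """List every check that is still unanswered or failed."""
    return [f.name for f in fields(review) if not getattr(review, f.name)]


review = PatchReview(
    finding_confirmed=True,
    upstream_impact_checked=True,
    maintenance_window_scheduled=False,
    dependencies_mapped=True,
    rollback_tested=False,
    owner_assigned=True,
)
print(open_questions(review))
```

The model can produce the patch in seconds. The list this function returns is the part that still takes days.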

The difference between a benchmark and a breach

The smartest way to read cyber-AI benchmarks is to treat them as capability signals, not direct forecasts. The one-day-vulnerability paper is a perfect example. Its 87% figure is extraordinary, but only under the condition that the agent has the CVE description. Remove that context and the score falls to 7%. That does not make the result weak. It tells you where the power lives: AI thrives when the problem is narrowed, the goal is clear, and feedback arrives quickly. Enterprise attacks often contain some of those properties, but not all of them at once.

Anthropic’s Mythos results should be read with the same discipline. Their internal benchmarks use strong scaffolds, repeated runs, and reliable crash grading. AISI’s tests gave the model explicit direction and network access inside controlled ranges. CVE-Bench was built precisely because earlier work did not reflect the complexity of real web-application exploitation closely enough. None of this weakens the warning. It makes the warning more precise. AI is already dangerous in cyber, but its danger is uneven and environment-dependent.

That nuance matters because attackers do not need benchmark perfection to win. Verizon’s 2025 DBIR says credential abuse and exploitation of vulnerabilities remain leading initial attack vectors. The FBI’s live alert stream is full of familiar reality: phishing against messaging apps, exploitation of vulnerable edge devices, malware on routers, OT targeting, and nation-state persistence. A capable AI agent does not have to breach an enterprise end to end on its own. It only has to make one or two stages of those existing paths materially faster or cheaper.

This is where the offense-defense asymmetry bites. Attackers need one path. Defenders need durable coverage across assets, identities, vendors, users, and patch cycles. Berkeley researchers surveying the field said current frontier AI capabilities and applications in attacks have exceeded those on the defensive side, even though the gap may narrow. That rings true because defense inherits organizational drag. Finding bugs is a reasoning problem. Fixing them is a governance problem, an engineering problem, and often a budget problem. AI speeds up the first problem far more than it speeds up the rest.

Security programs that fit the new reality

The organizations that adapt best will stop treating AI as a separate innovation stream and start treating it as part of threat modeling, control design, and response engineering. MITRE ATT&CK remains the common language for adversary behavior in traditional environments. MITRE ATLAS plays a similar role for attacks against AI-enabled systems. Used together, they give teams a way to map both the old attack chain and the new model-specific attack surface: prompt injection, data poisoning, model theft, agent abuse, and all the rest. You do not defend a model-driven environment well if your security language only describes yesterday’s systems.

The control stack needs the same update. NIST’s AI RMF gives a broad risk frame around trustworthiness and lifecycle management. CISA’s guidance pushes secure-by-design thinking into AI adoption and calls out that AI should be treated like any other software system from a security standpoint. That points to a practical posture: log model interactions, constrain tool permissions, separate environments, validate outputs before execution, keep strong identity checks around higher-risk access, and assume that model-connected workflows deserve the same discipline as privileged automation. That set of steps is an inference from the guidance, but it follows directly from the risks the major frameworks name.
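Several of those controls fit in one small wrapper around model tool calls. The sketch below is an illustration under assumptions, not a reference implementation: the environment names, tool names, `TOOL_POLICY` table, and `call_tool` function are all hypothetical. It logs every request, enforces a per-environment allowlist, and requires a named human approver for production.

```python
import logging
from typing import Callable, Optional

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("model_tool_gate")

# Hypothetical policy: which tools each environment may expose to a model.
TOOL_POLICY = {
    "sandbox": {"read_logs", "run_scanner", "apply_patch"},
    "production": {"read_logs"},
}


class ToolDenied(PermissionError):
    """Raised when a model-requested tool call violates policy."""


def call_tool(env: str, tool: str, handler: Callable[[], str],
              approved_by: Optional[str] = None) -> str:
    allowed = tool in TOOL_POLICY.get(env, set())
    # Log every request, including denied ones, before anything runs.
    log.info("tool request env=%s tool=%s approved_by=%s allowed=%s",
             env, tool, approved_by, allowed)
    if not allowed:
        raise ToolDenied(f"{tool} is not permitted in {env}")
    if env == "production" and approved_by is None:
        raise ToolDenied("production calls need a named human approver")
    return handler()


print(call_tool("sandbox", "apply_patch", lambda: "patched in sandbox"))
```

The same call with `env="production"` is refused outright, and even `read_logs` in production is refused unless `approved_by` is set. That is the privileged-automation discipline the paragraph describes.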

Patch speed now matters even more than before. Anthropic’s Glasswing frame is blunt: the company says these capabilities will proliferate, and the short-term transition could favor attackers if releases are not handled carefully. OpenAI’s Trusted Access for Cyber and Google’s selective Sec-Gemini rollout show another part of the answer: trusted access, staged deployment, and strong verification are becoming standard for frontier cyber capability. The labs themselves are telling the market that not every user should get the same level of cyber assistance on day one. Enterprises should borrow that logic internally. Not every employee or workflow should get the same model autonomy either.

Open-source security deserves special focus because it sits under everything. DARPA’s AI Cyber Challenge centers critical software and supply chains for a reason. Anthropic’s Glasswing gives access to open-source maintainers and infrastructure operators for the same reason. A lot of the future fight will be decided upstream, before flaws hit downstream products at scale. The smartest use of cyber AI may turn out to be boring and structural: scanning the shared code the rest of the digital economy quietly depends on.

The claim that matters more than “best tool ever”

If the question is whether AI is now a serious hacking tool, the answer is yes. If the question is whether AI is already a serious security tool, that answer is yes too. If the question is whether it is the best hacking and security tool ever, the phrase starts to blur more than it clarifies. Tools are discrete. AI is becoming an operating layer that sits on top of other tools, joins steps together, and gives both attackers and defenders a speed boost. On present evidence, that boost is often easier to cash in on offensively than defensively. Berkeley’s survey says current AI capabilities in attack have exceeded those on the defensive side. Verizon and the FBI show that the real breach economy still runs through old entry paths that AI can cheaply improve.

Anthropic makes a longer-term argument worth taking seriously. In its Mythos write-up, the company says the transition may be rough, but believes powerful language models will eventually help defenders more than attackers once the security landscape reaches a new equilibrium. That is plausible. Fuzzers, scanners, and many other security tools also raised fears before becoming defensive staples. But that future does not arrive on its own. It depends on secure deployment, trusted access, better evaluation, faster patching, stronger open-source maintenance, and organizations that can absorb AI speed without losing control.

The sharper verdict is this: AI is the strongest general-purpose cyber force multiplier yet, and Mythos is one of the clearest signs that the threshold has been crossed. It is not magic. It is not autonomous dominance. It is not a replacement for human judgment, ownership, or secure engineering. It is a machine that compresses meaningful parts of the cyber workflow so aggressively that old timelines, old staffing assumptions, and old patch rhythms start to look fragile. Security teams that still treat AI as a side experiment are late. Attackers who learn to pair AI with ordinary tradecraft will keep finding weak spots. The winning posture is not panic and not denial. It is speed, verification, and design discipline under a much harsher clock.

FAQ

What is Mythos?

Mythos refers to Anthropic’s Claude Mythos Preview, a restricted-access frontier model that Anthropic says has unusually strong cybersecurity capabilities, including large-scale vulnerability discovery and exploit development in controlled settings. Anthropic launched it through Project Glasswing rather than a broad public release.

Is Mythos publicly available?

No. Anthropic says Mythos Preview is unreleased and is being shared through Project Glasswing with major partners and additional organizations that build or maintain critical software infrastructure.

Is Mythos better than human security experts?

Not in a simple blanket sense. Anthropic says Mythos can surpass all but the most skilled humans at finding and exploiting software vulnerabilities, but benchmark results across the field still show uneven performance and major reliability gaps on broader real-world security tasks.

Why did Mythos get so much attention?

Because it came with restricted access, claims of thousands of high-severity findings, and outside evaluation from AISI saying the model marked a step up over earlier frontier systems on multi-step cyber tasks. That combination made the capability jump hard to dismiss as routine vendor hype.

What are AI models already good at in cyber work?

They are getting strong at exploit drafting, code reading, recon synthesis, tool output interpretation, threat summarization, and some scaffolded vulnerability exploitation workflows. Results are best when the target is clear, the context is rich, and the environment offers fast feedback.

Where do AI agents still struggle?

Reliable patching, secure code changes in large repositories, complex tool choreography, environment-specific reasoning, and deployment-safe remediation still trip agents up. SEC-bench and SecureVibeBench both show that current systems struggle badly once the work becomes messy and realistic.

Does AI currently help attackers more than defenders?

Current evidence leans that way. A Berkeley review says frontier AI capabilities and applications in attacks have exceeded those on the defensive side, even though the gap may narrow over time.

Why is defense harder to speed up than offense?

Attackers only need partial success. Defenders need accurate findings, safe changes, broad coverage, maintenance windows, and operational follow-through. AI speeds up the discovery side faster than it speeds up organizational change.

Has AI found real vulnerabilities in real-world software?

Yes. Google Project Zero said Big Sleep found an exploitable memory-safety issue in SQLite, which the team believes is the first public example of an AI agent discovering a previously unknown flaw of that kind in widely used real-world software. Anthropic also says Mythos has found high-severity flaws at scale.

Do benchmark results predict real-world breaches?

No. Benchmarks are capability signals, not direct breach forecasts. The one-day-vulnerability paper, for example, showed extremely high performance with CVE descriptions and far lower performance without them.

What is Project Glasswing?

Project Glasswing is Anthropic’s restricted initiative for using Mythos Preview in defensive security work with large partners and critical software organizations. Anthropic frames it as a way to give defenders a head start before similar capabilities spread more widely.

What is GPT-5.4-Cyber?

OpenAI describes GPT-5.4-Cyber as a cyber-permissive variant of GPT-5.4 aimed at defensive cybersecurity use cases, offered alongside an expanded Trusted Access for Cyber program for verified defenders.

What is Sec-Gemini for?

Google positions Sec-Gemini for workflows like incident root-cause analysis, threat analysis, and vulnerability impact understanding, and says it combines model reasoning with near real-time cybersecurity knowledge and tooling.

Are attackers already using AI for social engineering?

Yes. The FBI has warned about campaigns using AI-generated voice messages and texts to impersonate senior U.S. officials, and Verizon continues to describe phishing and pretexting as major breach drivers.

Why does adding AI to a security stack expand the attack surface?

Because once a model can read untrusted input, call tools, and take actions, the model becomes part of the attack surface. OWASP lists prompt injection and insecure output handling among the core risks for LLM applications.

How do MITRE ATT&CK and MITRE ATLAS help?

They give security teams a shared language for threat modeling. ATT&CK maps adversary behavior in traditional cyber operations, while ATLAS focuses on tactics and techniques against AI-enabled systems.

Should organizations ban AI in security workflows?

A blanket ban would miss the point. The stronger move is controlled adoption: trusted access, tight permissions, logging, output validation, and role-based autonomy that fits the risk of each workflow. That direction aligns with NIST, CISA, and the rollout choices frontier labs are already making.

What should security teams do now?

Shorten patch cycles where possible, improve asset and dependency visibility, govern AI-connected tools like privileged automation, and model AI-specific threats instead of bolting AI onto old assumptions. CISA’s secure-by-design position and NIST’s AI RMF both support that posture.

Is AI the best hacking and security tool ever?

It is fair to call AI the strongest cyber force multiplier yet. Calling it the best single tool ever is catchy, but less precise than saying it is becoming a reasoning layer that amplifies many tools and many workflows at once.

Author: Jan Bielik, CEO & Founder of Webiano Digital & Marketing Agency

This article is an original analysis supported by the sources cited below.

Project Glasswing: Securing critical software for the AI era

Anthropic’s launch note for Project Glasswing, including partner access, Mythos Preview claims, and its defensive-security framing.

Assessing Claude Mythos Preview’s cybersecurity capabilities

Anthropic’s technical write-up on Mythos Preview’s internal cybersecurity benchmarks and exploit-development results.

Our evaluation of Claude Mythos Preview’s cyber capabilities

The UK AI Security Institute’s public evaluation of Mythos Preview on CTFs and multi-step attack simulations.

Trusted access for the next era of cyber defense

OpenAI’s announcement covering Trusted Access for Cyber, GPT-5.4-Cyber, and its cyber-defense rollout strategy.

Our updated Preparedness Framework

OpenAI’s overview of its updated framework for managing severe-risk frontier capabilities, including cybersecurity.

Preparedness Framework

The full OpenAI framework document defining tracked categories, thresholds, and safeguards for frontier cyber risk.

Google announces Sec-Gemini v1, a new experimental cybersecurity model

Google’s blog post on Sec-Gemini v1, its benchmark claims, and its intended use in cyber workflows.

Sec-Gemini

Google’s product page describing Sec-Gemini as a cybersecurity-focused AI system for defenders.

From Naptime to Big Sleep: Using Large Language Models To Catch Vulnerabilities In Real-World Code

Google Project Zero’s account of Big Sleep discovering a previously unknown exploitable vulnerability in SQLite.

Microsoft Security Copilot

Microsoft’s overview of Security Copilot, its agentic automation claims, integrations, and scale.

Microsoft unveils Microsoft Security Copilot agents and new protections for AI

Microsoft’s announcement on new Security Copilot agents and AI-specific security protections.

OWASP Top 10 for Large Language Model Applications

OWASP’s reference guide to common LLM application risks such as prompt injection and insecure output handling.

AI Risk Management Framework

NIST’s landing page for the AI Risk Management Framework and its trustworthiness-oriented governance model.

MITRE ATLAS

MITRE’s knowledge base for adversary tactics and techniques targeting AI-enabled systems.

MITRE ATT&CK

MITRE’s widely used knowledge base for modeling cyber adversary tactics and techniques.

Roadmap for AI

CISA’s agency-wide roadmap for beneficial AI use and risk-aware adoption.

Artificial Intelligence

CISA’s AI guidance page, including its secure-by-design position for AI systems.

With Open Source Artificial Intelligence, Don’t Forget the Lessons of Open Source Software

CISA’s argument that AI developers should borrow hard-won software security lessons, especially around open ecosystems.

AIxCC: AI Cyber Challenge

DARPA’s program page for the AI Cyber Challenge focused on securing critical software with AI systems.

PentestGPT: An LLM-empowered Automatic Penetration Testing Tool

The PentestGPT paper showing how scaffolded LLMs improve penetration-testing task completion.

LLM Agents can Autonomously Exploit One-day Vulnerabilities

A paper evaluating LLM agents on real-world one-day vulnerabilities and highlighting strong context-dependent exploit performance.

CYBERSECEVAL 2

Meta’s benchmark paper on LLM cyber risks, including prompt injection, code-interpreter abuse, and false-refusal tradeoffs.

CVE-Bench: A Benchmark for AI Agents’ Ability to Exploit Real-World Web Application Vulnerabilities

A benchmark paper focused on realistic web-application exploitation by LLM agents.

SEC-bench: Automated Benchmarking of LLM Agents on Real-World Software Security Tasks

A benchmark for LLM agent performance on proof-of-concept generation and vulnerability patching.

SecureVibeBench: Evaluating Secure Coding Capabilities of Code Agents with Realistic Vulnerability Scenarios

A secure-coding benchmark showing how often current agents fail to produce code that is both correct and secure.

Frontier AI’s Impact on the Cybersecurity Landscape

A broad paper combining empirical evaluation and expert survey work on the offense-defense balance of frontier AI in cyber.

Security Concerns for Large Language Models: A Survey

A survey of LLM security risks spanning prompt attacks, data poisoning, malicious misuse, and agentic hazards.

Large Language Models for Cyber Security: A Systematic Literature Review

A large review of how LLMs are being applied across cybersecurity tasks and where the field is moving.

2025 Data Breach Investigations Report

Verizon’s annual breach report hub and summary materials on leading intrusion patterns and attack vectors.

Verizon’s 2025 Data Breach Investigations Report

Verizon’s news summary highlighting credential abuse and vulnerability exploitation as leading initial vectors.

Senior U.S. Officials Impersonated in Malicious Messaging Campaign

An FBI alert on targeted campaigns using AI-generated voice and text impersonation.

Cyber Alerts

The FBI’s running feed of operational cyber alerts, including phishing, router exploitation, and OT-targeting activity.

Reading, writing and ransomware

A Verizon white paper citing DBIR findings about synthetically generated text in malicious email and shifting phishing quality.