Google has spent years teaching people to think of Google Photos as a private, searchable backup of their lives. That promise still exists in a narrow sense. Your backed-up photos remain private and visible only to you on signed-in devices unless you share them. Yet the April 2026 shift in Gemini and Google Photos changes something more subtle and, for many users, more consequential. The library is no longer just a place where memories sit. It is a source of context for search, inference, personalization, and image generation.

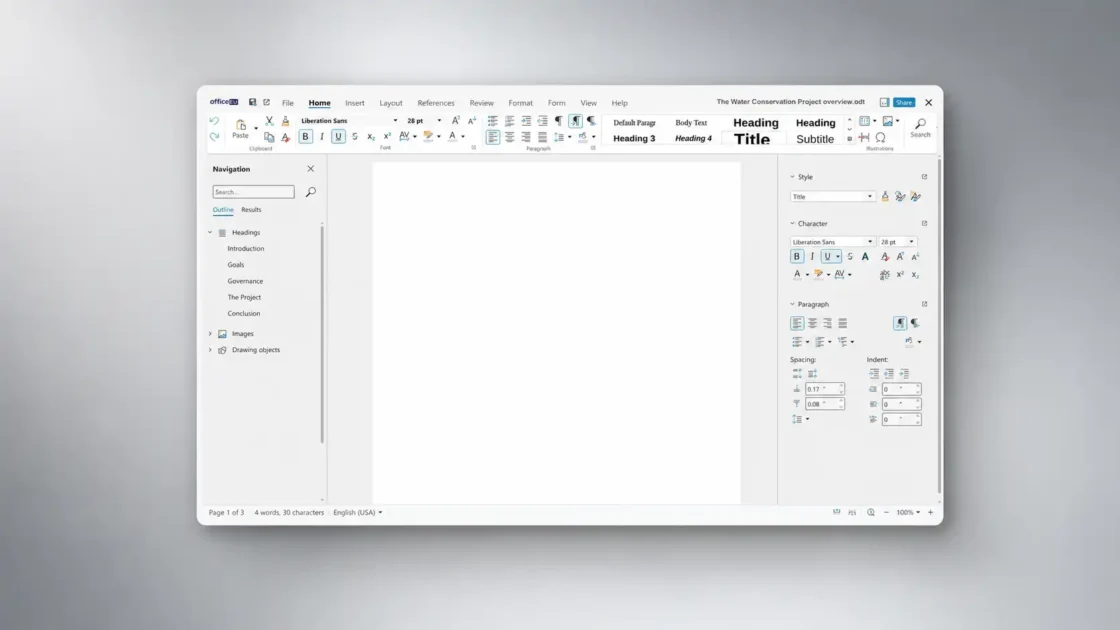

That is why the latest update has produced a privacy alarm even without a dramatic breach, leak, or policy bombshell. People are reacting to a change in role, not just a change in settings. A cloud photo archive that once felt mostly passive is now being asked to do active cognitive work for Google’s AI stack. Ask Photos can interpret your library conversationally. Gemini’s Personal Intelligence can connect Photos with Gmail, Search, and YouTube. Google’s newest image tools can even use actual images of you and your loved ones to guide generation. Google says those experiences are opt-in and privacy-minded. Users see a different picture. They see a widening gap between “stored privately” and “not being deeply analyzed.”

The tension is not imaginary. Google’s own documentation makes clear that the company draws one privacy line inside Google Photos and another when Photos is connected to broader Gemini or Search personalization. Inside Photos, Google says personal data in Photos is never used for ads, Ask Photos responses are not normally reviewed by humans, and generative AI models outside Google Photos are not trained on personal data in Photos. But the same privacy hub also says that once Google Photos is connected to other Google services, those services apply their own policies, and Google may train on summaries, inferences, and generated media based on the contents of your library. That sentence is the hinge on which the whole debate turns.

What follows is the real story behind the backlash, the technical and legal stakes that matter, and the decisions ordinary users now have to make.

The feature behind the backlash

It helps to start with what actually changed. Google Photos has used machine learning for years. Searching for dogs, beaches, birthdays, or specific people already depended on automated analysis. Face Groups, object recognition, location estimation, highlight videos, and auto-created memories all rested on the fact that Google Photos was never a dumb file cabinet. That part is old. What feels new is the expansion from indexing content to reasoning across your life.

Ask Photos, first announced in May 2024, was the bridge. Google described it as a way to search a gallery with natural language and ask questions about your own history, such as where you camped last year or what themes appeared at a child’s birthday parties. The product was pitched as a smarter search layer powered by Gemini’s ability to understand image content, read text inside photos, and assemble a response from multiple results. That framing sounded harmless enough because it still lived inside the familiar logic of retrieval. You asked. Photos found.

But retrieval is not all Ask Photos does. Google’s own explanation says the system studies search results, decides which ones are most relevant, interprets what is happening in the pictures, and can remember corrections and extra details for the future. In the current privacy hub, Google says Gemini features in Photos can also use relationship information for face groups and a Remember List of facts you want Google Photos to save. That is a much richer model of interaction than classic search. The product is no longer just matching words to pictures. It is building a working model of the people, places, and facts that matter in your life.

Google itself has continued widening the aperture. In January 2026, it introduced Personal Intelligence for Gemini, letting users connect apps like Gmail, Google Photos, Search, and YouTube so Gemini could provide more tailored answers. In March 2026, Google expanded Personal Intelligence in the U.S. across AI Mode in Search, the Gemini app, and Gemini in Chrome. On April 16, 2026, Google announced a new step: personalized image creation in the Gemini app using Nano Banana and your own Google Photos library, including “actual images of you and your loved ones” to guide the image generation process. That phrase landed hard because it made the emotional stakes obvious in a way product jargon rarely does.

The company’s rollout notes matter too. Ask Photos remains region-limited and opt-in. Connecting Google Photos to Gemini Apps requires a personal Google account, age 18 or older, a supported country or territory, Keep Activity turned on, Face Groups enabled, your face selected, and estimate-missing-locations turned on. Personalized image creation with Google Photos launched first for eligible Google AI Plus, Pro, and Ultra subscribers in the U.S. Those are not the signs of a feature Google sees as trivial. They are the signs of a system that needs a lot of data plumbing and a lot of trust.
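The prerequisites read like a checklist, and a short sketch makes their number visible. The Python below is illustrative only: `AccountSettings` and `unmet_requirements` are hypothetical names, not a Google API, and the fields simply restate the conditions listed above.

```python
from dataclasses import dataclass


@dataclass
class AccountSettings:
    """Hypothetical snapshot of the settings Google's rollout notes mention."""
    is_personal_account: bool
    age: int
    in_supported_region: bool
    keep_activity_on: bool
    face_groups_on: bool
    own_face_selected: bool
    estimate_missing_locations_on: bool


def unmet_requirements(s: AccountSettings) -> list[str]:
    """Return the listed prerequisites this account does not yet satisfy."""
    checks = {
        "personal Google account": s.is_personal_account,
        "age 18 or older": s.age >= 18,
        "supported country or territory": s.in_supported_region,
        "Keep Activity turned on": s.keep_activity_on,
        "Face Groups enabled": s.face_groups_on,
        "your face selected": s.own_face_selected,
        "estimate missing locations turned on": s.estimate_missing_locations_on,
    }
    return [name for name, ok in checks.items() if not ok]


# Example: everything is set except Face Groups and the selected face.
example = AccountSettings(True, 34, True, True, False, False, True)
print(unmet_requirements(example))
```

Seven separate conditions have to hold before the connection works at all, and three of them (activity history, face models, location estimates) are themselves data-collection settings.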

That is why the public reaction has been sharper than the usual grumbling about AI add-ons. Users did not merely hear that Google Photos got smarter. They heard that the service where they store baby pictures, medical snapshots, family trips, screenshots of bills, home interiors, pets, passports, whiteboards, and private relationships is becoming part of a personalized generative loop. Google can call that convenience. Many people hear intimacy at scale.

The privacy promise and its fine print

Google’s defense is not flimsy. In several places, the company makes clear promises. The Gemini features in Photos privacy hub says your personal data in Google Photos is never used for ads. It says Ask Photos responses are not reviewed by humans unless you give feedback or in rare cases involving abuse or harm. It also says Google does not train generative AI models outside Google Photos with your personal data in Photos. Those are meaningful commitments, and they deserve to be taken seriously.

The problem is not that those statements are false. The problem is that many users will read them as broader than they are. The same privacy hub says that if you connect Google Photos to another Google service, that service’s own policies and controls apply. It goes further: Google says it may train on summaries, inferences, and generated media based on the contents of your Photos library when that content is used through connected services such as Gemini Apps or Search. That is not the same as saying Google trains directly on the original photo library. But it is also not the same as saying the library stays inside an airtight Photos-only box.

That distinction matters because most ordinary users do not think in layers like “raw imagery,” “derived inference,” “generated output,” and “cross-service processing.” They think in ordinary language. They hear “my photos.” If a system derives a summary from a photo of your child’s birthday party, uses that summary to personalize another Google service, and learns from the resulting prompt-response pair, the technical lawyers may see separate categories. The human being on the other end sees a simpler truth: my private pictures helped shape this AI experience.

Google has started spelling out the same logic in newer product posts. In the March 2026 Personal Intelligence expansion, Google said Gemini and AI Mode do not train directly on your Gmail inbox or Google Photos library, but the company does train on limited info such as specific prompts and the model’s responses. In the April 16 personalized image announcement, Google repeated that the Gemini app does not directly train its models on your private Google Photos library and again said it trains on limited info like prompts and responses. This is a narrower promise than many users assume. It rejects direct training on the library, not downstream learning from interaction around the library.

Two privacy models now live inside Google Photos

| Context | What Google promises | What changes for the user |
|---|---|---|
| Gemini features inside Google Photos | No ads based on Photos data, no routine human review of Ask Photos responses, and no training of generative AI models outside Photos on personal data in Photos | Your library is still analyzed to answer questions, relationship labels and remembered facts can deepen personalization, and feedback can attach transcripts plus studied-photo metadata |
| Google Photos connected to Gemini or Search | No direct training on the private photo library itself | Other Google service policies apply, and Google may process or train on summaries, inferences, prompts, responses, and generated media tied to what came from the library |

That is the quiet structural change underneath the current alarm. The first model says, in effect, “we analyze your library inside Photos under Photos rules.” The second says, “if you connect Photos to a broader AI system, some of what comes out of that library can enter a wider policy environment.” Those are not the same bargain.

The privacy promise also has a human review wrinkle that is easy to miss. Google says Ask Photos responses are not reviewed by humans unless you provide feedback or rare abuse situations apply. Yet the company also says Ask Photos queries may be reviewed by humans to improve Photos, after steps are taken to disconnect queries from Google Accounts and remove sensitive content, and that users may opt out of that review in settings. That is not an outrage on its face. It is, though, another example of why “nobody sees my photos” is not the only question that matters. Metadata, query content, face-group information, and system-selected descriptions can still travel in ways users do not naturally picture.

Where the line moves from search to surveillance

The word “surveillance” can be abused in tech writing. It should not be used lightly. Google Photos is not secretly dumping whole camera rolls into public model training. It is not advertising that random staff browse user albums for fun. The product still requires opt-in for the most sensitive Gemini layers. Yet a lot of users still feel watched, and the reason is easy to understand. Modern AI systems shrink the distance between storage and scrutiny.

Classic search inside a photo library felt bounded. You typed “beach” or “dog” and got results. Even when users knew machine vision had classified the images, the action felt limited and specific. Ask Photos and Personal Intelligence move to a different plane. They can infer themes, retrieve life details, combine signals across services, and use context from labels, dates, locations, and relationships. A query stops being a simple lookup and starts becoming a request for synthesis. That is where people begin to feel that the archive is reading them back.

This is also why the phrase “automated scanning” hits a nerve even if it is imprecise. People are not worried only about whether a system scans each image pixel-by-pixel on a schedule. They are worried about the larger fact that their collection is machine-interpretable in far richer ways than before. Google’s own help documents say Gemini Apps can use face groups, relationships saved in Google Photos, dates, locations, descriptions of what is in a photo or video, and the current chat context to find and discuss content from the library. That is a lot of semantic reach.

Privacy scholars have been warning for years that client-side or local analysis changes the meaning of private space, even when it is marketed as safety or convenience. The Electronic Frontier Foundation argued long ago that client-side scanning breaks the ordinary promise users expect from private communications because analysis happens before the user experience reaches its supposedly protected boundary. Google Photos is not an end-to-end encrypted messenger, and the analogy should not be pushed too far. Still, the emotional logic is similar. People feel a boundary moving inward, from the cloud service they chose to a deeper layer of personal memory and identity.

Google’s own product history strengthens that perception. In mid-2025, Google had to improve Ask Photos and merge back some of classic search after users complained about latency and quality. The Verge also reported in 2025 that Google paused the rollout of Ask Photos because it was not meeting the company’s standards for speed, quality, and user experience. That matters because users are being asked to trust a system that Google itself has already slowed down and reworked in public. If a company says “give us more of your personal context” and then repeatedly adjusts the product because it is not yet dependable, suspicion is a rational response.

The strongest privacy alarm is not about a single hidden data grab. It is about scope creep made legible. Google Photos used to help organize your life. Now it increasingly helps an AI reason about your life and, in some cases, re-create it in synthetic form. That is a different kind of intimacy, even when it is voluntary.

Face groups and remembered facts raise the stakes

If you want to understand why this debate feels more personal than a normal AI feature launch, look at the inputs Google asks users to enable. Connecting Google Photos to Gemini Apps is not just a matter of giving the app access to a folder. Google says users need Face Groups turned on, need to select which face is theirs, and need estimate-missing-locations enabled. Ask Photos onboarding may also ask people to confirm their own face group and provide information about people in their photos so the system can give more accurate responses.

Face Groups are especially sensitive because Google’s own help page says that when the feature is on, Google creates models of the faces that appear in your photos, and those models may be considered biometric data in some jurisdictions. The same page says Google stores and uses your face models, face groups, and face labels until you delete them or your Google Photos account is inactive for more than two years. That is not hidden deep in a watchdog report. It is Google’s own explanation.

Regulators treat this category seriously for good reason. The UK ICO explains that biometric recognition starts with capture from a photograph, then feature extraction, then template creation. It also warns that biometric systems raise issues of fairness, transparency, data minimization, security, and the right to object or request erasure. NIST has separately documented that facial recognition systems can show demographic differences in error rates, including higher false positives in some groups. None of that proves Google Photos is misidentifying people at scale or abusing face models. It does show why users feel uneasy when a consumer photo service leans harder on face-linked personalization. A mislabeled family archive is annoying. A mislabeled family archive feeding AI personalization is more serious.

The Remember List adds another layer. Google says Gemini features in Photos let users save facts for future Ask Photos responses, and that these facts can include relationship information tied to face groups. The company also says Photos may rely on inferences and insights from these systems to provide customized memories, edits, and creations. That is a revealing phrase. It suggests that the system is not only retrieving existing content but shaping future outputs around a persistent model of your relationships and preferences.

This is where the privacy alarm becomes less abstract. People do not usually think of a photo library as a place where they are teaching an AI who counts as family, which child belongs to which birthday, or which face represents them. They think of it as a place where those facts already exist in visual form. The AI layer changes the status of those facts from passive memory to structured context. Once that shift happens, the library starts to resemble a personal knowledge graph built from your most intimate images.

Google’s personalized image generation announcement made this change impossible to ignore. The company explicitly said Gemini can use actual images of you and your loved ones to guide image generation, and that family members can become the stars of generated images if labels are in place. Even users who enjoy generative art can reasonably pause at that point. The feature may be fun. It may also be the clearest sign yet that Google Photos is not merely storing identity-laden imagery. It is supplying identity itself as creative input.

The legal and regulatory pressure around biometric inference

No serious analysis of this update can stop at product design. The legal backdrop matters, especially in Europe and the UK, where biometric and personalization issues draw sharper scrutiny. Google’s Gemini Apps Privacy Hub now explicitly says that under certain privacy laws, including the GDPR in the EU, users may have the right to object to the processing of personal data or ask for inaccurate personal data in Gemini responses to be corrected. That is a notable admission. It tells you Google understands these systems are not just convenience features. They can implicate personal data rights in a direct way.

The ICO’s biometric guidance helps explain why. It treats facial imagery and the templates derived from it as part of a structured recognition process that engages core data protection obligations. It highlights data protection by design, data minimization, lawful basis, explicit consent in some cases, risk of false acceptance or rejection, discrimination concerns, transparency duties, and rights such as access, rectification, erasure, portability, and objection. When a mainstream consumer product relies on face-group models and asks users to confirm “me” before AI features work well, it is stepping onto terrain regulators already view as sensitive.

That does not mean Google Photos is unlawful. It means the product belongs to a category where the company’s narrow wording matters a lot. Saying “we do not directly train on your private photo library” is not the same as saying “we do not derive identity-linked signals from your images,” and regulators know the difference. Saying “the results aren’t shared with Google” in one context, such as Android System SafetyCore, is not the same as saying a cloud-connected Gemini feature is purely local. The privacy burden now falls on Google to keep those distinctions crisp enough that ordinary users can understand them, not just lawyers.

This is where Google’s country-by-country rollout becomes revealing. The Google Photos connection in Gemini Apps is available only in a published list of supported countries and territories and is unavailable in some U.S. regions. The list is broad but not universal. Google does not spell out every reason in the help page, and it would be reckless to guess at all of them. Still, staged availability is often a sign that legal, product, policy, and operational constraints are all in play. When a company moves carefully around identity-rich data, that caution is part of the story.

The larger point is straightforward. AI personalization systems want more context because more context makes them better. Privacy law grows stricter precisely where the context becomes most identifying, intimate, or hard to take back. A photo library sits squarely in that collision zone. It holds faces, relationships, places, timestamps, documents, and a long behavioral record of what you cared enough to capture. Any product that turns that archive into an AI context engine will face pressure from both sides. Users want magic. Regulators want limits. Google wants both.

The control panel is real but incomplete

To Google’s credit, the company is not forcing the most controversial parts of this system on everyone without controls. Ask Photos is opt-in. Connecting Google Photos to Gemini’s Personal Intelligence is opt-in. Users can disconnect Google Photos from Gemini in Connected Apps settings. They can turn off Ask Photos activity. They can opt out of query donation for human review. They can turn Face Groups off, which deletes face groups, face models, and labels. They can delete photos, manage Google Account privacy settings, and export their data. Those controls are real, and any honest article should say so plainly.

The trouble starts when users expect those controls to be cleaner than they are. Google’s help pages explain that if you want to delete something Gemini knows about you from a connected app, you must do two things: delete all chats containing that information and disconnect the relevant app. If you only disconnect the app, Gemini may still use the information if it appears in past chats. If you only delete the chats, Gemini may still find the information in the connected app. Google also says that if you delete or update data in a connected app such as Google Photos, the experience in Gemini may not change until days later. That is a pretty important caveat. Control exists, but it is not instantaneous and it is not one-click clean.
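The two-step rule is easier to see as logic. The sketch below is a toy model in Python, not Google's implementation: `GeminiState`, `connected_apps`, and `chats` are hypothetical names that only restate what the help pages describe, and the days-long propagation delay is not modeled.

```python
from dataclasses import dataclass, field


@dataclass
class GeminiState:
    """Toy model of the two places a fact can live (hypothetical names)."""
    # Maps a connected app's name to the facts reachable through it.
    connected_apps: dict[str, set[str]] = field(default_factory=dict)
    # Each saved chat is represented by the set of facts it mentions.
    chats: list[set[str]] = field(default_factory=list)

    def can_still_use(self, fact: str) -> bool:
        """A fact stays usable if it survives in either location."""
        in_app = any(fact in facts for facts in self.connected_apps.values())
        in_chat = any(fact in chat for chat in self.chats)
        return in_app or in_chat

    def disconnect(self, app: str) -> None:
        """Step one: remove the connected app."""
        self.connected_apps.pop(app, None)

    def delete_chats_containing(self, fact: str) -> None:
        """Step two: delete every chat that mentions the fact."""
        self.chats = [chat for chat in self.chats if fact not in chat]


state = GeminiState(
    connected_apps={"Google Photos": {"trip to Lisbon"}},
    chats=[{"trip to Lisbon"}, {"weather"}],
)
state.disconnect("Google Photos")
print(state.can_still_use("trip to Lisbon"))  # True: a past chat still holds it
state.delete_chats_containing("trip to Lisbon")
print(state.can_still_use("trip to Lisbon"))  # False: both steps are done
```

Either step alone leaves the fact reachable, which is why a single disconnect does not behave like a delete button.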

There is another wrinkle. Google says users can turn Personal Intelligence off for a specific chat, but if they start a new chat, Personal Intelligence is on by default. It also says turning Personal Intelligence off for a chat does not stop the chat from being saved to Gemini Apps Activity and used to personalize a response in another chat unless the user uses temporary chat. That is not sinister. It is simply the kind of behavioral detail many users never discover until after they thought they had “turned the thing off.”
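The per-chat behavior can be sketched the same way. This is a hedged toy model in Python with hypothetical names (`Chat`, `personal_intelligence_on`, `temporary`); it restates the documented behavior and says nothing about how Google actually implements it.

```python
from dataclasses import dataclass


@dataclass
class Chat:
    """Toy model of one Gemini chat (hypothetical names, not Google's API)."""
    personal_intelligence_on: bool = True  # each new chat starts with it on
    temporary: bool = False

    def saved_to_activity(self) -> bool:
        # Per the help pages, only a temporary chat avoids being saved.
        return not self.temporary

    def may_influence_later_chats(self) -> bool:
        # Saved activity can personalize other chats, whatever the toggle says.
        return self.saved_to_activity()


chat = Chat()
chat.personal_intelligence_on = False           # turned off for this chat only
print(chat.may_influence_later_chats())         # True: the chat is still saved
print(Chat(temporary=True).may_influence_later_chats())  # False
print(Chat().personal_intelligence_on)          # True: the next chat resets
```

The toggle and the saving behavior are independent switches, and only the temporary-chat switch affects what carries forward.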

The same mismatch appears on the Photos side. Backed-up photos are private by default unless shared, but the Google Privacy Policy also says the company uses automated systems that analyze your content to provide customized features and detect abuse, and that users can manage, export, and delete information through privacy controls. In other words, Google has long described privacy as something compatible with automated analysis. Many users still operate with a stricter intuition: that privacy should also imply a limit on semantic processing unless they expressly invite more. The current alarm is partly the sound of those two definitions colliding.

So yes, there is a control panel. But a control panel is not the same thing as legibility. For most people, the problem is not finding the toggle. The problem is understanding which layer of analysis the toggle actually governs, what survives after a disconnect, what has already entered a chat history, and which downstream system’s policy now applies. When the answers spread across Photos help pages, Gemini privacy hubs, connected-app docs, and the general Google Privacy Policy, the burden shifts toward the user in a way few users can realistically handle.

SafetyCore confusion muddies the conversation

Any discussion of “Google scanning my photos” now runs into another source of confusion: Android System SafetyCore. Privacy communities have spent months arguing about it, often as if it were the same thing as Google Photos or Gemini rummaging through a photo library. Google’s own support materials say that is not accurate. SafetyCore is an Android system service that provides infrastructure for features like Sensitive Content Warnings in Google Messages, and the classification runs exclusively on-device. Google also says SafetyCore only becomes active when an app integrates with it and requests content classification, and that the service does not send identifiable data, classified content, or results to Google servers.

That distinction matters because it changes the technical and privacy analysis. SafetyCore is not presented as a cloud-personalization engine. It is a local classification layer for content-safety features. Google Play describes it as the underlying technology for warnings about potentially unwanted content. The processing is on-device and warnings remain private to the user. That is a separate design pattern from Ask Photos, Gemini Connected Apps, or personalized image generation.

People still lump them together for understandable reasons. They all involve the same broad fear: software examining intimate images in ways the user did not grow up expecting from a phone or a photo album. But the privacy stakes are not identical. A local safety classifier that never sends results to Google is not the same as a cloud AI system that uses Photos as connected context, stores activity in account histories, or learns from prompts and responses. If a user wants to make a calm decision, separating those systems is essential.

That said, SafetyCore’s existence still contributes to the mood around the Google Photos update. It reinforces a cultural shift inside consumer tech: the device, the operating system, the cloud service, and the AI assistant are all becoming layers of continuous interpretation. A decade ago, people mainly worried about who could see their files. Now they also worry about which system can read them, summarize them, classify them, learn from them, and recombine them. Even when the answers differ from product to product, the cumulative effect is the same. Trust gets thinner.

The real privacy question is about scope and trust

The most revealing thing about this controversy is that Google’s critics and defenders are often talking past each other. Defenders focus on policy language: opt-in, not used for ads, no direct training on the private library, controls available, limited rollout, human review only in narrow cases, local safety classification in other contexts. Critics focus on lived meaning: the system is learning the contours of my private life and turning them into active AI context. Both sides are describing something real.

For a user deciding what to do, the sharper question is not “Is Google lying?” The sharper question is “What level of semantic access am I comfortable granting to the service that stores my life?” Some people will look at Ask Photos and Personal Intelligence and see a compelling trade. They want faster retrieval, better memories, smarter summaries, and image tools that actually know what matters to them. Others will look at the same features and see a quiet migration from storage to behavioral modeling. Neither reaction is irrational.

The issue becomes even clearer when you imagine edge cases rather than happy-path demos. What happens when the system misidentifies a person in a family archive? What happens when a library contains sensitive health images, legal documents, grief photos, or records of private relationships? What happens when a synthetic image tool chooses the wrong reference face? What happens when an inference drawn from old travel photos shapes a recommendation that no longer fits your life? These are not science-fiction fears. Google’s own docs say that Ask Photos is experimental and may give inaccurate or inappropriate answers, and that Gemini might not always pick the right photo or detail the first time in personalized image generation.

This is where product rhetoric about magic starts to fray. AI assistants are strongest when they flatten friction. Privacy protection is strongest when it preserves friction in the right places. The newest Google Photos features are asking users to decide which side they value more. That decision should not be made with slogans like “it’s private” or “it’s spying.” It should be made with a clearer view of the actual bargain: you get a more context-aware AI by letting it work with a much more intimate map of your life.

A calmer way to decide whether to keep using it

The right response to this update is not panic. It is discrimination in the old-fashioned sense of careful judgment. Google Photos still does many things very well. It remains one of the best mainstream services for backup, search, cross-device access, and fast retrieval from giant libraries. Its newer AI tools will genuinely delight a lot of people. Yet delight is not the same as consent, and consent is not meaningful unless people understand what the product is doing with enough detail to make a real choice.

If your photo library is mostly everyday travel, pets, landscapes, and social pictures, you may decide that Ask Photos or connected Gemini features are worth it. If your library contains sensitive family records, medical imagery, confidential work screenshots, or identity documents, you may draw the line differently. The point is not that one answer fits everyone. The point is that Google Photos now asks a more intimate question than it used to, and users should answer it deliberately rather than sleepwalking through setup banners.

The strongest case for caution is not apocalyptic. It is practical. Google’s own docs show a stack of systems with different rules, different retention behaviors, and different consequences once Photos content enters Gemini, Search, feedback flows, or generated-media loops. The strongest case for optimism is also practical. Google has not hidden every control, it has published real privacy commitments, and it is at least trying to preserve some boundaries between Photos data, ads, model training, and human review. Both facts can be true at once.

The privacy alarm around the latest Google Photos update is justified not because the company has obviously crossed into blatant abuse, but because it has shifted the old bargain without making the new one feel simple. The service is no longer just a private attic full of boxes. It is becoming an interpretive layer over your life. Plenty of users will like that. Plenty of others will decide the attic should stay dark, quiet, and boring. After this update, that no longer feels like an old-fashioned preference. It feels like a serious design choice.

FAQ

What changed in Google Photos in 2026?

Google expanded the role of Photos in its AI ecosystem. Ask Photos already used Gemini for conversational search, and in 2026 Google pushed further with Personal Intelligence connections and image generation that can use Google Photos context and, in some cases, actual images of you and loved ones.

Is automated analysis of my photos new?

No. Google Photos has long analyzed images for search, face grouping, places, objects, and automatic creations. What feels new is the broader use of that analysis for conversational answers, connected AI personalization, and generated media.

Is Ask Photos opt-in?

Yes. Google says Ask Photos is available only to users who opt in to Gemini features in Photos, and classic search remains available if you do not opt in.

Does Google use my Google Photos data for ads?

No. Google’s Photos privacy hub says personal data in Google Photos is never used for ads.

Does Google train its AI models on my photos?

Google says Gemini and related services do not directly train on your private Google Photos library. It also says it trains on limited info such as prompts and model responses, and that connected services may process or train on summaries, inferences, and generated media based on library contents.

Why are users alarmed if Google does not train directly on the library?

Because direct training is only one part of the privacy picture. Users are reacting to the fact that their photo library can still be interpreted, summarized, connected to other services, and used to personalize AI behavior.

Can Gemini use real photos of me and my family to generate images?

For eligible users who connect Google Photos to Personal Intelligence, Google says Gemini can use actual images of you and your loved ones to guide image generation.

What is the Remember List?

It is a feature inside Gemini features in Photos where users can save facts they want Google Photos to remember for future responses. Google says this can include relationship information and other details tied to face groups.

Why do Face Groups matter so much in this debate?

Because Google requires Face Groups and a selected “me” face for some Gemini-Photos connections, and Google also says the face models it creates may be considered biometric data in some jurisdictions.

Are Face Groups just labels?

They are more than labels. Google says turning Face Groups on allows it to make face models from faces in your photos, and those models can be stored until you delete them or the account is inactive for more than two years.

Google says Ask Photos responses are not normally reviewed by humans, except when you provide feedback or in rare cases involving abuse or harm. It also says Ask Photos queries may be reviewed by humans for product improvement after privacy protections are applied, and users can opt out of that review.

Google says feedback can include the conversation transcript, an optional screenshot, and metadata about the photos and face groups used to generate the response. The actual pixels of photos are not included unless they appear in a screenshot you attach.

No. Google says you may need to both disconnect the app and delete chats that contain the relevant information. It also says changes to connected-app data may take days to affect the Gemini experience.

Yes, Google says you can turn it off for a specific chat. But new chats start with Personal Intelligence on by default where the feature is available, and saved chat activity can still influence later experiences unless you use temporary chat.

No. Google says SafetyCore is a separate Android system service used for things like Sensitive Content Warnings in Messages, and that its classification runs on-device without sending identified content or results to Google. That is different from cloud-connected Photos and Gemini features.

Yes, by default your backed-up photos and videos are private and viewable only by you on your signed-in devices unless you share them. The current debate is about AI analysis and connected use, not open public access.

Yes. Google improved Ask Photos in 2025 after complaints about its speed and its handling of simpler searches, and reporting from The Verge said the company had paused the rollout at one point because of latency, quality, and user-experience issues.

Google says some users under laws such as the GDPR may have rights to object to processing or ask for inaccurate personal data in Gemini responses to be corrected. UK biometric guidance also highlights rights such as access, rectification, erasure, portability, and objection in relevant contexts.

Not automatically. The more sensible move is to decide which AI layers you actually want, review Face Groups and connected-app settings, and keep especially sensitive images out of tools that rely on deeper personalization if that trade-off feels wrong to you.

Author:

Jan Bielik

CEO & Founder of Webiano Digital & Marketing Agency

This article is an original analysis supported by the sources cited below.

Ask Photos: A new way to search your photos with Gemini

Google’s original announcement of Ask Photos, including how the system interprets complex queries and the company’s first privacy commitments.

Google Photos’ Ask Photos feature improved, expanded availability

Google’s 2025 update explaining why it folded classic search strengths back into Ask Photos and broadened rollout.

Use Ask Photos for photos, information & assistance

Google Photos Help page covering opt-in flows, onboarding, and requirements for Ask Photos.

Gemini features in Photos privacy hub

Google’s main privacy explainer for Ask Photos and related Gemini features inside Google Photos.

Personal Intelligence: Connecting Gemini to Google apps

Google’s January 2026 announcement of Personal Intelligence for Gemini, including Photos as a connected source.

Bringing the power of Personal Intelligence to more people

Google’s March 2026 expansion note for Personal Intelligence in Search, Gemini, and Chrome.

New ways to create personalized images in the Gemini app

Google’s April 16, 2026 post explaining personalized image generation with Nano Banana and Google Photos.

Connect your Google apps to personalize your Gemini experience

Gemini Help documentation for Personal Intelligence, Connected Apps, deletion caveats, and chat-level controls.

Search for your photos, videos & more with Gemini Apps

Gemini Help page detailing eligibility, supported regions, and the data signals Gemini can use from Google Photos.

Gemini Apps Privacy Hub

Google’s broader Gemini privacy documentation, including retention, legal rights, and how connected data is governed.

Use AI to create with Google Photos

Google Photos Help page for Photo to video, Remix, and Me Meme, including eligibility and generation limits.

Set up & manage your face groups

Google’s explanation of Face Groups, retention of face models, and the note that they may count as biometric data in some jurisdictions.

Back up photos and videos

Google Photos Help page stating that backed-up photos are private by default unless shared.

Google Privacy Policy

Google’s general privacy policy describing automated analysis, privacy controls, deletion, export, and retention.

Understanding Android System SafetyCore

Google’s official explanation of SafetyCore as an on-device classification service used for features like Sensitive Content Warnings.

Android System SafetyCore

Google Play listing describing SafetyCore’s role and its on-device processing model.

Google’s Gemini AI will use what it knows about you from Gmail, Search, and YouTube

The Verge’s reporting on the introduction of Personal Intelligence and Photos as part of Gemini’s broader personalization layer.

Google Search AI Mode can use Gmail and Photos to get to know you

The Verge’s coverage of Google’s AI Mode expansion and Google’s public wording on limited training.

Google quietly paused the rollout of its AI-powered Ask Photos search feature

The Verge’s report on Google pausing Ask Photos rollout over latency, quality, and user experience.

Biometric recognition

UK ICO guidance explaining biometric capture, feature extraction, and template creation from photographs.

Biometric data guidance: Biometric recognition

The ICO’s broader guide to fairness, transparency, minimization, rights, and security in biometric processing.

Facial Recognition Technology (Part III): Ensuring Commercial Transparency & Accuracy

NIST testimony summarizing findings on error rates and demographic differentials in face recognition systems.

Why Adding Client-Side Scanning Breaks End-To-End Encryption

EFF’s technical argument about why on-device or client-side content analysis changes privacy expectations, used here for broader context.