Physical AI is the point at which artificial intelligence stops being only a software layer and starts becoming an operating force in the world. It is AI with cameras, wheels, arms, grippers, rotors, force sensors, safety envelopes, maintenance schedules and liability. The shift matters because the largest untouched pool of work is not writing text or generating images. It is moving goods, inspecting assets, sorting inventory, assembling products, navigating streets, assisting people at home and reacting to public spaces that never stay still.


The shift from digital answers to physical action

The first popular wave of generative AI lived on screens. It drafted emails, answered questions, wrote code, generated images and searched across files. That work was useful because it compressed knowledge labor into shorter loops. A person gave a prompt, a model produced an output, and the final action still belonged to a human. Physical AI changes the endpoint. The output is not a paragraph or a prediction. The output is movement.

That difference sounds simple until it touches a warehouse aisle, a public road or a home kitchen. A chatbot can be wrong and still leave the physical world unchanged. A robot arm that misreads a shelf can crush an item, drop a part, block a worker or stop an assembly line. A drone that loses situational awareness is not merely an unreliable app. It is an aircraft. A self-driving vehicle that fails at an edge case is a road user. Physical AI brings AI into settings where errors have mass, speed, heat, pressure, height and consequence.

The term has become popular because the AI industry is searching for the next large arena after chatbots and copilots. NVIDIA defines physical AI as systems such as cameras, robots and self-driving cars that perceive, understand, reason and perform or orchestrate complex actions in the physical world. Its own examples include robots, autonomous vehicles and smart spaces such as factories and warehouses. That definition is useful because it avoids treating physical AI as only humanoid robotics. The category is broader than humanoids. It includes any autonomous or semi-autonomous machine that senses the world and acts inside it.

The market signal is already visible in industrial data. The International Federation of Robotics reported 542,000 industrial robot installations in 2024, the fourth straight year above 500,000 installations, with 4.664 million industrial robots in operation worldwide. Asia accounted for 74% of new installations, while China represented 54% of global deployments. These figures do not prove that every robot is powered by advanced AI, but they show that the installed base for physical AI is no longer theoretical. The hardware footprint already exists.

The same pattern appears in service robotics. IFR said professional service robot sales reached almost 200,000 units in 2024, up 9%, while robot-as-a-service fleets grew 31%. That shift is as important as the unit count. Subscription and rental models lower the upfront barrier for companies that want automation but cannot buy expensive machinery outright. Physical AI will spread faster when customers buy outcomes, hours or tasks rather than machines.

The near-term adoption path is not a science-fiction jump from office software to robot butlers. It is narrower, more industrial and more operational. Factories, warehouses, logistics yards, hospitals, farms, public roads and controlled delivery zones will absorb physical AI before most homes do. These places already run on processes, metrics, maps, safety rules and trained staff. They have enough repetitive work to justify capital spending. They also have managers who can measure whether a robot completes enough tasks per hour to matter.

Still, the strategic meaning is larger than any one robot. For decades, software ate information. Physical AI is the attempt to let software coordinate the movement of things. That includes boxes, pallets, sheet metal, groceries, vehicles, medical supplies, inspection cameras, agricultural sprayers and household objects. The core question is not whether AI can talk. It is whether AI can reliably act.

A precise definition of physical AI

Physical AI is artificial intelligence embedded in machines that perceive the physical world, reason about space and time, choose actions, and execute those actions through hardware. It combines perception, planning, control, robotics, simulation, edge computing, safety engineering and operational feedback. A system does not need to look human to qualify. A warehouse robot that routes around people, a drone that plans a delivery path, a factory camera network that coordinates mobile machines, and a robot arm that adjusts its grip based on force feedback all sit inside the category.

The definition matters because the phrase is already being stretched by marketing teams. A connected appliance is not automatically physical AI. A remote-controlled drone is not physical AI merely because it has sensors. A robotic arm running a fixed script is automation, but not the stronger form of AI-led autonomy now being discussed. The distinction is adaptability. Physical AI systems must interpret changing real-world conditions and alter behavior without being manually reprogrammed for every case.

Older automation followed tightly specified instructions. A machine on a car assembly line welded the same seam thousands of times. That remains powerful, and much of modern manufacturing depends on it. Physical AI aims at messier work: shelves with random items, delivery routes with pedestrians, factory tasks with part variation, homes with clutter, hospitals with unpredictable human movement and public spaces with incomplete information. The machine must handle change rather than demand a perfectly organized world.

That is why multimodal models are central. A robot needs to process images, video, depth, sound, text instructions, force data, joint positions, maps and sometimes speech. It also needs an action interface. Google DeepMind’s RT-2 introduced a vision-language-action model that learns from both web and robotics data and translates that knowledge into generalized instructions for robotic control. The point was not just to recognize objects. It was to connect web-scale semantic understanding with robot action.
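
One way to picture an action interface is the discretization scheme described for RT-1 and RT-2. Each continuous action dimension is split into 256 uniform bins, so an action can be emitted as a short sequence of integer tokens, much like text. The Python sketch below shows that round trip. The bin count follows the RT-2 description. The action bounds and the seven-dimensional example action are assumptions for illustration, and a real system would map bin indices onto the model's vocabulary rather than use them directly.

```python
NUM_BINS = 256  # uniform bins per action dimension, as described for RT-1/RT-2


def tokenize(action, low, high, num_bins=NUM_BINS):
    """Map each continuous action dimension to an integer bin index."""
    tokens = []
    for value, lo, hi in zip(action, low, high):
        value = min(max(value, lo), hi)                # clip to the allowed range
        index = int((value - lo) / (hi - lo) * num_bins)
        tokens.append(min(index, num_bins - 1))        # value == hi falls in the last bin
    return tokens


def detokenize(tokens, low, high, num_bins=NUM_BINS):
    """Map bin indices back to the center of each bin, in the original units."""
    return [lo + (t + 0.5) / num_bins * (hi - lo) for t, lo, hi in zip(tokens, low, high)]


# Hypothetical 7-D action: six normalized end-effector deltas plus a gripper command.
low = [-1.0] * 6 + [0.0]
high = [1.0] * 6 + [1.0]
action = [0.0, 0.5, -1.0, 1.0, 0.25, -0.25, 0.0]

tokens = tokenize(action, low, high)
print(tokens)  # [128, 192, 0, 255, 160, 96, 0]
recovered = detokenize(tokens, low, high)
```

The reconstruction error is at most half a bin width, (high - low) / (2 * 256) per dimension. That is the precision cost of treating motion as tokens.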

DeepMind’s later Gemini Robotics work made the same direction clearer. The company described Gemini Robotics as a vision-language-action model built on Gemini 2.0 with physical actions as a new output modality for directly controlling robots. Gemini Robotics-ER was designed for spatial understanding and embodied reasoning, including perception, state estimation, planning and code generation. This is the software thesis behind physical AI: models trained for language and vision are being adapted into models that understand tasks in terms of motion.

NVIDIA frames the problem from the infrastructure side. It argues that physical AI extends generative AI with spatial relationships and physical behavior, taking multimodal inputs and converting them into insights or actions an autonomous machine can execute. It also emphasizes simulation, synthetic data and reinforcement learning because real-world trial and error is expensive, slow and sometimes unsafe.

A working definition therefore needs three tests. First, the system must sense the world through real inputs rather than relying only on static data. Second, it must reason about physical constraints such as distance, collision, grasp, weight, speed, timing and human proximity. Third, it must cause an action in the physical world, whether through a robot, drone, vehicle, machine tool or coordinated smart space. When all three are present, AI has left the screen.
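
The three tests can be read as a checklist. The Python sketch below encodes them as boolean fields, plus a fourth flag for the adaptability distinction the definition draws between physical AI and fixed-script automation. The field names and example systems are illustrative assumptions. In practice each test is a matter of degree, not a yes-or-no switch.

```python
from dataclasses import dataclass


@dataclass
class SystemProfile:
    senses_world: bool                  # test 1: live sensor input, not only static data
    reasons_physically: bool            # test 2: distance, collision, grasp, timing, proximity
    acts_physically: bool               # test 3: causes movement through hardware
    adapts_without_reprogramming: bool  # handles new conditions without manual rework


def is_physical_ai(p: SystemProfile) -> bool:
    passes_three_tests = p.senses_world and p.reasons_physically and p.acts_physically
    return passes_three_tests and p.adapts_without_reprogramming


examples = {
    "warehouse robot routing around people": SystemProfile(True, True, True, True),
    "fixed-script welding arm": SystemProfile(False, False, True, False),
    "remote-controlled drone (pilot does the reasoning)": SystemProfile(True, False, True, False),
    "connected appliance": SystemProfile(True, False, True, False),
}

for name, profile in examples.items():
    print(f"{name}: {is_physical_ai(profile)}")
```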

Physical AI stack at a glance

| Layer | Function | Practical example |
|---|---|---|
| Sensors | Capture the physical environment | Cameras, lidar, radar, force sensors, microphones |
| Models | Interpret and plan | VLA models, world models, spatial reasoning systems |
| Simulation | Train and test safely | Digital twins, synthetic data, reinforcement learning |
| Edge compute | Run decisions near the machine | Robot computers, onboard drone processors, vehicle systems |
| Actuation | Move or manipulate | Wheels, arms, grippers, rotors, conveyors |
| Safety layer | Limit damage and verify behavior | Geofencing, speed limits, collision avoidance, human oversight |
| Operations layer | Connect machines to work | Fleet management, maintenance, workflow orchestration |

This stack shows why physical AI is harder than screen-based AI. The model is only one layer. Deployment depends on sensors, hardware, simulation, controls, safety rules and operational discipline working together.

The older automation model is running out of easy gains

Industrial automation has already changed production. Robots weld, paint, palletize, cut, inspect, transfer and assemble. Conveyor systems move inventory faster than people can. Warehouse management systems assign work, scanners track stock, and machine vision checks defects. The world is not starting from zero. The difference now is that many remaining tasks resist rigid automation because they are variable, physical and context-sensitive.

A conventional robot thrives when the world is arranged for the robot. Parts arrive in the same orientation. Fixtures hold objects in the same place. People stay outside the robot cell. The process is engineered until the machine can repeat it. That model works beautifully for high-volume production, but it struggles in lower-volume operations with frequent product changes, mixed inventory or human traffic. Physical AI tries to reduce the cost of variability.

Amazon’s warehouses illustrate the transition. The company has operated large fleets of mobile robots for years, but its newer systems move into more difficult manipulation. In 2025, Amazon introduced Vulcan, described as its first robot with a sense of touch and built on advances in robotics, engineering and physical AI. The company said the system was designed to pick and stow items, not just move shelves or containers.

Amazon Science explained the technical problem: fabric storage pods are not open bins. Items are randomly arranged in cubbyholes and held by elastic bands, making contact with other objects and pod walls nearly unavoidable. That is a different task from suction-picking a parcel from an open conveyor. It requires tactile feedback, motion planning and control under uncertainty. The warehouse problem is no longer only movement across the floor. It is dexterous interaction with clutter.
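
Amazon has not published Vulcan's control code, but the general idea of closing a loop on touch can be sketched. The Python example below raises grip force in small steps until a tactile sensor stops reporting slip, and gives up at a force cap instead of squeezing harder. The slip signal, step size and cap are hypothetical; real tactile controllers estimate contact forces continuously rather than reading a single boolean.

```python
def grip_until_stable(slip_detected, start_n=2.0, step_n=0.5, max_n=15.0):
    """Raise grip force until no slip is reported; return the force, or None at the cap.

    slip_detected(force) -> bool stands in for a tactile sensor reading.
    """
    force = start_n
    while force <= max_n:
        if not slip_detected(force):
            return force
        force += step_n
    return None


# Hypothetical items: one needs about 6.2 N to hold, another more than the cap allows.
light_item = grip_until_stable(lambda f: f < 6.2)
heavy_fragile = grip_until_stable(lambda f: f < 20.0)
print(light_item, heavy_fragile)  # 6.5 None
```

Returning None rather than the cap is the safety-relevant choice: the robot reports that it cannot hold the item within limits instead of crushing it.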

Amazon’s fleet scale is also revealing. The company said in June 2025 that it had deployed its one millionth robot and introduced DeepFleet, a generative AI foundation model intended to coordinate the movement of its robotic fleet and improve fleet travel efficiency by 10%. The number matters because it shows the path from individual robot intelligence to fleet intelligence. Physical AI is not just a smarter arm. It is the coordination of many machines across buildings, routes and shifts.

Factories face a different version of the same issue. Classic automation is strongest when products are stable and the process runs at scale. The next layer of automation targets parts handling, inspection, setup, changeover, intralogistics and quality tasks where variation still forces human intervention. That is why companies are experimenting with humanoid and mobile manipulation systems. They are not doing it because humanoid shape is magical. They are doing it because factories, warehouses and tools were designed around human reach, human pathways and human workstations.

The economic case depends on the cost of integration. A cheap robot that takes months to deploy may be more expensive than a costly robot that can be trained quickly. This is where AI models, simulation and digital twins enter the business argument. If a manufacturer can test robot behavior in a virtual copy of the line, generate synthetic edge cases, validate safety conditions and update policies without stopping production, automation becomes less brittle.
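
The integration argument is easiest to see with rough numbers. The sketch below compares two hypothetical robots over a two-year horizon: one cheap but slow to deploy, one expensive but fast. Every figure is an assumption chosen for illustration, not vendor data. The point is the structure of the calculation, where each deployment month both adds integration cost and delays the value the robot produces.

```python
def net_value(hardware_cost, deploy_months, integration_cost_per_month,
              value_per_month, horizon_months):
    """Value produced minus cost over a fixed horizon (all inputs hypothetical)."""
    productive_months = max(horizon_months - deploy_months, 0)
    total_cost = hardware_cost + deploy_months * integration_cost_per_month
    return productive_months * value_per_month - total_cost


common = dict(integration_cost_per_month=15_000, value_per_month=12_000, horizon_months=24)
cheap_slow = net_value(hardware_cost=50_000, deploy_months=6, **common)
costly_fast = net_value(hardware_cost=120_000, deploy_months=1, **common)
print(cheap_slow, costly_fast)  # 76000 141000
```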

The hard limit is reliability. A robot that works 80% of the time may be a successful demo and a failed operation. Physical AI must cross a threshold where uptime, task completion, safety and maintenance fit the rhythm of real work. That threshold differs by sector. A warehouse may tolerate staged pilots. A public road demands far stricter public accountability. A home robot faces almost infinite variation and low patience. The older automation model is not disappearing. It is being surrounded by AI systems built for the messy tasks that older automation left behind.
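
A quick calculation shows why 80% is not enough. The sketch below assumes a hypothetical robot attempting 60 tasks per hour over a 10-hour shift and treats each task as an independent trial, a simplification, since real failures often cluster. It reports the expected number of failures and the chance of a shift with none.

```python
def shift_outlook(success_rate, tasks_per_hour=60, shift_hours=10):
    """Expected failures and P(no failures) per shift, assuming independent tasks."""
    tasks = tasks_per_hour * shift_hours
    expected_failures = tasks * (1 - success_rate)
    p_clean_shift = success_rate ** tasks
    return tasks, expected_failures, p_clean_shift


for rate in (0.80, 0.99, 0.999):
    tasks, fails, clean = shift_outlook(rate)
    print(f"{rate:.1%} success: {fails:.1f} expected failures in {tasks} tasks, "
          f"P(no failures) = {clean:.3f}")
```

At 80% success the robot needs roughly 120 human interventions per shift. Even at 99.9% it still has about a 45% chance of at least one failure per shift, which is why the threshold for production sits far above the threshold for a convincing demo.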

Foundation models are learning to handle sensors and actuators

The breakthrough behind modern physical AI is not one robot part. It is the migration of foundation-model techniques into robotics. Language models learned general patterns from vast text corpora. Vision-language models connected images and words. Vision-language-action models add a new target: actions that a robot can perform. This turns a model from a describer into a controller, though real controllers still need safety and low-level engineering around them.

The problem is not only recognizing a cup. The robot must know where the cup is, how it is oriented, whether it is full, whether the handle is reachable, how much force to use, where the human is standing, whether the surface is slippery, and what to do if the cup moves. A language answer can ignore physics for a sentence. A robot cannot. Physical AI forces foundation models to respect geometry, timing and contact.

RT-2 was one of the clearest early signals because it used web-scale vision-language learning and robotics data in one model. Its argument was that internet-trained models contain semantic knowledge that robots can use. A robot that has never been explicitly trained on every possible object might still understand instructions such as moving a soda can, finding a specific color or placing an item near another item.

The Open X-Embodiment effort then attacked another bottleneck: robotics data fragmentation. It introduced a dataset with more than one million real robot trajectories spanning 22 robot embodiments, from single arms to bi-manual robots and quadrupeds. That matters because robots vary dramatically in body shape, joints, sensors and grippers. A model trained on one robot may fail on another. Shared datasets and cross-embodiment learning are attempts to make robotics look more like modern AI, where larger pooled datasets improve generalization.
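
Pooling data across embodiments runs into a simple mismatch: a single arm, a bimanual robot and a quadruped do not share an action vector of the same length. One generic way to batch them, shown below as a Python sketch, is to pad every action to a shared width and carry a mask so the training loss ignores padded slots. This is an illustrative technique, not a description of how the Open X-Embodiment models handled action spaces; the embodiment names and dimensions are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Step:
    embodiment: str
    action: list  # list of floats; length depends on the robot body


def pad_batch(steps, width):
    """Pad actions to a shared width; mask is 1.0 for real values, 0.0 for padding."""
    padded, masks = [], []
    for step in steps:
        n = len(step.action)
        if n > width:
            raise ValueError(f"{step.embodiment}: {n} action dims exceed width {width}")
        padded.append(step.action + [0.0] * (width - n))
        masks.append([1.0] * n + [0.0] * (width - n))
    return padded, masks


def masked_mse(pred, target, mask):
    """Mean squared error over unmasked entries only."""
    total = sum(m * (p - t) ** 2 for p, t, m in zip(pred, target, mask))
    return total / sum(mask)


batch = [
    Step("single_arm", [0.1] * 7),
    Step("bimanual", [0.2] * 14),
    Step("quadruped", [0.3] * 12),
]
actions, masks = pad_batch(batch, width=14)

# A prediction that is wrong only in the padded slots of the quadruped costs nothing.
pred = actions[2][:12] + [9.9, 9.9]
print(masked_mse(pred, actions[2], masks[2]))  # 0.0
```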

Gemini Robotics pushes the field toward models that can adapt across tasks, instructions and embodiments. DeepMind said Gemini Robotics was designed to be general, interactive and dexterous. It also described Gemini Robotics-ER as a model with spatial understanding that can connect with low-level controllers. That split is important. A high-level model may decide what action makes sense, while a lower-level safety-critical controller ensures the machine does not exceed force, speed or stability limits.
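
That split can be pictured as a filter between the two layers. In the Python sketch below, a high-level model proposes a speed and a grip force, and a low-level layer clamps both to fixed limits, with a tighter speed cap when a person is inside a protective zone. The limit values are hypothetical and are not drawn from DeepMind's work or any safety standard. Production systems enforce such limits in certified controllers and hardware, not in application code like this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    max_speed: float             # m/s in normal operation
    max_speed_near_human: float  # m/s when a person is inside the protective zone
    human_zone_m: float          # radius of the protective zone in meters
    max_force: float             # N, upper bound on commanded grip force


def enforce(cmd_speed, cmd_force, human_distance_m, limits):
    """Clamp a high-level command to low-level speed and force limits."""
    near_human = human_distance_m < limits.human_zone_m
    cap = limits.max_speed_near_human if near_human else limits.max_speed
    speed = max(-cap, min(cmd_speed, cap))
    force = max(0.0, min(cmd_force, limits.max_force))
    return speed, force


LIMITS = Limits(max_speed=1.5, max_speed_near_human=0.25, human_zone_m=1.0, max_force=50.0)

# The model asks for 2.0 m/s and 80 N; the filter decides what actually reaches the motors.
print(enforce(2.0, 80.0, human_distance_m=3.0, limits=LIMITS))  # (1.5, 50.0)
print(enforce(2.0, 80.0, human_distance_m=0.5, limits=LIMITS))  # (0.25, 50.0)
```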

NVIDIA’s Project GR00T is another signal of the same movement. Announced in March 2024, GR00T was presented as a general-purpose foundation model for humanoid robots, alongside Jetson Thor and upgrades to the Isaac robotics platform. NVIDIA’s framing links foundation models, simulation and onboard compute into a full robotics development chain.

Foundation models do not remove the need for robotics expertise. They shift where the expertise sits. Engineers still need kinematics, controls, safety, mechanical design, sensor calibration, networking, power management, thermal planning, failure recovery and maintenance. The model may reduce the number of hand-coded behaviors, but it increases demand for data pipelines, validation methods and runtime monitoring. The frontier is not a robot brain floating above the machine. It is a trained model embedded inside a disciplined engineering stack.

This is also why the field will not follow the same adoption curve as chatbots. A software model can be deployed to millions of users with cloud infrastructure and interface changes. A robot model needs physical hardware, local testing, safety reviews, spare parts and site-specific integration. Scaling intelligence is easier than scaling bodies. Physical AI will move in fleets, not downloads.

Simulation becomes the first workplace

The safest place for a robot to fail is a simulated world. That is why digital twins, synthetic data and world models are no longer side tools. They are becoming core infrastructure for physical AI. A robot that must learn by trying every error in the real world would be too slow, too costly and too dangerous. Simulation allows developers to stage rare situations, vary lighting and layouts, test collisions, train policies and evaluate changes before a machine moves near people or production assets.
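
A standard way to stage that variety is domain randomization: each training or test episode samples scene parameters from ranges instead of reusing one fixed scene. The Python sketch below samples hypothetical warehouse scenes. The parameter names and ranges are assumptions, and a real pipeline would pass these values into a simulator rather than just collect them. Seeding the generator makes any failing scene reproducible.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneConfig:
    light_lux: float       # illumination level
    floor_friction: float  # friction coefficient for wheels and dropped items
    aisle_width_m: float   # clearance the robot must navigate
    pedestrians: int       # number of simulated people in the scene


def sample_scenes(n, seed=0):
    """Draw n randomized scene configurations; the same seed gives the same scenes."""
    rng = random.Random(seed)
    return [
        SceneConfig(
            light_lux=rng.uniform(50.0, 1000.0),
            floor_friction=rng.uniform(0.3, 0.9),
            aisle_width_m=rng.uniform(1.2, 3.0),
            pedestrians=rng.randint(0, 5),
        )
        for _ in range(n)
    ]


scenes = sample_scenes(1000, seed=42)
dim_and_crowded = [s for s in scenes if s.light_lux < 150 and s.pedestrians >= 4]
print(f"{len(dim_and_crowded)} of {len(scenes)} scenes are dim and crowded")
```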

NVIDIA’s Omniverse is positioned exactly in that layer. The company describes it as libraries and microservices for industrial digital twins and physical AI simulation. It is not a consumer metaverse product. It is a development environment for representing factories, warehouses, robots, sensors and environments with enough physical accuracy to train and test machines.

The phrase “digital twin” is often used loosely, but in physical AI it has a concrete role. A digital twin is a structured virtual representation of a physical space, machine or process. For a factory, it may include equipment geometry, aisle widths, work cells, conveyors, lighting, cameras, robots, humans and materials. For a drone route, it may include terrain, obstacles, airspace constraints and landing zones. For a warehouse, it may include storage racks, traffic patterns, picking stations and human workflows. The better the twin, the more useful the rehearsal.
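
At its simplest, a twin is structured data that software can query before a machine moves. The Python sketch below represents a few warehouse aisles and checks which ones are too narrow for a given robot once clearance margins are added. The class names, widths and margins are hypothetical; production twins carry far more geometry, physics and sensor detail than this.

```python
from dataclasses import dataclass, field


@dataclass
class Aisle:
    name: str
    width_m: float
    shared_with_people: bool


@dataclass
class WarehouseTwin:
    aisles: list = field(default_factory=list)

    def blocked_aisles(self, robot_width_m, side_margin_m, passing_room_m):
        """Names of aisles narrower than the robot plus its required clearance."""
        blocked = []
        for aisle in self.aisles:
            needed = robot_width_m + 2 * side_margin_m
            if aisle.shared_with_people:
                needed += passing_room_m  # leave room for a worker to pass
            if aisle.width_m < needed:
                blocked.append(aisle.name)
        return blocked


twin = WarehouseTwin([
    Aisle("A1", width_m=1.5, shared_with_people=False),
    Aisle("A2", width_m=1.5, shared_with_people=True),
    Aisle("A3", width_m=2.4, shared_with_people=True),
])
print(twin.blocked_aisles(robot_width_m=0.8, side_margin_m=0.2, passing_room_m=0.6))  # ['A2']
```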

NVIDIA’s Cosmos research paper states the argument plainly: physical AI needs to be trained digitally first, which requires two digital twins, one of itself (the policy model) and one of the world (the world model). The paper describes Cosmos as a world foundation model platform for customized world models, covering curation, pretrained models, post-training examples and video tokenizers.

World models are important because robots need predictive understanding, not just perception. A camera tells the system what the scene looks like now. A world model attempts to predict what may happen next if the robot moves, if an object falls, if a person walks into the path or if a vehicle changes lanes. Prediction is central to action. Every movement is a bet on the next state of the world.

Simulation also changes the economics of safety. Real-world safety testing is slow when the dangerous cases are rare. A delivery robot may operate safely for thousands of routine trips and still fail at an unusual curb, a construction zone or a child running across its path. A simulated testbed can generate many variations of those scenes. It cannot prove total safety, but it can reveal weaknesses earlier than random field exposure.
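
The arithmetic behind that point is unforgiving. If a dangerous situation occurs independently with probability p per trip, the chance of seeing it at least once in n trips is 1 - (1 - p)^n. The Python sketch below solves for the smallest n that reaches a given confidence; the 1-in-10,000 rate is a hypothetical example.

```python
import math


def trips_to_observe(p_event, confidence=0.95):
    """Smallest n with P(at least one occurrence in n independent trips) >= confidence."""
    # log1p keeps precision when p_event is tiny.
    return math.ceil(math.log(1.0 - confidence) / math.log1p(-p_event))


n = trips_to_observe(1e-4)
print(n)  # 29956
```

Roughly 30,000 routine trips are needed just for a 95% chance of encountering one such event once, and a single encounter says little about how the system handles it. A simulator can instead stage the scene directly, in thousands of variations.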

The sim-to-real gap remains a serious obstacle. A virtual object may not deform like a real object. Simulated friction may be wrong. Lighting can differ. Sensors can drift. Human behavior is hard to model. A policy that looks strong in simulation may fail under dust, glare, vibration, wireless outages or small mechanical wear. Simulation reduces physical risk; it does not replace real-world validation.

The best deployment pipelines will combine three loops: simulation for scale, lab testing for controlled reality, and field testing for operational truth. Companies that master the transfer between those loops will have an advantage. They will update systems faster, test more edge cases and detect failures before customers do.
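
The three loops can be treated as gates a policy must pass in order. The Python sketch below promotes a policy only as far as its evidence supports. The stage thresholds and trial counts are hypothetical, and real gates would also check safety-specific metrics rather than a single success rate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    min_success_rate: float
    min_trials: int


# Hypothetical gates, ordered from cheapest evidence to most realistic.
PIPELINE = (
    Stage("simulation", min_success_rate=0.995, min_trials=10_000),
    Stage("lab", min_success_rate=0.99, min_trials=500),
    Stage("field", min_success_rate=0.99, min_trials=2_000),
)


def furthest_stage(results):
    """Return the last stage passed in order, or None.

    results maps a stage name to (successes, trials). A stage with too few trials
    or too low a success rate stops promotion, even if later stages look good.
    """
    passed = None
    for stage in PIPELINE:
        if stage.name not in results:
            break
        successes, trials = results[stage.name]
        if trials < stage.min_trials or successes / trials < stage.min_success_rate:
            break
        passed = stage.name
    return passed


print(furthest_stage({"simulation": (9_980, 10_000), "lab": (493, 500)}))  # simulation
```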

World models make physical AI less blind to time

Most AI applications deal with static or slow-changing information. A document does not run into traffic. A spreadsheet does not fall off a shelf. Physical AI lives inside time. Every decision changes the scene. Every delay matters. Every moving object creates new constraints. World models matter because they let machines reason about sequences rather than snapshots.

A robot vacuum uses a simple form of this idea when it maps a room and predicts where it can move. A robotaxi uses a far more complex version when it tracks vehicles, pedestrians, cyclists, road geometry, signals and possible future trajectories. A warehouse robot uses it when navigating around workers and other robots. A drone uses it when wind, obstacles and airspace constraints alter a route. Physical AI needs memory of what just happened and prediction of what may happen next.

The world-model approach differs from conventional perception. Perception answers “what is here?” Prediction answers “what will happen if I act?” Control answers “what action should I take now?” Physical AI needs all three. A system that sees well but predicts poorly may still make unsafe or inefficient choices. A system that predicts well but acts poorly may hesitate, jerk, overcorrect or miss deadlines.
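
The three questions can be wired into a toy loop. In the Python sketch below, perception is assumed to deliver a tracked person's position and velocity, prediction rolls that track forward under a constant-velocity assumption, and control yields if any predicted position comes within a safety radius of the robot's planned path. The geometry, horizon and radius are hypothetical, and real planners use far richer motion models.

```python
from dataclasses import dataclass


@dataclass
class Track:
    """Perception output ("what is here?"): a tracked object's position and velocity."""
    x: float
    y: float
    vx: float
    vy: float


def predict(track, t):
    """Prediction ("what will happen?"): constant-velocity position after t seconds."""
    return track.x + track.vx * t, track.y + track.vy * t


def choose_action(robot_speed, tracks, horizon_s=3.0, dt=0.1, safety_radius=1.0):
    """Control ("what should I do now?"): the robot plans to drive along +x from the origin.

    Yield if any predicted track comes within safety_radius of the robot's planned
    position at the same time step; otherwise proceed.
    """
    steps = round(horizon_s / dt)  # round, not int, guards against float error in the division
    for k in range(steps + 1):
        t = k * dt
        robot_x, robot_y = robot_speed * t, 0.0
        for track in tracks:
            ox, oy = predict(track, t)
            if (ox - robot_x) ** 2 + (oy - robot_y) ** 2 < safety_radius ** 2:
                return "yield"
    return "proceed"


# Both people start about 3.6 m from the robot; only their predicted motion differs.
walking = Track(x=3.0, y=-2.0, vx=0.0, vy=1.0)   # crossing the robot's path
standing = Track(x=3.0, y=-2.0, vx=0.0, vy=0.0)  # waiting beside the aisle
print(choose_action(1.0, [walking]), choose_action(1.0, [standing]))  # yield proceed
```

A snapshot-only system would treat the two people identically; prediction is what separates them.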

NVIDIA Cosmos is aimed at this predictive layer. Its product page describes Cosmos Predict as a world generation model adaptable to physical AI tasks and environments, able to generate predictive video worlds from text, image or video and support custom edge cases, closed-loop policies and multiview, robot-centric simulations.

This does not mean robots are gaining human-like understanding. It means developers are giving machines better tools to anticipate physical consequences. That distinction matters. A robot may predict a falling object without “understanding” it in the human sense. In industry, the philosophical debate is less urgent than the operational result: can the system avoid damage, finish the task and recover when the world changes?

World models may also lower the amount of real-world data needed for edge cases. Rare events are hard to collect because they are rare. Synthetic generation lets teams create variations of near misses, unusual lighting, occlusion, clutter, weather, broken pallets, blocked aisles or unexpected human behavior. This is particularly relevant for drones, self-driving vehicles and mobile robots, where long-tail situations define safety.

The danger is overconfidence. A generated world may look plausible while missing the messy properties that cause real failures. Humans may trust impressive simulations too much. Regulators and insurers will need evidence that simulation coverage maps to operational risk. A physical AI system should not be considered safe because it performs well in a beautiful virtual factory. It must perform safely in the ugly one.

World models also create a new data governance problem. To simulate real workplaces, companies may capture detailed 3D data about factories, homes, streets, hospitals or logistics facilities. That data can reveal layouts, workflows, security weaknesses and human behavior. The more realistic the training world, the more sensitive the dataset may become.

The strategic value is clear. If AI can build useful internal models of physical spaces, it can shift from reactive automation to anticipatory automation. Machines will not only respond to sensor input. They will plan through likely futures. That is the step from “see and stop” to “see, predict and act.”

Edge compute becomes the nervous system

Physical AI cannot depend on cloud inference for every critical action. A robot arm cannot wait for a distant server before deciding whether to stop near a person. A drone cannot lose safe navigation because a connection drops. A vehicle cannot outsource moment-to-moment control to a data center. Cloud systems will train models, update fleets and analyze logs, but physical machines need local intelligence.

This is why edge compute is a central part of the physical AI stack. Onboard processors must ingest high-speed sensor data, run perception models, maintain maps, process instructions, control movement and apply safety rules in real time. The latency budget can be tight. Milliseconds matter when a gripper closes, a wheel turns or a drone corrects in wind.
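
A simple kinematic estimate shows why. Before a machine even begins to brake, it travels its speed multiplied by the decision latency; braking then adds v^2 / (2a) for a constant deceleration a. The Python sketch below compares a hypothetical 10 ms onboard decision with a 200 ms round trip to a remote server, for a robot moving at 2 m/s and braking at 1 m/s^2. All values are assumptions.

```python
def stopping_distance(speed_mps, latency_s, decel_mps2):
    """Distance traveled from hazard detection to standstill, in meters."""
    reaction = speed_mps * latency_s             # still moving while the decision is made
    braking = speed_mps ** 2 / (2 * decel_mps2)  # constant-deceleration braking
    return reaction + braking


onboard = stopping_distance(2.0, latency_s=0.010, decel_mps2=1.0)
remote = stopping_distance(2.0, latency_s=0.200, decel_mps2=1.0)
print(f"onboard: {onboard:.2f} m, remote: {remote:.2f} m, extra: {remote - onboard:.2f} m")
```

The extra 38 cm is travel with no decision made at all, and it grows linearly with both speed and delay.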

NVIDIA’s Jetson Thor announcement shows how hardware makers are packaging that demand. The company says Jetson Thor modules provide up to 2,070 FP4 TFLOPS of AI compute, 128 GB of memory and 40 W to 130 W of configurable power, with far higher AI compute and energy efficiency than the previous-generation Jetson AGX Orin. NVIDIA positions the platform for physical AI and robotics, including humanoids, sensor processing and generative AI at the edge.

The numbers matter less than the direction. Edge hardware is being built for transformer-style workloads, multimodal perception and robot control. Older embedded systems were often designed around narrow computer vision or deterministic controls. Physical AI pushes heavier models onto machines that must still manage heat, battery life, weight, cost and reliability. A robot’s intelligence is constrained by its power budget.

Edge compute also affects privacy and resilience. A home robot that can perform certain tasks locally may send less sensitive video to the cloud. A factory robot that can continue operating during a network interruption may be more useful than one that fails safely every time connectivity dips. A drone with onboard autonomy may handle dynamic obstacles better than one waiting on remote instructions.

Local inference does not remove cloud dependence. Training large models, generating synthetic data and analyzing fleet performance will still happen in data centers. The likely architecture is hybrid: cloud for training and fleet learning, edge for immediate perception and control, local site servers for coordination, and human operators for exceptions. The winners will design the full system rather than selling a model alone.
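
One way to express that hybrid split in code is a deadline: the machine always computes a local plan, asks a remote service for a better one, and uses the remote answer only if it arrives in time. The Python sketch below simulates the remote call with a sleep. `local_plan`, `cloud_plan` and the `simulated_cloud_delay_s` key are hypothetical stand-ins, and safety stops would sit below this layer entirely rather than wait on either plan.

```python
import concurrent.futures as cf
import time

_POOL = cf.ThreadPoolExecutor(max_workers=4)


def local_plan(obs):
    """Cheap onboard plan, always available."""
    return {"route": "local-default", "source": "edge"}


def cloud_plan(obs):
    """Stand-in for a remote planner; the delay simulates network and queueing time."""
    time.sleep(obs.get("simulated_cloud_delay_s", 0.0))
    return {"route": "cloud-optimized", "source": "cloud"}


def plan(obs, deadline_s=0.05):
    fallback = local_plan(obs)  # computed first, so a plan always exists
    future = _POOL.submit(cloud_plan, obs)
    try:
        return future.result(timeout=deadline_s)
    except cf.TimeoutError:
        return fallback  # remote answer too late: act on the local plan


print(plan({"simulated_cloud_delay_s": 0.0}, deadline_s=1.0)["source"])   # cloud
print(plan({"simulated_cloud_delay_s": 0.5}, deadline_s=0.05)["source"])  # edge
```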

The hardware challenge is also economic. AI chips, sensors and batteries add cost. Industrial buyers care about payback. A warehouse does not adopt a robot because the processor is impressive. It adopts the robot because the system improves throughput, reduces injury risk, fills labor gaps or handles work that people do not want to do. Edge hardware must translate into measurable operational value.

The maintenance burden is real. More compute means more software updates, thermal concerns, cybersecurity exposure and component failure points. Physical AI fleets may require IT teams and maintenance teams to merge skills. A failed model update could become an operational disruption. A sensor calibration error could look like a model error. A battery issue could become a productivity issue. The nervous system of physical AI is both computational and mechanical.

Factories are the first serious proving ground

Factories are the natural early home for physical AI because they combine structured environments with valuable physical work. They are not easy environments, but they are measurable. A manufacturer can track cycle time, defects, downtime, safety incidents, scrap, rework, labor allocation and throughput. That makes it possible to evaluate whether a new robot earns its place.

Industrial robot data already shows a large automation base. IFR’s 2025 figures show annual industrial robot installations above half a million for four straight years and a global operational stock of 4.664 million units. The regional concentration matters. Asia, and especially China, has moved fastest, with China accounting for 54% of 2024 deployments and exceeding 2 million robots in operation.

Physical AI builds on that base by targeting tasks that do not fit classic robot cells. Consider parts presentation, bin picking, mobile inspection, material handling, fixture loading, quality checks and line-side logistics. These tasks involve perception and variation. They often sit between fully automated stations. Humans perform them because people adapt quickly to small changes. AI-enabled robots aim to absorb some of that adaptability.

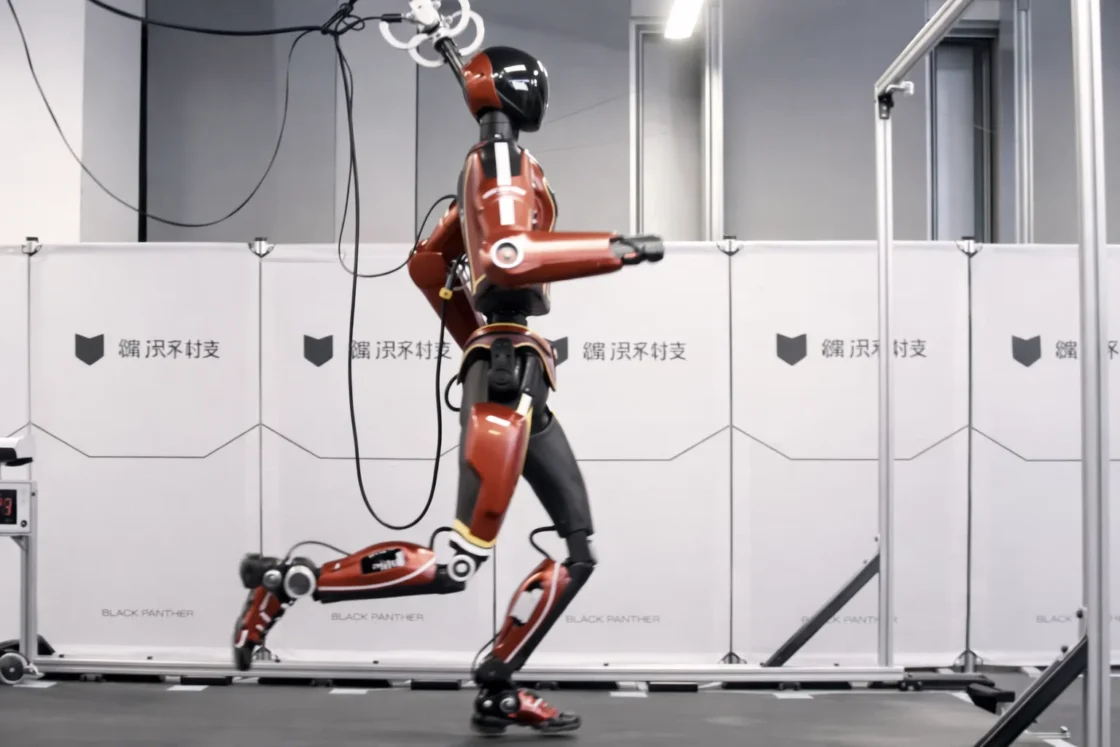

BMW’s work with Figure shows the current state of the field. In September 2024, BMW said Figure 02 was being tested at Plant Spartanburg in South Carolina in a real production environment. The robot was about 170 cm tall, weighed 70 kg and had a 20 kg load capacity. BMW described the test as an effort to determine possible applications for humanoid robots in production.

Figure later said its Figure 02 deployment at BMW ran for 11 months, reached full deployment on an active assembly line within 10 months, ran 10-hour weekday shifts, loaded more than 90,000 parts, logged more than 1,250 runtime hours and contributed to production of more than 30,000 X3 vehicles. BMW’s 2026 press release confirmed many of those figures and said the pilot showed that physical AI could produce measurable value under real industrial conditions.

This does not mean humanoids are ready to flood factories. It means targeted tasks are becoming realistic. BMW described the work as precise removal and positioning of sheet metal parts for welding, a repetitive and physically demanding task. It also stressed integration with production IT, occupational safety, production process management and shop floor logistics. That is the lesson. The robot is only the visible part of the deployment. The integration work decides whether it becomes production.

Factories also offer a useful compromise between structure and complexity. They are more controlled than homes or streets, but less perfect than lab demos. Lighting changes, workers cross paths, parts vary, fixtures wear, schedules shift and production targets do not wait for researchers. A physical AI system that survives factory reality has crossed a meaningful line.

Logistics reveals the economics faster than home robotics

Warehouses and logistics operations may produce the fastest commercial proof of physical AI because the work is repetitive, labor-intensive and measurable. Fulfillment centers move huge volumes of items through receiving, storage, picking, packing, sorting and shipping. Each step creates data. Each delay has cost. Each injury has human and financial consequences. Robots do not need to solve every human task to matter. They need to relieve bottlenecks.

Amazon is the clearest example because it combines scale, internal robotics expertise and dense operational data. Its one-million-robot milestone shows that robotics is already part of logistics at industrial scale. DeepFleet adds a more AI-native layer by coordinating fleet movement rather than treating robots as isolated machines. A 10% gain in fleet travel efficiency matters when applied across hundreds of facilities.

The shift from moving shelves to handling items is harder. Vulcan’s tactile sensing matters because e-commerce inventory is irregular. Items are soft, rigid, reflective, fragile, oddly shaped, partly hidden or packed tightly. A human picker uses sight, touch and judgment without thinking about it. A robot must convert those capabilities into sensors, models and controls. Touch is not a luxury in logistics. It is part of knowing whether an object is safe to move.

Amazon’s Vulcan description points to a practical safety argument. The company says Vulcan can make associate jobs easier and operations more efficient. The strongest near-term use cases are often not full replacement but ergonomic relief: reaching high or low bins, handling awkward repetitive motions, reducing walking distance, moving heavy containers, and keeping people in safer work zones. That framing is credible because many warehouse tasks are physically tiring.

GXO’s Digit deployment with Agility Robotics shows another logistics path. GXO and Agility announced a multi-year robots-as-a-service agreement in 2024 to deploy Digit humanoids in GXO operations, describing it as the first formal commercial deployment of humanoid robots and the first RaaS deployment of humanoids. Agility said Digit began work at a GXO facility near Atlanta on June 5, 2024.

The RaaS model matters because logistics firms often avoid large capital bets on early hardware. A subscription contract can shift some technology risk back to the robotics provider. It also aligns the vendor with uptime. If the customer pays for useful work, the robot company must solve maintenance, support, software updates, fleet management and deployment speed.

Logistics also reveals the difference between task automation and workflow redesign. A robot that moves totes may require changes in storage layout, worker assignments, safety procedures, inventory planning and exception handling. If the surrounding workflow stays human-centered in the wrong way, the robot becomes a fragile add-on. If the workflow is redesigned, robots and people can each do work suited to their strengths.

The strongest logistics deployments will likely be mixed fleets: autonomous mobile robots, fixed arms, mobile manipulators, humanoids where useful, drones for certain movements, and AI systems that coordinate the whole building. The future warehouse will not be a humanoid army. It will be a layered machine ecology. Physical AI wins in logistics when it reduces friction across the flow of goods, not when one robot looks impressive on video.

Humanoids are useful when the world was built for humans

Humanoid robots draw attention because they look like a direct substitute for human labor. That makes them exciting, uncomfortable and easy to overhype. The business reason for humanoids is more specific. Many workplaces were designed around human bodies. Doors, stairs, shelves, handles, carts, tools, fixtures and workstations assume a human reach envelope. A humanoid form may let a robot enter existing spaces without rebuilding everything.

That advantage comes with severe engineering trade-offs. Bipedal locomotion is hard. Human-like hands are hard. Balance, battery life, durability, safety, cost and maintenance are hard. A wheeled robot arm may be better for many tasks. A fixed robot cell may be cheaper and faster. Humanoid shape is not a guarantee of usefulness. It is a bet that generality will outweigh mechanical complexity.

The BMW-Figure case is useful because it avoids the vague claim that humanoids will do everything. Figure 02 handled a specific industrial task: moving sheet metal parts into fixtures. The robot worked in a highly automated body shop, not in an uncontrolled home. BMW involved IT, safety, process management and logistics early. The lesson is not that humanoids are now general workers. The lesson is that a humanoid can become useful when the task, environment and integration are carefully chosen.

Agility’s Digit deployment at GXO follows the same pattern. Digit is designed for logistics work, not as a universal household servant. GXO described it as a multi-purpose, human-centric robot made for logistics work and intended to operate safely in human spaces. The first deployments focus on repetitive tasks that fit the robot’s body and reliability profile.

Tesla’s public positioning has also moved toward physical AI. Its Q4 2025 update said the company was transitioning from a hardware-centric business to a physical AI company, citing FSD, Robotaxi, Cybercab production lines, Optimus design work and AI training infrastructure. The statement is strategically important because it shows how a major vehicle manufacturer is tying autonomy, manufacturing and humanoid robotics into one AI narrative.

Humanoids will face a credibility test different from software. Demos can be choreographed. Real work is repetitive, dirty and dull. Customers will ask whether the robot completes enough tasks per shift, how often it needs help, how quickly it recovers from errors, what happens when it falls, whether it damages goods, how it is cleaned, who maintains it, how it is insured and how workers feel about sharing space with it.

The home market is even harder. Homes are cluttered, private and unstandardized. A household robot must operate around pets, children, fragile objects, personal routines and privacy expectations. 1X is taking early orders for its NEO home robot, with a $499 monthly subscription option and a $20,000 ownership option, while saying U.S. deliveries start in 2026. That is a bold consumer claim, but early home robots will likely rely on constrained tasks, user patience and human assistance more than marketing implies.

Humanoids should be judged by boring metrics. Hours worked without intervention. Cost per useful task. Mean time between failures. Injury reduction. Integration time. Maintenance cost. Worker acceptance. Data privacy. Safety incidents. A humanoid that passes those tests in narrow work is more important than one that performs a flashy demo. The humanoid story becomes real when the robot is still useful on the hundredth shift.
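Most of those metrics are simple ratios over shift logs. The sketch below uses a hypothetical per-shift log format. The `ShiftLog` fields are illustrative, not any vendor's schema. From those logs it computes mean time between failures, hours per human intervention, cost per useful task and throughput.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class ShiftLog:
    """One shift of a hypothetical humanoid deployment (illustrative fields)."""
    hours_worked: float    # hours the robot was scheduled and working
    tasks_completed: int   # useful tasks finished to spec
    interventions: int     # times a person had to step in
    failures: int          # faults that stopped work
    operating_cost: float  # all-in shift cost: lease, power, support


def summarize(shifts: list[ShiftLog]) -> dict[str, float]:
    """Aggregate shift logs into the 'boring' deployment metrics."""
    hours = sum(s.hours_worked for s in shifts)
    tasks = sum(s.tasks_completed for s in shifts)
    interventions = sum(s.interventions for s in shifts)
    failures = sum(s.failures for s in shifts)
    cost = sum(s.operating_cost for s in shifts)
    return {
        # Mean time between failures: operating hours per stopping fault.
        "mtbf_hours": hours / failures if failures else math.inf,
        # Average operating hours between human interventions.
        "hours_per_intervention": hours / interventions if interventions else math.inf,
        "cost_per_task": cost / tasks if tasks else math.inf,
        "tasks_per_hour": tasks / hours if hours else 0.0,
    }


# Two made-up shifts, for illustration only.
shifts = [ShiftLog(8, 400, 2, 0, 320.0), ShiftLog(8, 360, 4, 1, 320.0)]
metrics = summarize(shifts)
```

Breaking these figures out by site and software version would show whether reliability actually improves by the hundredth shift, rather than relying on a single headline number.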

Drones turn autonomy into moving infrastructure

Drones show another side of physical AI: autonomous machines that move through shared airspace rather than floors or roads. They combine robotics, aviation, logistics, regulation and public acceptance. Their value is clearest where roads are slow, infrastructure is weak, deliveries are urgent or inspection is dangerous. Medical logistics, small-package delivery, agriculture, mapping, emergency response and infrastructure inspection all fit the pattern.

Zipline is one of the strongest operational examples. The company says it has flown more than 130 million commercial autonomous miles since its first commercial delivery in 2016 and made more than 2 million deliveries, with an autonomous delivery somewhere in the world every 30 seconds. Those are company-reported figures, but they show that drone autonomy is already far beyond small pilots in some markets.

Wing, an Alphabet company, frames drones as lightweight, highly automated delivery systems for small packages between businesses, homes and healthcare providers. Reuters reported in March 2026 that Wing planned to start drone deliveries in California’s San Francisco Bay Area, that it had completed more than 750,000 deliveries and that it served more than two million customers across parts of the U.S.

The technical problem for drones is not only flight. It is integration into airspace and neighborhoods. A delivery drone needs route planning, obstacle detection, weather handling, battery management, payload safety, landing or lowering mechanisms, noise management, fleet coordination and emergency procedures. It also needs permission to operate beyond the pilot’s line of sight if the business is to scale.

That is why the FAA’s beyond visual line of sight rulemaking is pivotal. In August 2025, the FAA published a proposed rule for normalizing BVLOS drone operations, with requirements covering operations, aircraft manufacturing, separation from other aircraft, operational authorizations, security, information reporting and recordkeeping. The Federal Register notice says the proposed rule is intended to create a repeatable framework for wider UAS adoption, including package delivery, agriculture, aerial surveying, public safety and other uses.

Regulation is not a footnote here. It is part of the product. A drone delivery company cannot scale through technical performance alone if every new route requires bespoke approvals. BVLOS rules could move the industry from waiver-by-waiver operations toward a more repeatable path. That does not mean instant adoption. It means the legal architecture may start to match the technology.

Public acceptance will remain uneven. People may accept medical deliveries faster than fast-food deliveries. They may tolerate drones over rural routes more easily than dense neighborhoods. Noise, privacy, crash risk and visual clutter matter. Physical AI in the air must earn trust not only from regulators but from people who never ordered the delivery.

Drones also show how physical AI may become infrastructure. A drone fleet is not just a set of aircraft. It is charging, dispatch, routing, maintenance, monitoring, compliance, weather systems, launch sites, payload handling and customer interfaces. When mature, the system behaves less like a gadget category and more like a logistics network.

Public roads are the hardest public trust test

Autonomous vehicles are the most visible form of physical AI in public space because they share roads with people who did not opt in. A warehouse robot operates inside a company-controlled environment. A robotaxi operates in a city. That changes the trust model. The public expects oversight, crash reporting, emergency coordination, insurance clarity and accountability when something goes wrong.

Waymo provides the clearest current scale among robotaxi operators. Its safety impact dashboard says that through December 2025, Waymo had driven 170.7 million rider-only miles without a human driver across operating cities. The same dashboard compares Waymo crash rates with human benchmarks and reports lower rates for serious injury or worse crashes, airbag deployment crashes and injury-causing crashes over the same distance in its operating areas.

The key phrase is “operating areas.” Autonomous vehicle performance is bounded by operational design domain: mapped areas, road types, weather, speed ranges, lighting, traffic patterns and regulatory permissions. A system may be strong in Phoenix and less ready for heavy snow. It may handle surface streets before freeways. It may work in a geofenced service area and fail outside it. Physical AI does not become universal by being good somewhere. It must define where it is safe enough to act.
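One way to make an operational design domain concrete is to treat it as an explicit check that runs before and during a trip. The sketch below is a simplified illustration, not any operator's implementation. The geofence is a bounding box rather than a mapped polygon, and the field names and example coordinates are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class OperationalDesignDomain:
    """Hypothetical ODD: where and under what conditions the system was validated."""
    min_lat: float
    max_lat: float
    min_lon: float
    max_lon: float
    max_road_speed_kph: float
    allowed_weather: frozenset[str]
    night_validated: bool


@dataclass(frozen=True)
class Conditions:
    """Current conditions as reported by perception, maps and weather feeds."""
    lat: float
    lon: float
    road_speed_limit_kph: float
    weather: str
    is_night: bool


def odd_violations(odd: OperationalDesignDomain, c: Conditions) -> list[str]:
    """Return every reason the current conditions fall outside the ODD."""
    reasons = []
    if not (odd.min_lat <= c.lat <= odd.max_lat and odd.min_lon <= c.lon <= odd.max_lon):
        reasons.append("outside geofence")
    if c.road_speed_limit_kph > odd.max_road_speed_kph:
        reasons.append("road faster than validated speed range")
    if c.weather not in odd.allowed_weather:
        reasons.append(f"weather '{c.weather}' not validated")
    if c.is_night and not odd.night_validated:
        reasons.append("night operation not validated")
    return reasons


# Hypothetical service area and validation envelope.
odd = OperationalDesignDomain(
    min_lat=33.3, max_lat=33.6, min_lon=-112.2, max_lon=-111.8,
    max_road_speed_kph=72.0,
    allowed_weather=frozenset({"clear", "rain"}),
    night_validated=True,
)
```

For example, `odd_violations(odd, Conditions(33.45, -112.0, 56.0, "snow", True))` reports the snow as unvalidated. A non-empty list would typically trigger a fallback, such as declining the trip or pulling over. This sketch does not model that fallback.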

Regulators are trying to make that boundary visible. NHTSA’s Standing General Order requires certain manufacturers and operators to report crashes involving automated driving systems or Level 2 advanced driver assistance systems. NHTSA says the order gives it timely information about real-world crashes and lets it investigate safety concerns or defects.

California’s DMV requires autonomous vehicle testing manufacturers in its programs to submit annual disengagement reports showing how often vehicles disengaged from autonomous mode during tests. Disengagement data is imperfect because companies test different routes and define situations differently, but it gives regulators and researchers one view into system maturity and failure modes.

Road autonomy also highlights the difference between safety statistics and public experience. A robotaxi fleet may have lower crash rates than human drivers and still create public frustration if vehicles block emergency responders, stop awkwardly, cluster in neighborhoods or behave in ways people find hard to predict. Trust is not built by averages alone. It is built by visible competence in edge cases.

The public road domain will influence physical AI elsewhere. Safety case methods, incident reporting, geofencing, fleet monitoring, remote assistance, cybersecurity and insurance models developed for autonomous vehicles may inform drones, delivery robots and mobile machines. The road is the harshest classroom.

Vehicle autonomy also shows how physical AI changes company identity. Tesla’s Q4 2025 shareholder material explicitly linked FSD, robotaxi services, Cybercab, Optimus and AI infrastructure to a transition toward being a physical AI company. Whether Tesla delivers on every timeline is a separate question. The strategic move is clear: vehicle companies increasingly see autonomy and robotics as a common AI platform, not as separate side projects.

The core public issue remains accountability. If a model makes a wrong call, who is responsible: manufacturer, operator, fleet manager, software provider, mapping provider, safety driver, remote assistant, owner or municipality? Physical AI forces that question into law, insurance and daily life.

Smart spaces matter as much as smart machines

Physical AI is often imagined as intelligence inside a moving machine. That is only one architecture. Some of the most valuable systems may come from smart spaces: factories, warehouses, ports, hospitals, airports, streets and campuses instrumented with sensors and coordinated software. In these environments, intelligence is distributed across machines, cameras, maps, traffic systems and control rooms.

A smart warehouse may track people, forklifts, autonomous mobile robots, inventory containers, blocked aisles and picking stations. A smart factory may coordinate robot arms, mobile carts, inspection cameras, digital work instructions and maintenance systems. A smart city corridor may manage autonomous shuttles, traffic signals, curb space and emergency vehicles. The machine becomes safer and more useful when the environment shares information.

NVIDIA’s own physical AI definition includes smart spaces, noting large indoor and outdoor areas such as factories and warehouses where fixed cameras and computer vision can improve route planning and operational tracking. This is a broad but accurate view. Physical AI is not confined to mobile robots; it includes environments that perceive and coordinate physical activity.

Smart spaces are attractive because fixed infrastructure can simplify autonomy. A ceiling camera can see occlusions a robot cannot. A warehouse management system knows inventory priorities. A building map can define zones, speeds and permissions. A control room can coordinate exceptions. Instead of asking every robot to understand everything alone, the site becomes a shared intelligence layer.
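A minimal sketch of the idea that a building map defines zones, speeds and permissions follows. It assumes rectangular zones and a hypothetical role scheme. A real site would use richer geometry and feed live sensor data into the same kind of lookup.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Zone:
    """A rectangular area of a hypothetical site map, in metres."""
    name: str
    x_min: float
    x_max: float
    y_min: float
    y_max: float
    max_speed_mps: float
    allowed_roles: frozenset[str]

    def contains(self, x: float, y: float) -> bool:
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def allowed_speed(zones: list[Zone], x: float, y: float, role: str,
                  site_default_mps: float = 1.5) -> float:
    """Speed limit for a machine of `role` at (x, y); 0.0 means it must not be there."""
    limit = site_default_mps
    for zone in zones:
        if zone.contains(x, y):
            if role not in zone.allowed_roles:
                return 0.0
            # Where zones overlap, the most restrictive limit wins.
            limit = min(limit, zone.max_speed_mps)
    return limit


site = [
    Zone("pick_station", 0, 10, 0, 5, 0.5, frozenset({"amr", "arm"})),
    Zone("main_aisle", 0, 40, 5, 8, 1.2, frozenset({"amr", "forklift"})),
    Zone("people_only_dock", 20, 30, 10, 20, 0.0, frozenset()),
]
```

Keeping these rules in one shared map means every robot on the site gets the same answer. Each machine does not need to infer the rules on its own.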

The risk is dependency. If the environment does too much, robots may become fragile outside that site. If the site sensors fail, machines may lose confidence. If data integration is poor, the system may make decisions from outdated information. Smart spaces also raise surveillance concerns. Tracking worker movement for safety can slide into productivity monitoring. Public-space sensing can slide into privacy harm.

The best industrial smart spaces will make their purpose and boundaries clear. Worker safety systems should not quietly become disciplinary systems. Public-space robotics should not use autonomy as a backdoor for unnecessary surveillance. Physical AI governance must cover the room, not only the robot.

Smart spaces also create a strategic divide between companies with rich operational data and those without. Amazon can train and coordinate robots across hundreds of facilities because it controls the environment, process and data. Smaller firms may rely on vendors, integrators and shared platforms. That could concentrate physical AI capability among large operators unless open standards and modular systems lower the barrier.

OpenUSD and similar standards matter in this context because physical AI systems need shared representations of 3D environments, assets and semantics. A factory digital twin that only works inside one vendor stack may lock customers in. A more interoperable environment lets different simulation, robotics and planning tools talk to each other.

The physical world is too complex for isolated intelligence. Smart machines will need smart places, and smart places will need rules about what they watch, store and control.

Physical AI turns data into a material asset

Data has always mattered in AI, but physical AI changes its character. Text data describes language. Image data captures visual patterns. Robot data captures actions, failures, contacts, forces, trajectories, timing and recovery. A physical AI dataset is not only what the world looked like. It is what the machine did and what happened next.

That makes robot data expensive. A human demonstration requires hardware, time, a task setup and often a skilled operator. A failed attempt can damage equipment. A dataset from one robot may not transfer cleanly to another because embodiments differ. A two-finger gripper, suction cup, humanoid hand, drone rotor system and vehicle steering stack do not share action spaces.

The Open X-Embodiment dataset is important because it tries to standardize and pool real robot trajectories across embodiments. More than one million trajectories across 22 robot embodiments is large for robotics, even if small compared with web text corpora. The effort signals that robotics is pursuing its own version of foundation-model scaling, with far costlier data collection.

Synthetic data is the other path. If real robot data is expensive, simulation can generate many variations. NVIDIA’s physical AI material argues that synthetic data from digital twins and world foundation models can train physical AI models by simulating interactions, sensor outputs and physical dynamics.

The strongest systems will combine real and synthetic data. Real data anchors the model in actual hardware behavior. Synthetic data expands coverage. Field logs reveal rare operational failures. Human demonstrations teach strategies. Simulation explores dangerous or unusual cases. The data advantage in physical AI will belong to companies that close the loop from deployment back to training.
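A common, simple way to combine sources during training is to fix the share of each source in every batch. The sketch below assumes that approach. The 30% real fraction in the usage line is an arbitrary illustration, not a recommended ratio. Field logs could be added as a third pool in the same way.

```python
import random


def mixed_batch(real: list, synthetic: list, batch_size: int,
                real_fraction: float, rng: random.Random) -> list:
    """Sample a training batch with a fixed share of real-robot episodes."""
    if not 0.0 <= real_fraction <= 1.0:
        raise ValueError("real_fraction must be between 0 and 1")
    n_real = round(batch_size * real_fraction)
    # Sample with replacement so a small real pool can still fill its share.
    batch = rng.choices(real, k=n_real) + rng.choices(synthetic, k=batch_size - n_real)
    rng.shuffle(batch)  # avoid ordering effects within the batch
    return batch


real_episodes = ["real_0", "real_1"]
sim_episodes = ["sim_0", "sim_1", "sim_2", "sim_3"]
batch = mixed_batch(real_episodes, sim_episodes, batch_size=10,
                    real_fraction=0.3, rng=random.Random(0))
```

Fixing the real share deliberately oversamples scarce hardware data. The intent is to keep simulated episodes from drowning it out, even when they vastly outnumber real ones.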

That loop has a competitive consequence. A company with thousands of robots in operation collects task attempts, intervention logs, sensor traces, maintenance events and environmental variation every day. Each deployment becomes a data source. This is similar to the advantage large consumer platforms gained from user interaction data, but the data here is physical and operational.

The data is also sensitive. Warehouse data can reveal throughput, inventory, staffing and customer demand. Factory data can reveal production methods and supplier relationships. Home robot data can reveal intimate household routines. Road autonomy data can capture pedestrians, vehicles and locations. Physical AI firms will need privacy, retention and access policies that match the sensitivity of spatial data.

Data quality may matter more than raw volume. A million poor demonstrations can teach bad habits. A simulation that misses friction or deformation can mislead. A fleet log without clear labels may be hard to use. In robotics, metadata about embodiment, task, environment, success criteria, failure reason and safety state can be as valuable as video.
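That metadata can be enforced at ingestion time. The schema below is hypothetical and is not the Open X-Embodiment format. It shows how episodes missing a failure reason or recorded frames can be flagged before they reach training.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Outcome(Enum):
    SUCCESS = "success"
    FAILURE = "failure"
    SAFETY_STOP = "safety_stop"


@dataclass
class Episode:
    """One robot attempt plus the metadata that makes it reusable (hypothetical schema)."""
    embodiment: str           # e.g. "6dof_arm_two_finger_gripper"
    action_space: str         # e.g. "end_effector_delta_pose"
    task: str
    environment: str
    source: str               # "real", "sim" or "teleop"
    outcome: Outcome
    failure_reason: str | None = None
    safety_state: str = "nominal"
    frames: list[dict] = field(default_factory=list)  # observations and actions

    def problems(self) -> list[str]:
        """Metadata gaps that would make this episode hard to learn from."""
        issues = []
        if self.outcome is not Outcome.SUCCESS and not self.failure_reason:
            issues.append("unsuccessful episode without a failure reason")
        if self.source not in {"real", "sim", "teleop"}:
            issues.append(f"unknown source '{self.source}'")
        if not self.frames:
            issues.append("no recorded frames")
        return issues


def usable(episodes: list[Episode]) -> list[Episode]:
    """Keep only episodes whose metadata is complete enough to train on."""
    return [e for e in episodes if not e.problems()]
```

Recording `embodiment` and `action_space` explicitly matters because, as noted above, a gripper trajectory and a drone trajectory do not share an action space. A pooled dataset needs that context attached to every episode.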

Companies often treat data collection as a byproduct. Physical AI companies must treat it as production infrastructure. Sensors, annotation, storage, replay, simulation, validation and privacy controls are part of the product.

Safety moves from content policy to operational engineering

AI safety in software often focuses on harmful content, hallucination, bias, privacy and misuse. Those concerns still matter in physical AI, but the safety center of gravity shifts. A physical AI system can collide, drop, pinch, cut, burn, block, misroute, startle, surveil or fail to stop. Safety becomes a matter of engineering controls, standards, testing, monitoring and response.

Classic robotics safety already has mature concepts: guarding, emergency stops, speed and separation monitoring, force limits, risk assessment and safe integration. Physical AI does not replace those concepts. It puts adaptive models inside systems that still need them. A model’s intelligence should never be the only safety layer between a machine and a person.
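The layering can be sketched as a filter between a learned policy and the motors. Whatever speed the model requests, an independent check caps it based on the measured distance to the nearest person, and it forces zero when an emergency stop is active. This is a simplified illustration loosely inspired by speed and separation monitoring. The distance formula and parameter values are assumptions for the example, not taken from ISO 10218 or any other standard. A real implementation would run in certified safety hardware rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SeparationParams:
    """Illustrative values only; real ones come from a site risk assessment."""
    human_speed_mps: float = 1.6   # assumed approach speed of a person
    reaction_time_s: float = 0.1   # sensing + decision latency
    stop_time_s: float = 0.3       # time for the robot to brake to zero
    margin_m: float = 0.2          # extra buffer for sensor uncertainty


def max_safe_speed(separation_m: float, p: SeparationParams) -> float:
    """Highest robot speed that can still stop before the gap closes.

    Simplified model: the person keeps approaching for the whole reaction
    and stopping time; the robot moves at full speed during the reaction
    time, then decelerates uniformly (covering v * t_stop / 2) while braking.
    """
    budget = (separation_m
              - p.human_speed_mps * (p.reaction_time_s + p.stop_time_s)
              - p.margin_m)
    return max(0.0, budget / (p.reaction_time_s + p.stop_time_s / 2))


def filter_command(model_speed_mps: float, separation_m: float,
                   estop_active: bool,
                   p: SeparationParams = SeparationParams()) -> float:
    """Cap the learned policy's requested speed; the model cannot override this."""
    if estop_active:
        return 0.0
    requested = max(0.0, model_speed_mps)
    return min(requested, max_safe_speed(separation_m, p))
```

The key design choice is that `filter_command` never consults the model's confidence. A policy that is wrong about the scene still cannot push the robot past the separation limit.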

Google DeepMind’s Gemini Robotics announcement recognizes this layered reality. It says physical safety is a longstanding concern in robotics and mentions classic measures such as avoiding collisions, limiting contact forces and ensuring dynamic stability. It also says Gemini Robotics-ER can interface with low-level safety-critical controllers specific to each robot embodiment.

ISO 10218-1:2025 and ISO 10218-2:2025 show how formal standards are being updated around industrial robots and robot applications. ISO describes 10218-1 as safety requirements for industrial robots themselves, while 10218-2 covers integration into complete systems and robot cells. These standards do not solve every AI question, but they are part of the safety foundation for industrial robotics.

Safety assurance for physical AI must cover the whole lifecycle. Design safety asks what hazards the system creates. Training safety asks what data and objectives shape behavior. Deployment safety asks where and under what conditions the system may operate. Runtime safety asks how it detects unsafe states and stops. Post-market safety asks how incidents are reported, investigated and corrected.

Autonomous vehicles offer one template. NHTSA’s crash reporting order creates a mechanism for real-world incident visibility, while California disengagement reports provide one kind of test-program transparency. These tools are imperfect, but they acknowledge a basic truth: safety must be observed after deployment, not declared once before launch.

Drones offer another template. The FAA’s proposed BVLOS framework includes requirements for operations, manufacturing, separation, authorizations, security and recordkeeping. This is a systems approach, not a pure device approval. The operator, aircraft, data services and airspace procedures all matter.

Physical AI safety also includes human factors. Workers must understand where robots move, what signals mean, how to stop machines, how to report errors and what tasks remain human-owned. Public users must understand when they are near an autonomous system and what behavior to expect. Confusing machines create risk even when they are statistically safe.

The hard truth is that physical AI will fail. Good governance assumes failure and designs response. Logs, root-cause analysis, recalls, software rollbacks, remote disablement, repair procedures and compensation pathways are part of trust. The safest physical AI companies will be the ones that treat incidents as engineering evidence, not public-relations problems.

Regulation is becoming part of the product

Physical AI enters regulated domains faster than screen-based AI. Vehicles, drones, machines, medical robots and workplace equipment already face safety rules. A company building physical AI cannot treat regulation as a late-stage compliance task. Regulation shapes design, data collection, deployment speed and business models.

The European Union’s AI Act adds a horizontal AI governance layer. The European Commission says high-risk AI systems face duties such as risk assessment and mitigation, high-quality datasets, logging, documentation, information for deployers, human oversight, robustness, cybersecurity and accuracy. It says high-risk rules take effect in August 2026 and August 2027.

The EU Machinery Regulation adds another layer for machinery placed on the European market. EU-OSHA summarizes Regulation 2023/1230 as laying down health and safety requirements for design and construction, replacing the Machinery Directive and requiring conformity assessment, technical documentation, instructions and CE marking for machinery and related products.

For physical AI companies, this means two worlds overlap. A robot may be both machinery and an AI system. A drone may be both aircraft and autonomous AI. A vehicle may be both a motor vehicle and a model-driven decision system. A hospital robot may face medical, workplace and AI rules. Compliance will not be one checklist. It will be a stack of obligations tied to the machine’s role.

The U.S. approach is more sector-specific. NHTSA handles vehicle safety tools such as crash reporting. The FAA handles drone integration and BVLOS rules. Workplace safety agencies, product liability law, local rules and insurance requirements fill other gaps. This can be flexible, but also fragmented. Companies deploying physical AI across states and countries will need regulatory operations as a core capability.

Regulation can slow adoption, but it can also create markets. Clear BVLOS rules may make drone delivery easier to finance. Robot safety standards can make industrial buyers more comfortable. Incident reporting can build public trust if data is credible. Certification pathways can separate serious operators from demo-driven firms.

The danger is rules that lag technology or focus on the wrong layer. A rule written for static automation may not fit a model that updates. A rule focused only on hardware may miss fleet-learning behavior. A rule focused only on the model may miss workplace integration. Regulators will need technical fluency, and companies will need transparent evidence.

Physical AI may also push procurement rules to evolve. Industrial buyers and public agencies will ask for safety cases, cybersecurity documentation, data-handling policies, insurance coverage, human oversight design and maintenance plans. A buyer choosing a robot will not only compare payload and price. It will compare operational accountability.

Regulation is often described as external pressure. For physical AI, it is closer to a design input. The system must be built to show what it did, where it operated, why it stopped, who intervened, how it was updated and how incidents are corrected.
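The requirements in that last sentence map naturally onto a structured, append-only event record. The field names and identifiers below are hypothetical. A production system would also need tamper-evident storage, retention rules and access controls, which this sketch does not attempt.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class OperationalEvent:
    """Hypothetical record of what happened, where, why it stopped, who acted, which build."""
    robot_id: str
    timestamp_utc: str
    location: str                     # site zone, map coordinates or route segment
    action: str                       # what the system did
    software_version: str             # model and software build that was running
    stop_reason: str | None = None    # populated when the system halted
    intervened_by: str | None = None  # operator or remote assistant ID, if any


def append_event(log: list[str], event: OperationalEvent) -> None:
    """Append one JSON line; earlier lines are never modified."""
    log.append(json.dumps(asdict(event), sort_keys=True))


def now_utc() -> str:
    return datetime.now(timezone.utc).isoformat()


audit_log: list[str] = []
append_event(audit_log, OperationalEvent(
    robot_id="amr-017", timestamp_utc=now_utc(), location="aisle_12",
    action="protective_stop", software_version="nav-2.4.1",
    stop_reason="person_in_path", intervened_by="operator-42"))
```

Recording the software version on every event is what later lets an investigator tie an incident to a specific update and confirm that a rollback or fix actually took effect.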

Labor impact will be workflow redesign, not simple replacement

The labor debate around physical AI is often framed as robots replacing workers. That will happen in some tasks and workplaces, but the broader effect is messier. Robots change work by shifting tasks, redesigning workflows, creating maintenance and oversight roles, reducing some physical strain, and making certain operations possible with fewer people. The unit of analysis should be the workflow, not only the job title.

The OECD’s AI and work material captures the mixed picture: AI may improve productivity, job quality and occupational safety and health, but it also creates risks including automation, loss of agency, bias, privacy breaches and lack of transparency. Those risks are sharper in physical AI because the system may monitor, direct or physically share space with workers.

McKinsey Global Institute’s 2025 research frames future work as a partnership between people, agents and robots. It estimates that today’s technologies could theoretically automate more than half of current U.S. work hours, while stressing that this is not a forecast of job losses and that adoption takes time. It also estimates about $2.9 trillion in potential U.S. economic value by 2030 if organizations redesign workflows and prepare workers.

The gap between technical potential and actual adoption is important. A task may be technically automatable and still not automated because the robot is too expensive, too slow, too unreliable, too hard to integrate, socially unacceptable or legally blocked. Physical AI adoption will depend on capital budgets, labor markets, safety records and managerial competence.

Warehouses show both sides. Robots may reduce walking, lifting and awkward reaching. They may also increase pace, surveillance and dependence on algorithmic scheduling if implemented poorly. A robot that handles dangerous high-reach storage is a safety gain. A robot that turns human workers into exception handlers under constant monitoring may degrade job quality. Physical AI is not automatically pro-worker or anti-worker. The deployment model decides.

Factories face similar trade-offs. Robots may take physically exhausting tasks, but they may also displace entry-level roles that once served as training pathways. New jobs will appear in robot maintenance, fleet supervision, safety validation, data review, integration and process design. Those jobs may require different skills and may not appear in the same location or wage band as the jobs affected.

The most credible adoption strategies involve workers early. BMW emphasized early involvement of production IT, occupational safety, process management and logistics in its humanoid deployment. It also said employee interest and acceptance grew as the robot became part of daily work. That matters because workplace robots succeed only when people trust, understand and know how to work around them.

Training will be a bottleneck. A maintenance technician may need to understand mechanical systems, sensors, networks and AI diagnostics. A supervisor may need to manage mixed teams of people and machines. A safety officer may need to interpret logs and failure modes. Companies that buy robots without investing in human capability will struggle.

The labor story will differ by region. Aging societies and tight labor markets may adopt service robots to fill gaps. High-wage logistics markets may adopt mobile robots faster. Manufacturing powerhouses may use physical AI to protect competitiveness. Lower-wage markets may adopt more slowly unless safety, quality or export demands justify it.

The central labor question is not “Will robots replace people?” It is “Who controls the redesign of work?” If workers are included, physical AI can remove harmful tasks and create skilled roles. If the redesign is imposed only as cost cutting, trust will break.

Capital spending turns AI into industrial infrastructure

Screen-based AI created huge demand for data centers, GPUs and cloud services. Physical AI adds another layer: machines, sensors, batteries, factories, simulation environments, site infrastructure, service networks and fleet operations. It turns AI from a software subscription into an industrial capital cycle.

NVIDIA’s strategic language reflects this. Jensen Huang has said that physical AI will affect the $50 trillion manufacturing and logistics industries, with cars, trucks, factories and warehouses becoming robotic and embodied by AI. The statement is promotional, but it accurately points to the scale of physical work relative to digital office work.

Capital intensity will shape winners and losers. A startup can ship an AI app with a small team and cloud APIs. A robotics company must build or source hardware, manage supply chains, certify systems, support field deployments, repair failures and finance inventory. Customers may demand pilots before contracts. Sales cycles may be long. Margins may be pressured by support costs.

This is why many robotics companies will choose focused markets. A company that tries to build a universal robot for all sectors may burn capital before reaching reliability. A company focused on warehouse tote handling, hospital delivery, crop spraying, inspection or machine tending can tune hardware and software to a narrower economic problem.

Large companies have advantages. Amazon has facilities, data and internal demand. Tesla has manufacturing, vehicle autonomy infrastructure and AI compute. NVIDIA sells the compute and simulation stack. Alphabet has Waymo and Wing. BMW, Foxconn and other industrial firms have factories where physical AI can be tested against real workflows. Physical AI rewards companies that control deployment environments.

Smaller firms are not excluded, but they may need partnerships. Integrators, robot-as-a-service models, open-source tools, shared datasets and standardized simulation formats can lower barriers. GXO’s RaaS agreement with Agility shows one structure: the customer gets access to robotics without owning every layer, while the robotics provider learns from real operations.

Financing models will matter. Customers may prefer paying per pick, per mile, per inspection, per hour or per machine-month rather than buying hardware. Vendors may need to carry capital costs and prove uptime. That shifts robotics toward service economics. It also means balance sheets matter more than in pure software.

The hardware bill arrives before the upside. A company must pay for prototypes, tooling, sensors, compute, field teams and safety work before fleet data compounds. Investors may underestimate the time needed. Industrial customers may underestimate integration cost. Physical AI will produce large markets, but not with the effortless margins of the best software.

The strongest business cases will start where pain is acute: labor shortages, injury risk, dangerous environments, high-throughput logistics, quality demands, remote operations and around-the-clock inspection. Capital will flow toward clear pain before general-purpose visions.

The hardware bill comes before the software upside

Physical AI sits at the intersection of two cost curves. Software intelligence may improve quickly as models, data and compute advance. Hardware cost declines more slowly. Motors, batteries, sensors, casings, connectors, safety systems and maintenance labor do not follow the same curve as inference cost. This mismatch is one reason physical AI will feel slower than digital AI.

A warehouse robot must be built, shipped, installed, charged, maintained and repaired. A drone must meet aviation requirements and survive weather. A humanoid needs actuators, joints, hands, batteries, sensors, compute and protective design. A self-driving vehicle needs redundant sensing, compute, braking, steering, power and safety systems. Bodies are expensive.

The financial question is not whether a robot is intelligent. It is whether intelligence justifies the body. A $20,000 home robot must perform enough useful work to make sense for a household, not only impress early adopters. A six-figure industrial robot must raise throughput, reduce injuries or fill gaps enough to pay back. A robotaxi fleet must cover vehicle cost, cleaning, charging, maintenance, remote support, insurance and local operations.
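The payback test in this paragraph reduces to simple arithmetic: divide the up-front price by the net monthly value the machine creates after its own running costs. A minimal sketch follows. The function name and figures are hypothetical. A real business case would also account for discounting, financing costs and ramp-up time, which this sketch deliberately ignores.

```python
import math


def payback_months(upfront_cost: float, monthly_value: float, monthly_running_cost: float) -> float:
    """Months until cumulative net value repays the up-front cost (no discounting).

    monthly_value: labor, throughput or injury-cost savings the robot delivers.
    monthly_running_cost: maintenance, charging, support, insurance, remote help.
    Returns math.inf when net value is zero or negative: the robot never pays back.
    """
    net_monthly = monthly_value - monthly_running_cost
    if net_monthly <= 0:
        return math.inf
    return upfront_cost / net_monthly


# Illustrative: a six-figure industrial robot that clears $6,500 a month net.
print(round(payback_months(150_000, 9_000, 2_500), 1))  # prints 23.1
# A machine whose running costs exceed the value it creates never pays back.
print(payback_months(20_000, 300, 400))  # prints inf
```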

Hardware also slows iteration. Software teams can update daily. Robot teams must test updates against physical safety and mechanical wear. A model change that improves one task may create new risks in another. A gripper redesign may require new tooling. A sensor placement change may alter perception data. A battery upgrade may affect weight and balance.

This makes simulation and modular design valuable. If a company can test software changes in digital twins before field rollout, it shortens the iteration loop. If hardware modules are replaceable, maintenance becomes easier. If one compute platform supports multiple robot types, software reuse improves.

NVIDIA’s Jetson Thor positioning reflects demand for powerful robot compute in compact, energy-constrained machines. The platform’s emphasis on multimodal sensor processing, real-time performance and generative AI at the edge shows the hardware challenge: robots need data-center-like model capabilities under robot-like constraints.

Supply chain risk is another constraint. Physical AI depends on chips, cameras, lidar, radar, motors, gearboxes, batteries, rare earth materials, precision manufacturing and industrial integration. Geopolitics can affect all of them. Companies may seek domestic or allied supply chains for critical robots, especially in defense, logistics, transportation and infrastructure.

Hardware failures are also reputational failures. A chatbot outage annoys users. A robot fleet outage may stop a warehouse. A drone battery defect may ground operations. A vehicle sensor issue may trigger recalls. Physical AI companies need service organizations, spare parts and field diagnostics from the start.

The software upside is still powerful. Once a fleet is deployed, better models can improve utilization, reduce interventions and expand tasks without replacing every machine. That is the prize: hardware as the installed base, software as the compounding intelligence. But the installed base must survive long enough to learn.

Reliability is the metric that separates demos from deployment

Robotics demos are often optimized for attention. Deployment is optimized for reliability. The gap between the two is where many physical AI companies will fail. A robot that succeeds once on stage has proved almost nothing about real operations. A robot that completes thousands of dull tasks with low intervention has proved something valuable.

Reliability has many layers. Task reliability asks whether the robot completes the job. Safety reliability asks whether it avoids harm. Mechanical reliability asks whether parts keep working. Software reliability asks whether updates, networking and inference remain stable. Operational reliability asks whether the whole workflow keeps moving when something breaks. A deployed robot is judged by the weakest layer.
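One way to see why the weakest layer dominates is to treat each layer as the probability that it holds up through a given shift, then multiply the probabilities. This assumes the layers fail independently, which is a simplification. Under that assumption the whole system can never be more reliable than its weakest layer, and it is usually noticeably less reliable. The layer names mirror the paragraph. The probabilities are invented for illustration.

```python
import math


def system_reliability(layers: dict[str, float]) -> float:
    """Probability that every layer holds up, assuming independent failures."""
    return math.prod(layers.values())


# Hypothetical per-shift probabilities for the five layers named above.
layers = {
    "task": 0.99,
    "safety": 0.999,
    "mechanical": 0.98,
    "software": 0.995,
    "operational": 0.97,
}
weakest = min(layers, key=layers.get)
print(weakest, layers[weakest])              # prints operational 0.97
print(round(system_reliability(layers), 3))  # prints 0.935
```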

Industrial buyers tend to be unforgiving because downtime has clear cost. A robot arm that stops a production line may be more expensive than the labor it was meant to reduce. A warehouse robot that blocks a narrow aisle can create cascading delays. A drone fleet grounded by weather may fail a delivery promise. A home robot that frequently asks for help becomes a burden.

Mean time between interventions may become one of the most useful metrics in physical AI. Interventions include human rescue, remote teleoperation, manual reset, maintenance, route clearing, failed picks and safety stops. A robot with a high task success rate but frequent small interventions may still be operationally weak. The labor saved by automation can be consumed by babysitting.
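Mean time between interventions is easy to compute once events are logged consistently. The sketch below assumes a hypothetical log in which each event is a short string tag. The tag names follow the intervention types listed above, and the five minutes of human time per intervention is an assumption. It illustrates the paragraph's point: a fleet can post a task success rate near 98% while still pulling a person in more than once every two hours.

```python
from collections import Counter
from typing import Iterable

# Event tags that count as interventions; the names are illustrative.
INTERVENTION_TAGS = frozenset({
    "rescue", "teleop", "manual_reset", "maintenance",
    "route_clearing", "failed_pick", "safety_stop",
})


def intervention_report(events: Iterable[str], operating_hours: float,
                        minutes_per_intervention: float) -> dict[str, float]:
    counts = Counter(events)  # missing tags count as zero
    done, failed = counts["task_done"], counts["failed_pick"]
    interventions = sum(counts[tag] for tag in INTERVENTION_TAGS)
    attempts = done + failed
    return {
        # Success rate only compares completed tasks with failed ones...
        "task_success_rate": done / attempts if attempts else 0.0,
        # ...while MTBI counts every time a person had to step in.
        "mtbi_hours": operating_hours / interventions if interventions else float("inf"),
        # Human time consumed by "babysitting" the fleet.
        "human_hours": interventions * minutes_per_intervention / 60,
    }


events = ["task_done"] * 1000 + ["failed_pick"] * 20 + ["manual_reset"] * 25 + ["teleop"] * 15
report = intervention_report(events, operating_hours=100, minutes_per_intervention=5)
print({k: round(v, 2) for k, v in report.items()})
# prints {'task_success_rate': 0.98, 'mtbi_hours': 1.67, 'human_hours': 5.0}
```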

The autonomy stack must handle graceful degradation. If a robot is uncertain, it should slow, ask for help, retreat to a safe zone or hand off the task. If a sensor fails, it should know whether it can continue safely. If a model update performs poorly, rollback should be fast. If a fleet management system goes down, machines should fail in safe and predictable ways.
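The degradation rules in this paragraph can be written as an ordered policy in which the most conservative applicable response wins. This is a minimal sketch, not a safety-certified controller. The action names, the confidence thresholds, the severity ordering and the use of a single scalar confidence are all assumptions made for illustration. Model rollback is a fleet-management concern, and this sketch does not cover it.

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    SLOW_DOWN = "slow_down"
    ASK_FOR_HELP = "ask_for_help"  # hand the task to a remote or on-site human
    RETREAT_TO_SAFE_ZONE = "retreat"
    SAFE_STOP = "safe_stop"


def choose_action(confidence: float, sensor_failed: bool, has_redundant_sensing: bool,
                  fleet_link_up: bool, slow_below: float = 0.8,
                  help_below: float = 0.5) -> Action:
    """Pick a response, checking conditions from most to least severe (first match wins)."""
    # A failed sensor with no backup means the robot cannot judge whether it is safe.
    if sensor_failed and not has_redundant_sensing:
        return Action.SAFE_STOP
    # Without fleet management, move somewhere predictable rather than improvise.
    if not fleet_link_up:
        return Action.RETREAT_TO_SAFE_ZONE
    # Very low confidence: stop attempting the task and hand it off.
    if confidence < help_below:
        return Action.ASK_FOR_HELP
    # Degraded but redundant sensing, or moderate uncertainty: keep working slowly.
    if sensor_failed or confidence < slow_below:
        return Action.SLOW_DOWN
    return Action.CONTINUE


print(choose_action(0.95, False, False, True).name)  # prints CONTINUE
print(choose_action(0.65, False, False, True).name)  # prints SLOW_DOWN
print(choose_action(0.95, True, False, True).name)   # prints SAFE_STOP
```

Keeping the policy as a pure function of the observed state makes it straightforward to test exhaustively. That property matters more here than sophistication.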

Remote assistance will likely play a large role. Many “autonomous” systems use remote humans for exceptions, guidance or recovery. That is not cheating if disclosed and engineered properly. It may be the economically rational path while models improve. But hidden dependence on remote operators can distort claims about autonomy, labor savings and privacy.

Reliability also requires maintenance design. Robots need cleaning, calibration, part replacement, battery management, software patches and inspection. Customers will not accept constant specialist visits for basic problems. The maintenance model must be built into product design. A robot that is hard to service will struggle at scale.

BMW’s humanoid pilot is notable because the published figures include runtime, shifts, parts moved and steps. Those are the right kinds of metrics. They are still vendor and partner statements, not independent audits, but they move the conversation from spectacle toward operations.

The physical AI companies that win will look less like pure AI labs and more like operational engineering firms. They will obsess over logs, repairs, edge cases, worker feedback, facility constraints and boring failures. The future of physical AI will be built by teams that respect boredom.

Homes are the hardest market to automate

The home is emotionally attractive and technically brutal. People want robots that clean, fold laundry, cook, tidy, watch pets, assist older adults and handle chores. The addressable market sounds enormous. The problem is that homes are unstructured, private, cluttered, varied and full of fragile exceptions.

A factory task may use the same fixture thousands of times. A home robot may face a sock, a glass, a pet bowl, a child’s toy, a charging cable, a spilled drink, a narrow hallway, a moving dog and a user who gives vague instructions. Lighting changes. Furniture moves. Objects lack standard locations. Families have different preferences. A home is not a small factory. It is a living environment with low tolerance for mistakes.

1X’s NEO is one of the most visible attempts to move humanoids toward the consumer market. Its order page lists a $499 monthly subscription, a $20,000 early-access ownership option and U.S. deliveries starting in 2026. The company describes NEO as a home robot focused on utility and autonomy.

The early home robotics market will likely depend on constrained tasks. A robot may begin with fetching, basic tidying, teleoperated assistance, reminders, simple object movement or scripted routines. Full autonomy across household chores is much harder. Users may also have to prepare the environment, much as early robot vacuum users learned to remove cords and obstacles.

Privacy is the central consumer issue. A useful home robot needs cameras, microphones, maps and task history. It may see bedrooms, children, medication, documents, routines and visitors. If remote assistance is used, the privacy stakes rise. Companies must make clear when video leaves the device, who can view it, how data is stored, whether training uses household footage and how users can delete data.

Safety also looks different at home. A workplace can train staff and mark robot zones. A home has guests, children, pets and unpredictable behavior. A robot’s maximum speed, force and reach must be conservative. That conservatism may reduce usefulness. A machine strong enough to lift laundry baskets may also be strong enough to hurt someone if poorly controlled.

The economic case is not obvious. Households compare a robot with doing chores themselves, hiring occasional help or buying single-purpose appliances. A subscription robot must deliver enough reliable assistance every month. Early adopters may pay for novelty, but mainstream households will judge usefulness quickly.

The home may still become a major physical AI market, especially for aging populations and disability support. A robot that helps older adults remain independent, retrieves objects, monitors hazards or connects with caregivers could have high value. But those use cases demand high trust, privacy and safety. They may require medical or care-sector partnerships rather than consumer gadget launches.

Home robotics will likely lag industrial adoption by years. The technology will learn in warehouses, factories, hospitals and logistics before it becomes dependable enough for ordinary homes. The home is the prize people imagine first and the deployment environment physical AI should approach last.

Public spaces require social intelligence as well as machine intelligence

Public spaces are neither controlled factories nor private homes. They include sidewalks, hospitals, airports, malls, campuses, streets, parks and transit hubs. Robots and drones entering these spaces must behave in ways people understand. The challenge is not only collision avoidance. It is social navigation.

A delivery robot on a sidewalk must negotiate pedestrians, wheelchairs, dogs, strollers, cyclists, construction barriers and curb cuts. A hospital robot must move around patients, nurses, equipment and emergency situations. An airport robot must operate around crowds, luggage and security rules. A drone lowering a package near a yard must account for people nearby. Physical AI in public must be legible. People need to predict what the machine will do.

Legibility is partly design. Lights, sounds, movement patterns and stopping behavior signal intent. A robot that creeps awkwardly or freezes in the wrong place may be technically safe but socially disruptive. A robot that yields too late may scare people. A drone that sounds intrusive may create opposition even if it is safe.

Public-space physical AI also raises accessibility questions. Robots should not block wheelchair users, visually impaired pedestrians or emergency routes. Voice-only interaction may exclude some users. App-only control may exclude others. The built environment is already uneven; autonomous machines can either improve access or add barriers.

The regulatory environment is fragmented. Sidewalk robots may face municipal rules. Drones face aviation rules. Vehicles face transportation regulators. Public cameras face privacy laws. A company deploying across cities may need local partnerships and public engagement. Technical readiness does not equal civic permission.

Waymo’s robotaxi experience is relevant here because public roads are a high-stakes public space. Its safety data may show strong crash performance in operating areas, but cities also care about emergency response, congestion, curb management and public perception.

Drones face similar public-space issues in the air. The FAA’s proposal for beyond-visual-line-of-sight (BVLOS) operations addresses operational and aircraft safety, but communities will still debate noise, privacy and acceptable use. A medical drone route may earn support where a convenience delivery route does not. Public value matters.

Public spaces also test physical AI ethics. A robot may record bystanders who never consented. A smart camera network may track movement patterns. A drone may capture images over private property. Data minimization, local processing, blurring, retention limits and clear signage are not optional niceties. They are part of social license.

Physical AI systems also need emergency behavior. What should a robot do during a fire alarm, police activity, medical emergency or crowd surge? Can authorities stop or reroute it? Can it recognize emergency vehicles or first responders? Does it fail safely if communications drop? These are operational questions, not abstract ethics.

The public will not judge physical AI by benchmark scores. It will judge whether machines feel safe, useful, respectful and accountable. A technically strong system can still lose the public if it behaves like an intruder.

Drones, robots and vehicles are converging into fleets

The most important physical AI unit may not be the individual machine. It may be the fleet. A fleet learns from shared experience, coordinates tasks, schedules maintenance, routes around congestion, balances workload and improves from aggregate data. A single robot is a tool. A fleet is infrastructure.

Amazon’s DeepFleet announcement is a clear example. The foundation model is designed to coordinate the movement of the company’s robotic fleet, not simply control one robot. Improving travel time across a fleet by 10% is valuable because small improvements compound across millions of moves.
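A rough calculation shows why a fleet-level percentage matters. The sketch uses hypothetical values for fleet volume, average travel time per move and daily operating hours per robot. None of these figures come from Amazon. It converts a 10% travel-time reduction into robot-hours saved per day, and then into the number of robots' worth of capacity that frees up. The second step assumes that saved travel time can be fully reused for other work.

```python
def travel_savings(moves_per_day: int, avg_travel_seconds: float,
                   reduction: float, robot_hours_per_day: float) -> tuple[float, float]:
    """Return (robot-hours saved per day, equivalent robots freed up)."""
    saved_hours = moves_per_day * avg_travel_seconds * reduction / 3600
    return saved_hours, saved_hours / robot_hours_per_day


# Hypothetical fleet: 5 million moves a day, 60 s of travel each, robots running 20 h/day.
hours, robots = travel_savings(5_000_000, 60, 0.10, 20)
print(round(hours), round(robots))  # prints 8333 417
```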

Waymo’s robotaxi business is also fleet-based. Vehicles need maps, charging, cleaning, maintenance, remote assistance, incident response, software updates and service-area management. Drones are fleet businesses by default because routing, battery swapping, payload handling and compliance are shared systems. Humanoid robots in warehouses will need similar fleet tools.

Fleet learning is powerful because every machine becomes a sensor. If one robot encounters a blocked aisle, others can avoid it. If one drone detects wind patterns, routing can adjust. If one vehicle sees construction, maps can update. If one warehouse robot fails at a certain item type, the model can learn from the intervention.
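The blocked-aisle case fits in a few dozen lines. One robot reports an obstruction to a shared map, and every other robot's planner treats that cell as impassable until the report expires. The class and function names are hypothetical, and the warehouse is reduced to a small grid. Breadth-first search stands in for whatever planner a real fleet uses. The expiry time is also an assumption, included so that a cleared aisle is eventually used again.

```python
from collections import deque
from typing import Optional

Cell = tuple[int, int]


class SharedHazardMap:
    """Fleet-wide record of blocked grid cells reported by individual robots."""

    def __init__(self, ttl_seconds: float) -> None:
        self.ttl_seconds = ttl_seconds
        self._reports: dict[Cell, tuple[str, float]] = {}  # cell -> (robot_id, time)

    def report(self, cell: Cell, robot_id: str, now: float) -> None:
        self._reports[cell] = (robot_id, now)

    def blocked(self, now: float) -> set[Cell]:
        # Drop stale reports so an aisle that has been cleared is used again.
        self._reports = {
            cell: (rid, t) for cell, (rid, t) in self._reports.items()
            if now - t <= self.ttl_seconds
        }
        return set(self._reports)


def shortest_path(width: int, height: int, start: Cell, goal: Cell,
                  blocked: set[Cell]) -> Optional[list[Cell]]:
    """Breadth-first search on a 4-connected grid that avoids blocked cells."""
    came_from: dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk back through predecessors to rebuild the route.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            nx, ny = nxt
            if (0 <= nx < width and 0 <= ny < height
                    and nxt not in blocked and nxt not in came_from):
                came_from[nxt] = cell
                queue.append(nxt)
    return None  # goal unreachable with current hazards


hazards = SharedHazardMap(ttl_seconds=600)
hazards.report((2, 1), robot_id="robot_a", now=0)  # robot A finds the aisle blocked
detour = shortest_path(5, 3, (0, 1), (4, 1), hazards.blocked(now=10))   # robot B, soon after
direct = shortest_path(5, 3, (0, 1), (4, 1), hazards.blocked(now=700))  # report has expired
print(len(detour), len(direct))  # prints 7 5
```

Robot B never encountered the obstruction itself. It avoids the obstruction because robot A's report reached the shared map.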