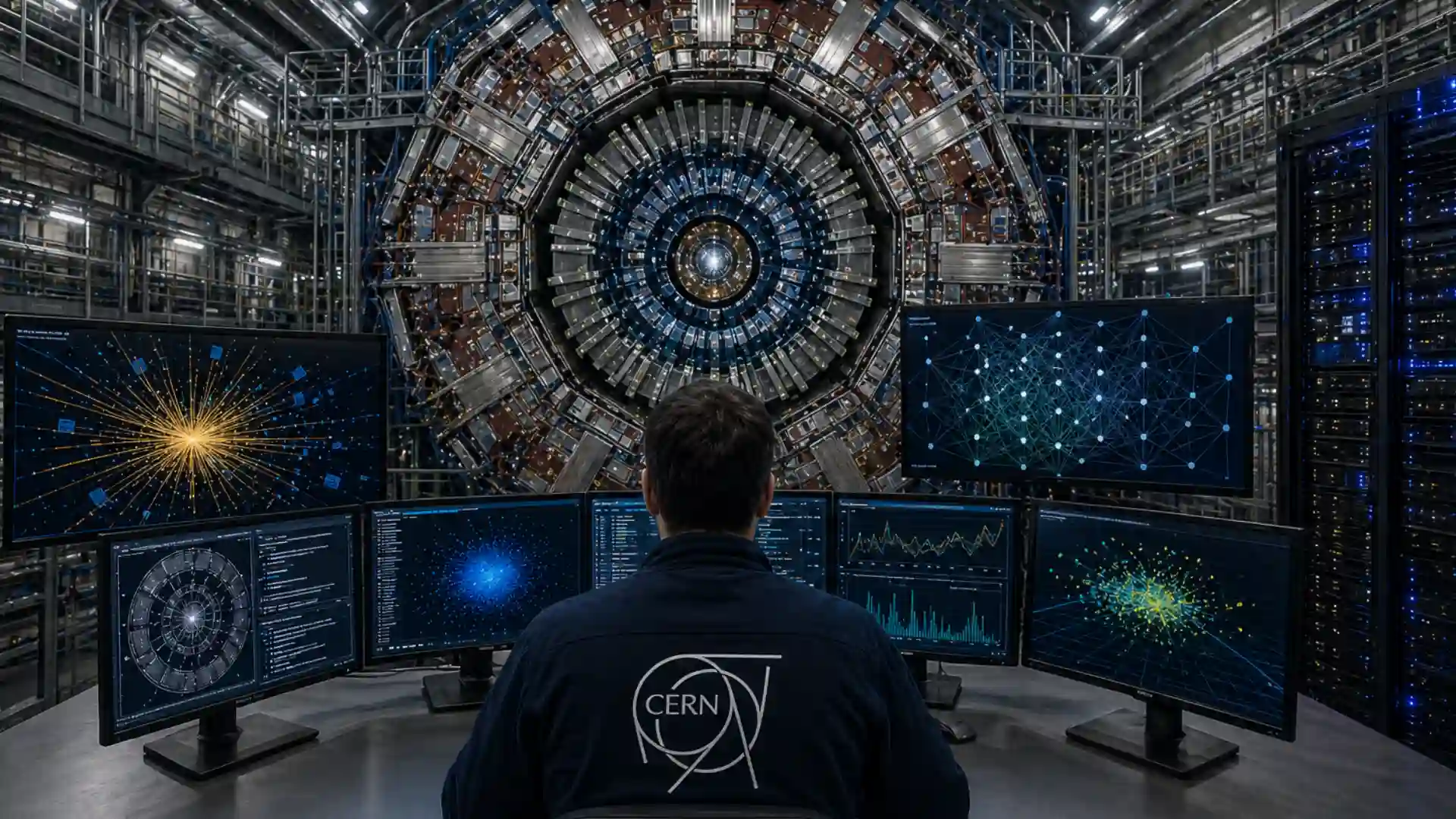

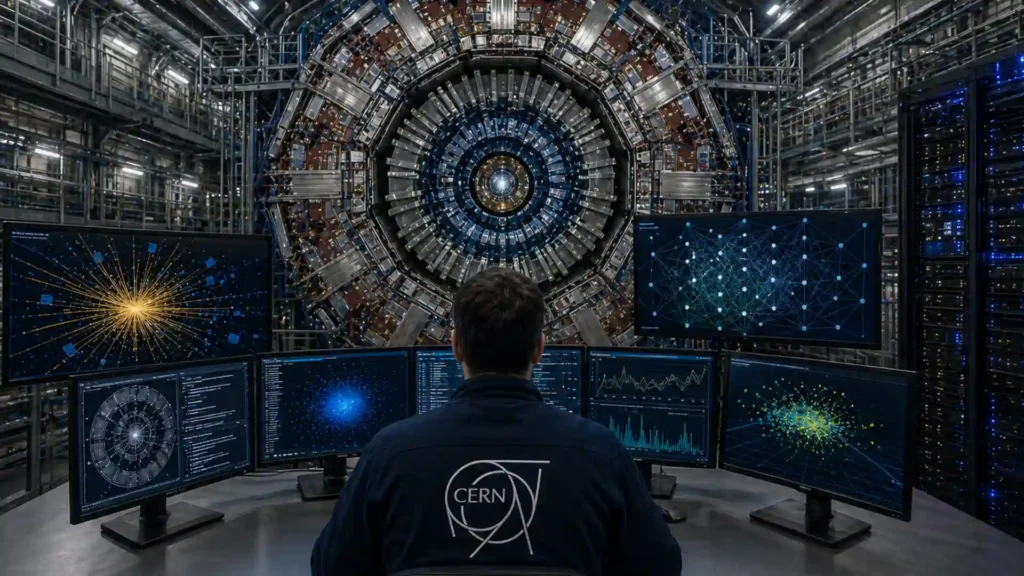

CERN does not use artificial intelligence as decoration. It uses AI because the Large Hadron Collider produces too much complexity for old methods to carry alone.


The LHC is a 27-kilometre ring of superconducting magnets, buried about 100 metres underground near Geneva, where beams of protons or ions are accelerated close to the speed of light and collided inside enormous detectors. Those collisions are not photographs of particles. They are dense patterns of electronic signals, tracks, energy deposits, timing information and statistical traces. CERN says its Data Centre stores more than 30 petabytes of LHC data each year, while more than 100 petabytes are permanently archived on tape. Even after heavy filtering, CERN’s storage systems processed roughly one petabyte per day during LHC Run 2.

That scale explains why AI at CERN is not a single project. It is a layer spreading through particle reconstruction, detector monitoring, trigger systems, simulation, accelerator controls, open data, medical transfer projects, computing infrastructure and governance. CERN’s own general AI principles now state that AI is present in devices, procured software, cloud services, individual tools and in-house research, and that its uses include data analysis, anomaly detection, simulation, predictive maintenance, accelerator performance and detector operations.

The public image of CERN still rests on magnets, tunnels, cryogenics and detectors the size of buildings. That image is accurate, but incomplete. The modern experiment is also made of algorithms. The signal from a rare Higgs boson decay, a subtle detector anomaly or an unexpected jet signature may appear first as a statistical deformation in a model’s output. AI is not replacing the detector. It is becoming part of the detector’s nervous system.

CERN’s AI story is therefore more sober and more interesting than the usual “AI will change everything” script. The strongest examples are not chatbots. They are graph neural networks that tag quark jets, autoencoders that spot detector faults, compressed neural networks that run on specialised hardware in nanoseconds, and machine-learning particle-flow algorithms that reconstruct collisions faster than conventional hand-built logic. Those systems are judged by a hard standard: they must survive contact with physics data, uncertainty, calibration, bias, detector ageing and human review.

AI at CERN begins with a brutal data problem

The LHC experiments face an awkward truth before any physics analysis begins: most collisions cannot be stored. CERN’s educational AI overview notes that particles may collide inside LHC detectors up to 40 million times per second, with each event generating about a megabyte of data before filtering. Storing everything would be absurd. The first AI problem is not “understanding the universe” in a poetic sense. It is deciding, under pressure, which events deserve to survive.
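A rough back-of-envelope calculation shows why. The sketch below simply multiplies the two figures quoted above; it assumes decimal units (1 MB = 10^6 bytes) and a constant rate, both simplifications made here for illustration.

```python
# Back-of-envelope estimate built only from the two figures quoted in the text.
COLLISIONS_PER_SECOND = 40_000_000   # "up to 40 million times per second"
BYTES_PER_EVENT = 1_000_000          # "about a megabyte" (decimal units assumed)
SECONDS_PER_DAY = 86_400

raw_bytes_per_second = COLLISIONS_PER_SECOND * BYTES_PER_EVENT
raw_terabytes_per_second = raw_bytes_per_second / 1e12
raw_petabytes_per_day = raw_bytes_per_second * SECONDS_PER_DAY / 1e15

print(f"{raw_terabytes_per_second:.0f} TB/s before filtering")
print(f"{raw_petabytes_per_day:.0f} PB/day before filtering")
```

Under those assumptions the unfiltered stream is tens of terabytes per second, thousands of times more than the roughly one petabyte per day that CERN’s storage systems handled during Run 2.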

ATLAS describes the same challenge from the detector side. Collision debris flies outward through the detector’s layered subsystems, which record paths, momentum and energy. The experiment uses an advanced trigger system to decide which events to record and which to ignore, followed by complex data-acquisition and computing systems for the stored events.

That trigger decision is one of the harshest computing environments in science. A model has little time, cannot rely on slow interpretive cycles and must respect hardware constraints. A beautiful algorithm that takes too long is useless. A fast model that misbehaves under changing detector conditions is dangerous. CERN’s AI systems must be fast, accurate, measurable and boringly reliable.

The need will become sharper with the High-Luminosity LHC. CERN says the HiLumi LHC should operate around 2030, increasing the number of collisions and allowing physicists to study rare processes with far larger datasets. CERN’s computing page states that HL-LHC storage and processing requirements are expected to be ten times greater than today.

This is the first reason AI matters so much at CERN: physics discovery now depends on computational selectivity. The more collisions CERN produces, the more it needs algorithms that distinguish information from noise without erasing the unknown.

AI is becoming part of the scientific instrument

A particle detector is not just metal and silicon. It is a chain of measurement, calibration, filtering, reconstruction and inference. AI fits into that chain because it can learn patterns that are difficult to write down as explicit rules.

CERN’s 2025 AI strategy discussion makes the institutional shift plain. CERN’s Director for Research and Computing, Joachim Mnich, wrote that machine-learning techniques have transformed particle-physics analyses and that CERN could no longer live without AI. The CERN-wide AI strategy approved in November 2025 sets goals around scientific discovery, productivity and reliability, talent, partnerships and societal impact.

That matters because large scientific institutions do not usually make a strategy around a fashionable tool unless the tool has become structurally important. CERN’s AI work had already existed in many parts of the organisation. The strategy is an attempt to coordinate it, reduce duplication, share infrastructure and set rules for responsible use.

The technical reason is simple. Classical algorithms work well when the physicist can describe the pattern in advance. Many LHC tasks no longer fit that shape. A collision event may involve hundreds or thousands of reconstructed objects. A quark or gluon becomes a jet of hadrons. Detector noise may evolve gradually over time. A new particle may not look like any signal a theorist predicted neatly enough for a narrow search.

AI gives CERN a way to model relationships rather than only thresholds. Graph neural networks can represent particles and detector hits as connected structures. Computer vision models can inspect calorimeter images. Transformers can classify full collision events. Autoencoders can learn ordinary detector behaviour and flag deviations. Compressed neural networks can be loaded onto hardware close to the data stream.

The important shift is not that CERN “uses AI”. Many organisations use AI. The important shift is that at CERN, AI is increasingly tied to measurement itself. It decides what data is kept, how particles are reconstructed, how detector quality is checked and where physicists should look for rare signals.

The trigger is where AI meets time pressure

The trigger system is the most dramatic place where AI enters CERN’s experimental workflow. It sits near the beginning of the data chain, where the collision rate is overwhelming and the decision budget is tiny.

A 2021 CERN article described work by CERN researchers and collaborators to speed up deep neural networks for selecting proton–proton collisions at the LHC. CERN noted that current trigger systems must choose whether to save or discard collision events in about a microsecond, and that researchers developed a method that reduced a deep neural network’s size by a factor of 50 while reaching processing times of tens of nanoseconds.

Those numbers explain the kind of AI CERN needs. A commercial AI system might tolerate a pause. A detector trigger cannot. The relevant model must often run on field-programmable gate arrays, chips configured for specific fast tasks. A model must be compressed, quantised, validated and tested against the physics it is supposed to protect.
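To make "quantised" concrete, here is a minimal sketch of symmetric uniform quantisation, one generic way to shrink a network's weights to a few bits each. It is not the method used in the CERN work described above, and the weight values are invented for illustration.

```python
def quantise(weights, bits):
    """Map floats to signed integer codes that share one scale factor."""
    levels = 2 ** (bits - 1) - 1            # e.g. 127 for 8 bits, 1 for 2 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / levels if max_abs > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantise(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


weights = [0.82, -0.31, 0.05, -0.77, 0.44]  # made-up example values

for bits in (8, 4, 2):
    codes, scale = quantise(weights, bits)
    restored = dequantise(codes, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print(f"{bits} bits: codes={codes}, max error={worst:.3f}")
```

Fewer bits mean smaller, faster arithmetic on the chip but a larger rounding error, which is why a quantised model has to be re-validated against the physics it is meant to protect.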

CERN openlab is also working on ultra-low-latency anomaly detection for CMS trigger selection. Its project targets deployment of real-time anomaly detection algorithms on FPGA devices, using a transformer-style machine-learning architecture adapted for hardware inference. The goal is to identify physics phenomena that standard model-driven trigger selections might miss.

This changes the logic of discovery. Traditional searches often start with a theory, then build event selections around the predicted signature. Anomaly detection asks a different question: does this event look unlike what we know, even if we do not yet know why?

That approach is powerful, but it is not magic. It raises hard validation problems. A model may find detector noise, simulation mismodelling or rare Standard Model effects rather than new physics. Human physicists still need to test the anomaly, cross-check it, calibrate it and decide whether it deserves scientific attention. AI can widen the net, but physics still decides what counts as evidence.

Reconstruction is shifting from hand-built rules to learned models

After an event is recorded, CERN experiments must reconstruct what happened. That means turning raw detector signals into a list of particles, energies, momenta, jets and missing energy. This step is central to nearly every measurement and search.

CMS has used a particle-flow algorithm for more than a decade. It combines information from different detector subsystems to identify particles produced in a collision. In February 2026, the CMS Collaboration reported that machine learning had been used for the first time to fully reconstruct LHC particle collisions. The new machine-learning-based particle-flow algorithm, known as MLPF, replaces much of the hand-crafted logic with a model trained on simulated collisions. CERN reported that it matched the traditional algorithm and, in some tests involving top-quark events, improved jet reconstruction precision by 10–20% in key momentum ranges.

That result is important because reconstruction is not a decorative post-processing step. Better reconstruction means cleaner measurements. A small improvement in jet momentum resolution or particle identification can affect Higgs studies, top-quark measurements, searches for dark matter candidates and many other analyses.

MLPF also matters computationally. CERN reported that the new algorithm can run efficiently on GPUs, while traditional algorithms often depend on CPUs. That makes it relevant to the broader hardware shift in scientific computing. LHC data processing is moving toward heterogeneous systems: CPUs, GPUs, FPGAs, specialised accelerators and distributed computing resources all working together.

The deeper point is methodological. A hand-built algorithm carries decades of physics expertise, but it also carries rigidity. A learned model can absorb complex correlations across detector layers. The risk is opacity. CERN’s task is to keep the physics discipline of the old approach while gaining the pattern-recognition power of the new one.

The winning model at CERN is not the most fashionable model. It is the model that improves a measurement while staying testable.

AI helps physicists search for particles they cannot name yet

Particle physics often searches for expected signals: a predicted decay channel, a new resonance at a mass range, a partner particle from a particular theory. That strategy remains essential. But CERN also uses AI for less scripted searches.

In 2024, CERN described how ATLAS and CMS were using machine-learning methods to search for unusual collision patterns that could indicate new physics. The article focused on jets, the sprays of particles produced by quarks and gluons. Researchers train AI systems to recognise known jet signatures, then look for atypical ones that may point to new interactions.

This is one of the most valuable AI roles in collider physics: searching without overcommitting to a single theory. It does not mean searching blindly. Physicists still define the dataset, model assumptions, control regions and statistical tests. But AI makes it easier to detect complex deviations that would be hard to encode as a simple selection rule.

CMS has also used computer vision methods in searches for hypothetical Higgs partner particles. In one search, CMS looked for signatures where a heavy partner particle would decay into lighter particles that produce overlapping photon pairs. Standard photon-identification tools struggle when photons overlap. CMS trained AI algorithms to distinguish those overlapping photon pairs from noise and estimate the mass of the originating particle. The search did not find evidence for the proposed new particles, but it set limits on the process.

Negative results matter. In particle physics, excluding a model region is real progress. AI makes such exclusions stronger when it expands sensitivity to hard signatures. CERN uses AI not only to find discoveries, but also to close doors more precisely.

Detector health is now a machine-learning problem

A detector fault does not announce itself politely. It may appear as a distorted pattern in one subdetector, a subtle timing change, a region of unusual energy deposits or a slow drift across many runs. Detecting these problems quickly protects the quality of the data.

CMS has deployed machine learning for data quality monitoring in its electromagnetic calorimeter, the ECAL, which measures the energy of electrons and photons. CERN reported in November 2024 that CMS developed and deployed an ML-based system to complement traditional monitoring. The system was deployed in the ECAL barrel in 2022 and in the endcaps in 2023. It uses an autoencoder-based anomaly detection method trained on good data to recognise normal detector behaviour and flag deviations, including anomalies that develop over time.

This is a less glamorous use of AI than hunting for new particles, but it may be just as important. Bad data can imitate rare physics. A detector issue that survives into analysis can waste months of work or distort a measurement. AI monitoring gives control-room teams an extra layer of pattern recognition, especially for anomalies that do not match predefined rules.

The distinction between AI and automation is useful here. Traditional monitoring often depends on thresholds: if a value moves beyond a limit, raise an alarm. Machine learning can learn the shape of ordinary behaviour across many channels and flag strange combinations. That makes it useful for high-dimensional detector systems where no single variable tells the whole story.
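The difference can be shown with a deliberately small example. In the sketch below, a straight-line fit stands in for the learned model (a real system such as the ECAL autoencoder is far richer), and the two channels, their limits and all readings are hypothetical.

```python
from statistics import mean, pstdev


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx


# Hypothetical "good" readings: channel b normally tracks about 2 x channel a.
good_a = [1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5, 5.0]
good_b = [2.1, 2.9, 4.0, 5.1, 5.9, 7.0, 8.1, 8.9, 10.0]

slope, intercept = fit_line(good_a, good_b)
residuals = [b - (slope * a + intercept) for a, b in zip(good_a, good_b)]
spread = pstdev(residuals)  # typical size of a normal deviation


def threshold_alarm(a, b):
    """Rule-based check: each channel is tested against its own fixed limits."""
    return not (0.0 <= a <= 6.0 and 0.0 <= b <= 12.0)


def learned_alarm(a, b, n_sigma=5.0):
    """Flag readings whose combination departs from the learned relationship."""
    return abs(b - (slope * a + intercept)) > n_sigma * spread


reading = (2.0, 9.0)  # each value is individually within limits
print("threshold alarm:", threshold_alarm(*reading))
print("learned alarm:  ", learned_alarm(*reading))
```

Neither value in the suspicious reading crosses its own limit, so the threshold check stays silent, while the learned relationship flags the pair as inconsistent with normal running.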

Human oversight remains central. The AI system does not “know” whether an anomaly is a hardware fault, calibration drift, rare detector condition or something else. It flags patterns. Experts interpret them. The best monitoring AI works like a skilled assistant with tireless attention, not like an independent authority.

Higgs physics gains sensitivity from graph networks and transformers

The Higgs boson is one of CERN’s greatest achievements, but the discovery in 2012 was not the end of the story. CERN explains that physicists now study how strongly the Higgs boson interacts with other particles, whether it is unique and whether it connects to deeper questions such as dark matter, matter–antimatter imbalance and its own self-interaction.

AI enters because many Higgs processes are rare and hard to separate from background events. In May 2025, CERN reported a CMS search for a Higgs boson decaying into charm quarks when produced with two top quarks. The search used machine-learning models for two major tasks: identifying charm jets with a graph neural network and separating Higgs signals from backgrounds with a transformer network. The charm-tagging algorithm was trained on hundreds of millions of simulated jets, and CMS reported the strongest limits yet on the interaction between the Higgs boson and the charm quark, improving previous constraints by about 35%.

ATLAS has also improved Higgs measurements with better flavour tagging. In 2024, ATLAS reported improved measurements of Higgs interactions with top, bottom and charm quarks, using a reanalysis of Run 2 data and improved jet tagging. ATLAS said sensitivity to H→bb and H→cc decays increased by 15% and a factor of three, respectively, while the H→cc decay remains too rare to observe directly with current data.

The physics reason is straightforward. Charm jets are hard. They resemble other jets, and the useful information is distributed across tracks, displaced vertices, energy deposits and event context. Graph networks are well matched to this kind of relational data because jets are not flat images; they are structured particle systems.
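As an illustration of what "structured particle system" means in practice, the sketch below builds a tiny graph from four invented particles and runs one untrained message-passing step. The features, the connection radius and the aggregation rule are all assumptions chosen for clarity; a real tagger uses learned functions and many more inputs.

```python
import math

# Toy jet constituents; every value here is invented for illustration.
particles = [
    {"pt": 40.0, "eta": 0.00, "phi": 0.00},
    {"pt": 25.0, "eta": 0.10, "phi": 0.05},
    {"pt": 10.0, "eta": -0.05, "phi": 0.15},
    {"pt": 5.0, "eta": 0.90, "phi": 1.20},   # far from the other three
]


def delta_r(a, b):
    """Angular distance between two particles, with phi wrapped to [-pi, pi]."""
    d_eta = a["eta"] - b["eta"]
    d_phi = math.remainder(a["phi"] - b["phi"], 2 * math.pi)
    return math.hypot(d_eta, d_phi)


def build_edges(nodes, radius=0.4):
    """Connect every pair of particles closer than `radius` (hypothetical cut)."""
    return {
        i: [j for j in range(len(nodes))
            if j != i and delta_r(nodes[i], nodes[j]) < radius]
        for i in range(len(nodes))
    }


def message_pass(nodes, edges):
    """One untrained step: own pt plus the mean pt of connected neighbours."""
    updated = []
    for i, node in enumerate(nodes):
        neighbours = edges[i]
        message = (sum(nodes[j]["pt"] for j in neighbours) / len(neighbours)
                   if neighbours else 0.0)
        updated.append(node["pt"] + message)
    return updated


edges = build_edges(particles)
updated = message_pass(particles, edges)
print(edges)
print(updated)
```

After one step each particle's value already reflects its neighbourhood, which is the kind of relational context a flat image or a fixed-length list does not carry naturally.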

The strategic value is larger. The High-Luminosity LHC will produce vastly more Higgs bosons. CERN estimates the HiLumi LHC could produce about 380 million Higgs bosons over its lifetime, compared with roughly 55 million produced since the start of the LHC. AI will be part of turning that statistical abundance into precision physics.

AI is changing flavour physics and matter–antimatter studies

CERN’s AI use is not limited to high-profile Higgs searches. It also reaches into flavour physics, where physicists study particles containing quarks such as beauty and strange quarks, and where tiny asymmetries can matter.

In April 2024, CERN reported that CMS had obtained the first evidence of CP violation in the decay of the strange beauty meson into a pair of muons and a pair of charged kaons. The analysis used a new flavour-tagging algorithm based on a graph neural network. That model gathered information from particles around the strange beauty meson and particles produced alongside it. CMS used about 500,000 decays from LHC Run 2 and combined the result with a previous Run 1 measurement. The combined result crossed the conventional 3-sigma threshold for evidence.
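For readers unfamiliar with the sigma convention, the sketch below converts a significance into the probability of a fluctuation at least that large, assuming a Gaussian test statistic. Whether an analysis quotes the one-sided or two-sided figure depends on how it is set up, so both are shown; this is a generic conversion, not a reproduction of the CMS statistical treatment.

```python
import math


def one_sided_p(n_sigma):
    """Probability of a Gaussian fluctuation above +n_sigma."""
    return 0.5 * math.erfc(n_sigma / math.sqrt(2.0))


def two_sided_p(n_sigma):
    """Probability of a Gaussian fluctuation beyond n_sigma in either direction."""
    return math.erfc(n_sigma / math.sqrt(2.0))


for n in (3, 5):
    print(f"{n} sigma: one-sided p = {one_sided_p(n):.2e}, "
          f"two-sided p = {two_sided_p(n):.2e}")
```

The gap between the 3-sigma and 5-sigma rows is why particle physics treats "evidence" and "observation" as different claims.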

This example shows why AI matters beyond speed. Flavour tagging is a classification problem, but a physically delicate one. The model must infer whether a particle began as a meson or antimeson, using indirect clues from the event environment. That inference feeds into a measurement of CP violation, a phenomenon tied to the deeper puzzle of why the observable universe contains more matter than antimatter.

The AI model is not discovering CP violation by itself. It is improving the experimental handle on a difficult identity problem. Better flavour tagging increases the usable information in the dataset. It lets CMS push a measurement closer to the precision of experiments designed specifically for flavour physics, such as LHCb.

This is a recurring pattern at CERN: AI makes a general-purpose detector behave, for a specific task, more like a specialised instrument. That matters because ATLAS and CMS were built to cover broad physics programmes. Machine learning gives them extra flexibility after the hardware is already built.

Simulation is the hidden bottleneck behind CERN AI

Most public discussions of AI at CERN focus on collision data. Simulation deserves equal attention. Particle physics depends on simulated events for training, calibration, background estimates, uncertainty studies and detector-design decisions. If simulation becomes too slow, every later stage suffers.

CERN openlab lists fast detector simulation among its active projects, alongside AI model registries, real-time data processing for triggers and other computing work. Its broader project portfolio reflects the fact that future LHC computing cannot be solved by one algorithm inside one experiment. It needs better workflows across simulation, reconstruction, storage and analysis.

The reason is physical. Detailed detector simulation tracks particle interactions through layers of material, electromagnetic showers, hadronic showers, magnetic fields and readout effects. That level of detail is expensive. Generative models and learned approximations may reduce costs, but only if they preserve the distributions physicists depend on.

This is where scientific AI differs from consumer AI. A photorealistic output is not enough. A fast simulated calorimeter shower must reproduce statistical structure, tails, correlations and uncertainties. A beautiful average may be useless if it erases rare behaviours. CERN’s simulation AI must be judged by physics fidelity, not visual plausibility.
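The "beautiful average" problem is easy to demonstrate with toy numbers. In the sketch below, a skewed sample plays the role of the detailed simulation and a symmetric sample, shifted to have exactly the same mean, plays the fast surrogate. Both distributions, the tail cut and the sample size are assumptions made purely for illustration.

```python
import random
from bisect import bisect_right
from statistics import mean

rng = random.Random(42)  # fixed seed so the toy numbers are repeatable

# Toy "detailed simulation": a skewed quantity with a long upper tail.
reference = [rng.expovariate(1.0) for _ in range(5000)]

# Toy "fast surrogate": symmetric shape, shifted so the means agree.
raw = [rng.gauss(0.0, 1.0) for _ in range(5000)]
offset = mean(reference) - mean(raw)
surrogate = [x + offset for x in raw]


def tail_fraction(sample, cut):
    """Fraction of the sample above `cut`."""
    return sum(1 for x in sample if x > cut) / len(sample)


def ks_statistic(sample_a, sample_b):
    """Largest gap between the two empirical cumulative distributions."""
    a, b = sorted(sample_a), sorted(sample_b)

    def cdf(values, x):
        return bisect_right(values, x) / len(values)

    return max(abs(cdf(a, x) - cdf(b, x)) for x in a + b)


print(f"mean difference: {abs(mean(reference) - mean(surrogate)):.2e}")
print(f"tail above 4.0:  {tail_fraction(reference, 4.0):.4f} "
      f"vs {tail_fraction(surrogate, 4.0):.4f}")
print(f"KS statistic:    {ks_statistic(reference, surrogate):.3f}")
```

The means agree to rounding error, yet the tail population and the overall shape differ clearly. A validation that stopped at the average would pass a surrogate that misrepresents exactly the rare behaviour an analysis may depend on.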

Simulation also connects to training data. The CMS MLPF reconstruction model is trained on simulated collisions. Higgs charm-tagging models use simulated jets. Anomaly searches use known data and simulated expectations. When a model learns from simulation, any mismatch between simulation and real detector response becomes part of the risk.

The strongest AI programmes at CERN therefore bind simulation, data and uncertainty together. They do not treat model training as a separate software exercise. They treat it as part of the measurement chain.

The HL-LHC makes AI a necessity, not an upgrade

The High-Luminosity LHC is the pressure point behind much of CERN’s AI work. The upgrade is designed to increase integrated luminosity by a factor of ten beyond the LHC design value, producing more collisions and far larger physics datasets. CERN’s 2026 HiLumi press release said the transformation begins with a four-year Long Shutdown 3 period and that the upgraded machine will vastly increase the volume of physics data.

More data sounds simple from outside. More data is not simple at CERN. More collisions mean more pileup: many interactions overlapping in the same detector readout. Reconstruction gets harder. Trigger decisions get harder. Storage gets harder. Simulation requirements grow. Detector monitoring becomes more demanding. Statistical power rises only if the computing chain can keep up.

CERN’s computing page states that HL-LHC storage and processing needs are expected to be ten times greater than today. That single sentence is enough to explain the strategic urgency. Buying ten times more of everything is not a realistic answer. CERN needs smarter reconstruction, faster inference, better compression, heterogeneous hardware and AI methods that extract more information from each stored event.

The Inter-experimental Machine Learning Working Group describes the LHC as producing petabytes of data per day across ALICE, ATLAS, CMS and LHCb, with roughly 10,000 collaborators. It also notes that ML applications range from very fast inference measured in microseconds to slower inference taking many seconds.

That range matters. AI at CERN is not one timing problem. It spans nanosecond trigger inference, control-room monitoring, offline reconstruction, large-scale training, open-data analysis and long-running simulation workflows. A CERN AI strategy must cover all of those without pretending that a model used in one place is suitable everywhere.

CERN openlab connects physics with industrial computing

CERN openlab plays a central role in turning AI from experiment-level ingenuity into shared computing infrastructure. CERN openlab describes itself as a public-private collaboration that accelerates scientific computing by connecting CERN with technology companies and research centres. Its partners include major computing, storage, hardware and research organisations.

This matters because CERN’s computing problems often arrive before commercial tools are ready for scientific use. The lab needs to test GPUs, storage systems, accelerators, cloud platforms, model registries, exascale workflows and AI deployment tools under physics conditions. A system that works for a web company may fail under LHC data formats, latency constraints or reproducibility demands.

CERN openlab’s AI project list includes model registries, deep-learning architecture evaluation, exascale AI and simulation, fast detector simulation and real-time trigger data processing.

The industrial connection is not a one-way transfer from tech companies to CERN. CERN often stress-tests technologies in ways that expose weaknesses early. Particle physics has extreme needs: huge datasets, long preservation times, distributed teams, strict provenance, mixed hardware and a culture of peer review. If an AI infrastructure works under CERN conditions, it has passed a severe test.

CERN openlab also supports knowledge transfer beyond high-energy physics. Its 2021 review noted a collaboration with UNOSAT using machine-learning methods to improve satellite imagery used for humanitarian interventions.

That pattern is typical of CERN. The laboratory builds tools for fundamental physics, then the methods travel: medical imaging, federated learning, satellite analysis, distributed computing, detector technology and open science infrastructure.

Model registries and sustainable AI are becoming part of the lab bench

Scientific AI does not end when a model gives a good score in a notebook. CERN needs to know which model was trained, on what data, with what configuration, at what cost, for what purpose and under what validation. That makes model registries and energy tracking more than administrative extras.
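A registry entry can be thought of as a small, immutable record that answers those questions. The sketch below is a hypothetical data structure, not the schema of any CERN or vendor registry; every field name and value is invented for illustration.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class ModelRecord:
    """Hypothetical registry entry: what was trained, on what, and at what cost."""
    name: str
    purpose: str
    training_dataset: str
    config: dict
    training_cost_kwh: float
    validation_summary: str

    def fingerprint(self) -> str:
        """Short hash of the full record, so any change is detectable."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()[:16]


# All values below are placeholders.
record = ModelRecord(
    name="example-jet-tagger",
    purpose="illustrative classification model",
    training_dataset="simulated-sample-v1",
    config={"layers": 4, "learning_rate": 0.001},
    training_cost_kwh=12.5,
    validation_summary="checked against a held-out simulated sample",
)

# Retraining on an updated sample produces a new, distinguishable record.
retrained = replace(record, training_dataset="simulated-sample-v2")

print(record.fingerprint())
print(retrained.fingerprint())
```

Hashing the whole record means a changed training sample, configuration or validation note yields a different fingerprint, which gives downstream analyses something concrete to cite.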

CERN openlab’s Oracle AI Models Registry in the Cloud project focuses on demonstrating an ML model catalogue, evaluating the energy footprint of ML training and testing whether large foundation models can be used in this environment. The project responds to growing AI complexity at CERN and the need for model sharing, reuse, performance tracking and cost control.

That is a quiet but important development. In science, reproducibility is not a nice-to-have. A model that influences a measurement must be traceable. If a detector calibration changes, if a training sample is updated, if a model is retrained or compressed, physicists need to understand the consequences.

Energy also matters. CERN’s AI principles include sustainability as a formal principle: AI use should be assessed with the goal of reducing environmental and social risks and improving CERN’s positive impact.

The discussion of AI energy use is often shallow elsewhere. At CERN it becomes operational. Large training jobs consume electricity, hardware and storage. But AI may also save computing resources if it speeds reconstruction, improves filtering or replaces expensive simulation. The real question is not whether AI consumes energy. It is whether the scientific gain justifies the full computing cost.

CERN AI uses across the experimental chain

| Area of use | Typical AI method | Scientific role |
|---|---|---|
| Trigger selection | Compressed neural networks, FPGA inference, anomaly detection | Select rare or unusual events fast enough for real-time data taking |
| Reconstruction | Machine-learning particle flow, graph models, GPU inference | Turn raw detector signals into particles, jets and event quantities |
| Detector monitoring | Autoencoders and anomaly detection | Find detector faults, drift and subtle quality problems |
| Physics analysis | Graph neural networks, transformers, computer vision | Separate rare signals from backgrounds and improve precision |
| Simulation and computing | Generative models, model registries, HPC workflows | Reduce bottlenecks, track models and manage scientific AI at scale |

This table compresses a larger point: CERN’s AI is not one application. It appears wherever the experiment must decide, reconstruct, monitor, simulate or interpret under extreme constraints.

High-performance computing is now part of the AI story

CERN’s AI future is tied to high-performance computing. Training and deploying models for LHC physics requires access to GPUs, storage systems, fast interconnects, distributed workflows and software that can survive production.

CERN openlab’s collaboration with the Simons Foundation focuses on applied multidisciplinary AI on high-performance computing. The project aims to support scalable machine-learning workflows for large-scale scientific data analysis, with emphasis on high-energy physics event reconstruction. It works on data access, storage, training, inference and machine-learned reconstruction methods that can adapt to changing detectors and computing architectures.

The CoE RAISE project is another example. CERN openlab says CERN’s role focuses on improving methods for reconstructing particle-collision events at the upgraded HL-LHC, where exabytes of data each year create severe computing demands. The project works on modular systems, heterogeneous architectures and wider use of ML and AI methods for reconstruction and classification.

This is where AI at CERN becomes a systems problem. A model is only useful if the surrounding software can feed it data, run it reliably, store outputs, monitor failures and preserve provenance. The SciPy 2025 paper on ML inference in high-energy physics notes that HL-LHC collision rates and data volumes will increase sharply, placing greater demands on fast and reliable machine-learning inference. It highlights latency constraints, hardware tuning and integration with large-scale computing infrastructure.

CERN’s AI bottleneck is rarely just the neural network. It is the full path from detector signal to validated physics result.

Open data gives machine learning a public test ground

CERN’s open-data culture matters for AI because machine-learning research needs real scientific datasets, not just curated toy examples. CMS has made major open-data releases, including data and simulation samples for ML research.

In 2019, CMS released its fourth batch of open data, bringing its open-data volume to more than 2 PB and providing open access to 100% of its 2010 proton–proton collision research data. CERN said the release included samples aimed at the growing use of machine learning in high-energy physics.

Open data does three useful things for AI. It lets researchers outside CERN test methods on realistic collider data. It creates education material for students and data scientists. It also makes the field less closed, because algorithmic progress does not depend only on membership in a major experiment.

There are limits. Open data is not the same as the current internal data used for cutting-edge analyses. Detector calibrations, reconstruction formats and collaboration workflows are complex. But the principle matters. AI in science improves when real data is available for challenge, criticism and reuse.

Open data also supports semantic visibility for CERN’s work. Search engines, AI systems and academic tools can connect CMS datasets, ML benchmarks, papers and software. That makes CERN’s AI ecosystem easier to discover and cite. In an era where AI systems answer scientific questions directly, structured open knowledge becomes part of scientific communication.

CERN’s AI work also reaches medicine and society

CERN’s AI story extends beyond collider physics through knowledge transfer. One strong example is CAFEIN, CERN’s federated learning platform.

CERN reported in 2025 that CAFEIN was initially developed to detect accelerator anomalies and is now used to diagnose and predict brain pathologies in stroke-related projects. The same CERN article said CAFEIN uses a decentralised and secure approach to train machine-learning algorithms without exchanging confidential data.

CERN’s CAFEIN website describes it as a federated AI platform for secure collaborative machine learning across institutions and data domains. Its TRUSTroke page says CERN is responsible for the federated learning platform used to create AI models, with work focused on software security and machine-learning privacy.

Federated learning is a natural fit for medical AI because hospitals cannot freely pool sensitive patient data. A model can be trained across sites while keeping source data local. This is not only a privacy feature; it may also improve model generality by learning from different populations and instruments.
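The core loop can be sketched with federated averaging, a widely used generic scheme in which each site trains locally and only model parameters are shared. This is not a description of how CAFEIN is implemented. The two sites, their noise-free toy data (generated from y = 1 + 2x so the expected answer is known) and the hyperparameters are all invented for illustration.

```python
def local_update(weights, data, lr=0.1, steps=20):
    """One site's training: gradient descent on y = w0 + w1 * x, local data only."""
    w0, w1 = weights
    n = len(data)
    for _ in range(steps):
        g0 = 2 / n * sum((w0 + w1 * x - y) for x, y in data)
        g1 = 2 / n * sum((w0 + w1 * x - y) * x for x, y in data)
        w0, w1 = w0 - lr * g0, w1 - lr * g1
    return (w0, w1)


def federated_average(updates):
    """Server step: average the site models, weighted by local sample count."""
    total = sum(n for _, n in updates)
    return tuple(sum(w[i] * n for w, n in updates) / total for i in range(2))


# Hypothetical sites; the raw (x, y) pairs never leave their own list.
sites = {
    "site_a": [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)],
    "site_b": [(0.5, 2.0), (1.5, 4.0), (2.5, 6.0), (3.0, 7.0)],
}

global_weights = (0.0, 0.0)
for _ in range(50):
    updates = [(local_update(global_weights, data), len(data))
               for data in sites.values()]
    global_weights = federated_average(updates)

print(f"intercept = {global_weights[0]:.3f}, slope = {global_weights[1]:.3f}")
```

Only the two-number model crosses the boundary between sites in each round. Real deployments add protections on top of this, since shared parameters can still leak information about the underlying data.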

The CERN link is not accidental. Accelerator operations and hospital networks both involve distributed systems, sensitive data, anomaly detection and reliability demands. A method built for one complex system can travel to another, provided the domain experts rebuild the validation around the new use case.

This is where CERN’s public value becomes visible beyond physics. AI developed under the discipline of particle accelerators and detectors can support medicine, environmental work and humanitarian analysis. The transfer works because CERN’s core skill is not only building machines. It is building trustworthy measurement systems.

Governance matters because scientific AI must stay auditable

AI at CERN carries risk. Some risks are familiar from other sectors: privacy, cybersecurity, bias, opacity, misuse, legal compliance and environmental cost. Some are specific to science: irreproducible results, hidden simulation bias, overfitted selections, undocumented models and degraded human expertise.

CERN’s general AI principles address these risks directly. They cover transparency and explainability, responsibility and accountability, lawfulness, fairness, safety and security, sustainability, human oversight, data privacy and a restriction to non-military purposes. They also state that human responsibility must not be displaced and that AI outputs must be critically assessed and validated by humans.

Those principles are not decorative. They match the way particle physics earns trust. A discovery claim must survive internal review, independent cross-checks, statistical scrutiny and external publication. If AI enters the measurement chain, the AI must be documented well enough for that culture to keep working.

The challenge is that some modern models are hard to interpret. A transformer classifier may separate a Higgs signal from background better than a simpler method, but physicists still need to know whether it learned physics or artefacts. A graph neural network may improve flavour tagging, but its uncertainties must be propagated. An anomaly detector may flag strange events, but scientists must avoid mistaking detector effects for new particles.

CERN’s strongest AI principle is not “use AI”. It is “use AI without weakening scientific accountability”. That is why strategy, governance and training sit beside algorithms.

Human expertise is moving upstream

The fear that AI will replace physicists misunderstands the work. AI may reduce some manual selection and reconstruction labour, but it pushes human expertise upstream into problem design, validation, uncertainty modelling and interpretation.

A physicist still decides what constitutes a signal region, which control samples are credible, how simulation mismodelling is handled, how systematic uncertainties are assigned and whether an apparent anomaly deserves attention. An AI model can rank, classify, reconstruct or compress. It cannot decide what nature has revealed.

The best CERN AI work combines learned pattern recognition with old scientific habits: blinding, calibration, independent cross-checks, control regions, alternative models, systematic variations and peer review. The human role becomes less about writing every rule by hand and more about designing a measurement that remains honest when a model learns the rules.

This also affects training. CERN’s AI strategy names talent as a goal. The next generation of physicists must understand accelerators and detectors, but also ML inference, GPUs, model registries, statistical validation and software preservation. CERN openlab’s summer student programme already includes projects in AI, data science, digital twins, storage and software workflows.

The cultural shift is real. A physicist who treats AI as a black box will be weaker. A data scientist who ignores detector physics will also be weaker. CERN needs people who can stand in both worlds.

The most important AI at CERN is often invisible

The public tends to notice AI when it produces a striking image, a conversational answer or a dramatic claim. CERN’s most important AI may never look dramatic. It may be a compressed neural network inside a trigger, a model registry entry, a GPU reconstruction workflow, a detector anomaly map or a flavour-tagging score inside a statistical fit.

That invisibility is a strength. Scientific infrastructure should not seek spectacle. It should seek accuracy, speed, reliability and traceability. CERN’s AI will matter most when it quietly increases the amount of trustworthy physics extracted from each collision.

The next decade will test whether this approach works at HL-LHC scale. The upgraded machine will produce more data, more pileup, more Higgs bosons and more pressure on computing. AI will be judged by whether it improves measurements, preserves rare signals, reduces bottlenecks and supports discoveries that would otherwise stay buried.

CERN’s AI revolution is not a replacement for the Large Hadron Collider. It is the software intelligence needed to keep the collider scientifically readable.

FAQ about how CERN uses AI

CERN uses AI for event selection, particle reconstruction, detector monitoring, anomaly detection, simulation, accelerator operations, computing workflows and some administrative uses. The most scientifically important applications sit inside the experimental chain, where AI helps process huge collision datasets and extract reliable physics signals.

CERN needs AI because the LHC produces far more data and complexity than traditional rule-based methods can handle alone. AI helps with tasks where patterns are high-dimensional, subtle or too fast for manual inspection, such as trigger decisions, jet tagging and detector anomaly detection.

AI does not make discoveries on its own. It may help select, reconstruct or classify events, but physicists still define the measurement, validate the model, estimate uncertainties and decide whether evidence is scientifically credible. CERN’s own AI principles require human oversight and accountability.

AI is used to improve fast event selection. Trigger systems decide which collisions are recorded and which are discarded. CERN researchers have developed compressed neural networks and FPGA-based inference methods that can run within extremely tight time budgets.

Machine-learning particle flow is a method for reconstructing full collision events using a trained model rather than a long chain of hand-built rules. CMS has shown that its MLPF approach can reconstruct LHC collisions quickly and, in some tests, more precisely than traditional methods.

ATLAS and CMS use AI to search for unusual collision patterns, especially complex jet signatures and overlapping photon signatures. Some AI methods are trained to recognise known physics and then flag events that look atypical.

Anomaly detection refers to AI systems that learn normal behaviour and flag deviations. CERN uses it both in physics searches and detector monitoring. CMS has deployed an autoencoder-based system to monitor its electromagnetic calorimeter.
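The "learn normal behaviour, flag deviations" pattern can be shown in a few lines. This is a minimal sketch and not the CMS system: it swaps the autoencoder for simple per-channel statistics, and the channel readings, the threshold and all function names are hypothetical.

```python
from statistics import mean, stdev
from typing import Dict, List


def fit_baseline(normal_runs: List[List[float]]) -> List[Dict[str, float]]:
    """Learn each channel's mean and spread from runs known to be good."""
    channels = zip(*normal_runs)  # regroup readings channel by channel
    return [{"mean": mean(ch), "std": stdev(ch)} for ch in channels]


def anomaly_scores(baseline: List[Dict[str, float]],
                   reading: List[float]) -> List[float]:
    """Score each channel by its distance from normal, in standard deviations."""
    return [abs(x - c["mean"]) / c["std"] for x, c in zip(reading, baseline)]


def flag_channels(baseline: List[Dict[str, float]], reading: List[float],
                  threshold: float = 4.0) -> List[int]:
    """Return the indices of channels whose score exceeds the threshold."""
    scores = anomaly_scores(baseline, reading)
    return [i for i, s in enumerate(scores) if s > threshold]


# Hypothetical readings for three channels across five good runs
GOOD_RUNS = [
    [10.1, 20.2, 29.8],
    [9.9, 19.8, 30.1],
    [10.0, 20.0, 30.0],
    [10.2, 20.1, 29.9],
    [9.8, 19.9, 30.2],
]

baseline = fit_baseline(GOOD_RUNS)
print(flag_channels(baseline, [10.0, 20.1, 30.0]))  # healthy reading -> []
print(flag_channels(baseline, [10.0, 25.0, 30.0]))  # channel 1 drifts -> [1]
```

An autoencoder follows the same logic with a learned model in place of the mean and spread: it is trained on good data, and a large reconstruction error plays the role of the score.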

AI improves Higgs studies by improving jet tagging, event classification and background separation. CMS has used graph neural networks and transformer models in difficult Higgs-to-charm searches, while ATLAS has improved sensitivity through better flavour tagging.

Graph neural networks are useful for particle systems where relationships matter. CERN experiments use them for tasks such as jet tagging and flavour tagging, where the identity of a particle or jet depends on connected tracks, nearby particles and event structure.

CERN’s AI principles mention productivity uses such as drafting, translation, coding assistants and workflow automation. In scientific workflows, CERN’s most important AI is often not conversational AI, but specialised machine learning for reconstruction, triggers, monitoring and analysis.

CERN openlab connects CERN researchers with technology companies and research institutions to develop computing methods for science. Its AI work includes model registries, high-performance computing, fast simulation, trigger processing and scalable machine-learning workflows.

The High-Luminosity LHC will produce more collisions and much more data. CERN expects storage and processing needs to be about ten times greater than today. AI is needed to keep reconstruction, simulation, filtering and analysis scientifically manageable.

CERN uses AI for anomaly detection, predictive maintenance and monitoring of complex systems such as its accelerators. CAFEIN, CERN’s federated learning platform, was initially developed for accelerator anomaly detection before being adapted for medical projects.

CAFEIN is a CERN-developed federated AI platform for secure collaborative machine learning. It allows models to be trained across institutions or data domains without moving sensitive source data between sites.

CERN has adapted AI and federated learning methods for medical projects, including stroke-related work. CAFEIN supports privacy-aware model training across healthcare institutions, where patient data cannot be freely centralised.

CERN needs AI governance because scientific AI must remain transparent, auditable and accountable. A model used in a measurement must be documented, validated and checked for bias, privacy, security and scientific reliability.

AI will not replace physicists. It changes the work they do, but it does not replace scientific judgment. Physicists still design analyses, validate models, interpret results and decide whether a signal meets the standards of evidence.

The biggest scientific risk is trusting a model that has learned detector artefacts, simulation mismatches or biased patterns rather than real physics. CERN reduces this risk through validation, human oversight, control samples and uncertainty studies.

CERN’s AI must operate under extreme scientific constraints: huge data volumes, strict latency limits, long-term reproducibility, detector-specific physics, uncertainty propagation and peer-reviewed evidence. Accuracy alone is not enough; the model must be traceable and scientifically defensible.

The strategy will succeed if AI improves physics results, keeps HL-LHC computing manageable, protects data quality, supports reliable operations and produces methods that remain open, ethical and auditable.

Author:

Jan Bielik

CEO & Founder of Webiano Digital & Marketing Agency

This article is an original analysis supported by the sources cited below.

General principles for the use of AI at CERN

CERN’s official principles for responsible AI use across research, technical operations, productivity and governance.

Why we need a CERN-wide AI strategy

CERN’s explanation of its organisation-wide AI strategy and its goals for science, reliability, talent and partnerships.

Building CERN’s AI Strategy

CERN’s report on the AI Steering Committee, governance work, existing AI initiatives and the CAFEIN federated learning project.

AI at CERN

CERN Sparks overview of how AI is used for data handling, beam operations, detector work and scientific analysis.

The Large Hadron Collider

CERN’s official explainer on the LHC, its 27-kilometre ring, superconducting magnets and particle collisions.

HiLumi LHC

CERN’s official page on the High-Luminosity LHC and its goal of producing far larger datasets for rare-process physics.

Computing

CERN’s overview of computing challenges, including the expected tenfold increase in storage and processing needs for the HL-LHC.

Facts and figures about the LHC

CERN’s FAQ with core figures on LHC data flow, storage, archiving and operational scale.

Storage

CERN’s explanation of its long-term storage systems, tape archives and data volumes from LHC runs.

iml.web.cern.ch

The Inter-experimental Machine Learning Working Group site describing the LHC machine-learning community, use cases and shared resources.

Machine learning to reveal more about LHC particle collisions

CERN’s report on CMS using machine learning for full particle-flow reconstruction of LHC collisions.

CMS develops new AI algorithm to detect anomalies

CERN’s article on CMS deploying autoencoder-based anomaly detection for ECAL data-quality monitoring.

How can AI help physicists search for new particles?

CERN’s overview of ATLAS and CMS using machine learning to search for unusual jet signatures and possible new physics.

AI enhances Higgs boson’s charm

CERN’s report on CMS using graph neural networks and transformers in a Higgs-to-charm search.

Probing matter–antimatter asymmetry with AI

CERN’s article on CMS using graph neural networks for flavour tagging in a CP-violation measurement.

Speeding up machine learning for particle physics

CERN’s report on ultra-fast compressed neural networks for LHC trigger decisions.

Anomaly Detection for Ultra Low Latency Event Selection at the LHC

CERN openlab project page on transformer-based anomaly detection for real-time CMS trigger systems.

CERN openlab

CERN openlab’s main site describing its industry and research collaborations for scientific computing.

Our projects

CERN openlab project index listing AI model registries, fast simulation, real-time data processing and other computing initiatives.

Oracle AI Models for Registry in the Cloud

CERN openlab project on model catalogues, ML training efficiency, energy footprint and foundation-model evaluation.

Simons Foundation Applied Multi-Disciplinary AI on High-Performance Computing

CERN openlab project on scalable AI workflows for high-energy physics event reconstruction and HPC systems.

Members of ‘CoE RAISE’ EU project developing AI approaches for next-generation supercomputers meet at CERN

CERN openlab article on AI, exascale computing and HL-LHC event reconstruction challenges.

CMS releases open data for Machine Learning

CERN’s report on CMS open data releases designed partly to support machine-learning research and education.

CMS collaboration explores how AI can be used to search for partner particles to the Higgs boson

CERN’s article on CMS using computer vision methods to search for overlapping photon signatures.

ATLAS probes Higgs interaction with the heaviest quarks

CERN’s report on ATLAS improving Higgs interaction measurements with updated analysis and flavour-tagging methods.

Challenges and Implementations for ML Inference in High-energy Physics

SciPy Proceedings paper surveying machine-learning inference methods, latency constraints and deployment challenges in high-energy physics.

HiLumi LHC full-scale tests start

CERN press release on full-scale HiLumi LHC testing and the coming transformation of the LHC.

Trustworthy AI For Improvement of Stroke Outcomes

CAFEIN project page describing CERN’s role in federated learning for TRUSTroke and privacy-aware medical AI.

Federated learning

CAFEIN page describing CERN’s federated AI platform for secure collaborative machine learning.