SpaceX’s claim that it had launched 32,000 Linux computers into space for Starlink internet sounded, at first, like a piece of hacker folklore that had escaped into aerospace reporting. Most people still picture satellites as sealed, rare, almost handcrafted machines. One satellite, one mission, one slow software cycle, one careful operational life. The Starlink number pushed against that mental model. Tens of thousands of Linux nodes were not riding inside a ground data center. They were circling Earth, inside a broadband network built to behave less like a classic spacecraft program and more like a constantly updated distributed system.

The headline was stranger than the architecture

The figure came from SpaceX engineers during a 2020 Reddit AMA held by the company’s software team. Matt Monson, then leading Starlink software, said each 60-satellite launch contained more than 4,000 Linux computers, and that the constellation already had more than 30,000 Linux nodes and more than 6,000 microcontrollers in space. Josh Sulkin added that SpaceX ran Linux with the PREEMPT_RT patch for better real-time behavior, used its own kernel and toolchain rather than a third-party distribution, and kept the kernel mostly close to upstream except for custom drivers.

That is the detail that makes the story worth revisiting. The headline number is not just trivia about Linux. It exposes a deeper shift in spacecraft design. Starlink is not simply a fleet of satellites that happens to carry computers. It is an orbital network whose software, compute nodes, telemetry systems, routing decisions, update pipelines, safety logic, and failure handling are the product. The radio hardware matters. The phased-array antennas matter. The propulsion system matters. Yet the service customers experience as “internet from space” is produced by the interaction of code, timing, radio physics, orbital geometry, automation, and ground infrastructure.

The 32,000-computer figure also had a narrow meaning. It was a snapshot from 2020, when SpaceX had launched roughly 480 Starlink satellites. It should not be casually treated as a current verified total. Starlink’s fleet has grown by an order of magnitude, and the hardware generations have changed. Public sources now track Starlink as a constellation of more than 10,000 active satellites, while SpaceX has moved through V1, V1.5, V2 Mini, direct-to-cell variants, and plans for larger V3 spacecraft. A current Linux-node count has not been published with the same clarity. The honest way to read the 32,000 number is as a window into SpaceX’s architecture, not as a live counter.

Even as a historical number, it matters. It showed that SpaceX had already put a dense compute fabric into orbit before most people understood Starlink as more than “satellite broadband.” The company was not sending a few exotic processors into space for command and control. It was launching many small computers per satellite, tied together by a software model that borrowed from flight systems, embedded Linux, cloud operations, radio networks, and modern continuous deployment.

That mix is why the story has aged well. Starlink has become larger, more capable, more politically visible, more contested, and more commercially entrenched. Yet the basic lesson from that AMA remains sharp: the future of satellite internet is not only measured in launch cadence, antenna gain, spectrum licenses, or megabits per second. It is measured in how well thousands of moving computers can behave as one coherent network.

The 32,000 number came from one moment in Starlink’s buildout

The arithmetic behind the 32,000-computer headline was simple, but it needs careful handling. In June 2020, SpaceX engineers said that each launch of 60 Starlink satellites carried more than 4,000 Linux computers. Divide 4,000 by 60 and the result is roughly 67 Linux computers per satellite, as a lower bound. At that point, SpaceX had launched eight batches of 60 operational Starlink satellites, putting the constellation near 480 satellites. Reporting at the time connected those two facts and arrived at roughly 32,000 Linux computers in orbit.
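
The arithmetic can be checked in a few lines. This is only a restatement of the figures quoted above; because the AMA said "more than 4,000" per launch, every result is a lower bound rather than an exact count.

```python
# Figures quoted in the 2020 AMA and in reporting at the time.
LINUX_COMPUTERS_PER_LAUNCH = 4_000  # "more than 4,000", so treat as a lower bound
SATELLITES_PER_LAUNCH = 60
LAUNCHES = 8                        # batches of 60 operational satellites by mid-2020

per_satellite = LINUX_COMPUTERS_PER_LAUNCH / SATELLITES_PER_LAUNCH
satellites = SATELLITES_PER_LAUNCH * LAUNCHES
total = LINUX_COMPUTERS_PER_LAUNCH * LAUNCHES

print(f"{per_satellite:.1f} Linux computers per satellite (lower bound)")
print(f"{satellites} satellites, about {total:,} Linux computers")
```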

That number surprised people because it broke the usual scale expectation. One satellite with a few computers feels intuitive. Sixty-plus Linux nodes per satellite feels excessive until the satellite is understood not as a single “box in space,” but as a bundle of subsystems. A Starlink satellite must manage power, thermal conditions, attitude control, propulsion, radio links, phased-array antennas, routing behavior, health monitoring, safe-mode logic, software updates, encryption boundaries, collision-avoidance data, and communication with ground systems. The compute is spread across tasks, not concentrated inside one glamorous central brain.

The phrase “Linux computers” also deserves interpretation. A Linux node in this context is not a laptop with a desktop environment, browser tabs, and a monitor. It is a stripped-down embedded computer running SpaceX-controlled software. SpaceX said it did not use an off-the-shelf distribution and instead maintained its own kernel and associated tools. That matters because the company was not handing the shape of its operating system to Debian, Ubuntu, Red Hat, or any other familiar Linux distribution. SpaceX used Linux as a kernel and systems base, then wrapped it in its own hardware drivers, process priorities, test discipline, and flight-software culture.

The 2020 context also explains the “more than 6,000 microcontrollers” part of the claim. A Linux computer is useful when a subsystem needs a richer operating environment: scheduling, processes, networking, device drivers, diagnostics, update mechanisms, and higher-level software abstractions. A microcontroller is better suited to narrow, deterministic, low-level control. Starlink’s architecture combined both. The satellite was not a Linux-only object. It was a hierarchy of Linux nodes and bare-metal devices, each placed where its trade-offs made sense.

A common mistake is to inflate the 2020 figure into a current claim. Since Starlink now has far more satellites, it is tempting to multiply the old per-satellite ratio by today’s active count and announce hundreds of thousands of Linux computers in orbit. That might be directionally plausible, but it is not verified. Hardware design changes across generations. Some boards may be consolidated, replaced, redesigned, or assigned different roles. Direct-to-cell satellites carry new payload functions. V2 Mini satellites differ from the original satellites. Future V3 spacecraft will likely change the internal compute balance again.

A better editorial reading is more useful: SpaceX revealed that Starlink was designed around high compute density from the beginning. The system was not a thin radio relay with a little command logic. It was a software-heavy network in low Earth orbit. That distinction explains many later Starlink moves: frequent satellite software updates, onboard detection of faults, direct-to-cell experimentation, laser-linked routing, fleet-level resilience, and regulatory filings built around much larger second-generation capability.

The 32,000 number was memorable because it felt absurd. Its value now is that it made the architecture visible.

Linux in orbit is less surprising than it sounds

Linux in space sounds odd only if Linux is imagined as a desktop operating system. In aerospace, the useful question is not whether the operating system has a penguin mascot. The useful question is whether the system can be controlled, stripped down, audited, patched, tuned for timing, tested on real hardware, and integrated with custom devices. Linux is attractive in that world because it gives engineers source-level control over a mature kernel while avoiding the need to build every low-level operating-system component from scratch.

SpaceX’s own explanation fits that pattern. Its engineers said Falcon, Dragon, and Starlink shared Linux platform infrastructure. For real-time behavior, they used the PREEMPT_RT patch. For hardware integration, they added custom drivers. For distribution control, they maintained their own copy of the kernel and tools. For applications running on Linux, they paid attention to priorities, avoided priority inversions, reduced nondeterministic coding patterns, and used telemetry to check whether processes met deadlines.

PREEMPT_RT is not a magic switch that turns Linux into a perfect flight computer. It is a set of changes that reduces kernel latency and improves real-time preemption behavior. The Linux kernel documentation describes real-time preemption as a developer-facing area concerned with scheduling, locking, priority inheritance, threaded interrupts, and the ways real-time kernels differ from ordinary configurations. In plainer terms, it helps Linux respond more predictably when timing matters.

Predictability is not the same as perfection. A hard real-time system must meet every deadline; a missed deadline counts as a failure. A general-purpose operating system normally favors throughput, fairness, and broad hardware support. SpaceX’s approach appears to sit between those worlds. Linux provides the operating substrate for many onboard tasks, while microcontrollers and narrower control loops handle other responsibilities. The architecture is not “Linux does everything.” It is “Linux runs where its flexibility is worth the timing-management work.”

NASA and space-station operations had already shown that Linux belonged in orbit well before Starlink. The Linux Foundation described the International Space Station’s migration of key laptop functions from Windows to Linux, quoting the need for a system that was stable, reliable, and under in-house control. The same case study described the ISS OpsLAN laptops as tools for daily operations, inventory, camera interfaces, and crew support. That was not the same use case as Starlink flight hardware, but it showed the larger logic: in a remote environment, control over patching, adaptation, and support can matter as much as brand familiarity.

Linux also fits SpaceX’s organizational style. A company that builds rockets, spacecraft, user terminals, ground stations, and software in tight loops benefits from tools it can modify. A closed operating system would add friction. A niche aerospace RTOS might offer determinism but not the same ecosystem, developer familiarity, networking stack, or hardware breadth. Linux gave SpaceX a practical middle path: mature enough to trust, open enough to own, common enough to hire for, and adaptable enough to run across different hardware architectures.

The deeper reason Linux is unsurprising is that modern spacecraft are no longer isolated electromechanical platforms. They are networked computers exposed to changing conditions. They need diagnostics, software updates, cryptographic boundaries, test frameworks, hardware abstraction, logs, process isolation, and fleet operations. Once satellites become software-defined infrastructure, Linux becomes less of a novelty and more of an engineering tool.

Starlink treats satellites more like fleet infrastructure than rare spacecraft

The most revealing line in the SpaceX AMA was not the 32,000-computer number. It was Monson’s comment that Starlink satellites needed to be thought of more like servers in a data center than special one-of-a-kind vehicles. That does not mean a satellite is literally a server rack. It means the operating model changes. Instead of treating every spacecraft as an almost sacred individual asset, Starlink treats thousands of satellites as fleet infrastructure, with staged updates, telemetry, rollback paths, comparative testing, and system-level resilience.

That idea is radical in spaceflight because traditional spacecraft are expensive, slow to build, and hard to replace. They are designed for long missions, conservative update cycles, and deep prelaunch verification. Starlink is different because SpaceX can manufacture many satellites, launch them frequently, and tolerate some failures at the constellation level. The resilience is not located only inside one spacecraft. It is distributed across satellites, ground systems, user terminals, update pipelines, orbital replacement, and network routing.

The data-center analogy also clarifies why a satellite might contain so many Linux computers. A data center is not a single large computer. It is a set of machines, switches, storage devices, controllers, monitoring agents, management networks, and power systems. A Starlink satellite is not equivalent, but the pattern rhymes. It contains many pieces that need local computation and coordination. The satellite must keep itself healthy, point correctly, handle communications, respond to commands, watch its environment, and participate in a larger network.

SpaceX’s update model reflects that worldview. Monson said the Starlink team could deploy test builds to a subset of vehicles, compare performance against the rest of the fleet, tweak and try again, pause a rollout, roll back, and continue. He described that shift as critical to iterating quickly. That language would sound familiar to engineers running large online services. In the space domain, it carries more risk and requires stricter safety boundaries. A satellite update cannot be treated like a casual app release. Yet the fleet structure lets SpaceX use controlled exposure rather than waiting for perfect certainty before every change.

The difference between “spacecraft” and “fleet infrastructure” also changes how edge cases are discovered. SpaceX said hundreds of satellites operating continuously would find weird failures that ground testing had not imagined. That is not a confession of sloppy testing; it is a recognition of scale. A system with thousands of devices exposed to radiation, temperature cycles, orbital mechanics, radio conditions, user demand, and ground-network variation will produce failure combinations no test plan fully anticipates. The real question is whether the system fails safe, reports enough information, and allows recovery.

Starlink’s architecture appears to answer that with a layered rule: protect the hardware first, preserve command and update ability, keep the satellite safe long enough to debug, and use fleet-level redundancy to protect service. That is a different kind of confidence from old aerospace overdesign. It accepts that failures will happen and spends energy making them survivable.

The server analogy has limits. Data-center servers do not pass overhead at orbital velocity. They do not need propulsion for collision avoidance. They do not burn up if abandoned. They do not operate inside a shared sky visible to astronomers. Still, the analogy points to the right operating truth. Starlink is a planetary-scale edge network whose edge nodes happen to be spacecraft.

Real-time Linux sits between ordinary servers and flight control

The phrase “real-time Linux” can be misleading. It does not mean “fast” in the casual consumer sense. It means the system is shaped to respond within controlled timing bounds. A music player can stutter and continue. A web page can load half a second late. A control loop that misses a deadline may create a safety problem. SpaceX’s software team described Falcon and Dragon control in terms of repeated real-time loops: read sensors, combine the data with state, decide, and issue outputs back to hardware many times per second.

For Starlink, timing shows up differently but no less seriously. The satellite must manage attitude, power, thermal behavior, communications links, beam pointing, phased-array behavior, routing participation, update safety, and failure response. Some tasks need strict deadlines. Others can be slower. The architecture can separate them across Linux systems, microcontrollers, and safety logic. A strong design does not force one operating system to solve every timing problem. It places timing-critical functions where they belong and then verifies the boundaries.

SpaceX’s engineers said all onboard computers either ran Linux with PREEMPT_RT or were microcontrollers running bare-metal code. They also said Linux applications were configured to avoid priority inversions and written with deterministic habits, such as avoiding runtime memory allocation or unbounded loops where timing mattered. Telemetry was used to verify process performance across flight phases. Those details matter because they move the discussion beyond “Linux is reliable” into the real work: scheduling, coding discipline, hardware selection, measurement, and fault containment.
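
The idea of using telemetry to check whether processes meet their deadlines can be illustrated with a small sketch. It is not SpaceX's tooling: the function name `check_deadlines`, the `DeadlineReport` fields, the sample timings, and the 10 ms deadline are all hypothetical, chosen only to show the shape of the check.

```python
from dataclasses import dataclass


@dataclass
class DeadlineReport:
    cycles: int      # number of loop iterations observed
    misses: int      # iterations that overran the deadline
    worst_ms: float  # slowest iteration seen


def check_deadlines(cycle_times_ms, deadline_ms):
    """Summarize how often a periodic task overran its deadline."""
    misses = sum(1 for t in cycle_times_ms if t > deadline_ms)
    worst = max(cycle_times_ms, default=0.0)
    return DeadlineReport(len(cycle_times_ms), misses, worst)


# Hypothetical per-cycle execution times reported by one process.
samples = [4.1, 4.3, 3.9, 12.7, 4.0]
report = check_deadlines(samples, deadline_ms=10.0)
print(report)
```

A real system would collect these timings on the vehicle and downlink only the summary, but the principle is the same: deadlines are something to measure, not something to assume.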

Linux by itself does not guarantee determinism. A poorly written real-time Linux application can still miss deadlines. A driver can introduce latency. A shared resource can block a high-priority task. A bad update can break assumptions. The PREEMPT_RT patch improves the kernel’s ability to preempt work and manage real-time scheduling, but the surrounding system has to be built for predictability. Real-time behavior is an architecture, not a logo.

The mix of Linux and bare-metal microcontrollers also shows restraint. In embedded systems, the simplest reliable solution is often the best one. A microcontroller can run a narrow control loop with fewer moving parts than a Linux process. It can boot quickly, talk directly to hardware, and avoid the complexity of a full kernel. Linux is chosen when richer capability is worth the cost: networking, file systems, drivers, processes, memory protection, diagnostics, and complex application logic.

This is where Starlink’s design becomes more interesting than a generic “open source in space” story. SpaceX was not merely celebrating Linux. It was using Linux as part of a control-and-compute hierarchy. That hierarchy includes C and C++ for flight software, Python for tools and automation, web technologies for certain display interfaces, microcontrollers for independent safety functions, and hardware-in-the-loop testing on real flight hardware. The company also said it used outside software sparingly beyond Linux and a few selected tools, preferring code it understood deeply.

For Starlink internet, real-time Linux matters because the network is alive. Links change. Satellites move. Loads shift. Failures occur. Updates roll out. User terminals connect and reconnect. Commands must be authenticated. Satellites must keep themselves safe even under partial communication loss. The operating system is only one layer, but it is the layer that lets many of those behaviors exist in a manageable form.

The constellation depends on redundancy at several layers

SpaceX’s approach to redundancy is not one thing. It is several overlapping habits. Falcon and Dragon use redundant computers, sensors, actuators, voting, state machines, safety logic, and mission simulations. Starlink uses some onboard redundancy, but its defining redundancy is larger: many satellites can serve a user, and the constellation can absorb the loss of individual spacecraft more gracefully than a single-satellite system. Monson said Starlink primarily trusted system-level fault tolerance, with multiple satellites in view and the ability to keep service working despite individual problems.

That distinction is central. A traditional high-value satellite may need to survive many years because replacing it is hard. A Starlink satellite still needs to be safe and reliable, but the service does not depend on one satellite surviving. The unit of reliability shifts from the spacecraft to the constellation. That lets SpaceX make different trade-offs in hardware, launch cadence, replacement, and software iteration.

This does not remove the need for onboard safety. A failed satellite is not harmless. It can become debris. It can lose pointing. It can interfere with operations. It can fail to deorbit if badly designed. SpaceX engineers said Starlink satellites were programmed to enter a high-drag state if they had not heard from the ground in a long time, allowing atmospheric drag to pull them down in a predictable way. They also said the company works to actively deorbit satellites where possible.
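
The loss-of-contact behavior described above is, in pattern, a watchdog timer. The sketch below shows that pattern only; the class name, the mode strings, and the timeout value are assumptions for illustration, since SpaceX has not published how long "a long time" is or how the logic is implemented.

```python
class GroundContactWatchdog:
    """Switch to a high-drag mode after too long without ground contact."""

    def __init__(self, timeout_s, now_s=0.0):
        self.timeout_s = timeout_s    # hypothetical threshold
        self.last_contact_s = now_s

    def heard_from_ground(self, now_s):
        # Any valid ground contact resets the timer.
        self.last_contact_s = now_s

    def mode(self, now_s):
        silent_for = now_s - self.last_contact_s
        return "high_drag" if silent_for > self.timeout_s else "nominal"


# Hypothetical timeout of seven days, expressed in seconds.
watchdog = GroundContactWatchdog(timeout_s=7 * 24 * 3600)
watchdog.heard_from_ground(now_s=1_000.0)
print(watchdog.mode(now_s=2_000.0))      # still within the timeout
print(watchdog.mode(now_s=1_000_000.0))  # long silence
```

Passing the current time in explicitly, rather than reading a clock inside the class, keeps the logic deterministic and easy to test.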

Inside a satellite, redundancy may be expensive in mass, power, volume, and complexity. Across a constellation, redundancy can be created by coverage density. A user terminal does not care which specific satellite carries its traffic as long as service remains stable enough. The network can route around loss, adjust capacity, and replace nodes through future launches. This is not redundancy as duplication alone. It is redundancy as geometry, manufacturing, launch, and software control.

Radiation handling shows another layer. SpaceX engineers said radiation-induced errors in computers are handled through multiple redundant computers and voting on their outputs. If one computer fails due to radiation, it can be rebooted and reincorporated after recovery. Sensors and data transmissions also use redundant or error-detecting approaches. That is different from relying only on fully radiation-hardened components. It is a system-level design philosophy: use redundancy and detection to tolerate faults rather than assuming every component must be immune.
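
Voting across redundant computers can be sketched as a simple majority vote. This is a generic illustration of the technique, not SpaceX's flight code; the function name `majority_vote` and the three-computer example are assumptions.

```python
from collections import Counter


def majority_vote(outputs):
    """Return (agreed value, indices of dissenting computers).

    If no value has a strict majority, return (None, all indices) so the
    caller can treat every output as suspect.
    """
    if not outputs:
        raise ValueError("need at least one output to vote on")
    value, votes = Counter(outputs).most_common(1)[0]
    if votes * 2 <= len(outputs):
        return None, list(range(len(outputs)))
    dissenters = [i for i, out in enumerate(outputs) if out != value]
    return value, dissenters


# Three redundant computers; the third has been upset, e.g. by a bit flip.
value, to_reboot = majority_vote([7, 7, 9])
print(value, to_reboot)
```

The dissenting index is what makes recovery possible: the outvoted computer can be rebooted and brought back into the set once it agrees with the others again.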

The trade-off is not free. More distributed redundancy creates more states to monitor. More computers mean more software versions, more logs, more configuration, more update risk, and more interface boundaries. A fleet-level strategy demands strong observability. Without telemetry, rollout control, and reliable command paths, a large fleet becomes a large mystery. Redundancy only works when the system can tell operators what failed, isolate the fault, and keep enough trusted control paths alive.

Starlink’s resilience also depends on SpaceX’s unusual launch capacity. A company without frequent, lower-cost access to orbit could not rely so heavily on constellation refresh. SpaceX’s Falcon 9 reuse cadence, Starlink manufacturing scale, and vertical integration reinforce the software architecture. The satellites, launch system, ground network, user terminals, and fleet software are not separate business curiosities. They are coupled parts of one resilience model.

That is the overlooked point in the Linux headline. The computers matter because they are embedded in a larger failure strategy. Starlink’s reliability is not the reliability of one Linux box. It is the reliability of a moving, replaceable, observable, updateable population of machines.

Software updates turned orbit into a production environment

SpaceX said it tended to update software running on all Starlink satellites about once a week, with smaller test deployments also happening. It also deployed ground services a couple of times a week or more. That cadence is startling in a space context. It treats the constellation as a production environment with constant improvement, not as a frozen artifact launched and left alone.

The reason is not fashion. Starlink’s value depends on software. Monson said small software improvements could have a huge impact on service quality and the number of people the system could serve. That is plausible because satellite broadband is full of trade-offs: beam scheduling, link management, congestion behavior, routing, power use, handovers, antenna control, telemetry filtering, update safety, and user-terminal behavior. A software change that squeezes more usable capacity out of the same satellite hardware has real economic value.

Weekly updates also help explain why Linux was a practical base. An operating system that supports familiar build, test, deployment, logging, and driver models lets a satellite fleet behave more like managed infrastructure. SpaceX still has to wrap that in far stricter safeguards than ordinary software services. Yet the basic operational vocabulary remains: test build, subset deployment, comparison, rollback, production rollout, telemetry, and continuous improvement.

The risk is obvious. Frequent updates can introduce fleet-wide failure if release discipline is weak. SpaceX’s answer, as described in the AMA, was staged exposure and rollback. Test builds could be deployed to a small group of satellites and compared with the rest of the fleet. If a rollout behaved badly, it could be paused or reversed. That approach is common in large web services. In orbit, it requires hard boundaries around command, update integrity, power safety, and fault recovery.
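
The staged-exposure idea can be reduced to a comparison between a test group and the rest of the fleet. The sketch below is a minimal illustration under stated assumptions: the function name, the single lower-is-better metric, and the 5 percent regression threshold are hypothetical, and a real rollout gate would weigh many metrics and safety signals.

```python
from statistics import mean


def rollout_decision(canary, baseline, max_regression=0.05):
    """Decide what to do with a staged rollout.

    `canary` and `baseline` hold samples of a metric where lower is
    better (for example latency in milliseconds).
    """
    if not canary or not baseline:
        return "pause"  # not enough data to compare
    canary_avg, baseline_avg = mean(canary), mean(baseline)
    if canary_avg > baseline_avg * (1 + max_regression):
        return "rollback"
    return "continue"


print(rollout_decision([40.0, 42.0], [41.0, 40.0, 42.0]))
print(rollout_decision([50.0, 52.0], [41.0, 40.0, 42.0]))
```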

Software updates also create a philosophical break with classic aerospace conservatism. A traditional spacecraft may treat post-launch software change as a rare event. Starlink treats software change as part of service operation. The system is designed to learn after launch. That does not mean the launch version can be careless. It means the company expects the orbiting fleet to become better through measured, controlled change.

This matters for security as well. A signed-update pipeline is only useful if updates are normal enough to be practiced but controlled enough to resist compromise. SpaceX engineers said every piece of hardware in the Starlink system, including satellites, gateways, and user terminals, was designed to run only software signed by SpaceX. They also described end-to-end encryption for user data and internal hardening to limit the value of a foothold.

The satellite update cadence also reveals how much Starlink’s ground systems matter. A Starlink satellite is never only an isolated platform. The ground services schedule, monitor, command, analyze, route, and support the network. When SpaceX updates ground services multiple times per week, the constellation changes even when no new rocket has launched. Starlink capacity is not only added by putting metal into orbit. It is also added by improving code on the ground and in space.

The phrase “production environment” should still be used carefully. Space is unforgiving. A botched software deployment can become a hardware loss. Yet SpaceX’s own comments make clear that Starlink is operated with a live-service mindset. The orbiting computers are not static appliances. They are part of a system that is continuously measured and modified.

That is why the 32,000 Linux computers mattered. They were not just present in space. They were updateable.

Telemetry became Starlink’s nervous system

A fleet of thousands of satellites would be unusable without telemetry. SpaceX said in 2020 that Starlink was generating more than 5 TB of data per day, while also trying to reduce how much each device sent. The answer was onboard detection: send and store less routine noise, report more when something is interesting, and share alerting concepts between Starlink and Dragon.

Telemetry is the nervous system of a fleet. It tells operators whether processes meet deadlines, whether a software update behaves correctly, whether a satellite is safe, whether a radio link is degrading, whether a thermal condition is drifting, whether an unexpected fault is repeating, and whether a service change improves customer experience. Without telemetry, frequent updates become guesswork. With telemetry, the fleet becomes a measurable system.

The 5 TB-per-day number came when Starlink was much smaller. Today’s constellation is vastly larger, but public reporting has not provided a directly comparable current telemetry total. It would be wrong to scale the old number linearly and pretend certainty. The architectural lesson is enough: even early Starlink produced data volumes that forced SpaceX to filter, prioritize, and automate. The company could not simply dump every sensor reading from every node forever.

Onboard detection is especially valuable because communication is expensive. Every bit of telemetry competes with bandwidth, storage, processing, and analyst attention. A satellite that can detect “interesting” behavior locally reduces the burden on the ground. That local logic is another reason compute density matters. A satellite with many Linux nodes and microcontrollers can do more than obey commands. It can summarize, classify, alert, and preserve context for diagnosis.
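
A rough sketch of that local logic: instead of downlinking every reading, a node sends a compact summary plus only the samples that fall outside a normal band. The function name, the field names, and the temperature thresholds are hypothetical; SpaceX has not described its detection rules in this detail.

```python
def summarize(readings, low, high):
    """Condense raw readings into a summary plus out-of-band samples."""
    if not readings:
        return {"count": 0, "min": None, "max": None, "anomalies": []}
    anomalies = [(i, v) for i, v in enumerate(readings) if not (low <= v <= high)]
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "anomalies": anomalies,  # (sample index, value) pairs worth reporting
    }


# Hypothetical temperature readings in degrees Celsius.
report = summarize([20.1, 20.4, 35.2, 20.0], low=15.0, high=30.0)
print(report)
```

Four readings collapse into one small record, and the single out-of-band sample keeps its index so analysts can place it in time.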

Telemetry also supports SpaceX’s staged deployment model. If a new build goes to a subset of satellites, operators need meaningful comparison between the test group and the rest of the constellation. That comparison requires metrics: latency, link behavior, CPU load, memory, reboot events, missed deadlines, thermal shifts, packet loss, user impact, command success, and safe-mode events. The quality of the metrics determines the quality of the rollout decision.

There is another layer: telemetry makes rare edge cases visible. SpaceX said satellites had encountered failures on orbit that the team had not conceived of before, but the satellites stayed safe long enough for engineers to debug, find a workaround, and push an update. That line says more about fleet operations than any marketing phrase could. Starlink’s software model assumes unknown failures will appear, then tries to make the time between fault and loss long enough for humans and automation to recover.

Telemetry also becomes a strategic asset. A company operating thousands of satellites gathers operational knowledge that competitors cannot easily copy from public filings. It learns where hardware wears, where software assumptions break, how links behave by geography, how user demand shifts, how atmospheric drag affects operations, how handovers feel to applications, and how satellite generations compare. The data becomes a private map of low Earth orbit as an internet environment.

Yet telemetry creates its own problems. More data can hide the truth if poorly structured. Alert fatigue can bury urgent faults. Fleet dashboards can encourage false confidence. Data retention can become expensive. Security boundaries must protect diagnostic channels. Ground analysts need tools that surface patterns, not just logs. A large satellite constellation is as much an observability problem as a launch problem.

The Linux computers in Starlink matter partly because they produce and act on that observability. They are not passive endpoints. They are measuring instruments inside a moving network.

The internet path is a moving chain of terminals, satellites and ground stations

Starlink works because the network path is constantly rebuilt. A user terminal talks to a satellite overhead. The satellite may relay traffic to a ground station if one is visible, or to another satellite through inter-satellite laser links when supported and useful. Traffic then reaches a terrestrial point of presence and continues over the ordinary internet. The architecture is sometimes described as “bent pipe” when the satellite relays traffic between the user terminal and ground gateway, with laser links extending the path when ground-station geometry makes that helpful. A 2024 measurement study described this terminal-satellite-ground-station-PoP model and found evidence that inter-satellite links can improve performance for remote regions.

The moving chain creates the Starlink engineering problem. In a fiber network, most physical paths are fixed. In a cellular network, users move but towers do not. In Starlink, both the satellite and the user may be moving, and the network must keep sessions working as satellites pass overhead. The internet path is not a cable. It is a scheduled relationship between antennas, orbits, beams, gateways, software, and routing decisions.

Low Earth orbit gives Starlink its latency advantage over geostationary satellite internet. A geostationary satellite sits roughly 35,786 kilometers above Earth. Starlink satellites operate much closer, from a few hundred kilometers up to about 550 kilometers depending on shell and generation. Lower altitude reduces propagation delay and allows broadband applications that older satellite systems struggled with. Starlink’s own public specifications list land latency ranges around 25 to 60 milliseconds, with higher latency in some remote regions.
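
The size of the altitude effect is easy to estimate from the speed of light. The sketch below assumes the satellite is directly overhead, which gives the shortest possible path, and counts only propagation delay; real latency adds slant range, processing, queuing, and terrestrial routing. The function names are illustrative.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458


def one_way_delay_ms(altitude_km):
    """Propagation delay for a single ground-to-satellite hop, overhead pass."""
    return altitude_km / SPEED_OF_LIGHT_KM_S * 1000


def bent_pipe_round_trip_ms(altitude_km):
    """User -> satellite -> gateway and back again: four hops in total."""
    return 4 * one_way_delay_ms(altitude_km)


for name, altitude_km in [("GEO", 35_786), ("LEO", 550)]:
    print(f"{name}: {one_way_delay_ms(altitude_km):.1f} ms one way, "
          f"{bent_pipe_round_trip_ms(altitude_km):.1f} ms bent-pipe round trip")
```

Under these assumptions a geostationary hop costs roughly 119 milliseconds each way, while a 550-kilometer hop costs under 2 milliseconds. That explains why the physics stops being the dominant term in low Earth orbit and software, routing, and ground infrastructure take over.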

The closer orbit also creates motion. Starlink satellites cross the sky quickly relative to users. The system has to manage handovers, beam scheduling, Doppler effects, routing paths, and weather-related link changes. Academic measurement has found periodic Starlink network reconfigurations that can affect latency and throughput at fine time scales. The 2024 Starlink performance study analyzed about 19.2 million M-Lab samples from 34 countries and found Starlink competitive with terrestrial cellular networks, while also observing regional variation, load-related latency increases, and 15-second reconfiguration effects.

That performance picture is more useful than a simple “fast” or “slow” verdict. Starlink can be excellent for rural and remote users with poor terrestrial options. It can support video calls, streaming, cloud tools, emergency communications, maritime users, aviation, and field operations. It can also show congestion, obstruction sensitivity, handover artifacts, and regional differences depending on satellite density, ground-station placement, spectrum rules, and user load.

A path through Starlink is also shaped by user equipment. The terminal is not a dumb dish. It is a phased-array radio system that tracks satellites electronically and participates in network decisions. That terminal sits at the edge of the Linux-in-space story. Even though the 32,000 figure referred to orbital computers, Starlink’s service also depends on ground terminals, gateway infrastructure, PoPs, and cloud-like management systems.

The moving path explains why so much software is needed. A satellite internet network is not just “a signal goes up and down.” It is a sequence of choices made under tight physical constraints. Which satellite should serve this user? Which beam should carry this traffic? Which gateway should receive it? Should a laser path be used? Has a software update affected link stability? Is congestion building? Is a satellite healthy? Every answer is partly software.
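The first of those questions can be sketched as a small selection rule. This is a toy illustration, not SpaceX's scheduler: the `Candidate` fields, the 25-degree elevation mask, and the load ceiling are all assumptions chosen to show how health, geometry, and congestion can combine into one decision.

```python
from typing import NamedTuple, Optional, Sequence


class Candidate(NamedTuple):
    """Hypothetical view of one satellite as seen from a user terminal."""
    name: str
    elevation_deg: float  # angle above the horizon
    healthy: bool
    load: float           # fraction of capacity in use, 0.0 to 1.0


def pick_satellite(
    candidates: Sequence[Candidate],
    min_elevation_deg: float = 25.0,  # assumed mask angle
    max_load: float = 0.9,            # assumed congestion ceiling
) -> Optional[Candidate]:
    """Pick the highest healthy, uncongested satellite above the mask."""
    usable = [
        c for c in candidates
        if c.healthy and c.elevation_deg >= min_elevation_deg and c.load < max_load
    ]
    # Higher elevation means a shorter slant path and fewer obstructions.
    return max(usable, key=lambda c: c.elevation_deg, default=None)


sky = [
    Candidate("sat-a", 70.0, healthy=False, load=0.2),  # best geometry, but unhealthy
    Candidate("sat-b", 55.0, healthy=True, load=0.95),  # congested
    Candidate("sat-c", 40.0, healthy=True, load=0.5),
    Candidate("sat-d", 15.0, healthy=True, load=0.1),   # below the mask
]
choice = pick_satellite(sky)
print(choice.name if choice else "no usable satellite")
```

Even this toy version shows why the answer is "partly software": the satellite with the best geometry is not chosen, because health and load overrule it.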

Linux is only one part of the packet-delivery machine

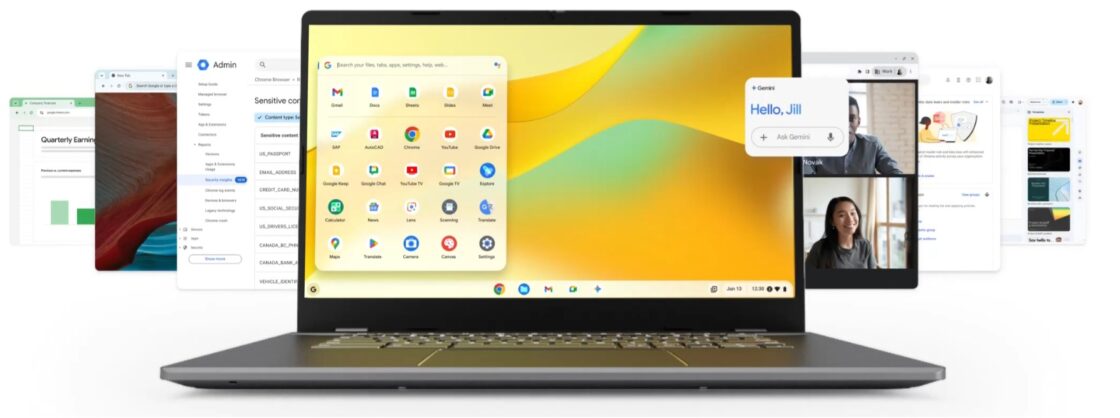

The Linux headline can accidentally shrink Starlink into an operating-system story. That would miss the machine. Starlink is a packet-delivery system built from satellites, phased arrays, radios, optical links, gateway dishes, terrestrial fiber, user terminals, routing systems, spectrum rights, software deployments, launch cadence, collision-avoidance logic, and customer-facing services. Linux is a critical layer, but it is not the whole stack.

The radio layer is central. Starlink satellites use high-frequency links to communicate with user terminals and gateways. The terminals use electronically steered antennas rather than a manually aimed dish that tracks one fixed satellite. Beamforming, scheduling, modulation, and interference management all shape performance. SpaceX’s later generations introduced laser inter-satellite links more widely, allowing traffic to move across space before descending to Earth. That improves service in oceans, polar regions, and places where ground gateways are sparse or politically difficult.

The orbital layer is just as important. A satellite at lower altitude offers lower latency and faster natural decay if abandoned, but it also covers a smaller ground area and experiences more atmospheric drag. Higher altitude improves coverage per satellite but can increase latency and debris persistence. FCC approvals and SpaceX filings have reflected these trade-offs. In January 2026, the FCC approved an additional 7,500 Gen2 Starlink satellites, bringing authorized Gen2 satellites to 15,000, while Reuters reported that SpaceX planned to lower satellites orbiting at around 550 kilometers to about 480 kilometers in 2026 for space-safety reasons.

The launch layer makes the system possible. Starlink’s density depends on SpaceX repeatedly launching its own satellites on Falcon 9. SpaceX’s reusable booster cadence is not just an impressive rocket statistic; it is an input to network design. When replacement and expansion launches are frequent, Starlink can accept shorter satellite lifetimes, faster hardware iteration, and constellation-level fault tolerance. In April 2026, SpaceX marked its 600th orbital-class booster landing during a Starlink mission, and the launch added 25 satellites to a constellation already above 10,275 units by one tracker’s count.

The regulatory layer is another hidden machine. Satellite internet is not allowed to operate simply because a company can build it. Spectrum coordination, orbital debris rules, national licenses, astronomy concerns, emergency-service rules, mobile-satellite permissions, and international coordination all define what Starlink can do. The 2026 FCC authorization did not give SpaceX everything it asked for; it approved an added tranche and deferred nearly 15,000 proposed Gen2 satellites.

The ground layer ties Starlink to the existing internet. Gateways and points of presence decide where satellite traffic enters terrestrial networks. A rural Starlink user may feel “connected to space,” but the session still depends on ground infrastructure, peering, backhaul, routing, data centers, and application servers. When ground stations are far away or overloaded, latency and throughput can suffer. Laser links can help, but they do not remove the need for terrestrial interconnection.

The software layer binds these pieces. Linux nodes handle many local tasks; microcontrollers run narrower code; ground systems schedule and analyze; user terminals connect and adapt; security systems sign and verify code; telemetry pipelines detect anomalies. Starlink’s achievement is not that it put Linux in space. It is that it put enough software-controlled machinery in space to make low Earth orbit behave like an internet access layer.

That is a harder story than the headline. It is also the true one.

A compact map of Starlink’s software and hardware layers

Starlink’s layered operating model

| Layer | Main role | Why it matters |
|---|---|---|
| Linux onboard computers | Run many satellite software functions, diagnostics, networking logic, and hardware-facing services | Give SpaceX a controllable, updateable compute base across the constellation |
| Microcontrollers and bare-metal code | Handle narrow low-level control functions where simplicity and timing are critical | Keep some tasks outside the complexity of a full operating system |
| Telemetry and alerting | Report health, timing, failures, and service behavior | Makes staged updates and fleet debugging possible |
| User terminals and gateways | Connect customers to satellites and satellites to the terrestrial internet | Determine much of the real user experience, especially latency and reliability |
| Fleet software and rollout systems | Deploy builds, compare subsets, pause or roll back changes | Turns orbit into a managed production environment rather than a frozen spacecraft archive |
| Launch and replacement cadence | Add capacity, replace failed or aging satellites, introduce new generations | Lets Starlink rely on constellation-level resilience instead of only satellite-level survival |

This table compresses the core idea: Starlink is not a single technology but a stack of interdependent systems. Linux is the most culturally recognizable piece of that stack, but the network works only because software, hardware, orbital design, and operations reinforce each other.

The satellite count has moved far beyond the original snapshot

The 2020 Linux-node number belonged to an early Starlink fleet. The current constellation is much larger. Public trackers and launch reporting now put Starlink above 10,000 active satellites, with the exact count changing as new satellites launch and older satellites deorbit. Space.com reported in March 2026 that SpaceX had passed 10,000 active Starlink satellites, with 10,049 units in the constellation and 1,509 previously launched Starlinks already reentered and destroyed. SatelliteMap listed 10,327 active Starlink satellites and 11,858 total launched, with the last listed launch on April 26, 2026.

That growth tempts a dramatic extrapolation. If the early ratio of more than 66 Linux computers per satellite still held, the constellation would now imply hundreds of thousands of Linux nodes in orbit. The trouble is that nobody outside SpaceX has a verified current per-satellite Linux-node count. Hardware generations changed. Starlink V1.5 added more laser-link capability. V2 Mini satellites increased capacity compared with earlier designs. Direct-to-cell satellites added cellular payload functions. V3 satellites planned for Starship launches are expected to be larger and far more capable. The old ratio is not a public specification for the modern fleet.
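The tempting arithmetic is easy to reproduce, which is part of why it spreads. The sketch below uses only the figures quoted in this article: "more than 4,000" Linux computers per 60-satellite launch from the 2020 AMA, and a round 10,000 active satellites. The ratio is treated as a constant purely to show the extrapolation; it is not a published specification for the current fleet.

```python
# Figures quoted from the 2020 AMA. The per-satellite ratio is an
# assumption carried forward for illustration, NOT a current spec.
LINUX_NODES_PER_LAUNCH_2020 = 4_000  # "more than 4,000" per launch
SATELLITES_PER_LAUNCH_2020 = 60

nodes_per_satellite = LINUX_NODES_PER_LAUNCH_2020 / SATELLITES_PER_LAUNCH_2020


def naive_node_estimate(active_satellites: int) -> int:
    """Scale the 2020 ratio to a fleet size. Ignores hardware generations."""
    return round(active_satellites * nodes_per_satellite)


print(f"Nodes per satellite (2020 ratio): {nodes_per_satellite:.1f}")
print(f"480 satellites:    {naive_node_estimate(480):,}")
print(f"10,000 satellites: {naive_node_estimate(10_000):,}")
```

The 480-satellite case lands on the 32,000 figure from the headline, which is a useful sanity check on the ratio. The 10,000-satellite case lands near 670,000, a number that is arithmetically valid and empirically unverified.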

The better point is that compute scale almost certainly grew with the constellation’s ambitions. Starlink moved from consumer broadband beta service toward maritime, aviation, enterprise, government, mobility, emergency connectivity, direct-to-cell messaging, and future data services. Each category adds software complexity. The network has to manage different service priorities, terminals, regulatory markets, spectrum conditions, and mobility patterns. More satellites also create more operational states to monitor and more update risk to contain.

Regulators have continued to approve expansion, but not without limits. Reuters reported in January 2026 that the FCC approved another 7,500 Gen2 satellites, bringing the authorized Gen2 total to 15,000, while SpaceX had sought approval for nearly 30,000. The same decision allowed upgrades and broader frequency use, while imposing deployment deadlines. This tells us that Starlink’s growth is both technical and institutional. The system expands through rockets and computers, but also through permissions.

The growth of the fleet changes the meaning of failure. Losing one satellite in a 480-satellite network is different from losing one in a 10,000-satellite network. More density can improve service resilience. It can also raise orbital-congestion concerns, astronomy impacts, and operational burden. Scale is not automatically good or bad. It amplifies both the value of the network and the consequences of running it poorly.

The number also changes the Linux story. In 2020, “32,000 Linux computers in space” sounded astonishing because it exceeded most people’s mental image of space computing. In 2026, the deeper astonishment is that an orbital internet network now needs the operational discipline of a major cloud service while living inside satellites moving at orbital speed. The question is not whether there are 32,000 Linux nodes anymore. The question is how a company governs a software-defined constellation that may eventually contain tens of thousands of satellites and an unknown but enormous number of computers.

That is why the original snapshot still matters. It caught Starlink before the scale became familiar. It showed that SpaceX had already crossed from spacecraft engineering into orbital infrastructure engineering.

Starlink’s computing architecture changes the economics of failure

Every infrastructure system has a theory of failure. The old satellite-internet theory relied on a small number of large, expensive spacecraft placed in high orbits. Those satellites had to last because replacement was hard. Starlink’s theory is different. Use many smaller satellites in low Earth orbit, refresh them often, improve them through software, and keep the service alive through fleet density. The economics of failure shift from “avoid losing the one asset” toward “keep the population healthy enough that individual failures do not define the service.”

This shift is only possible because SpaceX controls much of the stack. It builds satellites, launches them on its own rockets, operates the network, designs user terminals, runs ground systems, and develops software. A company that had to buy rare rides to orbit from someone else could not treat replacement as an ordinary operational mechanism. A company that did not build its own software stack could not safely update satellites every week. A company without telemetry discipline could not learn from thousands of orbital machines.

There are costs. A large constellation must continually replenish satellites because low Earth orbit is not a permanent parking lot. Drag, hardware aging, capacity demand, failures, and new generations all drive replacement. SpaceX’s own model depends on repeated manufacturing and launches. That creates environmental, regulatory, and space-safety questions. It also creates customer risk if launch cadence slows, a vehicle is grounded, or regulators restrict expansion.

A distributed fleet also changes the meaning of quality. A traditional satellite may be judged by the survival of the spacecraft. Starlink must be judged by service: latency, availability, throughput, congestion, coverage, terminal cost, weather performance, mobility support, and resilience to local network outages. A satellite can be “working” and still contribute to a poor user experience if capacity is thin or routing is inefficient. The customer does not buy a satellite. The customer buys the behavior of the constellation.

Software turns that behavior into an adjustable variable. If a beam scheduler improves, the same satellite hardware can serve more users. If a routing decision improves, latency can fall. If onboard fault detection improves, telemetry load can shrink. If a power-management update reduces waste, service capacity can improve. This is why the Linux computers matter economically. They are not just cost centers; they are where physical assets become improvable after launch.

Failure economics also shape hardware choices. Fully radiation-hardened processors can be expensive and slower than commercial alternatives. SpaceX has often been associated with using commercially available components wrapped in redundancy and fault tolerance. In the AMA, engineers described multiple computers voting on outputs and rebooting failed units after radiation faults. That approach accepts that faults happen and designs around them. It is not reckless if the detection, voting, isolation, and recovery systems are strong enough.
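The voting idea can be illustrated with a few lines of code. This is a generic majority-vote sketch, not SpaceX's flight software: the function names and the idea of flagging dissenting units for a reboot are assumptions based only on the AMA description of computers voting on outputs and restarting after faults.

```python
from collections import Counter
from typing import Hashable, List, Optional, Sequence


def majority_vote(outputs: Sequence[Hashable]) -> Optional[Hashable]:
    """Return the value a strict majority agrees on, or None if there is none."""
    if not outputs:
        return None
    value, count = Counter(outputs).most_common(1)[0]
    return value if count > len(outputs) / 2 else None


def dissenting_units(outputs: Sequence[Hashable], agreed: Hashable) -> List[int]:
    """Indices of computers whose output differs from the agreed value."""
    return [i for i, out in enumerate(outputs) if out != agreed]


# Three redundant computers; the third suffered a hypothetical bit flip.
outputs = [42, 42, 17]
agreed = majority_vote(outputs)
to_reboot = dissenting_units(outputs, agreed) if agreed is not None else []
print(agreed, to_reboot)
```

The design choice is to treat disagreement as information. A unit that is outvoted is not trusted, and it becomes a candidate for a restart, while the majority's answer keeps the system moving.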

The risk is correlated failure. Fleet-level resilience works best when failures are independent. A software bug pushed to every satellite, a shared hardware flaw, a ground-system compromise, a spectrum issue, or a design assumption that fails under a solar storm can affect many nodes together. The larger the fleet, the more dangerous common-mode failure becomes. Staged updates, signed software, diverse testing, and rollback paths are defenses against that risk, but not guarantees.
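A staged rollout is one way to keep a bad build from becoming a common-mode failure. The sketch below is a simplified illustration under stated assumptions: the stage fractions, the `is_healthy` callback, and the rule of rolling back every updated node on the first unhealthy result are hypothetical choices, not a description of SpaceX's deployment tooling.

```python
from typing import Callable, Dict, List, Sequence


def staged_rollout(
    fleet: Sequence[str],
    stage_fractions: Sequence[float],
    is_healthy: Callable[[str], bool],
) -> Dict[str, object]:
    """Update the fleet in growing stages; stop and roll back on any unhealthy node."""
    updated: List[str] = []
    for fraction in stage_fractions:
        target = round(len(fleet) * fraction)
        batch = list(fleet[len(updated):target])
        updated.extend(batch)
        if not all(is_healthy(node) for node in batch):
            # Everything touched so far is reverted; the rest never saw the build.
            return {
                "status": "rolled_back",
                "rolled_back": updated,
                "untouched": list(fleet[target:]),
            }
    return {
        "status": "complete",
        "updated": updated,
        "untouched": list(fleet[len(updated):]),
    }


fleet = [f"sat-{i}" for i in range(100)]
stages = (0.01, 0.10, 0.50, 1.00)

# Hypothetical fault: the new build misbehaves on sat-7.
result = staged_rollout(fleet, stages, is_healthy=lambda node: node != "sat-7")
print(result["status"], len(result["rolled_back"]), len(result["untouched"]))
```

In this run the fault surfaces in the second stage, so 10 satellites are rolled back and 90 never receive the build. The protection is only as good as the health check, though: a bug that stays invisible to `is_healthy` would pass every stage.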

Starlink’s economics of failure are therefore both powerful and demanding. The company can lose satellites and still operate. It can improve the service through software. It can replace hardware faster than older space programs. Yet it must maintain discipline at a scale no previous satellite operator has faced. A software-defined constellation is forgiving of isolated failure and unforgiving of sloppy fleet management.

Direct-to-cell pushes the software model into telecom territory

Starlink began as satellite broadband for terminals. Direct-to-cell changes the shape of the problem. Instead of a dedicated Starlink dish with a relatively capable antenna, the network must communicate with ordinary smartphones using cellular spectrum and limited phone antennas. SpaceX sent and received its first direct-to-cell text messages in January 2024 using T-Mobile spectrum through newly launched satellites, and later U.S. services under T-Mobile’s T-Satellite branding promoted texting, select apps, location sharing, and emergency text access where terrestrial towers do not reach.

This is not just a new customer feature. It changes Starlink’s role from satellite ISP toward mobile-network infrastructure. A direct-to-cell satellite behaves, in part, like a moving cell tower in space. Reuters described Starlink direct-to-cell satellites as equipped with cellular modems that function like cell towers, beaming signals directly to smartphones on the ground. Ukraine’s Kyivstar tested the technology in 2025, with regular smartphones exchanging messages in a field trial.

The software burden increases sharply. Cellular networks depend on identity, authentication, roaming, spectrum coordination, emergency routing, device compatibility, messaging protocols, network selection, handover, and power management. A Starlink direct-to-cell system must handle those telecom expectations while satellites move overhead at high speed. The phone cannot grow a large antenna. The satellite has to close the link budget, coordinate with terrestrial carriers, avoid harmful interference, and provide enough capacity for a service that users understand as mobile connectivity.

T-Mobile’s own public page is careful about limits. It says T-Satellite works outdoors where users can see the sky, that satellite service may be delayed, limited, or unavailable, that data speeds are limited, and that some apps may behave differently than on terrestrial cellular networks. That is not a weakness in the disclosure; it is an accurate reflection of physics. Direct-to-cell is not the same as turning every smartphone into a full Starlink broadband terminal. It is a lower-bandwidth, high-coverage extension of mobile networks.

This matters because the Linux-computer story moves into a new domain. The original Starlink network already needed distributed compute for satellite broadband. Direct-to-cell adds telecom-grade control logic and partner integration. It ties satellite software to mobile-network operator systems, device ecosystems, emergency services, and national regulators. SpaceX is no longer just connecting fixed rural homes or ships. It is participating in cellular coverage maps.

The FCC’s 2024 approval of SpaceX and T-Mobile direct-to-phone service was reported as the first satellite-operator and wireless-carrier collaboration approved for supplemental cell coverage from space. The 2026 FCC authorization then expanded SpaceX’s path for Gen2 satellites and direct-to-cell connectivity beyond the United States, subject to market rules and coordination.

The commercial upside is clear: emergency coverage, rural gaps, maritime zones, disaster zones, national security use, roaming, and carrier partnerships. The technical limits are equally clear: capacity per beam, indoor coverage challenges, phone antenna constraints, spectrum sharing, latency variation, battery impact, and regulatory fragmentation. Direct-to-cell will be judged less by launch spectacle than by whether users can send a message when towers are gone.

For Starlink’s software teams, that is a new kind of promise. Broadband customers forgive some satellite quirks if they have no better option. Mobile users expect phones to just work. The gap between those expectations is a software and operations problem as much as a radio problem.

Security is inseparable from the operating system choice

A satellite internet network is a tempting target. It carries user traffic, supports governments and enterprises, reaches remote regions, and can matter during war, disaster, and political crisis. Security cannot be bolted on after launch. SpaceX engineers addressed that directly in the 2020 AMA, saying Starlink used end-to-end encryption for user data, signed software requirements for satellites, gateways, and user terminals, and internal hardening to reduce the value of compromising one part of the system.

Linux cuts both ways in this security picture. On one hand, Linux is widely studied, heavily used, and supported by a broad ecosystem of tools and expertise. SpaceX can inspect and modify source code, strip the system down, add custom drivers, run static and dynamic analysis, and control its update pipeline. On the other hand, a full operating system is complex. Drivers, kernels, network stacks, bootloaders, update tools, and application services create attack surface. The value of Linux depends on ownership discipline.

SpaceX’s signed-software model is central. If an attacker compromises a service but cannot install persistent unauthorized code on satellites, gateways, or terminals, the damage path narrows. Secure boot, code signing, update validation, hardware trust anchors, key management, and rollback protection all become part of the satellite’s safety case. The operating system must support this chain without becoming the weakest link.
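Two of those pieces, update validation and rollback protection, fit in a short sketch. It makes a loud simplification: a real code-signing chain would use asymmetric signatures anchored in hardware, whereas this example substitutes an HMAC with a shared key from Python's standard library so that it stays self-contained. The function names, key, and version scheme are all hypothetical.

```python
import hashlib
import hmac


def sign_image(image: bytes, version: int, key: bytes) -> bytes:
    """Tag an image together with its version (HMAC stand-in for a real signature)."""
    message = version.to_bytes(4, "big") + image
    return hmac.new(key, message, hashlib.sha256).digest()


def accept_update(
    image: bytes, version: int, tag: bytes, key: bytes, installed_version: int
) -> bool:
    """Accept only authentic images that are newer than what is installed."""
    expected = sign_image(image, version, key)
    if not hmac.compare_digest(expected, tag):
        return False  # tampered image, wrong version field, or wrong key
    # Rollback protection: refuse to reinstall an older or identical build.
    return version > installed_version


key = b"demo-key-not-for-real-use"
firmware = b"flight-build-bytes"
tag = sign_image(firmware, version=5, key=key)

print(accept_update(firmware, 5, tag, key, installed_version=4))         # authentic and newer
print(accept_update(firmware + b"!", 5, tag, key, installed_version=4))  # tampered
print(accept_update(firmware, 5, tag, key, installed_version=5))         # not newer
```

Binding the version number into the tagged message matters. Without it, an attacker could replay an old but validly signed build that contains a known flaw.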

Command paths are especially sensitive. A satellite network has to accept legitimate commands from ground systems. It must reject malicious commands. It must recover if communication is interrupted. It must handle emergency procedures. It must prevent lateral movement between user traffic and control systems. A Starlink satellite is not just a network router in space. It is a remotely commanded machine that must never let the public internet become a control surface.

The user terminal is part of that boundary. Starlink terminals sit in homes, vehicles, ships, aircraft, battlefields, and remote sites. They are physically accessible to customers and potentially to attackers. If a terminal could be modified to attack satellites or gateways, the entire network would face risk. SpaceX’s signed-code approach across terminals, gateways, and satellites suggests it treats every hardware class as part of one trust chain.

Security also intersects with update cadence. Frequent updates are useful for patching, improving, and responding to discovered issues. They also create a high-value pipeline attackers may try to compromise. A stolen signing key or poisoned build system could be worse than a single software bug. That is why aerospace software security has to extend beyond the satellite into source control, CI systems, build machines, developer access, code review, key storage, deployment authorization, and operational monitoring.

There is also geopolitical security. Starlink has become visible in conflicts and disasters because it can work when ground infrastructure fails. That value brings scrutiny over service control, availability decisions, lawful access, sanctions compliance, military use, and national dependency on a private network. Those are governance questions, not kernel questions. Yet the governance questions only arise because the software-defined network is powerful enough to matter.

The Linux choice fits into this wider picture by giving SpaceX control. But control is a burden. Running Linux in orbit is not safer because Linux is open source. It is safer only if SpaceX’s implementation, update chain, isolation model, monitoring, and operational culture are strong. Open source gives visibility and adaptability. It does not grant immunity.

Open source in space does not mean off-the-shelf spaceflight

The Starlink Linux story is sometimes told as if SpaceX simply installed a familiar Linux distribution on satellites and called it a day. The engineers said the opposite. They did not use a third-party distribution. They maintained their own kernel and tools. They made small kernel changes and added custom drivers. They used PREEMPT_RT. They ran many hardware architectures. They combined Linux systems with bare-metal microcontrollers.

That is typical of serious embedded Linux work. A company may use the Linux kernel, but the actual product is a tailored operating environment. It includes bootloaders, init systems, filesystems, device trees, drivers, build systems, update mechanisms, logging, watchdogs, recovery partitions, security policies, process priorities, and application frameworks. “Linux” is the foundation, not the finished spacecraft software package.

SpaceX also said it limited outside software beyond the operating system and selected tools. It mentioned Das U-Boot, Buildroot, and musl in the AMA, while stressing that it kept programs simple, slim, and based on code the team understood. That mindset is common in safety-adjacent systems. Every dependency carries unknowns. A library that is harmless in a consumer app may be undesirable in flight software if nobody can explain its failure modes.

Open source matters because it gives engineers the right to inspect, modify, compile, test, and patch the code. It reduces vendor lock-in. It gives access to a deep talent pool. It makes it easier to build custom systems for unusual hardware. It allows targeted changes without waiting for a commercial vendor’s roadmap. Yet open source also demands internal expertise. If a company forks or customizes too much, it owns the maintenance burden.

The ISS Linux migration story shows a related reason. United Space Alliance moved key functions from Windows to Linux partly because it wanted in-house control: the ability to patch, adjust, or adapt. SpaceX’s Starlink work pushes that idea into flight and network infrastructure. In orbit, waiting for a vendor fix is not a strong operating model. The farther the computer is from human hands, the more valuable software sovereignty becomes.

There is also a cultural point. Linux is familiar to engineers. SpaceX can hire people who understand Linux internals, networking, C++, embedded systems, real-time scheduling, Python tooling, and distributed operations. A proprietary or obscure RTOS might reduce some complexity in one area while shrinking the hiring pool and tooling ecosystem. Starlink needed both aerospace discipline and internet-scale software habits. Linux sits at that intersection.

Still, open source should not be romanticized. The kernel’s openness does not reveal SpaceX’s Starlink implementation. The constellation’s most valuable software is proprietary: routing logic, health systems, fleet management, deployment strategy, terminal firmware, security controls, radio scheduling, and operational tooling. Starlink is built on open-source foundations but operated as a closed commercial network.

That combination is now normal in modern infrastructure. The open kernel enables private systems. The public code base supports a proprietary service. In Starlink’s case, the twist is altitude. The same open-source logic that powers cloud servers, routers, phones, cars, and industrial machines now powers a moving network above Earth.

The sky is also a shared infrastructure layer

The Linux computers are not the only things Starlink has put into orbit. It has also put bright, radio-emitting, maneuvering objects into a shared sky. That creates consequences for astronomy, orbital traffic, spectrum use, atmospheric reentry, and public governance. A technically impressive constellation can still create external costs. The same scale that makes Starlink useful also makes it impossible to treat as a private matter alone.

Astronomers noticed Starlink early because the satellites were visible. The International Astronomical Union warned in 2019 that thousands of visible satellites could threaten ground-based astronomy and the appearance of the night sky. The concern is not nostalgia. Modern astronomy depends on long exposures, sensitive detectors, radio quiet, and predictable observing conditions. Satellite streaks, reflected sunlight, and radio emissions can damage data or require mitigation.

SpaceX has worked with scientific bodies on mitigation. The U.S. National Science Foundation said NSF and SpaceX finalized coordination to address radio astronomy protection, with attention to the 10.6–10.7 GHz band, and to meet international radio astronomy protection standards connected to the Gen1 license conditions. SpaceX has also published brightness-mitigation materials and changed satellite designs and orientations. Those steps matter, but they do not end the debate.

Recent research keeps the pressure on. A 2025 Nature paper warned that rapidly growing satellite constellations can affect astronomical images across the electromagnetic spectrum and create operational and mitigation costs for observatories. Starlink is not the only constellation in that future. Amazon Leo, Eutelsat OneWeb, Chinese constellations, direct-to-device systems, and proposed orbital services all add to the shared environment.

The orbital-debris issue is similar. Starlink satellites operate low enough that atmospheric drag can remove failed spacecraft over time, especially if satellites enter a high-drag state. That is a meaningful safety choice. Yet sheer numbers raise coordination demands. More objects require more tracking, more avoidance maneuvers, more reliable deorbit behavior, and more international coordination. The FCC’s 2026 approval of added Gen2 satellites came with conditions and did not approve the full SpaceX request.

There is also a governance issue around dependency. Starlink now supports consumers, airlines, ships, militaries, disaster response, remote industries, and countries with damaged infrastructure. The network’s software decisions, service policies, pricing, and coverage controls can have public consequences. A private company can move faster than government satellite programs, but public reliance on private orbital infrastructure raises questions that pure engineering cannot answer.

None of this cancels Starlink’s value. For many users, it is the first broadband connection that works well. For disaster zones, it can restore communication. For ships, aircraft, remote clinics, farms, and field teams, it can be transformative in the ordinary, practical sense of making work possible. Yet the costs must be part of the accounting. A network in low Earth orbit uses a shared physical environment, not just private capital.

The Linux story and the sky story belong together. Software lets SpaceX operate at scale. Scale creates public impacts. The better the software becomes, the more important governance becomes.

Starlink’s Linux story is a lesson for edge computing

Starlink is often described as satellite internet, but it is also one of the most extreme examples of edge computing. Edge computing usually means moving computation closer to where data is produced or used: factories, vehicles, cell towers, ships, cameras, medical devices, or industrial sensors. Starlink moves computation to the orbital edge. The network edge is not a cabinet near a cell tower. It is a satellite passing hundreds of kilometers above the user.

That makes latency, autonomy, and local decision-making central. A satellite cannot wait for the ground to decide every small action. It needs onboard control, onboard health monitoring, local fault response, local telemetry filtering, and enough autonomy to preserve safety during communication gaps. Linux nodes and microcontrollers create that edge intelligence. Ground systems remain critical, but the satellite must be competent on its own.
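Local telemetry filtering is the easiest of those ideas to show in code. The sketch below is a generic deadband filter, offered as one plausible technique and not as Starlink's actual method: a node reports a reading only when it has moved by more than a threshold since the last reported value. The sample data and threshold are invented.

```python
from typing import Iterable, Iterator, Optional, Tuple

Sample = Tuple[float, float]  # (timestamp_seconds, value)


def deadband_filter(samples: Iterable[Sample], deadband: float) -> Iterator[Sample]:
    """Yield a sample only when it differs from the last reported value by more than `deadband`."""
    last_reported: Optional[float] = None
    for timestamp, value in samples:
        if last_reported is None or abs(value - last_reported) > deadband:
            last_reported = value
            yield timestamp, value


# Hypothetical temperature readings, one per second.
readings = [(0, 20.0), (1, 20.1), (2, 20.2), (3, 21.5), (4, 21.6)]
reported = list(deadband_filter(readings, deadband=0.5))
print(reported)
```

Five readings become two reports, and the jump at the fourth second still gets through. The trade-off is that slow drift smaller than the deadband is only noticed once it accumulates, so the threshold becomes a safety parameter in its own right.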

Starlink’s software model also shows where edge computing is going. Edge devices are no longer simple endpoints. They are updateable, monitored, security-sensitive, and part of a fleet. A modern car, wind turbine, robot, cell site, or satellite needs software rollout discipline. It needs signed updates. It needs telemetry. It needs staged deployment. It needs rollback plans. It needs hardware abstraction. It needs local safety behavior. Starlink is edge computing with orbital mechanics added.

The comparison also exposes the hardest edge problem: connectivity is intermittent or variable exactly where autonomy is most needed. Starlink satellites are designed for connectivity, but their command paths, gateway links, and routing states still change. A direct-to-cell satellite may pass over a phone with limited link margin. A user terminal may face obstruction. A gateway may not be visible. Edge systems must degrade gracefully.

Starlink’s weekly update culture offers another edge lesson. Hardware deployed into hard-to-reach places should not be frozen unless the safety case requires it. If software can safely improve performance, patch security issues, and adapt to new operating conditions, the product gets better after deployment. But updateability without discipline is dangerous. The edge needs both agility and restraint.

The Linux choice fits because Linux has become the default substrate for much edge infrastructure. It runs gateways, routers, embedded devices, industrial systems, vehicles, robots, and appliances. Starlink extends that pattern into space. The operating system’s strength is not glamour; it is its ability to be shaped into many specialized roles by teams that know what they are doing.

Starlink also shows the value of fleet learning. A single edge device teaches little. Thousands teach patterns. A satellite fleet reveals rare failures, regional performance differences, environmental effects, and hardware aging. The more devices a company operates, the more valuable its operational data becomes. That data can guide hardware generations, software updates, service planning, and customer support.

The danger is that edge fleets become opaque private infrastructure. Users may depend on them without understanding how decisions are made. Regulators may struggle to keep up. Researchers may lack access to data. Security failures may propagate quickly. Fleet intelligence creates fleet power. Starlink is a case study in both.

The 32,000 Linux computers were therefore not just a fun number. They were an early marker of a broader shift: compute is moving into every layer of infrastructure, including layers that used to seem physical, remote, and slow to change.

The useful lesson is not the number

The old headline still works because it is vivid. SpaceX launched 32,000 Linux computers into space. It sounds impossible and concrete at the same time. Yet the number is no longer the main lesson. Starlink has grown far past the 2020 snapshot. The current fleet is larger, the satellite generations are different, direct-to-cell is active, regulators have approved further Gen2 expansion, and the network has become part of global connectivity politics.

The useful lesson is architectural. Starlink turned satellites into software-managed fleet infrastructure. Linux made that easier, not because Linux is magic, but because it is controllable, adaptable, familiar, and compatible with a disciplined embedded-systems workflow. PREEMPT_RT helped SpaceX shape timing behavior. Custom drivers connected the kernel to hardware. Bare-metal microcontrollers handled narrower tasks. Signed updates and telemetry made fleet operation possible. Launch cadence made replacement and growth plausible.

That architecture also explains Starlink’s public tension. It is impressive because it works at scale. It is controversial because it works at scale. A constellation of thousands of satellites can bring broadband to places that fiber and towers do not reach. It can also crowd orbits, affect astronomy, concentrate infrastructure power, and force regulators to catch up with technical reality. The same operating model that makes Starlink agile makes it consequential.

There is a temptation to make the Linux story tribal: Linux beats Windows, open source conquers space, hackers inherit orbit. That framing is too small. The ISS Linux migration showed the value of control and reliability in space operations, but Starlink’s flight architecture is not a desktop operating-system rivalry. It is a demonstration that modern infrastructure is increasingly software-defined, from rockets to satellites to phones to emergency networks.

The stronger reading is less flashy: software is now a primary aerospace material. Aluminum, composite structures, krypton or argon propellant, phased-array antennas, solar panels, and laser links still matter. But software determines how those parts behave together, how failures are survived, how capacity improves, how security holds, how customers experience the service, and how fast the system evolves.

The Starlink Linux computers are not interesting because they are computers in space. Space has had computers for decades. They are interesting because of their number, their updateability, their placement inside a commercial internet service, and their connection to a manufacturing-and-launch system that can keep adding more. They mark the point where orbital infrastructure started to look less like a handful of exquisite machines and more like a planetary network.

The best way to understand the 32,000 figure is to treat it as a fossil from Starlink’s early scale-up. It preserves the shape of the system at a moment when the public had not yet grasped what SpaceX was building. Today, the actual number of Linux nodes in orbit has not been published, and responsible analysis should say so. But the direction is clear. Low Earth orbit has become a compute environment, not merely a place to park satellites.

That is the real story behind SpaceX’s Linux constellation. The computers were never the punchline. They were the evidence.

Questions readers ask about SpaceX’s Linux computers in orbit

Yes, but the number refers to a 2020 snapshot. SpaceX engineers said each 60-satellite Starlink launch carried more than 4,000 Linux computers and that the constellation already had more than 30,000 Linux nodes in orbit. Reporting rounded the figure to about 32,000 after several 60-satellite launches. It should not be treated as a current verified count.
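The arithmetic behind the headline is easy to check. The short sketch below assumes roughly 480 satellites had been launched at the time of the AMA and treats "more than 4,000" Linux computers per launch as a lower bound; the variable names are illustrative only.

```python
# Figures quoted in the 2020 AMA and in contemporary reporting.
SATELLITES_PER_LAUNCH = 60
LINUX_NODES_PER_LAUNCH = 4_000   # "more than 4,000", so a lower bound
SATELLITES_LAUNCHED_2020 = 480   # approximate fleet size at the time (assumption)

launches = SATELLITES_LAUNCHED_2020 // SATELLITES_PER_LAUNCH
nodes_lower_bound = launches * LINUX_NODES_PER_LAUNCH
nodes_per_satellite = LINUX_NODES_PER_LAUNCH / SATELLITES_PER_LAUNCH

print(launches)                    # 8
print(nodes_lower_bound)           # 32000
print(round(nodes_per_satellite))  # 67
```

Eight launches at 4,000 nodes each gives the 32,000 figure, which works out to roughly 67 Linux computers per satellite for that hardware generation. None of this says anything about later generations.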

SpaceX engineers said onboard computers ran Linux with the PREEMPT_RT patch, while other onboard devices were microcontrollers running bare-metal code. The architecture is mixed, with Linux used where a richer operating environment makes sense and microcontrollers used for narrower low-level tasks.

No public evidence says that it does. SpaceX engineers said they did not use a third-party distribution and maintained their own copy of the kernel and associated tools, with custom drivers for their hardware.

A Starlink satellite is not a single-purpose radio box. It has many subsystems: communications payloads, antennas, routing behavior, power, thermal control, attitude control, propulsion, diagnostics, telemetry, security, update handling, and safety logic. Distributing compute across subsystem roles can be more practical than relying on one central computer.

PREEMPT_RT is a real-time Linux patch set that improves kernel preemption and timing behavior. SpaceX said it used PREEMPT_RT to get better real-time performance. It does not make Linux perfect for every hard real-time task, but it helps when engineers need more predictable response times.

Linux can be safe enough when it is part of a disciplined system with testing, redundancy, process control, custom drivers, telemetry, signed updates, and fault handling. Linux alone does not make a spacecraft reliable. The surrounding architecture does.

Only as an analogy. SpaceX engineers said Starlink satellites should be thought of more like servers in a data center than one-of-a-kind spacecraft because they are operated as a fleet with staged updates, telemetry, and system-level redundancy. They are still spacecraft with propulsion, radios, orbital constraints, and safety requirements.

In 2020, SpaceX said it tended to update software across Starlink satellites about once a week, with smaller test deployments also happening. Ground services were updated even more often. The current cadence has not been publicly restated in the same detail.

SpaceX described a staged deployment model in which test builds could be sent to a subset of satellites, compared with the rest of the fleet, paused, tweaked, or rolled back if needed. That model is similar to modern large-scale software operations, adapted to spacecraft risk.
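A staged rollout of this kind can be sketched in a few lines. This is a generic canary pattern, not SpaceX's actual tooling: the `Satellite` class, the `error_rate` health metric, the 20 percent canary fraction, and the tolerance value are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Satellite:
    sat_id: str
    build: str
    error_rate: float  # hypothetical health metric derived from telemetry


def choose_canaries(fleet, fraction):
    """Pick a deterministic subset of the fleet to receive the test build."""
    count = max(1, int(len(fleet) * fraction))
    return sorted(fleet, key=lambda s: s.sat_id)[:count]


def evaluate_rollout(fleet, canaries, tolerance=0.01):
    """Compare canary health with the rest of the fleet and pick an action."""
    canary_ids = {s.sat_id for s in canaries}
    control = [s for s in fleet if s.sat_id not in canary_ids]
    canary_rate = mean(s.error_rate for s in canaries)
    control_rate = mean(s.error_rate for s in control)
    if canary_rate > control_rate + tolerance:
        return "rollback"
    return "promote"


# Ten satellites on build v1, two of which receive the test build v2.
fleet = [Satellite(f"sat-{i:03d}", "v1", 0.02) for i in range(10)]
canaries = choose_canaries(fleet, 0.2)
for sat in canaries:
    sat.build = "v2"
    sat.error_rate = 0.08  # pretend the test build misbehaves

print(evaluate_rollout(fleet, canaries))  # rollback
```

The point of the pattern is the comparison group: the untouched satellites act as a control, so a regression shows up as a difference between two populations instead of a judgment call about one spacecraft.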

Telemetry lets SpaceX see satellite health, timing behavior, failures, update results, link quality, and fleet patterns. In 2020, SpaceX said Starlink was already generating more than 5 TB of data per day and was working to reduce unnecessary telemetry through onboard detection.
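Onboard detection as a way to cut telemetry volume might look like the sketch below. It assumes a simple fixed-threshold rule, which is an illustration and not a description of SpaceX's method; the function name, the temperature readings, and the limits are hypothetical.

```python
def filter_telemetry(samples, low, high):
    """Keep out-of-range samples in full and summarize the nominal ones."""
    anomalies = [s for s in samples if not (low <= s <= high)]
    nominal = [s for s in samples if low <= s <= high]
    summary = {
        "count": len(samples),
        "nominal_count": len(nominal),
        "nominal_min": min(nominal) if nominal else None,
        "nominal_max": max(nominal) if nominal else None,
    }
    return summary, anomalies


# Hypothetical temperature readings in degrees Celsius.
readings = [21.0, 21.4, 20.9, 58.2, 21.1]
summary, anomalies = filter_telemetry(readings, low=0.0, high=40.0)

print(summary["nominal_count"])  # 4
print(anomalies)                 # [58.2]
```

Only the summary and the one anomalous reading would need to leave the satellite, which is the trade the answer above describes: less raw data on the downlink, with the interesting events preserved.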

Yes, up to a point. Starlink relies on constellation-level fault tolerance, with multiple satellites in view and a large fleet that can absorb individual failures. Individual satellites still need safe failure behavior, especially deorbit or high-drag modes.

SpaceX has not fully disclosed all Starlink hardware. In the AMA, engineers described handling radiation-induced errors through redundant computers and voting, with failed computers rebooted and reincorporated where possible. That suggests a system-level radiation-tolerance strategy, not simple dependence on one hardened processor.
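The redundancy-and-voting idea can be illustrated with a generic majority vote across redundant computers. The AMA did not spell out the exact scheme, so the function below is a textbook sketch under that assumption, with hypothetical names and values.

```python
from collections import Counter


def vote(outputs):
    """Return (majority value, indexes of dissenting computers).

    If no value has a strict majority, return (None, all indexes).
    """
    value, votes = Counter(outputs).most_common(1)[0]
    if votes <= len(outputs) // 2:
        return None, list(range(len(outputs)))
    dissenters = [i for i, out in enumerate(outputs) if out != value]
    return value, dissenters


# Three redundant computers; the third suffers a radiation-induced bit flip.
result, to_reboot = vote([42, 42, 41])
print(result)     # 42
print(to_reboot)  # [2]
```

The dissenting computer is the one that would be rebooted and, if it comes back healthy, returned to the voting pool.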

Not as a confirmed fact. The satellite fleet has grown by more than an order of magnitude since 2020, so the number of onboard Linux nodes is likely much higher. But hardware generations have changed, and SpaceX has not published a current Linux-node count. Any exact modern figure would be speculation.

Linux likely supports many onboard software functions, diagnostics, networking services, update mechanisms, and hardware-facing processes. Internet performance also depends on antennas, spectrum, gateways, satellite density, routing, user terminals, weather, congestion, and ground infrastructure.

Starlink satellite internet uses dedicated terminals designed to communicate with Starlink satellites. Direct-to-cell aims to connect ordinary smartphones directly to specially equipped satellites using mobile-network spectrum. Direct-to-cell is lower-capacity and more constrained, but valuable for dead zones and emergencies.

A normal Starlink dish has a much stronger antenna system than a smartphone. Direct-to-cell must communicate with ordinary phones, coordinate with mobile operators, share spectrum safely, support emergency and messaging functions, and manage satellite motion like a moving cell tower.

Yes. The operating system and update model are part of the security boundary. SpaceX said Starlink hardware was designed to run only SpaceX-signed software, and that user data used end-to-end encryption. The security of the fleet depends on signed updates, isolation, monitoring, and command-path protection.
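The "run only signed software" rule amounts to an authenticity gate in front of every install. The sketch below uses an HMAC tag from the Python standard library as a symmetric stand-in; a real fleet would more plausibly use asymmetric signatures, and SpaceX has not published its mechanism. The key, image bytes, and function names are hypothetical.

```python
import hashlib
import hmac


def sign_image(key: bytes, image: bytes) -> bytes:
    """Compute an authentication tag over a software image."""
    return hmac.new(key, image, hashlib.sha256).digest()


def accept_update(key: bytes, image: bytes, tag: bytes) -> bool:
    """Accept the image only if its tag verifies (constant-time compare)."""
    expected = sign_image(key, image)
    return hmac.compare_digest(expected, tag)


key = b"hypothetical-fleet-key"
image = b"flight-software-build-123"
tag = sign_image(key, image)

print(accept_update(key, image, tag))         # True
print(accept_update(key, image + b"!", tag))  # False
```

A single altered byte in the image is enough to fail verification, which is why the update path and the signing keys are part of the security boundary.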

Large satellite constellations can reflect sunlight, create trails in telescope images, emit radio signals, and increase operational burdens for observatories. SpaceX has worked on mitigations, but the scale of Starlink and other planned constellations keeps the issue active.

The main lesson is that modern satellite internet is software-defined infrastructure. Starlink’s service depends on thousands of updateable, monitored, networked computers in orbit and on the ground. The number is memorable, but the architecture is the real story.

Author:

Jan Bielik

CEO & Founder of Webiano Digital & Marketing Agency

This article is an original analysis supported by the sources cited below.

We are the SpaceX software team, ask us anything!

The original SpaceX software AMA where engineers discussed Starlink’s Linux nodes, PREEMPT_RT, software updates, telemetry, redundancy, security, and flight-software practices.

SpaceX engineers flash some facts about Starlink satellites

GeekWire’s report summarizing the 2020 SpaceX AMA, including the Linux computer count, telemetry volume, weekly update cadence, and early Starlink constellation context.

SpaceX software lead reveals Starlink details in Reddit AMA

Via Satellite’s coverage of Matt Monson’s Starlink software comments, including the 4,000 Linux computers per launch claim and the constellation-level fault-tolerance model.

Real-time preemption

Linux kernel documentation explaining PREEMPT_RT concepts, including scheduling, priority inheritance, threaded interrupts, and how real-time kernels differ from ordinary configurations.

Linux Foundation training prepares the International Space Station for Linux migration

Linux Foundation case study describing why ISS operations migrated key laptop functions to Linux for stability, reliability, patch control, and in-house adaptability.

International Space Station making laptop migration from Windows XP to Debian 6

Phys.org’s report on the ISS Debian migration, with background on the operational reasons for using Linux in a remote space environment.

SpaceX launches 10,000th active Starlink satellite in low Earth orbit

Space.com’s March 2026 report on Starlink passing 10,000 active satellites, including current constellation context and deorbited satellite counts.

Find Starlink satellites

Live Starlink constellation tracker used for current active satellite counts, total launched counts, decay counts, hardware-type labels, and recent launch status.

FCC approves SpaceX plan to deploy an additional 7,500 Starlink satellites

Reuters report on the FCC’s January 2026 approval of an additional 7,500 Gen2 Starlink satellites and the regulatory limits around SpaceX’s larger request.

FCC gives SpaceX approval for 7,500 more Starlink Gen2 satellites

Via Satellite’s coverage of the FCC’s Gen2 authorization, including frequency expansion, orbital-shell changes, and direct-to-cell implications.

600 rocket landings! SpaceX notches another milestone during Sunday Starlink launch

Space.com report connecting Falcon 9 reuse milestones to Starlink deployment cadence and the continued growth of the constellation.

SpaceX flies 25 Starlink satellites to orbit on its 50th Falcon 9 launch of the year

Spaceflight Now launch coverage used for recent Starlink deployment context and SpaceX’s 2026 launch cadence.

Starlink specifications

Starlink’s official specification document used for published latency ranges and customer-facing performance claims.

Starlink technology

Official Starlink technology page describing the low Earth orbit design behind Starlink broadband and the latency advantage over geostationary satellite systems.

Starlink progress

Official Starlink progress page used for SpaceX’s public framing of customer growth, deployment progress, and service expansion.

SpaceX sends first text messages via direct-to-cell Starlink satellites

Via Satellite report on SpaceX sending and receiving its first direct-to-cell text messages through newly launched Starlink satellites.

T-Satellite with Starlink direct to cell satellite phone service

T-Mobile’s public service page explaining T-Satellite with Starlink, compatible functions, service limits, emergency texting, coverage notes, and smartphone requirements.

Ukraine successfully tests Starlink’s direct-to-cell technology

Reuters report on Kyivstar’s direct-to-cell field test in Ukraine and the role of satellite-to-phone service where terrestrial networks are unavailable.

Starlink’s direct-to-cell satellite service receives FCC approval

The Verge report on FCC approval for SpaceX and T-Mobile supplemental cellular coverage from space, including limits and unresolved power questions.