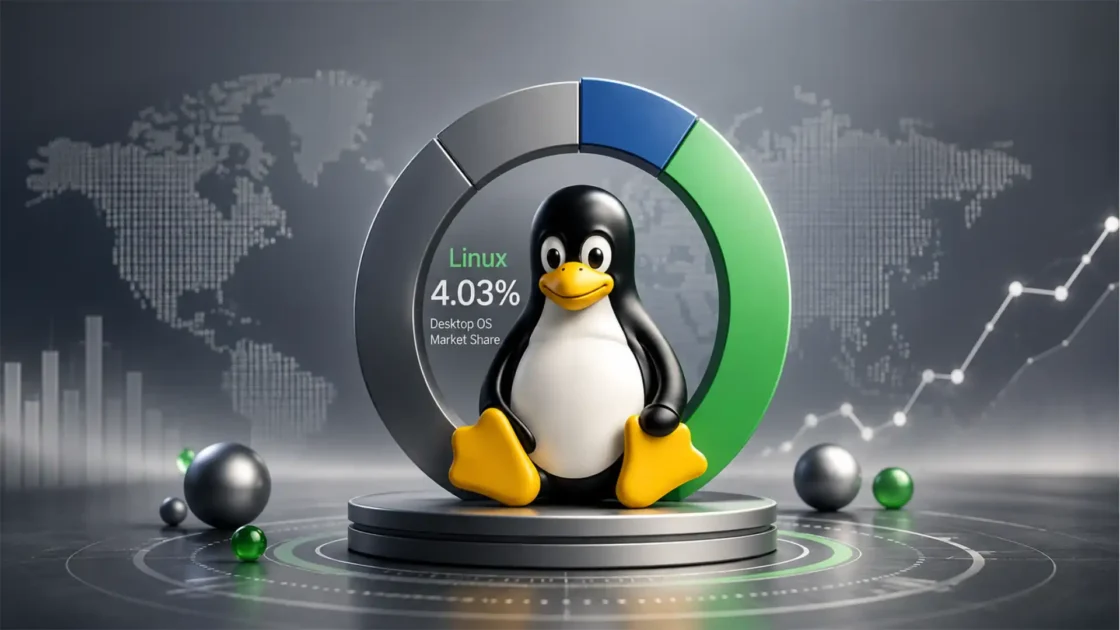

Windows is still the mainstream desktop operating system. Linux is still the default operating system behind a huge share of servers, cloud workloads, containers, and shell-driven engineering work. That pattern has lasted because the two systems were shaped by different pressures and rewarded by different markets. Windows had to win the PC sitting in front of an accountant, a designer, a gamer, a school administrator, or a help-desk-managed employee. Linux had to win the machine in a rack, a VM in a cloud region, a container host, a CI runner, or a box that no one touches physically for three years. StatCounter’s March 2026 desktop numbers still show Windows at 60.8% and Linux at 3.16%, while W3Techs shows Linux on 60.8% of websites with a known server operating system and Windows on 8.9%. That is not a close contest in either direction.

A lot of bad writing on this topic falls into one of two traps. The first turns Windows into a punchline, as if desktop dominance came from inertia alone. The second turns Linux into a hobbyist fetish, as if the server world somehow forgot to move on. Neither view survives contact with the way real systems are bought, deployed, managed, and used. Windows keeps winning the desktop because desktop computing is an ecosystem problem. Linux keeps winning the shell and the server because infrastructure is an operations problem. Those are different contests, and each operating system was rewarded for excelling in a different one.

The desktop rewards broad application compatibility, predictable hardware support, polished onboarding, commercial vendor backing, and an environment that ordinary users can survive without thinking about the operating system itself. The server room rewards scriptability, transparency, package-driven maintenance, remote control, low overhead, composable tooling, and the freedom to shape the machine around the workload instead of shaping the workload around the machine. By those standards, the current split makes sense. Microsoft’s own product strategy says as much without saying it directly: it keeps investing heavily in Windows for managed endpoints, security, identity, and productivity, while also building WSL so Windows users can run Linux tools locally because that Linux userland is too valuable to ignore.

The interesting question is not whether one operating system is universally better. It is why each one became strong where it did, and why that balance has proven stubborn even after both platforms improved in each other’s territory. Linux desktops are far better than they were a decade ago. Windows command-line work is far better than it used to be. Yet the broad market map remains familiar. That is what deserves a serious answer.

Desktop computing is ruled by friction, not ideology

On the desktop, people talk about freedom and customization until the printer fails, the payroll software refuses to install, the webcam utility crashes, the CAD plugin is missing, or the company VPN support document assumes a Windows settings menu that is not there. Desktop operating systems are judged by friction long before they are judged by architecture. That is the first reason Windows still leads.

A mainstream desktop has to carry an ugly mix of tasks. It has to run office software, line-of-business software, browsers, VPN clients, device drivers, audio tools, video conferencing apps, security agents, browser plugins, certificate middleware, remote support tools, and often one or two ancient pieces of software the organization never managed to retire. Windows has spent decades becoming the platform that vendors assume they must support. Once that assumption is in place, it reproduces itself. Software vendors target Windows first because that is where the users are. Users stay on Windows because that is where the software is. Hardware vendors certify for Windows because that is where support tickets are most likely to come from. IT departments standardize on Windows because it reduces variation. Then more software vendors build for the platform IT departments already standardize on.

This is not glamorous, but it is decisive. Adobe’s current Creative Cloud requirements are still written around supported Windows and macOS environments, not Linux desktop deployments. Autodesk’s current AutoCAD support pages likewise point users toward Windows and Mac editions instead of a normal Linux desktop workflow. For a huge part of the professional desktop market, that settles the debate before it begins. A replacement is not the same thing as a supported standard. The GIMP is not Photoshop for an agency built around Adobe files, plugins, and training. LibreOffice is not a drop-in answer for every organization built around specific Microsoft Office behaviors, macros, add-ins, or compliance rules. Alternatives can be excellent and still lose the real procurement decision.

Windows also owns the “works out of the box” expectation more consistently in mainstream business hardware. That does not mean Linux hardware support is poor. On common laptops and desktops, Linux support can be excellent. It means something narrower and more important in corporate life: Windows is still the platform most OEMs, accessory vendors, and enterprise software providers validate first, document first, and train support staff around first. A docking station that technically functions under Linux but lacks a clean vendor utility, tested firmware workflow, or official support matrix is not equivalent to a fully supported Windows deployment in the eyes of a purchasing team.

This is why desktop market share moves more slowly than technical merit might suggest. Desktop computing is full of dependencies that are not visible in online arguments. Human training is one dependency. Help-desk scripts are another. Procurement rules are another. Vendor certification is another. The person choosing the company desktop image is often not deciding between abstract philosophies. They are deciding between predictable support burden and unpredictable support burden. Windows keeps winning those rooms because the desktop is where standardization beats purity.

Windows became the business desktop before Linux became a serious desktop contender

Linux did not lose the desktop because it was technically incapable of drawing windows, managing files, or connecting to networks. It lost because Windows had already consolidated the commercial desktop world before Linux desktop distributions matured into something ordinary workers could use without a local expert. By the time Linux desktops became genuinely comfortable for daily office tasks, the market had already hardened around Windows and, in some segments, macOS.

That timing matters. A platform does not need to be perfect if it becomes the default before alternatives become administratively safe. Windows arrived in offices with a software ecosystem that kept thickening. Word processors, spreadsheets, accounting suites, ERP clients, design software, email software, and department-specific applications kept targeting Windows because that was where organizations already were. Then Microsoft added the surrounding advantages: domain integration, enterprise identity alignment, management tooling, security policy control, and a vendor story that made CIOs and desktop support managers comfortable. Microsoft Intune now sits squarely in that tradition, with device enrollment, policy management, application deployment, update controls, and compliance workflows designed for large endpoint fleets. Windows Hello for Business adds enterprise-grade authentication tied to device attestation, certificates, conditional access, and policy controls. On a managed desktop, those things are not side notes. They are central.

Linux, by contrast, emerged from a culture that prized openness, flexibility, modularity, and control. Those are powerful virtues. They are also virtues that serve engineers better than procurement committees. For a long time, Linux desktop adoption required a tolerance for variation in package management, desktop environments, hardware quirks, vendor neglect, and self-support. That was acceptable to developers, enthusiasts, universities, and technical teams. It was less acceptable to the broad middle of office computing.

Even now, when desktop Linux is more polished than its reputation suggests, the inherited gap still matters. An enterprise does not migrate thousands of users because the alternative is now “good enough.” It migrates when the new platform reduces cost, risk, or dependence enough to justify the disruption. In many desktop environments, that calculation still favors Windows because the existing software and device stack is already aligned with it. The desktop is conservative for a reason: changing the base platform changes every assumption sitting on top of it.

This also explains why Linux desktop enthusiasm often overstates mainstream readiness by measuring the wrong things. A power user may care that Fedora, Ubuntu, Debian, or openSUSE can be configured beautifully and run fast for years. An operations manager may care instead that the payroll vendor supports only Windows, the badge printer middleware supports only Windows, the legal review plugin supports only Windows, and the training department already knows how to support Windows. In that room, elegance loses to institutional gravity.

Consumer software and specialist software still lean heavily toward Windows

The cleanest proof of desktop reality is not found in market-share graphs alone. It is found in which applications are treated as table stakes by users who are not trying to make a statement with their operating system choice. The desktop belongs to the platform that minimizes compromise in commercial software. Windows still does that for a large share of the market.

Creative software is the obvious case. Adobe’s own requirement pages spell out Windows and macOS support for Creative Cloud desktop applications. That matters because creative work is not just about opening a file. It is about exact compatibility, shared workflows, client expectations, plugin support, training, and supportability. The same logic applies to engineering and drafting tools. Autodesk’s current support pages for AutoCAD focus on Windows and Mac system requirements rather than presenting Linux as a regular, first-class desktop target. That is enough to lock large swaths of architecture, engineering, design, and education into non-Linux desktop workflows.

The same pattern appears in less glamorous sectors. Medical software, specialist scanners, tax software, legal review utilities, insurance platforms, industrial control front ends, exam software, retail back-office tools, and department-written legacy applications often assume Windows by default. Some run in browsers. Many do not. Some could be virtualized or containerized. Many are not. The desktop market is full of software that never makes headlines but quietly decides what organizations can and cannot deploy.

Linux users often answer this by pointing to alternatives, Wine, web versions, virtual machines, or remote desktops. Those can all work. Many are excellent. None of that changes the procurement truth. Needing a workaround means the platform is not the default winner for that workload. Ordinary organizations buy the path with the fewest exceptions, not the path with the most imaginative workaround culture.

This is where Windows’ desktop strength becomes self-reinforcing. When vendors know Windows is the most common target, they allocate QA budgets there. They train support teams there. They certify there. They write installer logic there. They publish troubleshooting guides there. Then buyers interpret that vendor behavior as evidence that Windows is the safer choice, which feeds the next procurement cycle.

Linux can and does win on the desktop in narrower bands. It does very well for developers, privacy-focused users, technically confident professionals, schools with well-defined deployments, and organizations that can standardize on web-based tools. It can extend the life of older hardware. It can reduce licensing exposure. It can give skilled users a more controllable machine. But at the scale of the whole desktop market, the platform with the deepest commercial software assumption still has the structural advantage, and that remains Windows.

Gaming tells the truth about desktop convenience

Gaming is useful in this discussion because it strips away a lot of rhetorical decoration. Players care less about operating-system philosophy than about whether the game launches, performs well, updates cleanly, supports anti-cheat, and behaves after a new GPU driver lands. By that standard, Windows is still the easier gaming desktop, even though Linux gaming has improved dramatically.

Valve’s work with Proton changed the Linux gaming story more than any desktop Linux advocacy campaign ever could. Proton exists to let Windows-exclusive games run on Linux through Steam. Valve’s own repository states that plainly. Steamworks documentation for Steam Deck and Proton also documents anti-cheat support, including support paths for Easy Anti-Cheat and BattlEye. That is real progress, and it matters. Linux gaming in 2026 is not a fringe experiment. It is a practical option for many players, especially on Steam-centered libraries.

Still, a practical option is not the same as the default choice. Steam’s hardware and software survey continues to show a Windows-heavy player base. Game studios and middleware vendors notice that. So do GPU vendors, anti-cheat providers, launcher developers, and support teams. The easiest path for broad compatibility remains Windows because most PC games are still built, tested, and supported around the Windows stack first. DirectX remains a core Windows gaming pillar. Linux often reaches the same destination by translation, compatibility layers, or developer opt-in. Even where the result is impressive, the route is still more conditional.

Anti-cheat is the part that exposes the remaining difference. Valve documents that Proton supports common anti-cheat systems, but in some cases the game developer still must enable or configure support for their build. Epic’s own announcement on Easy Anti-Cheat support for Linux, Mac, and Steam Deck confirms that support exists, but again, support at the platform level does not guarantee every publisher will ship it. That means Linux gaming still lives partly at the mercy of decisions made elsewhere in the toolchain. Windows does not face that same extra layer nearly as often.

That matters because gaming is not just a hobby category. It is one of the strongest tests of a mass-market desktop operating system. Games are messy software. They stress drivers, launchers, overlays, anti-cheat, input devices, audio stacks, and online services. The platform that absorbs that mess with the least user effort gets credit as the easier desktop. Windows still earns that credit more often.

The interesting change is not that Linux has caught up completely. It has not. The interesting change is that Linux no longer loses instantly. Steam Deck, Proton, and better driver support have made Linux a serious gaming platform for a meaningful slice of users. But the broad default remains Windows because “usually works” is not as strong a desktop selling point as “expected to work.”

A compact view of where each platform feels native

| Workload | Windows feels native when | Linux feels native when |
|---|---|---|
| Office desktop | You need broad vendor support, common peripherals, mainstream enterprise apps, and standard help-desk workflows | You can live mostly in web apps or open tools and want tighter control |
| Gaming desktop | You want the widest compatibility with the least effort | You are comfortable with Proton and accept occasional exceptions |
| Developer workstation | You depend on Windows-only desktop software but still need Linux tools through WSL | Your daily work already revolves around terminals, package managers, and Unix tooling |
| Web and application server | You run Windows-specific workloads or Microsoft-heavy server products | You run web stacks, containers, automation, or cloud-native infrastructure |

The table is not a moral ranking. It is a map of where each operating system matches the assumptions of the job best.

Linux fits the shell because the shell is not an accessory there

A command line on Windows used to feel like a maintenance hatch. A command line on Linux feels like part of the operating system’s nervous system. That difference is one of the main reasons Linux remains dominant in server and engineering environments.

The Linux way of working grew out of Unix traditions built around pipes, composable text tools, permissions, processes, services, remote sessions, and configuration as files. You can feel that ancestry in normal Linux administration. Shell commands do not sit awkwardly beside the system. They expose it. OpenSSH remains the standard way to reach remote machines securely. systemd, regardless of one’s personal taste, gives a unified model for service and boot management across major distributions. Package managers and repositories make it normal to install, update, and audit software from the shell instead of downloading opaque installers from vendor websites. Linux does not merely permit shell-centric work. It assumes it.
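The pipe-and-filter style described above can be sketched in Python with generators. This is an analogy, not how a shell implements pipes: each function plays the role of one small tool, and the function names (`grep`, `cut_field`, `count_unique`) and the sample log lines are assumptions invented for illustration.

```python
from __future__ import annotations

from collections import Counter
from typing import Iterable, Iterator


def grep(lines: Iterable[str], needle: str) -> Iterator[str]:
    """Pass through only the lines that contain `needle`."""
    return (line for line in lines if needle in line)


def cut_field(lines: Iterable[str], index: int) -> Iterator[str]:
    """Emit one whitespace-separated field (0-based) from each line."""
    for line in lines:
        fields = line.split()
        if index < len(fields):
            yield fields[index]


def count_unique(items: Iterable[str]) -> list[tuple[str, int]]:
    """Count occurrences, most frequent first."""
    return Counter(items).most_common()


# Hypothetical access-log lines, made up for this example.
LOG = [
    "10.0.0.1 GET /index.html 200",
    "10.0.0.2 GET /missing 404",
    "10.0.0.1 GET /gone 404",
    "10.0.0.1 GET /old 404",
]

# Each stage consumes the previous stage's output, as in a shell pipeline.
top_404_clients = count_unique(cut_field(grep(LOG, " 404"), 0))
print(top_404_clients)  # [('10.0.0.1', 2), ('10.0.0.2', 1)]
```

The point is the shape rather than the tools: small stages that each do one thing, connected by a plain stream of text, can be rearranged without any stage knowing about the others.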

That assumption matters at scale. A server admin does not want to click through fifty settings pages on a remote system. A platform engineer does not want to configure 300 machines by hand. A DevOps team does not want production logic hidden in local GUI state. They want commands, manifests, packages, logs, services, environment variables, and files that can be inspected, versioned, reproduced, and automated. Linux gives them exactly that style of control.

Windows has made enormous progress here. PowerShell is a serious automation environment, not a toy shell. Microsoft’s own documentation reflects that maturity. WSL goes even further by letting developers run a real GNU/Linux environment, including Linux tools and applications, directly on Windows without a traditional virtual machine or dual boot arrangement. That is one of Microsoft’s smartest platform decisions in years. It has made Windows much more attractive to developers who need corporate desktop compatibility but still want Linux tooling on the same machine.

But WSL also says something deeper: the industry still values Linux userland so highly that Microsoft chose to bring it into Windows rather than trying to replace it outright. That is not a sign of Linux weakness. It is a sign of Linux’s command-line legitimacy. If the Linux shell, package model, and tooling culture were merely nostalgic habits, WSL would not be such an important product.

This is why many developers live in a mixed reality. They carry Windows laptops because their employer standardizes on Windows, their company security stack assumes Windows, or their workflow still depends on Windows desktop software. Then they open WSL, SSH into Linux environments, build containers targeting Linux, and deploy to Linux production hosts. That pattern is no longer a contradiction. It is the clearest sign that desktop leadership and shell leadership are separate things.

Servers care more about repeatability than polish

A desktop is interactive. A server is accountable. A desktop succeeds when a person can use it comfortably. A server succeeds when it behaves predictably, exposes services reliably, survives updates, and can be administered remotely without surprises. Those are not the same design pressures, and Linux happens to fit the server set exceptionally well.

One reason is minimalism. Linux server distributions can be installed with a very small footprint, limited services, and almost no unnecessary user-facing baggage. That reduces attack surface, lowers resource overhead, and makes the machine easier to reason about. Ubuntu Server is positioned directly for cloud, data center, and scale-out workloads, and Canonical publishes official cloud images for major public-cloud-style environments. Red Hat Enterprise Linux is marketed and deployed as a stable base for enterprise and hybrid-cloud workloads. These are not desktop products awkwardly pushed into server roles. They are operating environments built and maintained with server assumptions in mind.

Another reason is repeatability. Linux servers are usually managed through repositories, packages, configuration files, services, and scripts. That is exactly the shape that modern automation likes. It works cleanly with configuration management, infrastructure-as-code, container images, immutable deployment patterns, CI/CD workflows, and fleet maintenance. In Linux, the server often feels more like a described state than a hand-configured appliance. That is a major operational advantage.
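The "described state" idea can be illustrated with a small idempotent helper: instead of editing a file by hand, a script declares what the file should contain and changes it only if it differs. This is a minimal sketch under stated assumptions, not how any particular configuration management tool works; the `ensure_setting` function, the simple `key=value` format, and the `app.conf` file name are all hypothetical.

```python
from __future__ import annotations

import tempfile
from pathlib import Path


def ensure_setting(path: Path, key: str, value: str) -> bool:
    """Make sure `key=value` appears exactly once in a key=value file.

    Returns True if the file was changed and False if it already matched,
    so running the function repeatedly converges on the same file.
    """
    wanted = f"{key}={value}"
    lines = path.read_text().splitlines() if path.exists() else []

    found = False
    updated = []
    for line in lines:
        if line.split("=", 1)[0].strip() == key:
            if not found:
                updated.append(wanted)  # rewrite the first occurrence
                found = True
            # later duplicates of the same key are dropped
        else:
            updated.append(line)
    if not found:
        updated.append(wanted)

    if updated == lines:
        return False  # already in the desired state, nothing to write
    path.write_text("\n".join(updated) + "\n")
    return True


# Demonstration against a throwaway directory.
with tempfile.TemporaryDirectory() as tmp:
    conf = Path(tmp) / "app.conf"  # hypothetical config file
    first = ensure_setting(conf, "max_connections", "100")
    second = ensure_setting(conf, "max_connections", "100")
    final_text = conf.read_text()

print(first, second)  # True False
```

The second call reports no change. That property is what lets the same script run safely across a fleet or on every deployment.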

The web stack reflects this. W3Techs still shows Linux vastly ahead of Windows on websites with known server operating systems. NGINX’s own documentation is blunt about the difference between platforms: its Windows version uses the native Win32 API, but high performance and scalability should not be expected, and the Windows version is considered beta. That one official page explains why so much web infrastructure still gravitates toward Linux even when cross-platform support exists on paper. Production does not go where software merely runs. Production goes where the ecosystem is strongest and the assumptions are honest.

Windows Server absolutely has legitimate roles. It is still important for Microsoft-centric environments, Active Directory-related services, certain enterprise applications, and workloads built around Windows containers or .NET ecosystems with Windows-specific dependencies. But where the server’s job is generic web serving, API delivery, proxying, container hosting, automated deployment, or cloud orchestration, Linux usually presents the cleaner operating model. It is easier to strip down, easier to automate, easier to clone, and easier to live with at scale.

That is why Linux dominance on servers is not just a relic of the old LAMP era. It keeps renewing itself because modern infrastructure keeps rewarding the same traits Linux was already good at.

Containers pushed Linux from server default to infrastructure substrate

The container era did not invent Linux’s server dominance, but it made that dominance even more fundamental. Once application delivery shifted toward containers and orchestration, Linux stopped being just a common server operating system and became the substrate beneath a great deal of modern infrastructure.

Red Hat’s container documentation points directly to Linux namespaces, cgroups, and SELinux as foundational mechanisms in container isolation and resource control. Docker’s own security documentation does the same, emphasizing kernel support for namespaces and cgroups along with host hardening features and security policies. Red Hat’s “Containers are Linux” piece states the point plainly: the runtime beneath the container story is Linux. That is the heart of the matter. Containers are not magic parcels floating above operating systems. They are deeply tied to Linux kernel features and Linux operational assumptions.
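On a Linux host, the kernel exposes these mechanisms through procfs, so they can be inspected with ordinary file operations. The sketch below reads the current process's namespace links from `/proc/self/ns` and its cgroup membership from `/proc/self/cgroup`. The helper names are assumptions for illustration, and on a non-Linux system both functions simply return empty results.

```python
from __future__ import annotations

import os


def namespaces(pid: str = "self") -> dict[str, str]:
    """Map namespace names (mnt, pid, net, ...) to their kernel identifiers."""
    ns_dir = f"/proc/{pid}/ns"
    result: dict[str, str] = {}
    try:
        names = sorted(os.listdir(ns_dir))
    except OSError:
        return result  # not Linux, or procfs is unavailable
    for name in names:
        try:
            result[name] = os.readlink(os.path.join(ns_dir, name))
        except OSError:
            continue  # skip entries that cannot be read
    return result


def cgroup_membership(pid: str = "self") -> list[str]:
    """Return the raw lines of /proc/<pid>/cgroup, or [] if unavailable."""
    try:
        with open(f"/proc/{pid}/cgroup") as handle:
            return [line.strip() for line in handle if line.strip()]
    except OSError:
        return []


for name, ident in namespaces().items():
    print(f"{name:20s} {ident}")
for line in cgroup_membership():
    print("cgroup:", line)
```

Two processes that print the same identifier for a namespace share that namespace. A process inside a container will typically show different identifiers from a process on the host.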

That matters far beyond developers running local builds. It shapes image ecosystems, base distributions, deployment pipelines, security tooling, monitoring, orchestration, and patching strategy. Once a team standardizes on Linux-based containers, a huge share of its surrounding tooling naturally leans Linux too. Build agents, registries, orchestration, host operating systems, and debugging habits all start orbiting the same center.

Kubernetes makes that even more visible. Official Kubernetes documentation for Windows nodes states clearly that a cluster can include multiple operating systems, but the control plane can run only on Linux while worker nodes can run either Windows or Linux depending on workload needs. That is not a small detail. It means Windows support in Kubernetes exists inside a Linux-governed architecture. Windows participates, but Linux defines the default structure.
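That constraint can be expressed as a small check over a node inventory. This is a sketch only: the `Node` dataclass and `validate_cluster` function are hypothetical and not part of any Kubernetes client library. The only detail borrowed from Kubernetes is the `linux` and `windows` values that it records in the well-known `kubernetes.io/os` node label.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    name: str
    os: str              # value of the kubernetes.io/os label
    control_plane: bool  # True if the node hosts control plane components


def validate_cluster(nodes: list[Node]) -> list[str]:
    """Return human-readable problems; an empty list means the layout is valid."""
    problems = []
    for node in nodes:
        if node.os not in ("linux", "windows"):
            problems.append(f"{node.name}: unsupported OS {node.os!r}")
        elif node.control_plane and node.os != "linux":
            problems.append(f"{node.name}: control plane nodes must run Linux")
    return problems


# A mixed-OS cluster is fine as long as the control plane is on Linux.
mixed = [
    Node("cp-1", "linux", control_plane=True),
    Node("worker-linux-1", "linux", control_plane=False),
    Node("worker-win-1", "windows", control_plane=False),
]
# A Windows control plane node violates the documented constraint.
invalid = [Node("cp-win", "windows", control_plane=True)]

print(validate_cluster(mixed))    # []
print(validate_cluster(invalid))  # ['cp-win: control plane nodes must run Linux']
```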

The cloud-native ecosystem keeps reinforcing that default. The Linux Foundation and CNCF’s 2025 annual survey describes Kubernetes’ evolution from a container orchestrator to an AI infrastructure platform, reflecting how central cloud-native technologies have become. As those technologies spread, the operational assumptions beneath them spread too. Linux remains the assumed base because Linux was already where containers, orchestration, and server automation felt native.

This is one of the strongest answers to the original question. Windows is better on the desktop partly because desktop value comes from finished end-user compatibility. Linux is better in shells and servers partly because modern infrastructure keeps rewarding systems that expose themselves cleanly to automation and composition. Containers did not create that advantage, but they multiplied it.

Cloud and hosting economics favor Linux in ways desktop buyers rarely see

Desktop discussions often focus on purchase price, licensing arguments, or personal preference. Server decisions are usually colder. Teams compare support models, cloud image availability, automation fit, ecosystem alignment, patching workflows, and the cost of running thousands of instances or containers over time. In that environment, Linux often has a structural edge even before anyone starts talking about ideology.

Canonical’s official Ubuntu cloud images exist because Linux is expected to be deployed as a cloud image over and over again, not just installed once on a local machine. Ubuntu Server is positioned directly for scale-out and cloud workloads. Red Hat Enterprise Linux positions itself as a platform for bare metal, virtual machines, containers, and hybrid cloud. Those are deployment models where repeatability and fleet economics matter more than local desktop convenience.

Linux also benefits from an ecosystem that normalized package repositories, scripted provisioning, infrastructure-as-code, and disposable instances earlier and more deeply. A Linux server is often treated as something that can be rebuilt cleanly from code, not something nursed manually over years of desktop-like configuration drift. That is operationally attractive and financially attractive. It shortens recovery time, reduces snowflake systems, and makes staffing easier because teams can work from shared automation rather than private ritual.

The cloud market is also full of Linux-first defaults. Tutorials assume Linux. Sample deployment commands assume Linux. Container base images assume Linux. Many observability tools, reverse proxies, and platform components are documented first and best for Linux. This does not mean Windows is excluded. It means Windows usually enters these systems as the special case that must be justified by a workload requirement, not as the baseline.

That distinction matters because most production workloads are not emotionally attached to an operating system. They are attached to a supportable, repeatable, cost-conscious path. If a service runs perfectly well on Linux, is easier to containerize on Linux, fits the standard fleet model on Linux, and aligns with the team’s automation on Linux, there is little reason to force it onto Windows. Server operating systems are often chosen by subtraction. Linux survives that subtraction test very well.

This is one reason Linux’s server dominance has outlived so many predictions of convergence. Every new layer of infrastructure that values automation, disposability, and standardization tends to make Linux more, not less, attractive.

Security looks different on a workstation than on a server

Arguments about “which operating system is more secure” are usually too blunt to be useful. Desktop security and server security do not ask the same questions. A managed Windows laptop and a headless Linux API host live in different threat models, different operational routines, and different human contexts. The right comparison is not abstract security quality. It is security fit for the role.

Windows has strong desktop security advantages in managed enterprise environments. Windows Hello for Business brings device attestation, certificate-based authentication, and conditional access-aware controls into the sign-in story. Intune connects policy, compliance, updates, app deployment, and endpoint governance. On a corporate desktop, that combination is powerful because identity, user behavior, and device state are deeply connected. The endpoint is not just a machine. It is a managed participant in an identity system.

Linux’s strengths show up differently. On servers, Linux is often deployed with a narrow purpose, minimal package sets, limited exposed services, and strong remote-admin habits. Red Hat’s recent SELinux material frames SELinux as a cornerstone technology in RHEL security hardening. Its documentation describes SELinux as an additional layer of system security answering whether a subject may perform a given action on an object. Docker’s security material also points to namespaces, cgroups, and host hardening features. This is a security model that fits server discipline very well: isolate services, reduce moving parts, control privileges, and automate patching across a predictable fleet.
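The question SELinux answers (may this subject perform this action on this object?) can be modelled as a lookup against explicit allow rules, with denial as the default. The following is a toy model of that idea, not SELinux's implementation or API. The rule set is invented for illustration, although the type names follow the style of labels found in common targeted policies.

```python
from __future__ import annotations

from typing import NamedTuple


class Rule(NamedTuple):
    source_type: str  # label of the process (the subject)
    target_type: str  # label of the resource (the object)
    obj_class: str    # kind of object, for example "file"
    permission: str   # requested action, for example "read"


# Illustrative allow rules; anything not listed here is denied.
ALLOW = {
    Rule("httpd_t", "httpd_sys_content_t", "file", "read"),
    Rule("httpd_t", "httpd_log_t", "file", "append"),
}


def is_allowed(source_type: str, target_type: str,
               obj_class: str, permission: str) -> bool:
    """Default-deny check: permit only what an explicit rule allows."""
    return Rule(source_type, target_type, obj_class, permission) in ALLOW


# The web server may read its own content...
print(is_allowed("httpd_t", "httpd_sys_content_t", "file", "read"))   # True
# ...but not write to it, and not read unrelated sensitive files.
print(is_allowed("httpd_t", "httpd_sys_content_t", "file", "write"))  # False
print(is_allowed("httpd_t", "shadow_t", "file", "read"))              # False
```

The design choice worth noticing is the default: a compromised service gains only what its rules already granted, which is why this model suits narrowly scoped servers.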

Linux also benefits from the fact that many production Linux systems are not interactive general-purpose machines. A reverse proxy should not be used for email, office work, and random browsing. A Kubernetes node should not be a personal workstation. A database host should not need a rich GUI environment. That narrow purpose helps hardening because the acceptable behavior is easier to define. When the system’s job is narrow, unusual behavior stands out sooner.

Windows can absolutely be secured on servers, and Microsoft has made serious investments there. But the strongest case for Windows security still tends to be the managed endpoint and Microsoft identity ecosystem, while the strongest case for Linux security tends to be the tightly scoped server and infrastructure role. Each platform shines where its security model is most natural, not where online partisans wish it did.

Linux’s openness is a superpower on servers and a complication on desktops

Linux is not one product. It is an ecosystem of distributions, release models, desktop environments, package formats, support contracts, and community norms. That openness is one of Linux’s greatest strengths. It is also one of the reasons Linux thrives more easily in server rooms than in ordinary offices.

On the server side, flexibility is an asset. Teams can choose Ubuntu Server for one set of operational reasons, RHEL for another, Debian for another, or a container-optimized base for another. They can align package policies, support terms, kernel cadence, and image strategy with the actual workload. This freedom is useful because server operators care deeply about those details. They want control over release channels, support windows, base image behavior, and system composition. Linux lets the operator shape the platform around the workload.

On the desktop, the same diversity can become a burden. Different package systems, different desktop shells, different GUI expectations, and different vendor support levels can make Linux feel fragmented to organizations that want one answer for everyone. This is not a fatal flaw. It is a tradeoff. Skilled users often love the freedom because they know how to use it. Large non-technical organizations often dislike the freedom because every extra degree of variation becomes another support variable.

That split explains a lot. The same operating-system culture that helps an SRE build a clean immutable deployment pipeline can annoy a finance department that just wants the same PDF tool, the same smart-card middleware, and the same screen layout on every machine. In one environment, choice is power. In the other, choice is support overhead.

Windows benefits from the opposite trade. It is more centralized, more standardized, and more constrained by Microsoft’s platform direction. Desktop buyers often interpret that as stability and supportability. Server operators often interpret it as less attractive for highly customized or Linux-first infrastructure paths. Neither reaction is irrational. They reflect different operational priorities.

This is why Linux adoption on the desktop is often strongest in places where users value control enough to justify the complexity: development, engineering, privacy-minded personal use, education with tailored deployments, and organizations living mostly in browser-based tools. It also explains why Windows remains stubbornly strong in the mainstream office. The office usually wants a narrower path than Linux culture naturally offers.

Microsoft itself now treats Linux as a necessary companion

One of the strongest arguments for Linux’s command-line and server legitimacy comes from Microsoft’s own behavior. Microsoft is no longer acting as if Windows must be the exclusive home for everything. It supports Linux where Linux has already won the right to exist.

WSL is the clearest example. Microsoft’s documentation presents WSL as a way to run a GNU/Linux environment, including most command-line tools, utilities, and applications, directly on Windows without the overhead of a traditional virtual machine or dual-boot setup. That is not a fringe feature tucked away for hobbyists. It is a mainstream answer to a mainstream need. Microsoft could have insisted that PowerShell plus Windows-native tools were enough for everyone. It did not. It acknowledged that many developers want Linux behavior, Linux packages, and Linux tools on their workstation.
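To make the "Linux behavior on a Windows workstation" point concrete, here is a minimal sketch of how a script might guess whether it is running inside WSL. It relies on a widely used heuristic, not an official Microsoft API: WSL kernels usually carry the word "microsoft" in their release string and in `/proc/version`. The function names `looks_like_wsl` and `running_under_wsl` are hypothetical, and a custom-built kernel could defeat the check.

```python
import platform
from pathlib import Path


def looks_like_wsl(kernel_release: str, proc_version: str = "") -> bool:
    """Heuristic (an assumption, not an official API): WSL kernel
    strings usually contain 'microsoft' in some letter case."""
    haystack = f"{kernel_release} {proc_version}".lower()
    return "microsoft" in haystack


def running_under_wsl() -> bool:
    """Apply the heuristic to the machine this script runs on."""
    if platform.system() != "Linux":
        # Native Windows and macOS report a different system name.
        return False
    proc_version = Path("/proc/version")
    text = proc_version.read_text() if proc_version.exists() else ""
    return looks_like_wsl(platform.release(), text)


if __name__ == "__main__":
    print("WSL detected" if running_under_wsl() else "not WSL")
```

From the script's point of view the environment is simply Linux. The only hint that Windows sits underneath is a string in the kernel version, which is roughly what "without the overhead of a traditional virtual machine or dual-boot setup" feels like in practice.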

SQL Server on Linux tells the same story from the server side. Microsoft’s documentation includes installation guidance for SQL Server on Linux, including Ubuntu and Red Hat Enterprise Linux. Red Hat, for its part, positions RHEL as a platform for Microsoft SQL Server across bare metal, virtual machines, containers, and hybrid cloud deployments. This is not a concession to niche demand. It is a response to the fact that many enterprise infrastructures are already Linux-centered, and Microsoft’s database business must meet them where they are.

Even Kubernetes support for Windows reflects this pattern. Windows nodes are supported because some workloads require them, but the official architecture still places the control plane on Linux. Windows is accommodated inside a Linux-shaped infrastructure model. That is a very different posture from the old era of platform rivalry. It is also more honest. The modern enterprise is mixed, and the operating system battle is no longer won by demanding exclusivity. It is won by remaining indispensable where your platform is strongest.
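A small sketch shows what "accommodated inside a Linux-shaped model" can look like in a mixed cluster. Kubernetes labels each node with `kubernetes.io/os`, and a pod can use a `nodeSelector` on that label to land on a Windows or Linux worker. The helper `pod_manifest`, the pod names, and the image references below are hypothetical placeholders, and real Windows workloads often need more scheduling detail than this.

```python
import json


def pod_manifest(name: str, image: str, os_name: str) -> dict:
    """Build a minimal Pod manifest pinned to nodes of one OS.

    Uses the well-known node label kubernetes.io/os. Everything else
    here (names, images) is illustrative only.
    """
    if os_name not in ("linux", "windows"):
        raise ValueError(f"unsupported node OS: {os_name!r}")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # Schedule only onto worker nodes running the requested OS.
            "nodeSelector": {"kubernetes.io/os": os_name},
            "containers": [{"name": name, "image": image}],
        },
    }


if __name__ == "__main__":
    # Hypothetical workloads: one legacy Windows app, one Linux API.
    legacy = pod_manifest("legacy-app", "registry.example.com/legacy-app:1.0", "windows")
    api = pod_manifest("api", "registry.example.com/api:1.0", "linux")
    print(json.dumps([legacy, api], indent=2))
```

The Windows pod is an ordinary object in the same API. Only the selector changes, while the control plane that schedules it stays on Linux.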

Microsoft remains indispensable on the managed desktop and in major enterprise identity and productivity stacks. Linux remains indispensable in cloud-native infrastructure, shells, containers, and a vast share of server operations. The market has settled into coexistence, but not symmetrical coexistence. Each platform has a domain where it still feels like the native tongue.

Linux also dominates the places where performance discipline matters most

One reason Linux keeps its reputation for server seriousness is that it is overwhelmingly present in computing environments where raw scale, determinism, and administrative control matter. The TOP500 list remains a useful signal here. The site continues to track the world’s most powerful supercomputers, and every system on the list has run Linux since the November 2017 edition. That does not mean every web server decision should mimic supercomputing, but it does mean the platform has long been trusted where performance engineering is not casual or decorative.

The deeper point is not that Linux is automatically faster for every workload. It is that Linux gives operators a long tradition of tuning, inspection, service control, scheduler awareness, filesystem choice, network tooling, and minimal deployments that fit environments where performance must be understood rather than merely hoped for. On the desktop, many users will never touch that layer. On servers, especially high-volume systems, those details matter.

This ties back to the shell. A platform that exposes itself clearly through logs, services, process tools, package metadata, configuration files, and remote sessions is easier to inspect under load. It is easier to automate during incidents. It is easier to strip down until the machine is doing almost nothing except the job it was assigned. Linux has decades of institutional practice around that style of operation.
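As a rough illustration of that legibility, the sketch below reads memory statistics the way many Linux tools do: by parsing the plain-text `/proc/meminfo` file. The function names are hypothetical, and the example assumes a kernel recent enough to report `MemAvailable`. It is a sketch of the style of inspection, not a monitoring tool.

```python
from pathlib import Path


def parse_meminfo(text: str) -> dict[str, int]:
    """Parse 'Key:   12345 kB' lines into {key: value}.

    Values are kept as the integers the kernel prints (kB for most
    fields); lines without a numeric value are skipped.
    """
    values: dict[str, int] = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        fields = rest.split()
        if sep and fields and fields[0].isdigit():
            values[key.strip()] = int(fields[0])
    return values


def memory_available_fraction(meminfo: dict[str, int]) -> float:
    """Fraction of total RAM the kernel estimates is still available."""
    return meminfo["MemAvailable"] / meminfo["MemTotal"]


if __name__ == "__main__":
    path = Path("/proc/meminfo")
    if path.exists():
        info = parse_meminfo(path.read_text())
        if "MemAvailable" in info and "MemTotal" in info:
            print(f"{memory_available_fraction(info):.1%} of RAM available")
    else:
        print("/proc/meminfo not found (not a Linux system?)")
```

No agent, SDK, or GUI is involved. The kernel publishes its state as text, so the same few lines work over SSH, inside a container, or from a cron job during an incident.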

Windows can be tuned too, and it performs very well for the workloads it targets. But the cultural default is different. Windows desktop culture historically prioritized integrated end-user computing; Linux server culture prioritized operator legibility. That difference still leaks into tooling, documentation, staffing, and habits.

For infrastructure teams, this matters because operating systems are not judged only on their best day. They are judged during patch windows, after odd failures, under unexpected traffic, during migrations, and in the middle of incidents at 3 a.m. Linux’s command-line-first nature is often a comfort in those moments because the machine is willing to show its workings without forcing the operator through layers of abstraction.

Windows desktops and Linux servers often belong in the same company for good reasons

A lot of organizations have already settled this argument in practice by refusing to choose only one side. They run Windows on employee endpoints and Linux in the backend. That pattern is not indecision. It is a rational division of labor.

Windows endpoints make sense because the employee desktop is where broad software support, standard onboarding, identity integration, device compliance, and user familiarity matter most. The same business might then run Linux for web hosting, reverse proxies, Kubernetes worker pools, CI systems, internal developer services, logging stacks, and API infrastructure because those systems benefit from Linux’s automation-friendly nature and ecosystem alignment.

This mixed model also reduces pointless friction. A developer may use Windows because that is the company standard for laptops, the security tooling assumes it, and several required desktop apps run there best. That same developer may use WSL locally, then deploy into Linux containers on Linux hosts because the production estate is Linux-native. Nothing about that workflow is contradictory. It simply follows the strengths of each platform.

The same logic appears in enterprise software strategy. Microsoft makes desktop management better through Intune and identity-linked security. It makes developer life better through WSL. It makes database adoption easier by supporting SQL Server on Linux. Kubernetes supports Windows nodes for workloads that need them while leaving the control plane on Linux. Vendors are adapting to the market as it exists, not to the tidy single-platform visions people argue about online.

This is probably the most mature way to understand the topic. Windows is not “better” because it should run everything. Linux is not “better” because it should replace everything. Each one is better where the surrounding ecosystem multiplies its advantages. The modern company often uses both because the workloads are genuinely different.

The old stereotypes are outdated, but the core balance still holds

It would be lazy to describe Windows as a bloated toy for non-technical users or Linux as an unusable desktop for hobbyists. Both stereotypes are badly out of date. Windows has serious command-line capability now. Linux desktops are polished and practical for many users. Gaming on Linux is no longer a novelty. Developers can do real work comfortably on either platform depending on the surrounding constraints.

Yet progress at the edges has not erased the center of gravity. Windows still leads the mass desktop because mass desktop computing is mostly about compatibility, support, and standardized user experience. Linux still leads shells and servers because those domains reward systems that expose themselves cleanly to automation, composition, and remote control. The evidence still lines up that way in 2026, both in desktop market share and in web/server deployment patterns.

The strongest proof is that even convergence often happens on Linux’s terms in technical workflows. WSL brings Linux into Windows. Kubernetes supports Windows inside a Linux control-plane world. SQL Server runs on Linux because Linux is where many enterprise workloads already live. Proton helps Windows games run on Linux because the game catalog is still Windows-centric. These are hybrid solutions, but they reveal where each platform’s center of power still lies.

That is why the split has proven so durable. It is not just historical inertia. It is a match between operating-system culture and workload reality. The desktop market wants a broad, standardized commercial surface. The infrastructure market wants a transparent, scriptable operational surface. Windows and Linux were shaped by those demands, and the market kept rewarding them for different reasons.

Choosing the right operating system means respecting the job, not the brand

The cleanest answer to the original question is also the least dramatic. Windows is usually better on the desktop because desktop users and desktop organizations value compatibility, vendor support, peripheral predictability, software availability, and managed endpoint tooling. Linux is usually better in command-line and server usage because operators value automation, transparency, low overhead, remote control, package-driven maintenance, and alignment with containers and cloud-native infrastructure.

That answer leaves room for exceptions, and the exceptions matter. A Linux desktop can be the better personal computer for a developer, an engineer, a privacy-minded user, or anyone whose workflow lives comfortably outside proprietary Windows-only software. A Windows server can be the right answer for Microsoft-specific enterprise workloads, Windows containers, or environments tightly bound to Microsoft infrastructure. The point is not to erase exceptions. The point is to identify the defaults honestly.

The habits of each platform reveal the truth. Windows tries to smooth the user experience and reduce visible complexity for a broad audience. Linux tries to make the system legible and controllable for people willing to use that control. One of those instincts tends to win where human users spend their day clicking through mixed commercial tasks. The other tends to win where operators need a machine they can script, reproduce, and trust under automation.

That is why this split has lasted longer than many people predicted. It was never only about technical superiority. It was about fitness for the job. Windows fit the office desk. Linux fit the rack, the shell, the container host, and the cloud image. After all the crossover, all the hybrid tooling, and all the ideological noise, that basic division still explains more than any slogan does.

FAQ

Because it usually creates less friction with mainstream software, peripherals, enterprise management tools, and gaming libraries. That combination still matters more to most desktop buyers than customization alone.

Because Linux is built around shell access, package repositories, service management, automation, and low-overhead deployments that fit remote server operations extremely well.

No. Modern Linux desktops are very usable. The main limitation is not basic usability but weaker support for some commercial software, specialist tools, and certain vendor-certified workflows.

Much less than before. WSL makes Windows a stronger developer machine by letting users run real Linux tools directly on Windows. But that improvement also highlights how valuable Linux tooling remains.

Because Linux aligns well with common web stacks, reverse proxies, cloud images, container hosts, and shell-first administration. W3Techs still shows Linux far ahead of Windows in known server OS usage on websites.

Yes. Kubernetes supports Windows worker nodes for workloads that need them. But the control plane still runs on Linux, which shows where the architecture’s center of gravity remains.

Because the two platforms solve different problems well. Windows is strong for employee endpoints and management. Linux is strong for infrastructure, automation, and backend services.

For many players, yes. Proton and Steam Deck support made Linux gaming dramatically better. Windows still remains the easier default because native assumptions, launcher support, and anti-cheat behavior are still more consistently Windows-first.

Because desktop users interact directly with finished applications, hardware, and vendor utilities. Server operators care more about whether the platform can run services, automate deployments, and stay maintainable over time. That changes what “better” means.

Sometimes, yes. If a company relies mainly on browsers, open standards, and a narrow device set, Linux can work very well. The harder cases are the ones tied to Windows-only software, drivers, and support commitments.

Because it lets Windows keep its desktop strengths while borrowing Linux’s command-line strengths. It is one of the clearest acknowledgments that the two operating systems excel in different places.

No. Cost matters, but Linux’s server strength also comes from operational fit: automation, cloud images, container alignment, package-based maintenance, and a huge infrastructure ecosystem built around it.

Because most non-technical users want an environment where commercial apps, peripherals, gaming, and workplace support all line up with minimal effort. Windows still delivers that more consistently for the broad middle of the market.

Not as a universal rule. Linux often shines in tightly scoped server roles. Windows often shines on managed enterprise endpoints tied to strong identity and compliance controls. Security depends on the role and operating model.

Because containers rely heavily on Linux kernel features such as namespaces and cgroups, and the broader container ecosystem grew around Linux assumptions.

Yes. They are often the right choice for workloads that depend on Windows-specific enterprise software, Windows containers, or deeply Microsoft-centered infrastructure. The point is that generic web and cloud-native workloads usually lean Linux instead.

Because desktop success depends on software ecosystems, support chains, training, procurement, and vendor assumptions, not just on technical quality. Windows established that ecosystem lead long ago and still benefits from it.

Windows is strongest where the operating system should disappear behind broad user compatibility. Linux is strongest where the operating system should expose itself cleanly to operators and automation.

Probably not. The platforms overlap more than they used to, but desktop and infrastructure still reward different strengths, and those strengths continue to line up with Windows and Linux in familiar ways.

Author:

Jan Bielik

CEO & Founder of Webiano Digital & Marketing Agency

This article is an original analysis supported by the sources cited below.

Desktop Operating System Market Share Worldwide

StatCounter’s current desktop market-share page, used for the latest Windows and Linux desktop share figures.

Linux vs. Windows usage statistics, April 2026

W3Techs comparison page used for current Linux and Windows website server operating-system share.

Usage Statistics and Market Shares of Operating Systems for Websites

W3Techs operating-system overview page used to support server-side share context.

Windows Subsystem for Linux Documentation

Official Microsoft documentation used for WSL capabilities and its role in developer workflows.

How to install Linux on Windows with WSL

Microsoft’s installation guide used to support the claim that WSL is a mainstream, supported workflow.

Windows Hello for Business overview

Microsoft’s official overview used for enterprise authentication, device attestation, and policy-driven desktop security.

Configure a tenant-wide Windows Hello for Business policy in Intune

Microsoft Intune documentation used for managed endpoint policy and deployment context.

Windows Hello for Business settings in Microsoft Intune

Microsoft’s Intune settings documentation used to support identity and endpoint management details.

Technical requirements for Creative Cloud apps

Adobe’s requirements page used to support the argument about commercial desktop software support patterns.

System requirements for AutoCAD for Mac

Autodesk support page used in the discussion of platform support in professional desktop software.

Proton

Valve’s Proton repository used to support Linux gaming via Windows-game compatibility layers.

Steam Deck and Proton

Steamworks documentation used for Proton behavior and anti-cheat support details.

Steam Hardware & Software Survey

Valve’s current survey used for gaming platform context on desktop PCs.

Docker Engine security

Docker’s security documentation used for namespaces, cgroups, and container host security context.

Containers are Linux

Red Hat’s article used to support the point that the container runtime story is fundamentally Linux-based.

Windows nodes in Kubernetes

Official Kubernetes documentation used for Linux control-plane limits and Windows worker-node support.

nginx for Windows

NGINX documentation used for Windows support limits, including performance and scalability caveats.

Ubuntu Cloud Images

Canonical’s official cloud-image page used for Linux cloud deployment context.

Get Ubuntu Server

Canonical’s Ubuntu Server page used for current server positioning and deployment context.

Red Hat Enterprise Linux for Microsoft SQL Server

Red Hat’s page used for the enterprise case for SQL Server on Linux.

Quickstart install SQL Server and create a database on Red Hat

Microsoft’s official SQL Server on Linux guide for RHEL used to support Linux production support claims.

Quickstart install SQL Server and create a database on Ubuntu

Microsoft’s official SQL Server on Ubuntu guide used for Linux workload support context.

SELinux and RHEL: A technical exploration of security hardening

Red Hat’s security article used for Linux hardening and SELinux context.

Getting started with SELinux

Red Hat documentation used to support the explanation of SELinux as an additional security layer.

CNCF Annual Cloud Native Survey: The infrastructure of AI’s future

Linux Foundation and CNCF survey page used for cloud-native adoption context.

CNCF Annual Survey Report final PDF

Primary survey report used to support claims about Kubernetes and cloud-native infrastructure significance.

TOP500

Official TOP500 site used for Linux’s continuing centrality in top-tier high-performance computing.