Performance marketing used to have a clean promise. Spend money, track the response, cut what fails, scale what works. For a long stretch of digital advertising history, that promise felt almost scientific. Search ads revealed demand. Paid social found lookalike audiences. Retargeting followed visitors until they returned. Dashboards showed clicks, conversions, revenue, ROAS, CPA and conversion rate with a confidence that made the work feel controllable.

That version of performance marketing is gone.

The discipline has not disappeared. It has become harder, more technical, more strategic and more connected to the economics of the whole business. The strongest performance marketers of the 21st century are no longer just campaign operators. They are translators between customer demand, media systems, product economics, consent, analytics, creative testing and business margin.

Digital advertising is still growing. IAB reported that U.S. digital ad revenue reached nearly $300 billion in 2025, with search still the largest format and video showing the fastest growth among major formats. That growth does not make performance marketing easier. It means more money is being pushed through systems that are more automated, more regulated and less transparent than the systems marketers learned a decade ago.

Marketing budgets also face pressure. Gartner’s 2025 CMO Spend Survey reported that marketing budgets remained flat at 7.7% of company revenue, while a separate Gartner release said digital channels represented 61.1% of total marketing spend. More spend is digital, but the tolerance for waste is shrinking.

That is the central tension. Performance marketing is receiving a larger share of attention, tools and budget, yet the old measurement model is weaker. Third-party cookies are unreliable across browsers and user behavior. Apple’s App Tracking Transparency changed mobile attribution. EU rules have raised the bar for consent and ad transparency. AI systems now decide bids, placements, audiences and creative combinations in ways that are not fully visible to the buyer.

The result is a new craft. It still cares about CPA, ROAS, CAC, LTV and conversion rate. It still needs sharp execution inside Google Ads, Meta, TikTok, retail media networks, affiliate platforms, CRM systems and analytics tools. But the best work now starts before the platform. It asks harder questions.

Which conversions should the algorithm value? Which customers are worth acquiring? Which data can legally and reliably be used? Which landing page deserves traffic? Which creative angle is creating demand rather than harvesting existing demand? Which sales are incremental? Which campaigns look profitable only because attribution gives them too much credit?

The dashboard remains useful, but it is no longer the source of truth. It is evidence. Evidence still needs judgment.

The old bargain of total tracking is broken

Performance marketing grew up with a quiet bargain. Users could move across the web, platforms could identify them, advertisers could follow them, and measurement systems could stitch the journey together afterward. That bargain was never as complete as dashboards suggested, but it worked well enough for many brands to build media plans around it.

The bargain broke in stages.

Browsers restricted third-party cookies. Users blocked tracking. Regulators challenged opaque data collection. Mobile operating systems placed consent prompts between apps and identifiers. Platforms moved more reporting into modeled or aggregated views. Walled gardens gave advertisers numbers, but not always the raw material needed to verify them independently.

Google’s long-running Chrome cookie story shows how unstable the ground became. The company first planned to phase out third-party cookies, then shifted direction. In April 2025, Google said it would maintain its current approach to third-party cookie choice in Chrome and would not roll out a new standalone prompt. Google also said it would continue tracking-protection work, including stronger protections in Incognito mode.

That did not restore the old world. Even where third-party cookies remain technically available, marketers cannot treat them as a durable foundation. Safari, Firefox, mobile apps, consent choices, ad blockers and platform reporting limits have already changed the measurement environment. The practical lesson is not “cookies are back.” The practical lesson is that no serious performance strategy should depend on one tracking mechanism.

Apple made the mobile side even clearer. Its App Tracking Transparency framework requires apps to ask permission before tracking users or accessing the device advertising identifier. If the user does not grant permission, the app cannot use that identifier for tracking.

For performance teams, this creates a new hierarchy of trust. Server-side events, consented first-party data, clean CRM fields, offline conversion imports, product feeds, customer lifetime value models and incrementality tests matter more than pixel-only reporting. The pixel still matters, but it sits inside a wider measurement stack.

The broken bargain also changes how marketers should talk to leadership. A report that says “Meta generated 800 purchases” or “Google Ads delivered 500 leads” is not enough. The better question is whether those purchases or leads would have happened without the spend. The answer is often uncomfortable. Branded search, retargeting, email remarketing and bottom-funnel catalog ads can look brilliant in platform attribution while adding less new demand than the report implies.

That does not make them useless. It means they need a different role. Some campaigns defend demand. Some harvest demand. Some create demand. Some educate. Some accelerate a slow decision. A mature performance program knows the difference and does not judge every campaign by the same attribution window.

The performance marketer’s job has shifted from chasing perfect tracking to building a useful truth system. That system accepts gaps. It mixes platform data, analytics data, CRM data, finance data and experiments. It shows ranges, not false certainty. It gives the business enough confidence to move money without pretending that every click can be known.

Automation has moved the center of control

The biggest operational change in performance marketing is not that AI exists. It is that AI has moved the controls.

For years, campaign managers controlled the visible mechanics of paid media. They chose keywords, match types, bids, placements, audiences, devices, schedules, creatives and exclusions. Skill often meant finding small pockets of control the platform had not automated away. That skill still has value, but it is no longer the core of the job.

Google’s Performance Max gives advertisers access to Google inventory across Search, YouTube, Display, Discover, Gmail and Maps from a single goal-based campaign. Google’s Smart Bidding uses auction-time bidding to tune for conversions or conversion value. Target ROAS bidding uses Google AI to predict conversion value at auction time and set bids against the advertiser’s return target.

Google has also pushed Search further toward automation. In April 2026, Google said AI Max for Search campaigns was moving out of beta and reported that advertisers using the full AI Max feature suite saw an average of 7% more conversions or conversion value at similar CPA or ROAS compared with search term matching alone.

Meta has taken a similar path with Advantage+. Meta describes Advantage+ as a suite using AI to improve campaign performance in real time, while Advantage+ sales campaigns are built to reduce setup and improve sales-campaign delivery. TikTok’s Smart Performance Campaign is described as an end-to-end automation campaign product using less manual input.

The pattern is clear. Platforms want fewer manual constraints and more high-quality inputs. They want budgets, conversion events, creative assets, feeds, audience signals, landing pages and enough volume to learn. The platform then allocates delivery inside its own system.

That changes where human judgment belongs. The old question was often “Which bid should I set?” The newer question is “What am I asking the machine to learn?” A bidding system trained on low-quality leads will buy more low-quality leads. A campaign fed weak creative will find the least bad way to spend money. A product feed with poor titles and missing attributes will limit Shopping and Performance Max before the auction starts. A conversion event that fires too early in the funnel will reward shallow intent.

Automation is not strategy. It is an amplifier. It amplifies clear economics and clean signals. It also amplifies sloppy setup.

This is why the performance marketer’s control has moved upstream. Control now lives in measurement design, conversion value, customer segmentation, creative inputs, offer architecture, landing-page speed, consent implementation, margin data and budget rules. The person clicking buttons inside the ad platform has less direct control than before, but the team designing the system has more influence than ever.

The dangerous response is to fight automation by adding unnecessary constraints. Tiny audiences, fragmented campaigns, thin budgets and constant edits can starve learning systems. The equally dangerous response is to surrender judgment and let campaigns run because the platform claims they are “learning.” The modern craft sits between blind control and blind trust. It gives automation enough room to work, then audits whether the business result is real.

The signal layer now decides campaign quality

Campaign structure still matters, but the signal layer now matters more.

The signal layer is the set of inputs that tells ad platforms what happened and what matters. It includes web events, app events, server-side events, enhanced conversions, offline conversions, CRM status changes, revenue values, product feed data, consent signals and customer quality data. If the signal layer is weak, the media layer becomes guesswork with better graphics.

Google’s enhanced conversions show the direction of travel. The feature supplements existing conversion tags by sending hashed first-party conversion data, such as email addresses, to Google in a privacy-conscious way. Google says this can improve conversion measurement accuracy and support stronger bidding. Consent Mode lets advertisers communicate cookie or app identifier consent status to Google, with tags adjusting their behavior based on user choices.
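As a rough illustration of what "hashed first-party conversion data" means in practice, the sketch below normalizes an email address and hashes it with SHA-256 before it would leave the advertiser's systems. The function names are hypothetical, and the normalization shown (trim and lowercase) is an assumption; each platform documents its own exact rules, which should be checked before implementation.

```python
import hashlib


def normalize_email(raw: str) -> str:
    """Trim surrounding whitespace and lowercase the address.

    Assumption: this is a minimal normalization. Platforms may require
    extra steps, so treat this as a sketch, not a specification.
    """
    return raw.strip().lower()


def hash_email(raw: str) -> str:
    """Return the SHA-256 hex digest of the normalized address."""
    normalized = normalize_email(raw)
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    # The same customer typed two different ways yields one hash.
    print(hash_email("  Jane.Doe@Example.com ") == hash_email("jane.doe@example.com"))
```

The point of normalizing first is that the platform can only match a hash it can reproduce. Inconsistent casing or stray whitespace silently lowers match rates.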

Those tools do not remove the need for legal consent, careful implementation or data hygiene. They do show that performance marketing now depends on engineering-quality setup. A broken event, duplicated purchase tag or poorly mapped offline conversion import can mislead bidding systems for weeks. Small technical mistakes become media waste.

The signal layer should answer four questions.

First, what happened? This is the event layer: page views, product views, add-to-cart actions, leads, purchases, subscriptions, renewals, cancellations, qualified opportunities and offline sales.

Second, who gave permission? This is the consent layer. It should record user choices, update tags accordingly and respect withdrawal. Consent is not a cosmetic banner; it changes what data can be collected, stored and used.

Third, what was the business value? This is the value layer. A lead from a student with no budget is not equal to a lead from a procurement director ready to buy. A first purchase from a discount-only customer is not equal to a first purchase from a customer likely to reorder at full margin. Value-based bidding needs values that reflect reality.

Fourth, which data is clean enough to train on? This is the quality layer. It deals with bot traffic, spam leads, internal traffic, refunds, duplicate events, delayed revenue, sales-cycle stages and mismatches between web analytics and finance.
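The quality layer can be sketched as a small filter that runs before purchase events are sent to an ad platform. This is a minimal example under stated assumptions: the `PurchaseEvent` fields and the `clean_purchases` function are hypothetical, and a real pipeline would also handle bots, delayed revenue and partial refunds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PurchaseEvent:
    order_id: str
    value: float
    is_internal: bool = False   # e.g. staff or QA traffic
    is_refunded: bool = False   # fully refunded orders


def clean_purchases(events):
    """Drop internal and refunded orders, and keep one event per order ID."""
    seen_order_ids = set()
    kept = []
    for event in events:
        if event.is_internal or event.is_refunded:
            continue
        if event.order_id in seen_order_ids:
            continue  # duplicate tag fire for the same order
        seen_order_ids.add(event.order_id)
        kept.append(event)
    return kept


if __name__ == "__main__":
    raw = [
        PurchaseEvent("A1", 50.0),
        PurchaseEvent("A1", 50.0),                    # duplicate
        PurchaseEvent("B2", 20.0, is_internal=True),  # internal test order
        PurchaseEvent("C3", 30.0, is_refunded=True),  # refunded
        PurchaseEvent("D4", 10.0),
    ]
    cleaned = clean_purchases(raw)
    print([e.order_id for e in cleaned], sum(e.value for e in cleaned))
```

In this example the raw events claim 160.0 in revenue while the cleaned set supports 60.0. A bidding system trained on the raw figure would be learning from sales that did not happen.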

Many companies skip this work because it is unglamorous. They would rather test new channels, new hooks or new campaign types. But the signal layer is where performance gains often hide, not because it is exciting, but because it changes what the platform learns.

A lead-generation business gives a clear example. If Google Ads is trained to value every form submission equally, it will find people likely to submit forms. If it receives imported CRM data showing which leads became qualified opportunities, it can learn toward quality. If it receives revenue or predicted value, it can learn toward business outcomes. The campaign interface may look similar, but the system is now solving a different problem.
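One way to picture the lead-generation example is as a mapping from CRM stage to a conversion value that can be imported for value-based bidding. The sketch below is illustrative only: the stage names, close rates and average deal value are invented for the example and would come from a company's own CRM history.

```python
# Hypothetical close rates by CRM stage; real values come from CRM history.
STAGE_CLOSE_RATE = {
    "form_submitted": 0.02,
    "qualified": 0.20,
    "opportunity": 0.40,
    "closed_won": 1.00,
}

# Hypothetical average deal value, used when no deal-specific value exists.
AVG_DEAL_VALUE = 10_000.0


def lead_value(stage: str, deal_value: float = AVG_DEAL_VALUE) -> float:
    """Expected revenue of a lead: close probability times deal value."""
    if stage not in STAGE_CLOSE_RATE:
        raise ValueError(f"unknown CRM stage: {stage}")
    return STAGE_CLOSE_RATE[stage] * deal_value


if __name__ == "__main__":
    for stage in STAGE_CLOSE_RATE:
        print(stage, round(lead_value(stage), 2))
```

With values like these, a qualified lead is worth ten times a raw form submission, which is the difference the bidding system cannot see when every form fill counts as one conversion.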

The deepest performance work often happens outside the ad account. It happens in the tag manager, the CRM, the consent platform, the checkout, the product feed, the analytics warehouse and the finance model.

First-party data is the new operating asset

First-party data has become a cliché in marketing decks, but the idea is simple. It is the data a company earns directly through its own customer relationships. Email addresses, purchase history, subscription status, product preferences, support interactions, loyalty activity, account firmographics, consent records and declared interests can all be first-party assets when collected and used properly.

The strategic value is not just targeting. First-party data lets performance teams define value in their own language instead of accepting the platform’s default version of value.

Platforms know a lot about users inside their ecosystems. They do not know your gross margin, inventory pressure, customer service cost, sales acceptance rate, renewal probability or product return pattern unless you give them useful signals. First-party data lets advertisers move from “get conversions” to “get the conversions that matter.”

IAB’s State of Data 2025 describes the industry’s movement toward first-party data, alternative identifiers and data clean rooms as signal loss reshapes advertising. The same report places AI across media planning, activation and measurement, which raises the value of clean, permissioned inputs.

A strong first-party data program does not mean collecting everything. The better approach is selective. Gather what improves the customer experience, measurement or economic decision-making. Remove fields that are stale, risky or unused. Keep consent records. Connect systems so data does not live in dead silos.

For ecommerce, this might mean joining order data, margin, discount use, return behavior, product category and reorder rate. For B2B, it might mean connecting ad click IDs to CRM stages, sales notes, firmographic data, opportunity value and closed-won revenue. For apps, it might mean connecting installs to in-app events, trial quality, retention and subscription revenue while respecting platform privacy rules.

First-party data also changes creative. A brand that understands which problems high-value customers mention before buying can produce sharper ads. A SaaS company that knows which industries close fastest can build landing pages and proof points for those segments. A retailer that sees repeat purchase by category can build bundles and lifecycle campaigns instead of constantly rebuying the same customer through paid media.

The risk is overreach. Consent fatigue is real. Users do not owe brands unlimited data because the brand wants better ROAS. The data exchange has to feel fair. That means clearer forms, honest preference centers, fewer manipulative consent patterns and a better reason for the user to share information.

The companies that win with first-party data will not be the ones with the largest database. They will be the ones with the cleanest, most useful and most responsibly activated data. A smaller consented dataset can outperform a huge messy dataset because algorithms reward clarity more than volume.

Measurement has to leave last click behind

Last-click attribution survived because it is easy to understand. A user clicked an ad, then converted. Credit goes to the click. The model is tidy, reportable and often wrong.

It overcredits demand capture. It undercredits demand creation. It rewards channels that sit closest to purchase. It encourages brands to spend too much on retargeting, branded search and bottom-funnel traffic while starving the activity that made people care in the first place.

Data-driven attribution tried to improve this by assigning credit based on observed conversion paths, but user-level path data is less complete than it once was. Platform attribution can still be useful, yet it remains bounded by the platform’s view of the world. Google Analytics, Google Ads, Meta, TikTok, affiliate networks and ecommerce platforms can all tell different stories about the same sale.

Modern performance measurement needs a layered model.

The first layer is operational reporting. It answers daily questions: Are campaigns spending? Are events firing? Are CPAs rising? Did a creative fatigue? Did conversion rate break after a site release?

The second layer is platform learning. It feeds bidding systems with conversion and value signals. It is less about executive truth and more about helping the machine buy better.

The third layer is incrementality. Google Ads Conversion Lift measures conversions directly driven by people seeing ads, while Meta’s Conversion Lift compares exposed and control groups to estimate incremental impact. These tests are not perfect, but they ask the right causal question.

The fourth layer is marketing mix modeling. Google’s Meridian is an open-source MMM built for privacy-durable measurement, using aggregated data rather than cookie or user-level information. Meta’s Robyn is an open-source MMM package from Meta Marketing Science. These tools signal a wider return to probabilistic measurement because deterministic attribution has become harder.

The fifth layer is finance reconciliation. Marketing numbers should eventually connect to revenue, margin, cash flow, pipeline, retention or other business outcomes. If a channel looks great in-platform but the finance view does not move, the measurement model needs scrutiny.
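The arithmetic behind the third layer, incrementality, is simple enough to sketch. The example below assumes a randomized test group that could see ads and a control group that could not. The function name and the figures are hypothetical, and the sketch deliberately leaves out statistical significance, which a real lift study would need.

```python
def conversion_lift(test_users, test_conversions,
                    control_users, control_conversions, spend):
    """Estimate incremental conversions and cost from a holdout test."""
    test_rate = test_conversions / test_users
    control_rate = control_conversions / control_users

    # Conversions the test group would likely have produced without ads.
    baseline = control_rate * test_users
    incremental = test_conversions - baseline

    relative_lift = None
    if control_rate > 0:
        relative_lift = (test_rate - control_rate) / control_rate

    incremental_cpa = spend / incremental if incremental > 0 else None

    return {
        "incremental_conversions": incremental,
        "relative_lift": relative_lift,
        "incremental_cpa": incremental_cpa,
        "reported_cpa": spend / test_conversions if test_conversions else None,
    }


if __name__ == "__main__":
    result = conversion_lift(
        test_users=100_000, test_conversions=1_200,
        control_users=100_000, control_conversions=1_000,
        spend=10_000.0,
    )
    print(result)
```

In this made-up case the cost per reported conversion is roughly 8.33, while the cost per incremental conversion is roughly 50. Both numbers describe the same campaign; only the second answers the causal question.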

The mature answer is not to pick one model and defend it. The mature answer is to know what each model is good for. Platform attribution is useful for tactical control. Lift testing is useful for causal proof. MMM is useful for budget planning across channels. CRM data is useful for lead quality. Finance data is useful for commercial truth.
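Finance reconciliation can start with a very small check: add up what each platform claims and compare it with what finance recorded. The sketch below uses hypothetical names and figures. Note that booked revenue also includes sales from unpaid channels, so the blended ratio is a sanity check rather than a precise return figure.

```python
def reconcile(platform_revenue: dict, spend: dict, finance_revenue: float) -> dict:
    """Compare platform-claimed revenue with revenue booked by finance."""
    claimed = sum(platform_revenue.values())
    total_spend = sum(spend.values())
    return {
        "claimed_revenue": claimed,
        # Above 1.0 means platforms together claim more than was booked.
        "claim_ratio": claimed / finance_revenue,
        # Total booked revenue per unit of total ad spend.
        "blended_roas": finance_revenue / total_spend,
    }


if __name__ == "__main__":
    report = reconcile(
        platform_revenue={"google": 60_000.0, "meta": 50_000.0, "tiktok": 20_000.0},
        spend={"google": 10_000.0, "meta": 10_000.0, "tiktok": 5_000.0},
        finance_revenue=100_000.0,
    )
    print(report)
```

Here the platforms jointly claim 130,000 against 100,000 of booked revenue. That overlap is normal when several channels touch the same sale, and it is exactly why platform totals should not be summed in an executive report.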

A performance team that understands measurement limits makes better decisions. It knows when to scale, when to test, when to hold out, when to protect spend and when to stop trusting a beautiful chart.

Creative is now a performance system

Creative used to sit awkwardly inside performance marketing. Brand teams made “big ideas.” Performance teams resized assets, wrote direct-response copy and tested button colors. That split does not match how platforms now work.

Automated delivery systems need many creative inputs. Paid social feeds reward freshness, relevance and native behavior. Short-form video has trained users to reject ads that look like ads too early. AI-assisted campaign systems combine text, images, videos and landing pages into many permutations. Creative is no longer the wrapping around the media plan. It is one of the main control surfaces.

The best performance creative starts from customer tension, not from channel specs. What does the buyer believe before they buy? What fear blocks the decision? Which comparison are they making? Which promise sounds too good to trust? Which proof reduces risk? Which objection kills the sale?

Creative testing should answer those questions. A hook test is not just a race between thumbnails. It is a way to learn which customer angle has demand. An offer test is not just a discount exercise. It reveals price sensitivity, urgency and perceived value. A landing-page test is not just UX work. It shows which claims deserve more media.

The rise of creator-led advertising has also blurred brand and performance. IAB’s 2025 Creator Economy report projected U.S. creator ad spend at $37 billion in 2025, up 26% year over year and growing faster than the total media market.

Creator ads work in performance programs because they carry social proof, demonstration and cultural fluency that polished brand assets often lack. Yet they need discipline. A creator brief should not crush the creator’s voice, but it must define the job: introduce the problem, show the product, handle the objection, prove the claim, create a reason to act.

AI has made creative production faster, but speed creates its own trap. More assets do not automatically mean more learning. A messy creative pipeline can produce hundreds of ads that test nothing because every variable changes at once. Performance teams need a taxonomy: hook, audience belief, product claim, format, proof type, offer, landing page and funnel stage.

Creative volume without learning architecture is just noise.

A better system names each test clearly. It separates concept tests from execution tests. It tracks creative fatigue by angle, not only by ad ID. It feeds winning claims into SEO, email, sales enablement and product pages. It gives qualitative notes a place beside quantitative results.
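A naming convention is one lightweight way to make that taxonomy usable in reporting. The sketch below encodes the tags into an ad name and parses them back out, so results can be grouped by angle rather than by ad ID. The field keys, separators and example values are assumptions for illustration, not a platform requirement.

```python
# Hypothetical short keys for the taxonomy: hook, audience belief, product
# claim, format, proof type, offer, landing page and funnel stage.
FIELDS = ("hook", "belief", "claim", "format", "proof", "offer", "page", "stage")
SEPARATOR = "__"  # assumption: tag values never contain a double underscore


def build_ad_name(**tags: str) -> str:
    """Join every taxonomy tag into one ad name, in a fixed field order."""
    missing = [field for field in FIELDS if field not in tags]
    if missing:
        raise ValueError(f"missing taxonomy fields: {missing}")
    return SEPARATOR.join(f"{field}-{tags[field]}" for field in FIELDS)


def parse_ad_name(name: str) -> dict:
    """Split an ad name back into its taxonomy tags."""
    parsed = {}
    for part in name.split(SEPARATOR):
        key, _, value = part.partition("-")
        parsed[key] = value
    return parsed


if __name__ == "__main__":
    name = build_ad_name(
        hook="time-saved", belief="too-complex", claim="setup-in-1-day",
        format="ugc-video", proof="testimonial", offer="free-trial",
        page="lp-onboarding", stage="prospecting",
    )
    print(name)
    print(parse_ad_name(name)["hook"])
```

Because the name carries the angle, a report can aggregate fatigue or conversion rate across every ad that shares a hook, even when the individual ad IDs have long since been replaced.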

Creative has become the fastest way to change campaign outcomes because platforms have taken over much of the mechanical targeting. The advertiser still controls what is being said, what is being shown and what promise the market is asked to believe. That is not a small lever. It may be the biggest one left.

Search intent is changing under AI search

Search performance marketing was built around intent. A person types a query, the advertiser responds. The query reveals language, urgency and commercial stage. Keyword strategy became a map of demand.

AI search complicates that map. Search engines now summarize, compare, recommend and answer before the user clicks. AI Overviews and other answer systems do not remove search behavior, but they change the shape of it. Some informational clicks disappear. Some users ask longer questions. Some arrive later in the journey with more context. Some compare brands inside AI-generated answers before visiting a website.

Google’s guidance on helpful, reliable, people-first content still stresses usefulness and reliability, while its Search Quality Rater Guidelines place trust at the center of page quality through E-E-A-T. Google also says structured data helps Search understand page content and entities.

For performance marketers, the search shift matters in three ways.

First, paid search cannot live alone. If AI answers shape consideration before the click, then organic content, brand mentions, reviews, technical SEO, structured data, product information and authoritative explanations influence paid performance. A weak brand may pay more for the same click because users do not recognize or trust it.

Second, keyword strategy must move beyond exact commercial phrases. AI-assisted search favors richer context. Content that answers the full buying problem can support both organic visibility and paid conversion. A cybersecurity buyer does not only search for “best endpoint protection.” They ask about compliance, deployment risk, false positives, integration, pricing, internal approval and migration.

Third, landing pages need to match query sophistication. A user arriving from an AI-shaped journey may already know the basics. Repeating generic copy wastes the visit. Better pages help the user decide: comparisons, proof, pricing logic, implementation detail, return policy, shipping, integrations, case evidence, limitations and clear next steps.

Generative engine optimization, or GEO, is not a replacement for SEO. It is a useful lens for creating content that AI systems can understand, quote and connect to entities. The work overlaps with strong editorial SEO: clear definitions, source-backed claims, named entities, original analysis, structured sections, author credibility and pages that answer real decision questions.

Search performance in the AI age will reward brands that are easy to understand, easy to verify and easy to trust. The click may still happen in Google Ads. The reason for the click may have been built across content, reputation and machine-readable evidence long before the auction.

Paid social has become creative-led demand capture

Paid social used to be sold as audience targeting. Advertisers could slice people by interest, behavior, demographic signal and lookalike similarity. That era is weaker now. Privacy changes reduced addressability. Platforms moved toward broader targeting. Algorithms became better at finding pockets of response when given enough creative and conversion volume.

The result is a different paid social model. The audience is still there, but creative does more of the targeting. The ad itself attracts, repels, qualifies and educates. The platform watches who responds and finds more people like them.

This is why broad campaigns can outperform heavily segmented campaigns when the account has strong creative and clean conversion data. The ad carries the segmentation. A video speaking to first-time parents will naturally pull a different audience than a video speaking to marathon runners, even if both run inside broad targeting. A founder-led B2B ad discussing CFO approval will find a different pocket of users than a product-demo ad focused on technical setup.

Paid social now rewards creative systems that behave like editorial systems. Teams need a steady flow of angles, scripts, creator briefs, product demonstrations, proof assets, objection-handling ads and offer variants. They need to read comments. They need to know why people are laughing, doubting, saving, sharing or arguing. Performance insight is not only in Ads Manager. It is also in the market’s reaction.

The role of the landing experience is larger than many social teams admit. A paid social click often arrives with weaker intent than a paid search click. The page must do more work: remind the visitor why they clicked, deepen the problem, make the product concrete, show proof quickly and reduce risk. Sending cold social traffic to a generic product page wastes attention.

Paid social also needs a more honest view of incrementality. Retargeting and catalog ads can report excellent ROAS because they reach people already close to purchase. Prospecting may look weaker while creating new buyers. A blended view across campaigns is needed, and separate reports help explain it: new customers, returning customers, first purchase, repeat purchase, payback period and margin.
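Two of those report lines, new-customer cost and payback period, reduce to simple arithmetic. The sketch below is a minimal version with hypothetical inputs. It assumes a flat monthly contribution margin per new customer, which real retention curves rarely match.

```python
def new_customer_cac(spend: float, new_customers: int) -> float:
    """Ad spend divided by customers buying for the first time."""
    if new_customers <= 0:
        raise ValueError("new_customers must be positive")
    return spend / new_customers


def payback_months(cac: float, monthly_margin_per_customer: float) -> float:
    """Months of contribution margin needed to recover acquisition cost."""
    if monthly_margin_per_customer <= 0:
        raise ValueError("monthly margin must be positive")
    return cac / monthly_margin_per_customer


if __name__ == "__main__":
    cac = new_customer_cac(spend=20_000.0, new_customers=400)
    print(cac, payback_months(cac, monthly_margin_per_customer=12.5))
```

Counting only first-time buyers in the denominator is the design choice that matters here. Dividing spend by all orders would let returning customers flatter the acquisition cost.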

The strongest paid social teams no longer argue about whether “creative” or “media buying” matters more. They treat the account as a learning system. Creative supplies hypotheses. Media delivery supplies market response. Measurement separates real lift from reported credit. Landing pages convert attention into action. CRM and retention data show whether the acquired customer was worth the cost.

Retail and commerce media push performance closer to the shelf

Retail media has changed the meaning of performance marketing for ecommerce brands. A sale no longer happens only after a user clicks from Google or Meta to a brand’s own website. Many purchases happen inside retail ecosystems where the retailer controls search results, sponsored placements, product detail pages, loyalty data and closed-loop reporting.

Amazon Ads, Walmart Connect, Instacart, Tesco Media, Carrefour Links, Target Roundel and many other retail and commerce media networks have turned retailers into media owners. The promise is attractive: advertisers can reach shoppers closer to purchase and often connect ad exposure to sales inside the retailer’s environment. The problem is fragmentation. Every network has its own metrics, attribution windows, inventory, reporting rules and commercial incentives.

IAB and MRC released Retail Media Measurement Guidelines to address inconsistent measurement across retail media networks, while IAB Europe updated its Commerce Media Measurement Standards to V2 in 2026, reflecting industry feedback and a wider commerce-media scope.

For performance marketers, retail media demands a stronger commercial brain. A sponsored product ad may lift sales on a retailer, but did it steal sales from organic ranking? Did it shift share from a competitor? Did it raise total category presence? Did it cannibalize DTC revenue? Did it improve retailer relationships? Did it clear stock? Did it sell low-margin SKUs at a loss?

Retail media also makes product content a performance asset. Product titles, images, reviews, availability, pricing, shipping promise, product attributes and detail-page content directly affect paid media. Google Merchant Center’s product data specification makes the same point in the Google ecosystem: accurate and properly formatted product data helps match products to relevant queries and avoid disapprovals or display issues.

The shelf has become digital, searchable and auction-based. A weak product feed is a weak media plan. A product with poor reviews forces ads to work harder. A price mismatch kills conversion. Out-of-stock products waste demand. A bad image reduces click-through rate before the landing page has a chance.
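A basic feed audit can catch several of these problems before money is spent. The sketch below checks each item for a handful of attributes that product feeds commonly require and flags out-of-stock items. The attribute list is a simplified assumption modeled on common feed fields, and the `audit_feed` function is hypothetical; the authoritative list lives in each platform's product data specification.

```python
# Assumption: a simplified subset of commonly required feed attributes.
REQUIRED_FIELDS = ("id", "title", "description", "link",
                   "image_link", "availability", "price")


def audit_feed(items):
    """Return a dict mapping item ID (or row number) to a list of issues."""
    problems = {}
    for index, item in enumerate(items):
        issues = []
        for field in REQUIRED_FIELDS:
            value = item.get(field)
            if value is None or not str(value).strip():
                issues.append(f"missing {field}")
        if item.get("availability") == "out_of_stock":
            issues.append("out of stock: consider pausing ads")
        if issues:
            problems[item.get("id") or f"row-{index}"] = issues
    return problems


if __name__ == "__main__":
    feed = [
        {"id": "sku1", "title": "Trail Shoe", "description": "Light trail shoe",
         "link": "https://example.com/p/sku1",
         "image_link": "https://example.com/i/sku1.jpg",
         "availability": "in_stock", "price": "89.00 EUR"},
        {"id": "sku2", "title": "", "description": "Rain jacket",
         "link": "https://example.com/p/sku2",
         "image_link": "https://example.com/i/sku2.jpg",
         "availability": "out_of_stock", "price": "120.00 EUR"},
    ]
    print(audit_feed(feed))
```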

Commerce media also pulls brand and performance closer. Shoppers may compare products, read reviews and buy within minutes. Yet the brands they recognize still have an advantage. Performance spend near the shelf cannot repair years of underinvestment in trust. It can defend share, improve visibility and capture demand, but it rarely builds deep preference by itself.

Retail media is not just another paid channel. It is where media, merchandising, trade marketing, ecommerce operations and performance analytics collide. Companies that keep those teams separate will struggle to understand whether spend is creating growth or merely paying rent on the digital shelf.

Programmatic needs more discipline than ever

Programmatic advertising brought scale to digital media buying. It also brought opacity. A single impression can pass through agencies, DSPs, SSPs, exchanges, verification vendors, data providers, identity systems and publishers before it reaches a user. Each step can add cost, complexity and measurement risk.

The ANA Programmatic Media Supply Chain Transparency Study examined the open web programmatic ecosystem and urged marketers to think critically about spend, incentives and supply-chain value. ANA earlier said the ecosystem involved major waste, with the study’s first look pointing to as much as $20 billion in waste across open web programmatic media.

That does not mean programmatic is bad. It means programmatic needs serious governance. The laziest version of programmatic is buying cheap reach and hoping verification tools clean up the mess. The serious version is supply-path discipline, inventory quality, clear objectives, frequency control, creative fit and independent measurement.

OpenRTB standards from IAB Tech Lab provide the protocol language for real-time bidding, including support for connected TV and other formats. MRC standards and invalid traffic guidance give advertisers a framework for thinking about measurement quality and traffic that should not count as legitimate advertising exposure.

The performance marketer’s programmatic checklist should be sharper than “did it deliver impressions?” It should ask where impressions ran, which domains or apps carried spend, whether inventory was viewable, whether frequency was controlled, whether invalid traffic was filtered, whether the audience was real, whether the bid stream matched the plan and whether the campaign had measurable business impact.

Connected TV adds new potential and new risk. It brings sight, sound and motion to performance-minded advertisers, especially as streaming becomes more shoppable and measurable. Yet CTV measurement can be messy. Households are not individuals. QR codes and post-view attribution can exaggerate impact. Frequency across platforms can be hard to control. Premium inventory and long-tail inventory are not the same thing.

Programmatic should be judged by the job it is hired to do. If the job is reach, then incremental reach and frequency matter. If the job is demand creation, then brand search lift, direct traffic, geo tests or MMM may matter. If the job is retargeting, then incrementality and suppression rules matter. If the job is lead generation, then CRM quality matters.

The mistake is treating programmatic as a cheap conversion machine and then being surprised by low-quality outcomes. Good programmatic is not cheap by default. It is accountable by design.

Landing pages carry more of the media budget than teams admit

Media teams often talk about CPC, CPM, CPA and ROAS as if the ad platform is the whole game. The landing page quietly decides whether much of that spend survives.

A landing page is not just a destination. It is the moment where the promise made by the ad either becomes believable or collapses. Every paid click carries expectation. The landing page pays off that expectation or wastes the click.

Page speed matters because attention is fragile. Google’s Core Web Vitals work places user experience signals such as loading, visual stability and interaction responsiveness into a shared measurement framework. Interaction to Next Paint replaced First Input Delay as a Core Web Vital in March 2024, with INP measuring responsiveness across user interactions.

But speed is only the first layer. A fast confusing page is still a bad page. A good performance landing page aligns message, intent, proof and action. It should make clear what the product does, who it is for, why it is different, what risk is removed and what the visitor should do next.

For ecommerce, the page must handle price, delivery, returns, reviews, product fit, payment options, stock status and visual confidence. For B2B, it must handle problem framing, credibility, integration, security, implementation, stakeholder proof and a call to action that matches the buying stage. For local services, it must handle trust, proximity, availability, qualification and contact friction.

Landing-page testing should not obsess over tiny design changes while ignoring the offer. The biggest gains often come from sharper positioning, better proof, clearer pricing, stronger comparison pages, improved product photography, fewer form fields, better mobile layout or a CTA that matches intent. A “book demo” request may work for high-intent enterprise traffic but fail for early-stage visitors who need a guide, calculator or pricing explanation first.

The landing page also feeds the algorithm indirectly. Better conversion rates give bidding systems more conversion volume at the same budget. Higher post-click quality can lower acquisition cost without touching the campaign. Stronger pages improve SEO, email conversion and sales enablement as well.

Performance marketers should own landing-page feedback even if they do not own web development. The media buyer sees which promises get clicks. The analytics team sees where users drop. The sales team hears which claims matter. The CRO team sees friction. These inputs belong together.

A campaign with weak landing pages forces media to compensate. That leads to discounts, narrower retargeting, inflated claims and rising CPA. A strong landing page makes media buying look smarter because it turns more of the same attention into revenue.

SEO and paid media can no longer be separate planning rooms

SEO and paid media are often managed by different teams, budgets and agencies. That split is convenient for reporting and harmful for customers. Users do not experience search as “paid” and “organic.” They experience a results page, a set of answers, a brand impression and a decision path.

The paid team knows which queries convert. The SEO team knows which pages earn visibility and links. The content team knows which questions need depth. The analytics team knows which journeys lead to revenue. Keeping those insights separate creates waste.

The strongest search strategy treats paid and organic as one demand system.

Paid search can reveal commercial language quickly. If a new product term converts in Google Ads, SEO can build durable content around it. If a paid query has high CPC but poor conversion, SEO can investigate whether the user intent is informational rather than transactional. If organic rankings are strong for a term, paid can test whether incremental coverage still adds value. If AI Overviews reduce clicks on informational queries, paid and SEO can shift content toward decision support and entity strength.

Structured data matters because search systems need help understanding products, organizations, authors, reviews, FAQs, events and other entities. Google’s structured data documentation says Search uses structured data to understand page content and gather information about the web and the world. FAQ structured data has specific guidelines, even though FAQ rich results are limited in many cases.

Performance marketers should care about this because AI search, organic rankings and paid conversion all draw from the same trust reservoir. A brand with thin content, weak reviews, unclear authorship and inconsistent product data may still buy clicks, but the conversion path is weaker.

SEO also helps reduce paid dependency. A company that must pay for every visit has fragile economics. Organic content, direct demand, email lists, communities, partner traffic and brand search create resilience. Paid media then becomes a growth accelerator rather than a life-support system.

The joint planning room should include several shared assets: a search-intent map, a paid query report, organic ranking data, content gap analysis, landing-page performance, customer objections, SERP features, AI answer presence, competitor pages and revenue by query cluster.

This is not about making every page rank and convert at once. Some pages educate. Some compare. Some sell. Some support trust. But the architecture should be deliberate. A paid campaign for “best CRM for manufacturing” should not send traffic to a generic CRM homepage if SEO already shows users need industry proof, integrations and implementation detail.

The wall between SEO and paid media belongs to an older web. The next version of performance marketing will treat search as a connected system of visibility, answerability, trust and conversion.

Value-based bidding changes the math of growth

Many accounts still use conversion volume as their main goal. That can work when every conversion has similar value. Most businesses do not live in that world.

Some leads never answer the phone. Some customers return half their orders. Some subscriptions churn after one month. Some product categories carry thin margin. Some deals require heavy sales support. Some customers buy once with a discount code and never return. Others buy repeatedly for years.

If bidding systems are asked to maximize raw conversions, they will find cheap conversions. Cheap is not always profitable. Value-based bidding is the shift from buying actions to buying economic outcomes.

Google’s target ROAS bidding exists for this reason. It uses predicted conversion value at auction time and adjusts bids to help maximize return against the advertiser’s target. The strategy depends on conversion value data. Without useful values, the system has little commercial intelligence.

The hard part is not turning on a bid strategy. The hard part is deciding what value should mean.

Ecommerce can start with revenue, but revenue alone may be misleading. Margin, return rate, shipping cost, discount depth and repeat purchase probability may change the real value of a conversion. A brand selling both accessories and large appliances should not teach the algorithm that every euro of revenue has the same value if the margin profile is different.
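A minimal sketch of that adjustment, in Python. The field names, the formula and every number are illustrative assumptions, not figures from any ad platform: value is taken as revenue kept after expected returns, multiplied by gross margin, minus shipping.

```python
from dataclasses import dataclass


@dataclass
class Order:
    revenue: float        # order revenue in euros
    gross_margin: float   # hypothetical margin share, 0..1
    return_rate: float    # hypothetical expected share of revenue returned, 0..1
    shipping_cost: float  # shipping cost absorbed by the seller, in euros


def conversion_value(order: Order) -> float:
    """Margin-adjusted value to report instead of raw revenue (assumed formula)."""
    kept_revenue = order.revenue * (1 - order.return_rate)
    return round(kept_revenue * order.gross_margin - order.shipping_cost, 2)


# Made-up product profiles: a high-margin accessory and a low-margin appliance.
accessory = Order(revenue=100.0, gross_margin=0.60, return_rate=0.10, shipping_cost=5.0)
appliance = Order(revenue=1000.0, gross_margin=0.15, return_rate=0.05, shipping_cost=40.0)

for name, order in (("accessory", accessory), ("appliance", appliance)):
    value = conversion_value(order)
    print(f"{name}: value {value:.2f} EUR, {value / order.revenue:.2f} per euro of revenue")
```

Under these made-up inputs, a euro of accessory revenue is worth several times a euro of appliance revenue, which is the distinction a revenue-only value signal hides.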

Lead generation needs even more care. A form submission might be worth €20, €200 or €2,000 depending on qualification and close rate. If CRM stages are imported back into ad platforms, the bidding system can learn from deeper outcomes. For example: raw lead, marketing-qualified lead, sales-qualified lead, opportunity, closed-won deal. Each stage can carry a different value.
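One way to assign those stage values is expected value: the chance that a lead at a given stage eventually closes, multiplied by the margin of a typical deal. The sketch below assumes hypothetical close rates and a hypothetical €2,000 deal margin; real numbers would come from the CRM.

```python
# Hypothetical probability that a lead at each stage ends as closed-won.
STAGE_CLOSE_RATE = {
    "raw_lead": 0.01,
    "marketing_qualified": 0.03,
    "sales_qualified": 0.10,
    "opportunity": 0.30,
    "closed_won": 1.00,
}


def stage_value(stage: str, expected_deal_margin: float) -> float:
    """Expected value in euros of a lead that has reached the given stage."""
    return round(STAGE_CLOSE_RATE[stage] * expected_deal_margin, 2)


# Assumed average margin per closed deal, in euros.
DEAL_MARGIN = 2000.0
stage_values = {stage: stage_value(stage, DEAL_MARGIN) for stage in STAGE_CLOSE_RATE}

for stage, value in stage_values.items():
    print(f"{stage}: {value:.2f} EUR")
```

With these assumptions the same form submission is worth €20 as a raw lead, €200 once sales-qualified and €2,000 when closed. Those are the values that would be sent back to the ad platform as each stage is reached.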

Subscription businesses should consider payback period and retention. A campaign that acquires users cheaply but brings high churn may look strong for 30 days and weak after six months. Value signals should move closer to lifetime economics when volume allows.

Value-based bidding also changes budget conversations. The question becomes less “What CPA can we get?” and more “What acquisition cost is acceptable for this customer type, product line or payback window?” That moves performance marketing closer to finance.

The risk is fake precision. Many companies do not know true LTV by channel, segment or product. That should not stop progress. A rough value model based on real business logic is better than treating every conversion as equal. The model can improve over time.

Performance teams should document value assumptions. Which margin is used? Which close rate? Which retention period? Are refunds included? Are repeat purchases included? Are offline sales matched? Are values capped to avoid overbidding on rare outliers?

A value-based account is harder to manage, but it is more honest. It teaches platforms to chase what the business actually wants, not what the tag happens to count.

Privacy, consent and regulation are now growth constraints

Privacy is not a legal footnote. It is now part of performance marketing infrastructure.

EU law has shaped this reality. The GDPR sets conditions for personal data processing. The ePrivacy Directive addresses confidentiality of communications and rules around tracking technologies. The European Data Protection Board’s consent guidelines explain that valid consent under GDPR must meet specific standards, including being freely given, specific, informed and unambiguous.

The Digital Services Act adds platform-level advertising transparency and restrictions. The European Commission says the DSA bans targeted advertisements to children and prohibits ads based on sensitive data categories such as religion, race or sexual orientation.

For performance teams, the practical impact is direct. Consent banners affect tag firing. Consent rates affect modeled conversions. Platform rules affect targeting. Ad repositories affect transparency. Sensitive-category restrictions affect audience strategy. Documentation affects audit risk. Poor consent design can damage trust and data quality at the same time.

The lazy approach is to treat compliance as something legal handles. The better approach brings legal, analytics, marketing and product into one room. The team should know which tags fire before consent, which vendors receive data, which events are modeled, which data is sent server-side, how consent withdrawal works and how long data is stored.

A consent banner should not be designed only to maximize opt-ins through confusion. Dark patterns may raise short-term consent rates while creating legal and trust risk. Users should understand what they are agreeing to. A cleaner consent experience can reduce volume but improve the reliability of the users who opt in.

Privacy also creates product and brand opportunities. A company that explains data use plainly can stand out in categories where users feel tracked and manipulated. A B2B brand that handles data governance well can use that competence as proof of maturity. A retail brand that lets customers manage preferences cleanly can improve lifecycle marketing.

Performance marketing has always worked with constraints: budgets, competition, inventory, conversion rates. Privacy is now one of those constraints. The winners will not be the brands that evade it. The winners will build performance systems that work inside it.

The performance marketer’s role has expanded

The old performance marketer was often judged by account metrics: CPA, ROAS, CPC, CTR, conversion rate, budget pacing. Those metrics still matter. They no longer describe the full role.

The modern performance marketer needs fluency across media, analytics, creative, customer economics, experimentation, privacy and technology. Not mastery of every discipline, but enough understanding to ask the right questions and prevent bad handoffs.

The role now includes diagnosing signal quality. If purchases drop, is demand down, tracking broken, consent lower, checkout slower, feed disapproved, inventory out, CPC higher, creative tired or attribution delayed? The answer often sits outside the campaign interface.

It includes creative judgment. Which angle is worth testing? Which ad is getting attention from the wrong audience? Which creator asset feels credible? Which proof is missing? Which claim will legal reject? Which format fits the platform without losing the brand?

It includes commercial judgment. Which products deserve budget? Which customers are profitable? Which markets have supply constraints? Which channel brings low-quality leads? Which campaign produces revenue but damages margin?

It includes experimentation design. What question are we testing? What is the hypothesis? What will change? How long will the test run? What sample is needed? What result will change a decision?

It includes communication. Performance marketers need to explain uncertainty without sounding evasive. They need to tell a CEO that platform ROAS is not profit. They need to tell sales that lead volume fell because quality filters improved. They need to tell creative teams that a polished ad failed because it answered no real objection.

The performance marketer has become a systems operator. The system includes platforms, data, people, pages, offers, budgets and buyers. A weak operator reacts to dashboard changes. A strong operator understands the machinery behind the dashboard.

This also changes hiring. The best candidate is not always the person who knows every platform setting. Platform interfaces change too quickly. Better traits include analytical patience, clear writing, commercial curiosity, technical comfort, creative taste and enough skepticism to question easy wins.

Agencies need the same shift. A paid media agency that only manages bids and budgets is becoming easier to replace. An agency that can improve measurement, creative testing, landing pages, feed quality, CRM feedback and growth economics is harder to commoditize.

Performance marketing has grown up. The role is less narrow, more demanding and more valuable when done well.

Budget planning needs marginal thinking

Performance budgets are often planned around averages. Average CPA. Average ROAS. Average conversion rate. Average order value. Averages are useful for reporting, but dangerous for scaling.

The next euro does not perform like the last euro. As spend rises, campaigns often move into more expensive auctions, weaker audiences or lower-intent inventory. Frequency increases. Creative fatigues. Incremental return declines. This is the basic curve every growth team eventually meets.

Marginal thinking asks what the next unit of spend will do, not what past spend averaged.

This matters because platform dashboards can hide diminishing returns. A campaign with a blended ROAS of 4.0 may have early spend returning 8.0 and later spend returning 1.5. Cutting or scaling based on the average can mislead. Budget decisions should consider response curves, incrementality, impression share, saturation, frequency, audience size and creative freshness.
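The arithmetic behind that example can be checked with a short Python sketch. The spend tiers are assumptions chosen to reproduce the 8.0, 1.5 and 4.0 figures above: the first €5,000 returns €40,000 and the next €8,000 returns €12,000.

```python
def marginal_roas(points):
    """Return ROAS for each spend step.

    points: list of (cumulative_spend, cumulative_revenue), sorted by spend.
    """
    results = []
    prev_spend, prev_revenue = 0.0, 0.0
    for spend, revenue in points:
        step_spend = spend - prev_spend
        if step_spend <= 0:
            raise ValueError("cumulative spend must strictly increase")
        results.append((revenue - prev_revenue) / step_spend)
        prev_spend, prev_revenue = spend, revenue
    return results


# Hypothetical cumulative spend and revenue at two budget levels.
points = [(5000.0, 40000.0), (13000.0, 52000.0)]

blended = points[-1][1] / points[-1][0]   # total revenue / total spend
marginal = marginal_roas(points)          # ROAS of each additional spend step

print(f"blended ROAS: {blended:.1f}")
print("marginal ROAS by step:", [f"{value:.1f}" for value in marginal])
```

The dashboard shows 4.0, but the last €8,000 returned only 1.5. Whether that step is worth keeping depends on margin, not on the blended figure.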

Marketing mix modeling helps at the planning level because it can estimate channel contribution, diminishing returns and budget scenarios across channels. The documentation for Meridian, Google’s open-source MMM framework, describes MMM as a statistical method using aggregated data across channels and non-marketing factors to guide budget planning and media performance decisions, without using cookie or user-level information.

Smaller businesses may not have enough data for advanced MMM. They can still use marginal thinking. Increase budgets in controlled steps. Watch new-customer CAC separately from blended CAC. Track contribution margin, not only revenue. Compare holdout regions or periods where possible. Measure branded search lift after awareness pushes. Ask whether retargeting spend is capped or creeping upward.
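A holdout comparison can be read with very little code. The sketch below is a simplified illustration with invented numbers: it compares an exposed group with a holdout group that saw no ads and ignores statistical significance, which a real test would need to check.

```python
def holdout_readout(exposed_users, exposed_conversions,
                    holdout_users, holdout_conversions, spend):
    """Compare an exposed group with a no-ads holdout group (assumed test design)."""
    exposed_rate = exposed_conversions / exposed_users
    holdout_rate = holdout_conversions / holdout_users
    # Conversions the exposed group would likely have produced without ads.
    baseline = holdout_rate * exposed_users
    incremental = exposed_conversions - baseline
    return {
        "lift": (exposed_rate - holdout_rate) / holdout_rate if holdout_rate > 0 else None,
        "incremental_conversions": incremental,
        "reported_cpa": spend / exposed_conversions,
        "incremental_cpa": spend / incremental if incremental > 0 else None,
    }


# Hypothetical test: 600 conversions among exposed users, 500 in an equal-sized holdout.
readout = holdout_readout(
    exposed_users=100_000, exposed_conversions=600,
    holdout_users=100_000, holdout_conversions=500,
    spend=6_000.0,
)

for key, value in readout.items():
    print(f"{key}: {value:.2f}")
```

In this invented case the reported CPA is about €10, but only around 100 of the 600 conversions are incremental, so the cost per incremental conversion is about €60.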

Budget planning also needs payback discipline. A company with strong cash reserves and high retention can afford longer payback than a company under cash pressure. A B2B SaaS company may accept high CAC if ACV and retention support it. A low-margin ecommerce retailer may need faster payback. Performance marketing cannot answer this alone; finance must be part of the model.
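Payback can be estimated with a simple loop. This is a rough sketch under stated assumptions: a fixed monthly contribution margin per active customer, a constant monthly retention rate, and hypothetical numbers throughout.

```python
def payback_months(cac, monthly_margin, monthly_retention, max_months=60):
    """Return the first month in which cumulative margin covers CAC, or None."""
    cumulative = 0.0
    surviving = 1.0  # share of the acquired cohort still active
    for month in range(1, max_months + 1):
        cumulative += monthly_margin * surviving
        if cumulative >= cac:
            return month
        surviving *= monthly_retention
    return None  # CAC is not recovered within max_months


# Hypothetical: 300 EUR CAC, 50 EUR monthly margin, three retention scenarios.
no_churn = payback_months(300.0, 50.0, 1.0)
some_churn = payback_months(300.0, 50.0, 0.9)
heavy_churn = payback_months(300.0, 50.0, 0.8)

print("no churn:", no_churn)
print("10% monthly churn:", some_churn)
print("20% monthly churn:", heavy_churn)
```

The same CAC pays back in 6 months with no churn, in 9 months at 10% monthly churn, and never at 20% monthly churn, because the cohort's total margin tops out below the acquisition cost.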

The wrong budget question is “How much can we spend?” The better question is “Where does the next euro create the best risk-adjusted return?” Sometimes the answer is paid search. Sometimes it is creative production, landing-page work, feed cleanup, lifecycle email, sales enablement or SEO content. Media is not always the bottleneck.

This is a hard lesson for performance teams because it means not every problem is solved by more campaign management. Sometimes the best media decision is to stop spending until the offer improves.

B2B performance marketing has its own physics

B2B performance marketing is often damaged by B2C assumptions. A software buyer does not behave like someone buying shoes. A procurement process is not a checkout. A lead is not revenue. A demo request is not a deal. A high CTR can mean curiosity from the wrong people.

B2B has longer sales cycles, buying committees, internal politics, budget windows and risk aversion. The person clicking the ad may not be the final decision maker. The person researching may not fill out a form. The person who fills out the form may not have authority. Attribution may credit the last touch while months of category education did the real work.

The main B2B performance mistake is treating lead volume as growth.

A better B2B model connects campaigns to pipeline quality. That requires CRM hygiene. UTM parameters, click IDs, lead source, campaign source, lifecycle stage, opportunity value, close date, lost reason and sales feedback must be reliable enough to use. Without that, the paid team will keep buying the leads that look cheap.
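A small hygiene check can catch missing tracking fields before a lead reaches the CRM. The sketch below uses Python's standard `urllib.parse`. The required fields, the example URL and the helper names are assumptions for illustration; `gclid` is the Google Ads click ID parameter.

```python
from urllib.parse import parse_qs, urlparse

# Parameters this hypothetical CRM import expects on every paid landing URL.
TRACKING_KEYS = ("utm_source", "utm_medium", "utm_campaign", "gclid")
REQUIRED_KEYS = ("utm_source", "utm_medium", "utm_campaign")


def extract_tracking(url: str) -> dict:
    """Return the tracking parameters present in the URL's query string."""
    query = parse_qs(urlparse(url).query)  # blank values are dropped by default
    return {key: query[key][0] for key in TRACKING_KEYS if key in query}


def missing_fields(url: str) -> list:
    """List required tracking parameters that are absent or empty."""
    found = extract_tracking(url)
    return [key for key in REQUIRED_KEYS if key not in found]


landing_url = (
    "https://example.com/demo"
    "?utm_source=linkedin&utm_medium=paid_social&utm_campaign=&gclid=abc123"
)

print(extract_tracking(landing_url))
print("missing:", missing_fields(landing_url))
```

Here the campaign parameter is present but empty, so the check flags `utm_campaign` as missing. A lead stored from that URL could not be tied back to a campaign later.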

B2B performance also needs content that matches buying stages. Early-stage buyers may need problem education, benchmark reports, calculators or comparison frameworks. Mid-stage buyers need use cases, integration detail, implementation proof and stakeholder-specific arguments. Late-stage buyers need pricing clarity, security documentation, case studies, migration support and ROI evidence.

Search works well in B2B when intent is clear, but volume may be limited and CPCs high. Paid social can create demand, but the conversion path is rarely immediate. LinkedIn can reach professional segments, but costs require careful qualification. Programmatic and content syndication can produce volume while creating serious quality problems if incentives reward form fills rather than buying intent.

Account-based marketing can help when the target market is defined. But ABM should not become a decorative dashboard of named accounts receiving impressions. It needs a real account list, clear buying roles, tailored proof, sales coordination and measurement that tracks account movement, not only clicks.

B2B performance marketers should also respect dark social and offline influence. A buyer may see an ad, ask peers in a Slack community, read reviews, listen to a podcast, attend a webinar, search the brand, visit the site from direct traffic and later convert through branded search. Last-click attribution will flatten that path into nonsense.

The right B2B performance question is not “Which ad got the lead?” It is “Which mix of activity increased qualified pipeline among the accounts we can actually win?” That question is harder. It is also closer to the truth.

AI should be used as a controller, not as a conscience

AI now sits throughout performance marketing. It writes draft ads, builds audiences, predicts bids, generates images, summarizes search results, identifies patterns, recommends budgets, scores leads and supports customer interactions. Refusing to use it is not serious. Trusting it without oversight is worse.

AI is useful for speed, pattern recognition and variation. It can draft creative angles, cluster search terms, summarize customer reviews, inspect landing-page gaps, generate test ideas, help write product feed improvements and scan large reports. It can help small teams do work that previously required more people.

But AI does not know the business truth unless humans supply it. It does not know whether a claim is legally safe. It does not know whether a customer segment is profitable. It does not know whether a conversion is low quality. It does not know whether a creative idea fits the brand’s reputation. It does not know when a campaign is exploiting measurement bias.

AI should control repetitive complexity. Humans should control judgment, ethics and commercial meaning.

The danger is automation theater: teams using AI to produce more assets, more reports and more tests without clearer decisions. More output can bury the signal. A performance team can suddenly generate 200 ad variants and still fail to learn why customers buy.

The better use of AI is structured. Give it a defined job. Summarize support tickets into objections. Group search terms by intent. Find contradictions between ad promises and landing-page copy. Draft five hooks for one audience belief. Compare reviews for competing products. Flag anomalies in conversion tracking. Build first drafts, then let humans sharpen.

AI also raises disclosure and quality questions for content. Google’s guidance says using generative AI content is not inherently against Search policies, but using automation to generate many pages without adding user value may violate spam policies.

That matters for GEO and SEO. AI-generated pages that repeat common knowledge will not build authority. Strong content still needs original analysis, source-backed claims, subject expertise, examples, structure and editorial judgment. AI can assist production, but it cannot fake experience for long.

In paid media, AI-generated creative needs brand review. Cheap synthetic ads may produce novelty clicks and weak customers. Over-automated personalization can feel invasive. Performance gains that rely on misleading claims create future costs in refunds, complaints and trust loss.

The best teams will not ask, “Can AI do this?” They will ask, “Should AI do this, and what human review is needed before it affects customers or spend?”

A practical operating model for the next decade

A 21st-century performance marketing program needs an operating model, not just a channel plan. The model should define how the business chooses goals, collects signals, creates assets, allocates spend, measures incrementality and learns.

The work starts with economics. Before campaign setup, the team should know target customers, margin, payback window, sales cycle, retention, product priority and capacity constraints. Media should not sell what operations cannot deliver or what finance cannot support.

Then comes the measurement stack. Events must be mapped. Consent must be handled. CRM stages must be connected. Product feeds must be clean. Revenue and value must be passed where appropriate. Dashboards must separate platform-reported conversions from business outcomes.

Creative should run as a learning pipeline. The team needs themes, angles, formats, proof assets, creator content, landing-page variants and a testing calendar. Each creative test should answer a business question, not merely feed the platform more assets.

Budgeting should use both short-term and long-term views. Daily pacing protects spend. Weekly analysis spots account movement. Monthly reviews examine creative, landing pages, query trends and CRM quality. Quarterly reviews examine incrementality, channel mix, payback and strategic bets.

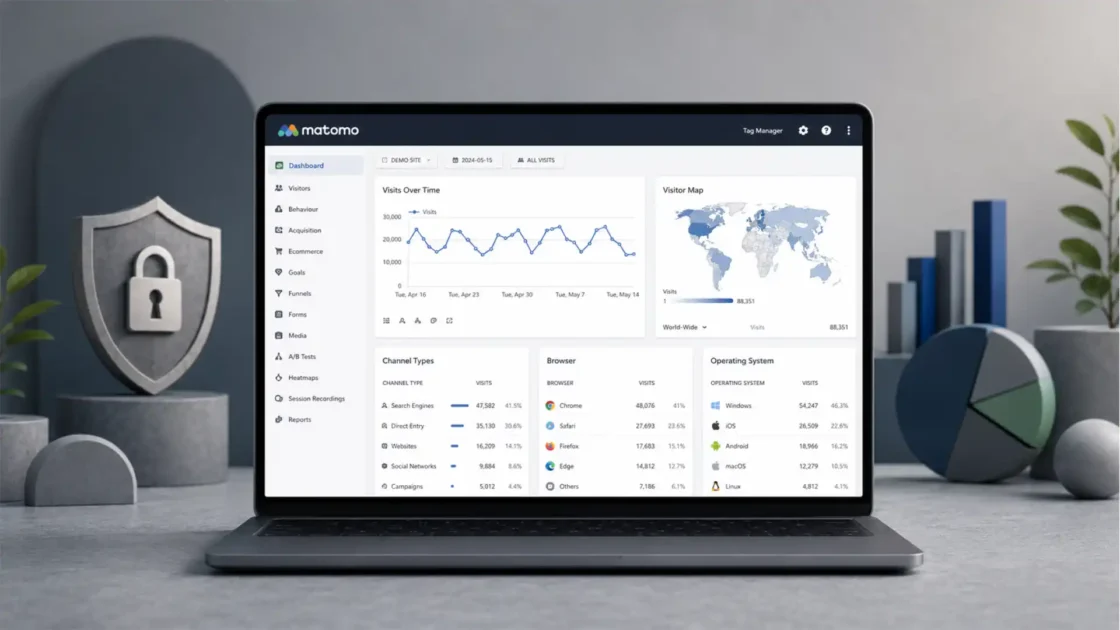

A compact operating model for performance teams

| Layer | Main job | Failure signal |
|---|---|---|
| Economics | Define which customers, products and margins deserve spend | Strong ROAS with weak profit |
| Signal quality | Feed platforms clean, consented and useful conversion data | Rising spend with declining lead or customer quality |
| Creative system | Test customer beliefs, proof and offers across formats | High asset volume with little learning |
| Landing experience | Turn attention into action with speed, clarity and trust | Good CTR with weak conversion rate |
| Measurement | Separate reported credit from incremental impact | Platform wins that finance cannot see |
| Governance | Control privacy, brand safety, waste and decision rights | Growth that creates legal, trust or margin risk |

This model keeps performance marketing connected to business reality. It also prevents the most common failure: treating channel execution as the whole discipline.

A strong operating rhythm needs decision rules. When do we scale a campaign? When do we pause it? How much data is enough? Which metric wins when platform ROAS and contribution margin disagree? How often do we refresh creative? Which tests require holdouts? Which changes are too risky during peak season?

The answers vary by business, but they should be written down. Written rules reduce emotional budget swings. They also help teams learn faster because decisions become comparable.

Performance marketing will keep changing. Interfaces will change. Attribution windows will change. AI tools will change. Privacy rules will change. The operating model protects the team from chasing every platform update without a strategy.

The next era belongs to marketers who can prove, not just report

Digital performance marketing has outgrown the dashboard because the dashboard no longer contains enough truth.

The next era will reward teams that can prove value, not merely report activity. They will understand automation without worshipping it. They will use first-party data without abusing trust. They will build creative systems that learn. They will connect paid media with SEO, content, CRM, product feeds and landing pages. They will use incrementality and MMM when platform attribution is not enough. They will know that a cheaper conversion is not always a better customer.

The discipline is becoming less comfortable and more important. Easy tracking made performance marketing look cleaner than it was. Privacy changes, AI systems and fragmented journeys have removed some of that comfort. What remains is a more honest version of the work.

Performance marketing in the 21st century is not the art of buying clicks cheaply. It is the discipline of turning measurable demand into profitable growth under real-world constraints. That requires technical skill, commercial judgment and editorial taste. It requires patience with data and impatience with vanity metrics.

The marketer who only reads the platform report will be replaceable. The marketer who understands what the platform report hides will be valuable.

Questions serious marketers ask about digital performance marketing

Digital performance marketing is the discipline of using measurable digital channels to acquire, convert and retain customers while connecting spend to business outcomes. In the 21st century, it includes paid search, paid social, retail media, programmatic, affiliate, SEO support, landing-page testing, CRM data, consent management, incrementality and AI-assisted campaign systems.

It has become harder because tracking is less complete, platforms are more automated, privacy rules are stricter, journeys are fragmented and attribution is less reliable. Marketers now need better signal quality, stronger creative, cleaner data and more advanced measurement.

ROAS is useful, but it is incomplete. It does not always include margin, returns, customer quality, incrementality or long-term value. A campaign can show strong ROAS and still produce weak profit if it sells low-margin products or receives too much attribution credit.

The biggest mistake is optimizing for the wrong conversion. If a platform is trained on low-quality leads, shallow events or low-margin sales, it will buy more of those outcomes. The conversion event must match business value.

AI should be used for pattern recognition, drafting, clustering, reporting support, creative variation and campaign automation. Human teams should still control strategy, claims, ethics, value definitions, testing logic and budget decisions.

Automation reduces the value of manual button-clicking, but it raises the value of strategic media operators. Media buyers now need to manage inputs, measurement quality, creative systems, value signals and incrementality.

First-party data is information collected directly through customer relationships, such as purchases, CRM stages, preferences, subscriptions and consent records. It matters because it lets businesses define customer value more accurately and reduce dependence on third-party tracking.

Last-click attribution gives all credit to the final click before conversion. It often overcredits branded search, retargeting and bottom-funnel activity while undercrediting demand creation, content, video, creators and upper-funnel campaigns.

Incrementality measures what happened because of marketing that would not have happened otherwise. It asks whether ads caused new conversions, not merely whether ads were touched before conversions.

Marketing mix modeling is a statistical method that uses aggregated data to estimate how channels, spend, seasonality and other factors affect sales or other KPIs. It is useful when user-level tracking is limited or when leaders need budget planning across channels.

Platforms now use broader targeting and automated delivery, so creative does more of the qualifying work. The ad’s message, proof, format and offer influence who responds and what the algorithm learns.

AI search changes how users discover answers, compare brands and reach websites. Performance teams need stronger content, clearer entities, structured data, authoritative explanations and landing pages that match more informed visitors.

SEO supports performance by building durable visibility, trust, content depth and search demand that paid media can capture. Paid search and SEO should share query data, landing-page insights and intent research.

Consent management affects which tags fire, which data can be collected, how conversions are modeled and whether advertising data can be used lawfully. Poor consent setup weakens both compliance and measurement.

Value-based bidding tells ad platforms to pursue conversion value rather than raw conversion count. It works best when advertisers pass accurate revenue, margin, lead quality or predicted lifetime value signals.

B2B performance should be measured through qualified pipeline, opportunity value, sales acceptance, close rate, account engagement and revenue, not just lead volume. CRM integration is critical.

Retail media is a performance channel, but it is also a commerce, merchandising and trade-marketing environment. Advertisers must measure sales impact, incrementality, organic cannibalization, margin and product-page quality.

Advertisers can reduce programmatic waste through supply-path discipline, domain and app transparency, invalid-traffic filtering, viewability standards, frequency control, inventory quality rules and independent measurement.

A good performance landing page matches the ad promise, loads quickly, explains the offer clearly, shows proof, handles objections, reduces risk and gives the visitor a clear next action.

The next decade will be defined by signal quality, AI-assisted automation, privacy-safe measurement, creative learning systems, first-party data, incrementality and closer alignment between marketing spend and profit.

Author:

Jan Bielik

CEO & Founder of Webiano Digital & Marketing Agency

This article is an original analysis supported by the sources cited below.

Internet Advertising Revenue Report Full Year 2025

IAB’s annual benchmark report on U.S. digital advertising revenue, channel growth and market structure.

Gartner 2025 CMO Spend Survey reveals marketing budgets have flatlined at 7.7% of overall company revenue

Gartner’s 2025 CMO budget release, used for context on marketing budget pressure.

Gartner survey finds digital channels account for 61.1% of total marketing spend

Gartner’s release on digital channel budget share across marketing spend.

About Performance Max campaigns

Google’s overview of Performance Max as a goal-based campaign type across Google inventory.

We’re upgrading Dynamic Search Ads to AI Max

Google’s 2026 announcement about AI Max for Search campaigns and Dynamic Search Ads migration.

About Smart Bidding

Google Ads documentation explaining Smart Bidding and auction-time bidding.

About Target ROAS bidding

Google Ads documentation on target ROAS bidding and conversion value.

About enhanced conversions

Google Ads documentation on enhanced conversions and hashed first-party conversion data.

About consent mode

Google Ads documentation explaining how Consent Mode communicates user consent signals.

Next steps for Privacy Sandbox and tracking protections in Chrome

Google’s 2025 update on Chrome’s approach to third-party cookies and tracking protections.

Third-party cookies

Privacy Sandbox guidance on third-party cookies, testing and migration options.

User privacy and data use

Apple Developer documentation on App Tracking Transparency and tracking permission.

Privacy control

Apple’s user-facing privacy page describing app tracking permission controls.

The Digital Services Act

European Commission information on the Digital Services Act and platform obligations.

Digital Services Act keeping us safe online

European Commission summary of DSA protections, ad transparency and targeting restrictions.

Guidelines 05/2020 on consent under Regulation 2016/679

European Data Protection Board guidance on valid consent under GDPR.

ePrivacy Directive

European Data Protection Supervisor overview of the ePrivacy Directive and tracking rules.

State of Data 2025

IAB report on AI, signal loss, data strategy and media campaign execution.

2025 Creator Economy Ad Spend and Strategy Report

IAB report on creator advertising spend, strategy and measurement challenges.

IAB Europe’s Commerce Media Measurement Standards V2

IAB Europe’s updated standards for commerce and retail media measurement.

Product data specification

Google Merchant Center documentation on product feed data requirements.

Introducing INP to Core Web Vitals

Google Search Central announcement and updates on Interaction to Next Paint becoming a Core Web Vital.

Interaction to Next Paint

web.dev documentation explaining INP and page responsiveness measurement.

Creating helpful, reliable, people-first content

Google Search Central guidance on useful, reliable content for people.

General Guidelines

Google Search Quality Rater Guidelines, including E-E-A-T and trust concepts.

Introduction to structured data markup in Google Search

Google Search Central documentation on structured data and how Search understands page entities.

FAQ structured data

Google Search Central documentation for FAQPage structured data.

Google Search’s guidance on using generative AI content on your website

Google Search Central guidance on generative AI content and spam policy risk.

Meridian

Google for Developers page for Meridian, Google’s open-source marketing mix modeling framework.

About Meridian

Google Meridian documentation explaining MMM, aggregated data and privacy-safe measurement.

Meridian is now available to everyone

Google’s announcement that Meridian became available to marketers and data scientists.

Robyn

Meta Marketing Science documentation for Robyn, an open-source marketing mix modeling package.

About Conversion Lift

Google Ads documentation on Conversion Lift as an incrementality measurement tool.

About Conversion Lift

Meta Business Help documentation on Conversion Lift and incremental ad impact.

About Meta Advantage+

Meta Business Help documentation describing Meta Advantage+ automation products.

About Advantage+ sales campaigns

Meta Business Help documentation on Advantage+ sales campaigns.

About Smart Performance Campaign

TikTok Ads documentation on Smart Performance Campaign automation.

ANA Programmatic Media Supply Chain Transparency Study

ANA study on transparency, waste and supply-chain issues in open web programmatic media.

Minimum Standards for Media Rating Research

Media Rating Council standards and guidelines, including invalid traffic and measurement guidance.

OpenRTB

IAB Tech Lab standards page for OpenRTB and real-time bidding specifications.