The Institute of Science Tokyo has moved laboratory automation from a technical ambition into a working medical research site. Its Robotics Innovation Center at the Yushima Campus is built around AI-equipped robots, including the humanoid Maholo LabDroid, and current reporting says the facility operates with 10 robots and no human staff inside the active experimental workspace. Science Tokyo’s own materials say the center was established on October 1, 2025, the robotic experimentation facility began full operation on April 1, 2026, and the opening ceremony was scheduled for April 15, 2026. The long-range ambition now attached to the project is striking: a possible expansion toward about 2,000 robots by 2040 and a research model in which robots carry out more of the scientific process, from planned experimental execution to AI-supported verification.

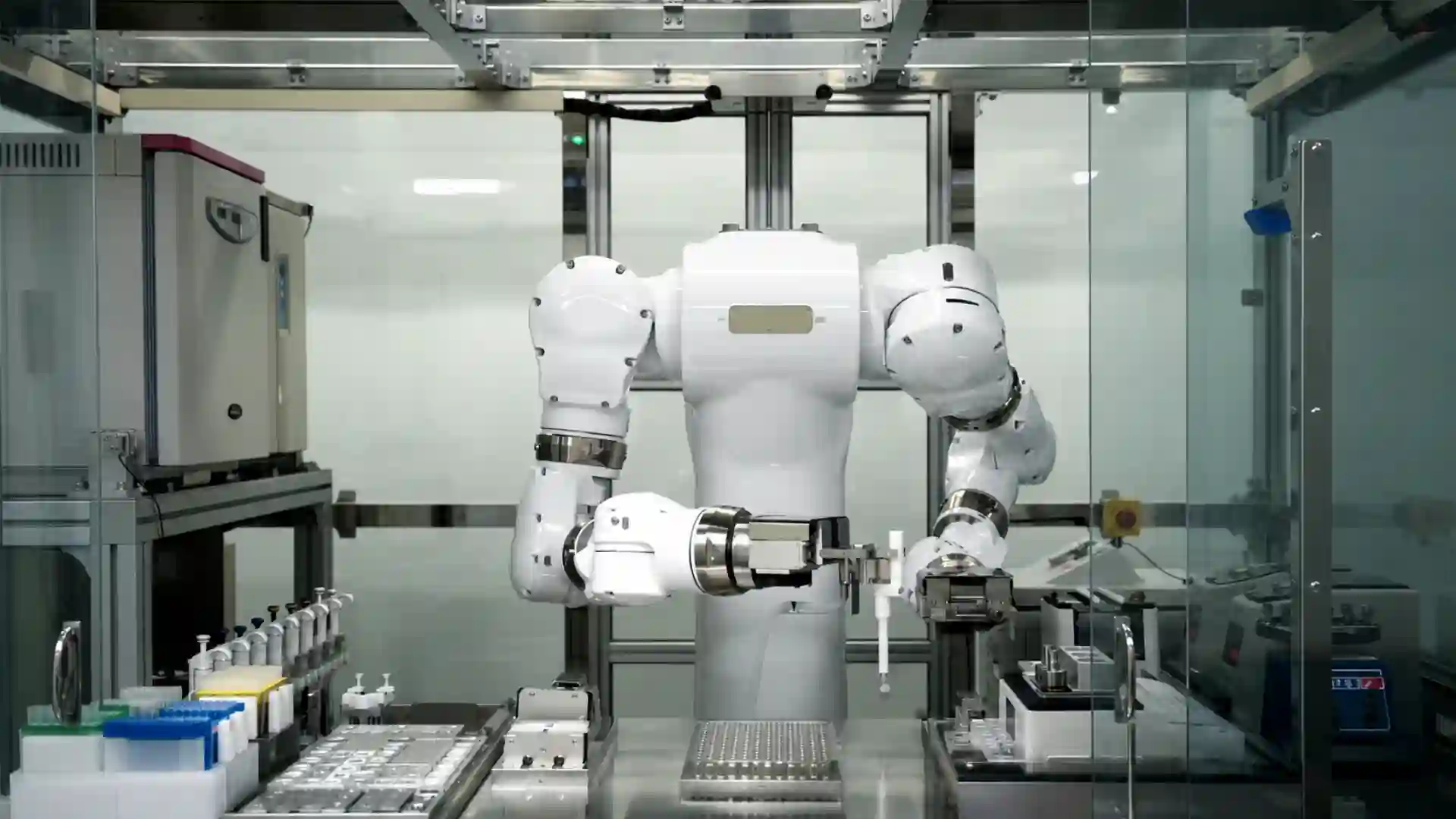

Tokyo’s lab is not a robot stunt

Tokyo’s unmanned medical laboratory has drawn attention because of the image: a humanoid robot, surrounded by machines, handling samples inside a controlled biomedical workspace. That image is powerful, but it is not the real story. The real story is institutional. A major Japanese science and medical university has created a dedicated robotic research center where automated systems are not side equipment in one professor’s lab but shared infrastructure for life-science experimentation.

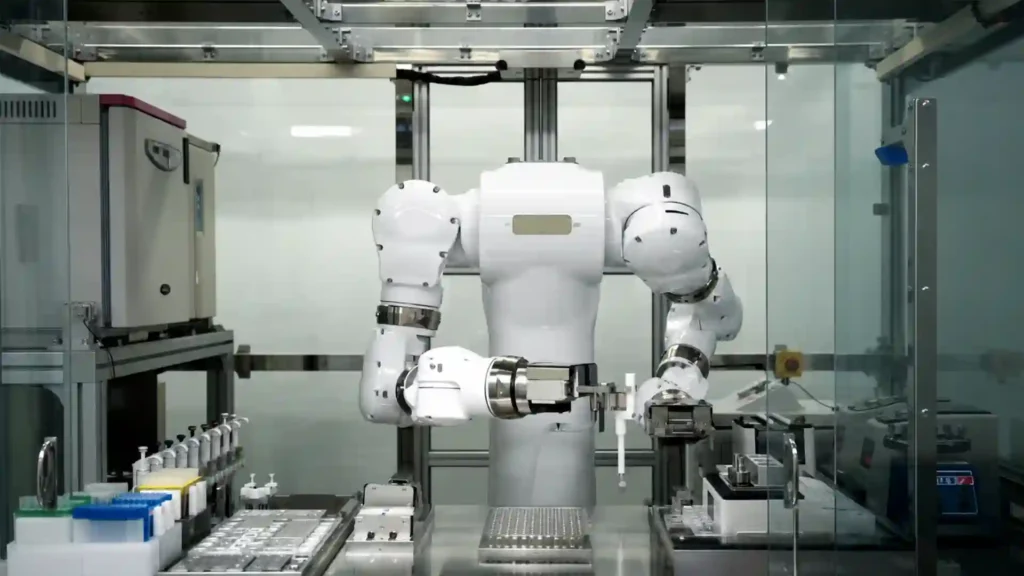

That difference matters. Biomedical research has used automation for decades. Liquid-handling robots, plate readers, imaging systems, sequencers, incubators, and automated storage systems are already familiar in large labs. The Tokyo project is different because it treats automation as a whole research environment. The goal is not to automate one operation. The goal is to build a place where protocols, instruments, robots, software, data records, and human oversight fit into one scientific operating system.

Science Tokyo describes the Robotics Innovation Center as a facility on a dedicated floor of the M&D Tower at its Yushima Campus. The center is designed for robotic life-science experimentation and includes Maholo LabDroid, automated liquid-handling systems, incubators, analytical instruments, and related equipment for protocols in areas such as cell culture, organoids, proteomics, and next-generation sequencing.

The distinction between “a robot in a lab” and “a robotic laboratory” is central. A single robot can be a demonstration. A robotic laboratory changes the way experiments are planned, scheduled, executed, logged, repeated, and reviewed. In that model, the robot is not the whole breakthrough. The breakthrough is the attempt to make wet-lab science programmable, auditable, and available as shared research capacity.

The facility’s public language also avoids the most careless version of the story. Science Tokyo does not say that robots have replaced scientists. It says robots and researchers collaborate on experiments, and it frames the center as a step toward AI-equipped robotic experimentation. The center is split between a Robotic Laboratory, which uses current robotic systems to perform life-science workflows, and a Next-Generation Robotic Laboratory, which develops the autonomous robotics and AI methods that current systems cannot yet provide.

That split is revealing. The present-day facility can already run defined protocols. The next-generation side is aimed at the harder questions: how robots should monitor a whole lab, how they should adapt to unexpected sample states, how AI should schedule work, how protocols should be converted from human instructions into executable robot code, and how experimental results should feed the next round of decisions.

Calling the site a “robot-only medical lab” captures the public-facing novelty. It also needs care. The lab may be physically unmanned during experimental operations, but it is not intellectually unmanned. Humans still define research questions, select models, approve protocols, review data, maintain equipment, handle governance, and decide whether a result has medical meaning. The human role moves away from repetitive bench execution and toward design, supervision, judgment, and accountability.

That is why the Tokyo project matters beyond its visual impact. It is a test of whether medical research can shift from a craft model, where much of the work depends on trained hands and local habits, toward an infrastructure model, where experiments become machine-executable workflows with cleaner records and more consistent execution.

Maholo LabDroid brings humanoid robotics into the wet lab

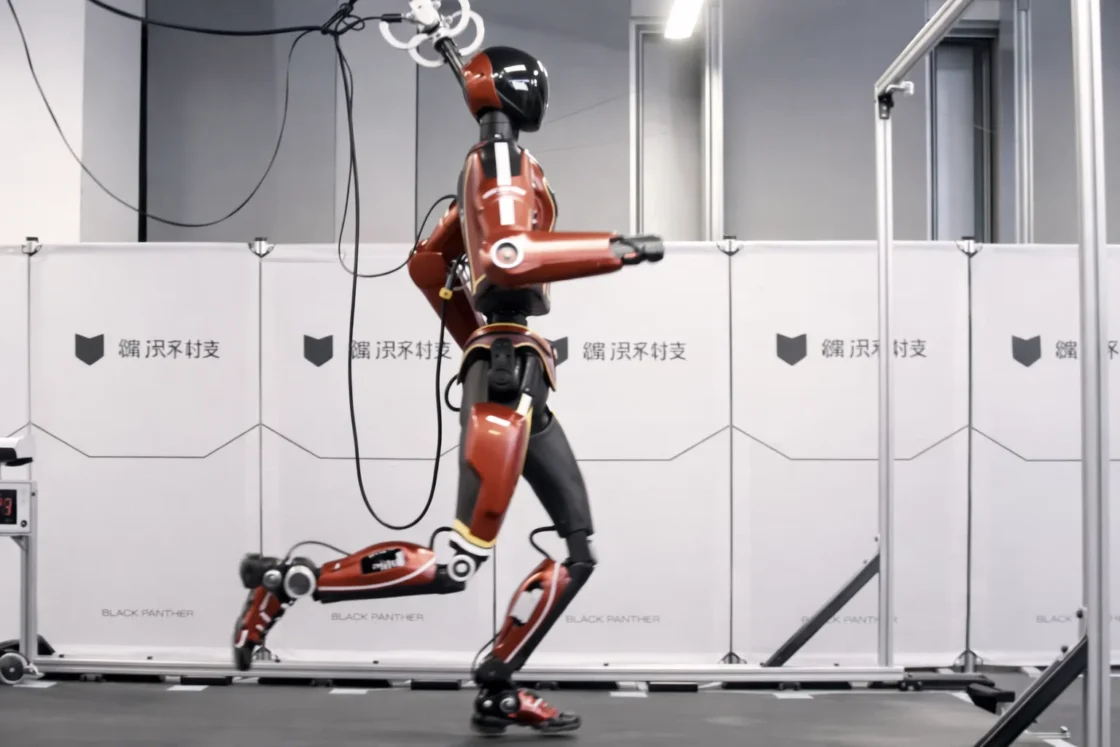

Maholo LabDroid is the robot that gives the Tokyo facility its most memorable figure. It is a humanoid-style laboratory robot built for life-science work, not a general-purpose social robot dressed up for publicity. Robotic Biology Institute describes Maholo as a laboratory humanoid that uses a dual-arm seven-axis structure and works with ordinary laboratory apparatus and equipment. The design goal is clear: Maholo is meant to perform biological experimental operations in settings still built around human tools and human bench layouts.

That design choice matters because biology labs are not factories. A factory line can be rebuilt around a fixed product. A biomedical lab often cannot. Researchers move between culture plates, tubes, pipettes, incubators, microscopes, plate readers, centrifuges, reagent reservoirs, and special-purpose instruments. Protocols change as projects change. Methods are revised after early results. A robot that can interact with equipment designed for people has a practical advantage in that messy environment.

Maholo is not small. Robotic Biology Institute lists the robot at 2,500 mm wide, 2,000 mm deep, and 2,200 mm high, with a weight of 1,200 kg. It is closer to a heavy robotic workstation than a desktop assistant. Its specifications include ISO Class 6 cleanliness, and its stated purpose is precise, repeatable handling of biological laboratory procedures.

The robot’s history is older than the new Tokyo center. AIST and Yaskawa Electric developed Maholo as a biomedical experiment robot using industrial robot technology, and Robotic Biology Institute was founded in 2015 to commercialize the system and related robotic facilities. AIST’s English research highlight describes Maholo as a system built to automate biomedical bench work and perform some operations with higher accuracy and reproducibility than experienced laboratory technicians.

That history protects the Tokyo story from being dismissed as sudden AI hype. Maholo has been part of Japan’s laboratory robotics work for years. The new development is not the existence of one humanoid lab robot. The new development is the attempt to place robots inside a shared institutional facility and connect them to a broader scientific automation plan.

Maholo also sits between two schools of lab automation. One school builds specialized machines for narrow tasks: a pipetting robot, a sequencing prep station, a robotic incubator, a plate handler. The other school builds more flexible robots that use existing lab tools. Maholo belongs to the second school. It will not beat a specialized high-throughput system on every repetitive task. Its value is different: it can adapt to protocols and physical layouts that were originally designed for people.

That flexibility is important in medicine. Clinical and biomedical research rarely follows one fixed manufacturing path. A group may work on stem-cell differentiation one month, organoids the next, then sample preparation for proteomics or sequencing. If a robot can operate across those workflows, it becomes a bridge between manual research culture and automated research infrastructure.

The risk is mechanical complexity. Humanoid-style lab robots are expensive, large, and dependent on skilled support. They need maintenance, calibration, error recovery, and careful integration with equipment around them. A humanoid robot is not automatically better than a specialized machine. It is better only when flexibility, compatibility with existing lab tools, and protocol change matter enough to justify the complexity.

That is why the Tokyo center’s broader equipment mix matters. The future of robotic science will not be one humanoid robot doing everything. It will be an orchestration system: humanoid robots where flexible manipulation matters, specialized liquid handlers where throughput matters, imaging systems where measurement matters, incubators where environmental control matters, and software tying the whole system together.
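The orchestration idea can be made concrete with a small routing sketch. This is a toy illustration, not Science Tokyo's scheduling software: the device class names, the requirement labels, and the `route` function are all hypothetical, and the pairing simply mirrors the sentence above.

```python
from dataclasses import dataclass

# Hypothetical device classes and the requirement each one is suited to.
# The pairing follows the paragraph above; the names are illustrative only.
DEVICE_FOR_REQUIREMENT = {
    "flexible_manipulation": "humanoid_robot",
    "throughput": "liquid_handler",
    "measurement": "imaging_system",
    "environmental_control": "incubator",
}


@dataclass(frozen=True)
class Step:
    name: str
    requirement: str  # one of the keys in DEVICE_FOR_REQUIREMENT


def route(steps):
    """Assign each protocol step to a device class, preserving step order."""
    plan = []
    for step in steps:
        device = DEVICE_FOR_REQUIREMENT.get(step.requirement)
        if device is None:
            raise ValueError(f"no device class for requirement {step.requirement!r}")
        plan.append((step.name, device))
    return plan


workflow = [
    Step("open tube and transfer sample", "flexible_manipulation"),
    Step("dispense medium into 96 wells", "throughput"),
    Step("incubate overnight", "environmental_control"),
    Step("image wells", "measurement"),
]
plan = route(workflow)
for name, device in plan:
    print(f"{name} -> {device}")
```

A real orchestration layer would also have to handle device availability, queueing, and error recovery. The sketch only shows the basic design choice: the workflow names what each step needs, and the software decides which kind of machine does it.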

The timeline shows a planned research platform

The public version of this story sometimes makes the lab sound like a single surprise opening. The official timeline is more deliberate. Science Tokyo says the Robotics Innovation Center was established on October 1, 2025, and that the robotic experimentation facility began full operation on April 1, 2026. A Japanese notice from Science Tokyo announced an opening ceremony and commemorative symposium for April 15, 2026. The notice was first published on March 11, 2026, and updated on April 10, 2026.

This timeline matters because it shows a planned institutional project rather than a one-day spectacle. The center has named leadership, an official site, a defined campus location, a research mission, and usage plans. Science Tokyo lists Keiichi Nakayama as center director and Genki Kanda as deputy director.

The center also sits inside a new university structure. The Institute of Science Tokyo opened on October 1, 2024, after the merger of Tokyo Medical and Dental University and Tokyo Institute of Technology. The merger joined medical, dental, science, and engineering capability under one institution.

That institutional background is not a side detail. A robot-run medical lab needs exactly that mix. Medicine supplies the real biological and clinical questions. Engineering supplies robotics, systems design, control, hardware integration, and software. AI supplies scheduling, monitoring, experiment selection, pattern recognition, and data interpretation. A university built from a medical university and a technical university is unusually well placed to try this.

Science Tokyo’s Japanese opening notice states the case directly. It says human experimental operations carry errors, that reproducibility has long been a problem, that Japan’s falling birthrate is reducing the researcher population, and that scientists have less time each year to spend on experiments. The notice then says the center introduces many general-purpose dual-arm humanoid robots called Maholo and aims to create an autonomous scientific research infrastructure in which robots combined with AI verify hypotheses and generate new knowledge.

That language is ambitious, but it is rooted in two practical pressures: reproducibility and labor. Reproducibility is a scientific problem. Labor is a demographic and institutional problem. Japan is not trying to automate medical research only because robots are impressive. It is trying to protect research capacity in a country where skilled human labor is becoming scarcer and where scientific workflows are growing more complex.

The center’s usage model reinforces the platform logic. Science Tokyo says it plans multiple forms of use, including fixed-menu robotic experimental services, customized program adjustment, and time-based access for trained users. It also says pilot use for internal and external researchers is planned in fiscal 2026 while fee structures and detailed terms are developed.

That makes the facility look less like a single lab and more like a core research service. Universities already run core facilities for sequencing, imaging, proteomics, animal studies, and cleanroom work. The Robotics Innovation Center points toward a new kind of core facility: a robotic experimentation core where the experiment itself, not only the measurement, becomes shared infrastructure.

Ten robots today, two thousand as a test of scale

Current reporting says the facility operates with 10 robots, including Maholo LabDroid, and that researchers observe work remotely rather than standing inside the experimental workspace. Reports from Japan Today, Mainichi, and Interesting Engineering describe a long-term plan to expand toward about 2,000 robots by 2040. The stated aim is to automate more of the research process, including parts of hypothesis generation and experimental verification.

The 2,000-robot figure is the kind of number that easily overwhelms the story. It should be treated as a strategic target, not a guarantee. Scaling from 10 robots to 2,000 is not a purchasing exercise. It requires space, power, maintenance, staff, consumables, instruments, sample tracking, protocol libraries, queue management, safety systems, software integration, cybersecurity, data storage, quality control, and enough scientific demand to keep the machines useful.

At 10 robots, the central challenge is demonstration: proving that the facility can run meaningful workflows reliably. At 2,000 robots, the central challenge becomes governance. Which experiments get priority? Who approves protocol changes? How are results audited? How are errors investigated? How are external users charged? How are human-derived samples protected? How is scientific credit assigned? How are models tested before they influence experimental choices?

The bottleneck changes as the fleet grows. With one robot, the bottleneck is capability. With 10 robots, it is coordinating workflows reliably. With 2,000 robots, it is the operating model for the science itself.

The final system, if it reaches anything like the 2040 ambition, will almost certainly not consist of 2,000 identical humanoid Maholo units. A large robotic research facility would need a mixed fleet. Some tasks belong to humanoid robots because they require flexible manipulation of human-designed tools. Other tasks belong to dedicated liquid handlers, automated incubators, plate movers, imaging systems, mobile platforms, microfluidic systems, or analytical devices.

Science Tokyo’s own description supports that mixed interpretation. It mentions Maholo LabDroid, automated liquid-handling systems, and other experimental robots rather than suggesting that one robot type will run every protocol.

The 2040 target should be judged by milestones. The first milestone is reliable operation of the current facility. The second is protocol depth: how many useful workflows can the robots execute? The third is user demand: do researchers inside and outside the university return because the robot facility produces results they trust? The fourth is data quality: do the robotic workflows produce records good enough to improve future experiments? The fifth is genuine closed-loop work: do AI systems use previous results to propose better next experiments inside safe, human-approved boundaries?

If those milestones are met, the number of robots becomes less of a headline and more of a consequence. If they are not met, 2,000 robots would only multiply cost and complexity.

Medical research automation is harder than ordinary lab automation

Medical research adds difficulty that many automation stories underplay. A robot in a warehouse moves objects. A robot in a chemistry lab may mix reagents and measure outputs. A robot in a medical life-science lab often handles living systems, fragile samples, sterile workflows, human-derived materials, long timelines, and results that may later affect clinical research.

That makes the work more than repetitive pipetting. A cell culture protocol may depend on passage timing, cell density, reagent temperature, pipetting force, incubation time, medium change timing, and how long plates remain outside controlled conditions. Some effects appear days or weeks after the operation that caused them. A human researcher may develop skill around those details without writing them all down. A robot forces the lab to make those details explicit.

The eLife paper by Kanda and colleagues describes induced differentiation in regenerative medicine as a process heavily dependent on experience and skill. The paper’s robot-AI system was designed for exactly that kind of problem: a difficult cell culture workflow where many hidden or semi-hidden variables affect the outcome.

This is where medical research automation becomes valuable. The point is not that robots will instantly discover cures. The point is that hard biological workflows may become more measurable. If a robot executes the same steps with the same parameters and records what happened, researchers can better separate biological variation from manual variation. If AI then proposes the next experimental conditions based on measured results, slow trial-and-error work becomes a structured search.

The promise is not automatic genius. The promise is cleaner experimental memory. Medical science wastes time when failed experiments leave little information behind. A robot-run workflow can record the failed attempt in detail: the timing, the volumes, the instrument settings, the images, the environmental conditions, and any deviation flags. That record gives the next experiment a better starting point.

Medical workflows also demand sterility. A robot that works in an ordinary engineering demo space is not the same as a robot that maintains biological samples inside a controlled environment. For cell therapy research, regenerative medicine, organoid work, stem-cell culture, and clinical-adjacent sample processing, contamination control is central. A useful robot lab needs not only arms but clean operations, validated cleaning, controlled access, environmental monitoring, and documented deviation handling.

That is why the Tokyo center’s life-science focus is important. It is not automating a harmless toy task. It is moving into the zone where biological sensitivity and medical translation force discipline. The center’s stated protocol areas (cell culture, organoids, proteomics, and next-generation sequencing) are all domains where sample handling and repeatability matter.

The risk is that automation may create false confidence. A robot can repeat a flawed protocol perfectly. It can execute a biased experimental plan at scale. It can chase a proxy metric that misses the real biological endpoint. It can make a bad assumption more reproducible. Automation improves the operating layer of science, but it does not remove the need for strong experimental design.

The eLife experiment shows the practical value

The strongest public evidence behind the Maholo model comes from the 2022 eLife study on robotic search for optimal cell culture in regenerative medicine. The researchers combined Maholo LabDroid with batch Bayesian optimization to search culture conditions for iPSC-derived retinal pigment epithelial cells. The search space contained 200 million possible parameter combinations. The system tested 143 different conditions over 111 days and reported an 88% improvement in iPSC-RPE production by pigmentation score compared with the pre-optimized culture.

That result matters more than the robot’s humanoid appearance. It shows a working pattern for automated medical research. Human researchers define the biological problem, the boundaries of the search, and the evaluation metric. The AI system selects candidate conditions. The robot executes the protocol. Measurements feed the next round. The loop continues until the system finds better conditions inside the defined search space.
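The shape of that loop can be sketched in a few lines. This is a toy under loud assumptions, not the study's method. The "robot" is a simulated scoring function with an invented optimum, the search space is a two-parameter unit square, and the proposal step is a simple perturb-the-best heuristic standing in for the batch Bayesian optimization the paper used. Every name here is hypothetical.

```python
import random


def simulated_robot_run(condition):
    """Stand-in for a real robotic experiment: returns a score in [0, 1].

    The hidden optimum at (0.7, 0.3) is invented for this toy example.
    """
    x, y = condition
    return max(0.0, 1.0 - ((x - 0.7) ** 2 + (y - 0.3) ** 2))


def propose_batch(history, batch_size, rng, step=0.1):
    """Propose the next batch of conditions inside the unit square."""
    if not history:
        # First round: sample the human-defined search space uniformly.
        return [(rng.random(), rng.random()) for _ in range(batch_size)]
    best_condition, _ = max(history, key=lambda item: item[1])
    batch = []
    for _ in range(batch_size):
        # Perturb the best condition so far and clamp to the allowed bounds.
        x = min(1.0, max(0.0, best_condition[0] + rng.uniform(-step, step)))
        y = min(1.0, max(0.0, best_condition[1] + rng.uniform(-step, step)))
        batch.append((x, y))
    return batch


def closed_loop_search(rounds=5, batch_size=8, seed=0):
    """Run propose -> execute -> measure for a fixed number of rounds."""
    rng = random.Random(seed)
    history = []         # every (condition, score) pair ever measured
    best_per_round = []  # best score seen so far after each round
    for _ in range(rounds):
        for condition in propose_batch(history, batch_size, rng):
            history.append((condition, simulated_robot_run(condition)))
        best_per_round.append(max(score for _, score in history))
    return history, best_per_round


history, best_per_round = closed_loop_search()
print(len(history), [round(b, 3) for b in best_per_round])
```

The human contribution is visible in what the code takes as given: the bounds of the square, the scoring function, and the number of rounds. The loop only searches inside that framing.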

This is not a robot independently becoming a scientist. It is a bounded autonomous search system under human scientific framing. That is likely to be the dominant model in biomedical automation for years. Full autonomy across open-ended medical research remains far harder than bounded autonomy inside carefully designed workflows.

The eLife work also shows why robots matter even when AI gets the attention. A model cannot learn from physical experiments that were never run. It also cannot learn well from experimental data polluted by untracked manual variation. Maholo’s role was to make the search physically possible and more consistent. The robot did not merely save labor. It created a more controlled experimental system.

The paper’s long timeline is revealing. A 111-day culture search is not a quick demonstration. It involves sustained operation, repeated handling, measurement, and protocol execution over months. That matters because medical biology often operates on slow biological time. A robot that can sustain a long workflow without fatigue changes what researchers can attempt.

The 88% result also needs a careful reading. It does not mean all cell culture workflows will improve by that amount. It does not mean robots solve regenerative medicine. It means one well-defined protocol search achieved a measured improvement under the study’s conditions. That is still meaningful. It shows the research pattern can work in a serious biological workflow.

The value of the eLife study is methodological. It shows that AI-guided experiment selection and robotic execution can search a huge biological condition space more systematically than manual trial and error. For a medical research center, that is precisely the kind of capability worth turning into infrastructure.

Closed-loop cell culture reveals the daily burden robots can carry

The most glamorous claims about robot labs often focus on discovery. The more immediate value may lie in maintenance. A 2022 SLAS Technology paper described a variable-scheduling maintenance culture platform for mammalian cells. The system combined LabDroid with AI software and microscope observation so that the robot could maintain HEK293A cell cultures without human intervention for 192 hours. It used growth-curve prediction to decide when passage operations should occur.

That may sound less dramatic than AI-designed drugs, but it addresses a real bottleneck. Cell culture maintenance is a daily burden in biomedical research. Cells do not respect weekends, holidays, nights, or conference schedules. They need monitoring, feeding, passage, and careful handling. The work is repetitive, but failure can ruin days or weeks of research.

The term “variable scheduling” is important. A useful biological robot cannot simply follow a fixed clock. Cells grow differently depending on density, passage number, medium condition, line behavior, incubator state, and other factors. A rigid schedule may pass cells too early or too late. A better system observes cell state and acts when the biology calls for action.

That is a different kind of automation. It is not “do step 12 at 9:00.” It is “monitor the culture, predict its state, and decide whether the next maintenance operation should happen now.” The decision is narrow, but it is biologically meaningful.
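A minimal sketch of that decision, assuming a plain exponential growth model fitted to microscope confluence readings. The published platform's actual growth model is not described here, so the model, the 0.8 confluence threshold, the 6-hour lookahead, and all function names are illustrative assumptions.

```python
import math


def fit_exponential(times_h, confluence):
    """Least-squares fit of log(confluence) = log(c0) + rate * t."""
    if len(times_h) != len(confluence) or len(set(times_h)) < 2:
        raise ValueError("need at least two observations at distinct times")
    if any(c <= 0 for c in confluence):
        raise ValueError("confluence values must be positive")
    n = len(times_h)
    logs = [math.log(c) for c in confluence]
    mean_t = sum(times_h) / n
    mean_l = sum(logs) / n
    sxx = sum((t - mean_t) ** 2 for t in times_h)
    sxy = sum((t - mean_t) * (l - mean_l) for t, l in zip(times_h, logs))
    rate = sxy / sxx
    c0 = math.exp(mean_l - rate * mean_t)
    return c0, rate


def hours_until(threshold, c0, rate, now_h):
    """Predicted hours from now until confluence reaches the threshold."""
    if rate <= 0:
        return math.inf  # culture is not growing, so no passage is predicted
    t_hit = math.log(threshold / c0) / rate
    return max(0.0, t_hit - now_h)


def should_passage(times_h, confluence, threshold=0.8, lookahead_h=6.0):
    """Decide whether the passage operation should be scheduled now."""
    c0, rate = fit_exponential(times_h, confluence)
    return hours_until(threshold, c0, rate, times_h[-1]) <= lookahead_h


# Synthetic readings: 10% confluence at t=0, doubling every 24 hours.
times = [0.0, 12.0, 24.0, 36.0]
observed = [0.1 * 2 ** (t / 24.0) for t in times]
c0, rate = fit_exponential(times, observed)
print(round(hours_until(0.8, c0, rate, times[-1]), 1), should_passage(times, observed))
```

With these synthetic readings the culture is predicted to reach the threshold about 36 hours after the last observation, so the scheduler waits. A slower or faster line would shift the passage time without anyone editing a fixed clock.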

This is one area where AI in the lab may first become ordinary. Not as a grand theorist, but as a scheduler, classifier, anomaly detector, and decision assistant. A vision model may flag abnormal morphology. A growth model may predict passage timing. A scheduler may coordinate incubator access. A quality model may detect a failed well before it contaminates analysis. These tasks do not sound like science fiction. They sound like lab management. That is why they matter.

A robotic system that handles routine culture maintenance also changes human work. Instead of spending hours on predictable handling, researchers can design better comparisons, inspect richer data, or troubleshoot unusual results. The time saved is only part of the value. The steadier execution may be more important.

Robots become most useful when they absorb the fragile routine work that science depends on but rarely celebrates. Medical research is full of that work. A robot that keeps cells alive, logs operations, and acts consistently gives researchers a stronger base for more ambitious experiments.

Clinical translation demands sterility, records, and trust

Laboratory automation becomes more serious when it moves near clinical translation. A robot that prepares research samples operates under one set of expectations. A robot that handles cells for clinical research must meet a much higher standard. Sterility, traceability, equipment validation, access control, sample identity, cleaning, deviation records, and quality review become central.

A 2023 SLAS Technology paper evaluated a robotic cell-processing facility for clinical research on retinal cell therapy. The system incorporated Maholo LabDroid and a clean processing unit. The study reported that the facility design met cleanliness and aseptic requirements for cell manufacturing in the tested setting and that iPSC-derived retinal pigment epithelial cells met clinical quality standards for transplantation in that research context.

That work matters because it connects the robot lab story to medical translation rather than only laboratory convenience. Cell therapy and regenerative medicine depend on consistent handling. A small change in culture conditions, timing, or sterility practice may affect the final cell product. Human skill remains valuable, but human variation creates a problem when the goal is repeatable production.

The Science Tokyo center should not be confused with a hospital treatment ward or an approved manufacturing plant for commercial therapies. It is research infrastructure. Still, the Maholo research record shows that humanoid lab robots have already been tested in clinical-adjacent workflows. Science Tokyo’s Department of Robotic Science notes work involving clinical use of humanoid robots and retinal cell therapy, including cells cultured by the robot that were used in patient treatments at Kobe City Eye Hospital in 2022.

The distinction is important. Research automation can discover or refine protocols. Clinical manufacturing must satisfy formal regulatory standards. The gap includes validation, documentation, qualified equipment, quality systems, lot release, traceability, and trained responsible personnel. A research robot does not automatically become a clinical manufacturing system.

Yet research automation may narrow that gap. If a protocol is developed robotically and its execution is logged from the start, it may be easier to understand, validate, and transfer than a method built around tacit human handling. A machine-readable history of the protocol can reveal how the process changed over time. That record matters when a method approaches clinical use.

Medical robot labs will earn trust through records, not through spectacle. A robot arm moving a tube is visually interesting. A complete record of what happened to a sample, why it happened, who approved it, and whether the result passed quality checks is scientifically and medically valuable.

Reproducibility is the deepest scientific argument

The Tokyo lab is partly a response to reproducibility. Life-science reproducibility problems do not have one cause. They come from incomplete methods, reagent differences, batch effects, cell-line drift, sample variability, weak statistical design, publication pressure, selective reporting, and undocumented manual variation. Robots cannot solve all of that. They can reduce one large source of noise: inconsistent physical execution.

Science Tokyo’s Robotics Innovation Center says precise and consistent robotic operations improve research quality and address reproducibility challenges in the life sciences. The Department of Robotic Science page also connects robotic crowd biology to reproducibility and to reducing research misconduct risk by letting robotic systems execute submitted protocols.

A human protocol often contains words that are easy for people and useless for machines: gently, carefully, briefly, as usual, until adequate, without disturbing. Those words carry tacit knowledge. A skilled technician knows what the words mean, having learned the local craft. A robot requires numbers: volume, angle, speed, acceleration, depth, time, position, temperature, threshold, error tolerance.

That forced precision is uncomfortable. It is also scientifically useful. When a protocol is translated into robot operations, hidden assumptions become visible. The lab must decide what “gentle” means. It must define how long a plate may remain outside the incubator. It must specify how mixing is performed. It must decide what counts as an error. Automation makes vague methods harder to hide.
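A small sketch shows what that translation might look like in code. The step fields, the preset values for "gently" and "briskly", and the `translate` function are hypothetical. They are not Maholo's actual command format, and any real lab would have to choose and validate its own numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AspirateStep:
    """One aspiration with every handling detail stated as a number."""
    volume_ul: float       # volume to draw, in microliters
    speed_ul_per_s: float  # plunger speed
    tip_depth_mm: float    # tip depth below the liquid surface
    dwell_s: float         # pause after aspiration before withdrawing


# Hypothetical lab-specific definitions of vague handling words.
HANDLING_PRESETS = {
    "gently": {"speed_ul_per_s": 10.0, "tip_depth_mm": 2.0, "dwell_s": 1.0},
    "briskly": {"speed_ul_per_s": 100.0, "tip_depth_mm": 3.0, "dwell_s": 0.2},
}


def translate(handling_word, volume_ul):
    """Turn a handling word plus a volume into an explicit robot step."""
    if volume_ul <= 0:
        raise ValueError("volume must be positive")
    preset = HANDLING_PRESETS.get(handling_word)
    if preset is None:
        # Words such as "as usual" have no agreed numbers, so the
        # translation refuses instead of guessing.
        raise ValueError(f"{handling_word!r} has not been quantified")
    return AspirateStep(volume_ul=volume_ul, **preset)


step = translate("gently", 100.0)
print(step)
```

Calling `translate("as usual", 100.0)` raises an error instead of guessing. The vague word cannot pass into execution until someone in the lab decides what it means.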

The same point applies to failure. Human errors are often poorly recorded. A researcher may know that an aspiration felt wrong, a reagent was slightly cold, a plate was left out longer than planned, or a pipette step seemed inconsistent. Some of that may never appear in the notebook. A robot can log errors, retries, alarms, timings, and instrument states automatically.

Robots fail too. They misalign, drift, drop tips, create bubbles, misread positions, suffer sensor faults, and execute bad scripts with perfect obedience. The difference is that many robotic failures leave a record. That record can be audited and corrected. Human micro-variation often disappears.
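What such a record could look like is easy to sketch. The event kinds, field names, and example entries below are hypothetical and do not come from any real robot's log format. The point is only that retries and alarms become queryable data instead of a feeling at the bench.

```python
from collections import Counter
from dataclasses import dataclass, field

ALLOWED_KINDS = {"ok", "retry", "alarm"}


@dataclass(frozen=True)
class Event:
    t_s: float   # seconds since the run started
    step: str    # protocol step name
    kind: str    # one of ALLOWED_KINDS
    detail: str = ""


@dataclass
class RunLog:
    events: list = field(default_factory=list)

    def record(self, t_s, step, kind, detail=""):
        if kind not in ALLOWED_KINDS:
            raise ValueError(f"unknown event kind {kind!r}")
        self.events.append(Event(t_s, step, kind, detail))

    def deviations(self):
        """Every event that was not a clean success, in time order."""
        flagged = (e for e in self.events if e.kind != "ok")
        return sorted(flagged, key=lambda e: e.t_s)

    def retries_by_step(self):
        """Count retries per step, which can point at a drifting operation."""
        return Counter(e.step for e in self.events if e.kind == "retry")


log = RunLog()
log.record(0.0, "pick_tip", "ok")
log.record(2.1, "aspirate", "retry", "pressure sensor saw a bubble")
log.record(3.4, "aspirate", "ok")
log.record(9.8, "return_plate", "alarm", "plate outside incubator longer than planned")

for event in log.deviations():
    print(f"{event.t_s:6.1f}s {event.step}: {event.kind} ({event.detail})")
```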

Reproducibility also requires intellectual discipline. A robot can repeat a weak experiment exactly. It can reproduce a flawed assay, a bad cell line, or a misleading endpoint. Better execution does not equal better science. The strongest case for the Tokyo lab is that it improves the operating layer of research while still leaving the intellectual burden where it belongs: with scientists.

Japan’s demographics give robotics a harder edge

Japan’s interest in robot-run research is not only technological. It is demographic. The Statistics Bureau of Japan reported that as of October 1, 2024, the population aged 65 and older was 36.243 million, equal to 29.3% of the total population. The working-age population aged 15 to 64 was 73.728 million, or 59.6%.

Those figures explain why Japan often treats robotics as infrastructure rather than novelty. A country with a shrinking and aging workforce must decide which work should depend on scarce human labor. Biomedical research contains many tasks that are repetitive, delicate, time-sensitive, and dependent on trained hands. If skilled researchers spend too much time on routine experimental execution, the research system loses capacity.

Science Tokyo’s opening ceremony notice makes that connection directly. It cites Japan’s falling birthrate and the decreasing researcher population as part of the reason for the Robotics Innovation Center. The English director message says Japan faces an urgent challenge in sustaining research infrastructure as the scientific workforce shrinks due to demographic decline.

That framing is more grounded than a simple claim that robots are “better” than people. Robots are not cheaper or easier in every setting. A Maholo-scale system is expensive, heavy, complex, and dependent on skilled support. The argument is not that every lab should replace staff with robots. The argument is that some manual work is consuming human time that Japan may not have to spare.

The demographic argument also changes the ethical framing. If automation is used only to cut jobs, the story looks narrow and defensive. If automation is used to protect research capacity in a shrinking workforce, the story becomes more strategic. Researchers still need jobs, training, and career paths. But the content of those jobs may shift toward protocol design, supervision, data review, quality control, and robotic facility operation.

Japan is also building on industrial strengths. The country has long experience in industrial robotics, precision machinery, manufacturing discipline, and instrument engineering. The RSC review of self-driving laboratories in Japan notes the relevance of Japanese robot makers such as FANUC and Yaskawa, as well as the country’s ecosystem of measurement and analysis equipment companies.

That does not guarantee success in biology. Medical research is harder to automate than many factory tasks. But Japan’s combination of demographic pressure, robotics capability, and biomedical need explains why a project like Science Tokyo’s robotic lab is not just plausible there. It is almost predictable.

Science Tokyo’s merger gave the project a natural home

The Institute of Science Tokyo is young, but its predecessor institutions were not. It was created by merging Tokyo Medical and Dental University and Tokyo Institute of Technology, and it officially opened on October 1, 2024.

That merger matters because the Robotics Innovation Center needs both sides. Medical institutions understand disease, clinical relevance, biological models, patient-derived samples, and translational pathways. Engineering institutions understand robot mechanics, control systems, simulation, software, sensors, AI, and facility design. A robot-run medical lab fails if either side dominates at the expense of the other.

Engineering-led automation can miss biological reality. It may produce machines that work beautifully in demonstrations but fail in sterile workflows, long cell culture timelines, or clinical sample handling. Biology-led automation can identify the pain points but lack the systems expertise needed to build stable robotic infrastructure. Science Tokyo’s merged identity gives the center a better chance of joining those cultures.

The center’s public materials also show a network beyond the university. The opening ceremony and symposium notice listed participation from Japan’s Ministry of Education, Culture, Sports, Science and Technology, Astellas Pharma, Yaskawa Electric, AIST, Serapha Biosciences, and academic researchers.

That mix signals the project’s intended scale. Government matters because research infrastructure needs policy and funding support. Pharma matters because drug and regenerative medicine companies have practical demand for reproducible, automated experimentation. Yaskawa matters because Maholo’s development is tied to industrial robot technology. AIST matters because national research institutes often bridge public research and industrial transfer.

Large-scale lab automation is an ecosystem problem. It cannot be solved by one robot vendor, one AI model, or one professor’s lab. It requires institutions that can maintain shared facilities, companies that can build and support machines, regulators that can define acceptable practice, funders that can tolerate long development cycles, and researchers willing to redesign their workflows.

Science Tokyo’s center also has a training role. The official site says it aims to support and educate internal and external users. That may become one of its most important contributions. The future of robotic biomedical science depends on people who understand both wet-lab work and automation. Those people are still scarce.

The lab is also a data machine

A robot-run laboratory is not only a physical automation system. It is a data machine. Every action can generate a record: volumes, positions, pipette speeds, timings, temperatures, incubation intervals, robot IDs, software versions, sensor readings, images, alarms, retries, operator approvals, instrument settings, and output files.

Human labs usually do not capture that level of operational detail. A notebook may record the method and result, but not every motion, delay, deviation, or environmental condition. A robotic lab can capture much more. That “data exhaust” becomes valuable because AI systems need context. A final measurement without a precise history of how it was produced is often too thin for strong learning.

The robot’s arm is less important than the experimental memory it creates. If every run is logged, researchers can ask better questions after success or failure. Did the result change because of reagent lot, passage number, timing, temperature, pipetting depth, incubation duration, robot motion, or biological variation? Without logs, many of those questions remain guesses.

This data layer also supports protocol libraries. A protocol library is not merely a list of services. It is a set of executable workflows with parameters, device mappings, error handling, performance histories, and quality thresholds. Each run adds evidence. Each failure teaches the facility something. Over time, the center can improve protocols based on repeated use.

Science Tokyo’s current protocol areas (next-generation sequencing, proteomics, organoids, and cell culture) are data-rich domains. Sequencing and proteomics already depend on digital pipelines after sample preparation. Organoids and cell culture increasingly depend on imaging, morphology analysis, gene expression, and long-term tracking. Robotic execution gives those data streams a more controlled physical origin.

The risk is fragmentation. If every robot and instrument stores data differently, the facility becomes a pile of disconnected files. To learn across experiments, the lab needs standard metadata, stable sample identifiers, versioned protocols, instrument calibration records, and links between physical operations and analytical outputs.
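To make the fragmentation problem concrete, here is a minimal sketch of mapping device-specific records onto one shared schema so a sample's history can be followed across instruments. The field names, device types, and the `OperationRecord` schema are all assumptions for illustration, not a description of Science Tokyo's actual data model.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class OperationRecord:
    """Common schema for one logged step (all field names are illustrative)."""
    sample_id: str
    protocol_version: str
    device_id: str
    action: str
    timestamp_s: float


# Hypothetical per-device field names mapped onto the common schema.
FIELD_MAPS = {
    "liquid_handler": {"sample": "sample_id", "proto": "protocol_version",
                       "dev": "device_id", "op": "action", "t": "timestamp_s"},
    "imager": {"SampleID": "sample_id", "ProtocolVer": "protocol_version",
               "Instrument": "device_id", "Step": "action", "Time": "timestamp_s"},
}


def normalize(device_type: str, raw: dict) -> OperationRecord:
    """Translate a device-specific record; fail loudly if a field is missing."""
    mapping = FIELD_MAPS[device_type]
    missing = [src for src in mapping if src not in raw]
    if missing:
        raise ValueError(f"{device_type} record is missing fields: {missing}")
    return OperationRecord(**{dst: raw[src] for src, dst in mapping.items()})


def history_for(sample_id: str, records: list[OperationRecord]) -> list[OperationRecord]:
    """Return one sample's steps across all devices, in time order."""
    return sorted((r for r in records if r.sample_id == sample_id),
                  key=lambda r: r.timestamp_s)
```

Rejecting incomplete records at ingestion is a deliberate choice in this sketch: a missing sample identifier is cheaper to catch when the record is written than when someone later tries to link a result to its physical origin.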

Cybersecurity and privacy also enter here. If the lab handles human-derived samples, omics data, or clinical metadata, data governance must be strict. Cloud-based operation increases access, but it also widens the attack surface. A robot lab’s data system must protect not only research results but sample identity, protocol integrity, and physical operations.

The best automated labs will treat data architecture as part of experimental design. A robot that performs a task without leaving useful records is only partially automated. A robot that performs a task and creates a searchable, auditable, machine-readable history changes how research knowledge accumulates.

Failed experiments become more useful when machines record them

Research failure is expensive. A failed experiment consumes human time, reagents, sample material, instrument access, and often weeks of waiting. In biology, a failed experiment may leave little useful information if the failure source is unclear. Was the design bad? Was the cell line unhealthy? Was a reagent degraded? Did someone change the timing? Was contamination present? Did the endpoint fail to measure the right biology?

Robotic labs change the economics of failure by changing the record. They do not make failed experiments cheap. They make more failures informative. If a robot-run experiment fails but every step is logged, the team can audit what happened. If nothing operationally unusual appears, the biological hypothesis may deserve more scrutiny. If a deviation appears, the team can repeat the run or revise the protocol.

That matters in medical research because failure is the norm. Drug discovery, regenerative medicine, disease modeling, organoids, and assay development all involve many dead ends. A research system that learns from failure faster is more valuable than one that only celebrates successful runs.

Automation also changes when work can happen. Robots can run outside normal human hours, if the facility is designed for safe unattended operation. They do not get tired during long repetitive protocols. They do not rush because a meeting is starting. They do not become inconsistent because a task is boring. These traits are especially useful for protocols with long timelines or repetitive condition testing.

The risk is automated waste. A robot can waste enormous resources if a flawed script runs repeatedly or an AI model searches the wrong conditions. Bad design at scale is worse than bad design in one manual experiment. That is why automated facilities need strong review gates, run limits, anomaly detection, and human oversight.
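A run limit of the kind described above could look roughly like the following sketch. The `RunGate` class and its thresholds are hypothetical. The point is only that a protocol stops repeating unattended once it has run too many times without review or has failed several times in a row.

```python
from dataclasses import dataclass, field


@dataclass
class RunGate:
    """Blocks unattended repeats of a protocol past a limit (hypothetical policy)."""
    max_unreviewed_runs: int = 3
    max_consecutive_failures: int = 2
    _runs_since_review: dict = field(default_factory=dict)
    _failure_streak: dict = field(default_factory=dict)

    def may_run(self, protocol_id: str) -> bool:
        """True if the protocol can start without a fresh human review."""
        runs = self._runs_since_review.get(protocol_id, 0)
        fails = self._failure_streak.get(protocol_id, 0)
        return runs < self.max_unreviewed_runs and fails < self.max_consecutive_failures

    def record_result(self, protocol_id: str, succeeded: bool) -> None:
        """Count the run and update the consecutive-failure streak."""
        self._runs_since_review[protocol_id] = self._runs_since_review.get(protocol_id, 0) + 1
        streak = self._failure_streak.get(protocol_id, 0)
        self._failure_streak[protocol_id] = 0 if succeeded else streak + 1

    def human_review(self, protocol_id: str) -> None:
        """A reviewer signs off, resetting both counters."""
        self._runs_since_review[protocol_id] = 0
        self._failure_streak[protocol_id] = 0
```

In use, the scheduler would call `may_run` before queuing a job and `record_result` afterward. Anomaly detection and scientific review of the design itself are separate safeguards that this sketch does not attempt.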

Automation magnifies the quality of experimental design. A well-designed search becomes faster and cleaner. A weak search becomes an expensive machine-run mistake. The Tokyo center’s success will depend less on the number of robots than on the quality of the questions fed into them.

For external users, this creates a new responsibility. Submitting a protocol to a robotic center is not like dropping off a sample at a measurement core. The user must translate a research question into a workflow that can be executed, monitored, and interpreted. The center may support that process, but it cannot turn a vague question into a strong experiment by magic.

Main layers of a robot-run medical research lab

| Layer | Role in the lab | Main risk |
|---|---|---|
| Robotic execution | Handles samples, liquids, plates, culture vessels, and instrument interfaces | Mechanical failure, misalignment, contamination |
| Instrument network | Links incubators, imagers, liquid handlers, analytical devices, and storage systems | Calibration drift, incompatible formats, downtime |
| AI and scheduling software | Selects timing, flags anomalies, proposes conditions, and coordinates queues | Poor metrics, biased search, brittle models |
| Protocol library | Converts human methods into reusable machine-executable workflows | Tacit assumptions encoded into scripts |
| Data and audit system | Records operations, metadata, results, deviations, and approvals | Missing provenance, weak cybersecurity |
| Human governance | Defines aims, validates protocols, reviews risk, and interprets results | Overtrust, unclear accountability |

The table shows why the robot itself is only one layer. A medical robot lab creates value when physical execution, instruments, AI, protocols, data records, and human governance operate as one controlled research system.

Fully unmanned does not mean fully autonomous

The phrase “fully unmanned” is easy to misunderstand. In the Tokyo case, it appears to mean that no human staff are inside the active laboratory workspace while robots conduct experimental procedures. It does not mean the scientific process is free of human control. Scientists still design experiments, set goals, approve protocols, monitor outputs, maintain systems, and decide what results mean.

Science Tokyo’s official language points to collaboration between robots and researchers. The center is described as a facility where robots and researchers collaborate on life-science experimentation. The director’s message says robots allow researchers to focus on more creative and conceptual scientific activities.

That distinction matters for public trust. A reader hearing “robot-only medical lab” may imagine machines making unsupervised clinical decisions. That is not the right reading. The center is a research infrastructure. It shifts physical experimental work to robots, but accountability remains human.

Autonomy exists on levels. At the lowest level, a robot executes a fixed script. At the next level, the system adjusts timing based on a sensor reading. At a higher level, AI chooses the next experimental condition from a human-defined search space. At a still higher level, a system proposes hypotheses. Medical research will move through these levels unevenly, and each step up calls for closer oversight.
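One way to make these levels explicit in software is an ordered enumeration. The sketch below encodes the four levels named above. The sign-off rule is a hypothetical policy added for illustration, not something the center has published.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """The four levels named in the text, ordered from least to most autonomous."""
    FIXED_SCRIPT = 1             # robot executes a fixed script
    SENSOR_ADJUSTED_TIMING = 2   # system adjusts timing from a sensor reading
    AI_CONDITION_SELECTION = 3   # AI picks conditions in a human-defined space
    HYPOTHESIS_PROPOSAL = 4      # system proposes hypotheses


def requires_new_signoff(approved: AutonomyLevel, requested: AutonomyLevel) -> bool:
    """Hypothetical rule: any move above the approved level needs fresh human sign-off."""
    return requested > approved
```

Because `IntEnum` members compare as integers, a workflow approved for sensor-adjusted timing can drop back to a fixed script freely but cannot move up to AI condition selection without review.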

The current public evidence supports a bounded-autonomy model. The eLife study, for example, used AI to search culture conditions inside a defined problem. The LabDroid cell maintenance work used observation and prediction to schedule maintenance actions. These are meaningful forms of autonomy, but they are not open-ended medical reasoning.

The difference matters because inflated claims damage credibility. Robots do not yet replace the full scientific judgment chain: choosing disease models, forming causal theories, deciding which patient populations matter, evaluating ethical risk, interpreting conflicting evidence, and deciding whether a result deserves clinical pursuit.

At the same time, it would be wrong to reduce the lab to “just automation.” The combination of robots, AI, and experimental feedback does change the scientific process. It moves some decisions closer to machines and makes experiments more programmable. The right framing is human-supervised robotic science, not robot science without humans.

Human work moves higher in the research stack

Robot labs change jobs before they eliminate them. A technician who once pipetted plates may become a protocol engineer, robot operator, quality monitor, automation troubleshooter, or data reviewer. A postdoc who once spent nights maintaining cell cultures may spend more time defining search spaces and interpreting results. A principal investigator may need to think harder about which parts of a protocol are genuine scientific variables and which are manual habits.

This shift changes what counts as skill. Wet-lab expertise has long been tied to hands-on competence. Researchers earn trust by culturing difficult cells, troubleshooting assays, and producing consistent results. In a robot lab, some of that credibility moves into protocol design and audit literacy. The researcher must understand how a biological method becomes machine-executable.

That does not make biology less important. It makes biological judgment more visible. A robot may execute a protocol perfectly, but a scientist must still decide whether the endpoint is meaningful. A robot may find conditions that improve a proxy score, but a scientist must ask whether the proxy reflects the medical goal. A scheduler may run work efficiently, but a scientist must know whether the timing is biologically sound.

The future lab scientist is not merely a button-pusher. The role becomes closer to designer of experimental systems. That designer needs enough biology to understand the organism or cell model, enough automation knowledge to understand machine constraints, and enough data sense to interpret machine-generated records.

Training will have to change. Biology students will need exposure to robotics, data structure, and automation limits. Engineers will need exposure to sterile technique, cell behavior, sample identity, and biological time. Facility staff will need hybrid skills: wet-lab practice, robot maintenance, software logs, quality systems, and user support.

The shared-center model may make this transition easier. If every research group must build its own robotic expertise from scratch, adoption will be slow. A central facility can concentrate specialists, support users, validate protocols, and spread automation literacy across projects. Science Tokyo’s center says user support and education are part of its role.

This also affects scientific culture. Researchers may need to accept that some of the most valuable expertise in a paper comes from facility staff who translated a protocol into robot operations. Authorship, credit, and contribution norms may need to adjust. A robotic facility is not a passive service when it helps design the workflow that makes the result possible.

Biology keeps autonomy slower than headlines imply

Autonomous science sounds clean when described in abstract terms: generate a hypothesis, run an experiment, read the result, choose the next experiment. Biology makes that loop slow and messy. Cells are not passive objects. They adapt, differentiate, die, drift, contaminate, mutate, and respond to small physical changes. Two cultures that look identical at the start may diverge later.

The eLife iPSC-RPE study shows this difficulty. The authors discuss how cell culture outcomes may be influenced by reagent dose, timing, pipetting strength, vibration, and time outside incubator conditions. Some effects become visible only days or weeks later.

That delay is hard for AI. A model that selects experimental conditions needs feedback. If feedback takes weeks, the search becomes slower and more expensive. If measurements are noisy, the model may chase false patterns. If the endpoint is only a proxy for the true biological goal, the model may find a condition that improves the metric while missing the medical goal.

Robots help by holding many physical variables steadier. They do not make the biology simple. A robot can reduce manual noise, but it cannot remove cell-line drift, donor variability, reagent batch effects, or unclear disease relevance. That is why autonomous medical research must begin with carefully chosen workflows.

The strongest early candidates have certain traits. They are structured enough to encode. They have measurable endpoints. They benefit from repeatability. They involve enough conditions that automated search pays off. Cell culture optimization, sample preparation, maintenance scheduling, imaging-based quality checks, and some assay development tasks fit that profile.

Open-ended biology is harder. A scientist may notice an unexpected morphology, pause the plan, inspect a culture manually, and form a new idea. A robot system can be built to flag anomalies, but interpreting them is still difficult. Some scientific discoveries come from noticing something the protocol did not ask about.

That is why the Tokyo center’s two-lab design is sensible. The current robotic laboratory handles defined workflows. The next-generation laboratory works on autonomy beyond what today’s systems can handle. This separates usable present infrastructure from longer-range research.

Medical autonomy will grow from narrow, well-governed loops, not from a sudden leap to independent robot scientists. That does not make the field less important. It makes the path more realistic.

Protocol translation may be the hardest bottleneck

Buying a robot is easier than turning a human protocol into a reliable robot protocol. A methods section in a paper rarely contains enough information for automation. It may say “mix gently,” “remove medium carefully,” “incubate briefly,” or “handle cells as usual.” A trained person knows what those phrases mean in a local lab. A robot does not.

Protocol translation requires a team to break the method into machine operations. Labware must be defined. Liquid classes must be configured. Pipette depth, speed, acceleration, volume tolerance, mixing cycles, and waiting times must be set. Incubator timing must be controlled. Error handling must be defined. Imaging or measurement steps must be linked to sample identity. Cleaning and contamination controls must be specified.
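The gap between a phrase like “mix gently” and a machine operation can be illustrated with a small sketch. Everything here is assumed for the example: the `MixStep` fields, the phrase table, and the numeric values are placeholders, not validated settings for any real liquid handler.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class MixStep:
    """Explicit parameters a robot needs for one mixing operation (illustrative)."""
    cycles: int
    volume_ul: float
    aspirate_speed_ul_s: float
    tip_depth_mm: float


# Hypothetical lab-specific translations; the numbers are placeholders only.
PHRASE_TO_STEP = {
    "mix gently": MixStep(cycles=3, volume_ul=100.0, aspirate_speed_ul_s=20.0, tip_depth_mm=2.0),
    "mix thoroughly": MixStep(cycles=10, volume_ul=150.0, aspirate_speed_ul_s=80.0, tip_depth_mm=1.0),
}


def translate(phrase: str) -> MixStep:
    """Look up a validated translation; unknown phrases go back to a human."""
    key = phrase.strip().lower()
    if key not in PHRASE_TO_STEP:
        raise KeyError(f"No validated translation for {phrase!r}; needs expert review")
    return PHRASE_TO_STEP[key]


def check_limits(step: MixStep, well_volume_ul: float, max_speed_ul_s: float) -> list[str]:
    """Return human-readable problems instead of silently clamping values."""
    problems = []
    if step.volume_ul > well_volume_ul:
        problems.append("mix volume exceeds well volume")
    if step.aspirate_speed_ul_s > max_speed_ul_s:
        problems.append("aspirate speed exceeds device limit")
    if step.cycles < 1:
        problems.append("at least one mix cycle is required")
    return problems
```

The lookup refuses phrases it has no validated entry for, such as “handle cells as usual,” rather than guessing. That mirrors the point that tacit bench knowledge must be made explicit by someone who understands the biology.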

Then the protocol must be tested. Does the robot-run version produce results comparable to the human-run version? Does it introduce stress that changes biology? Does the robot handle edge cases safely? Does it recover from minor failures? Does the protocol produce useful logs? This work is slow and technical.

A 2023 arXiv paper involving Genki Kanda explored the possibility of using large language models to generate robot scripts from natural-language biological protocols. The direction is important because it addresses one of the biggest gaps in lab automation: the distance between human experimental language and machine-executable commands.

Language models may assist with protocol drafting, step extraction, documentation, and script generation. They must not be treated as trusted autonomous protocol writers in medical research. A plausible-looking robot script can still be unsafe, biologically wrong, or incompatible with equipment. Every protocol touching real samples needs verification.

The shared-center model helps because each validated protocol becomes a reusable asset. The first conversion is expensive. Later users can benefit from the work. Over time, the protocol library becomes a form of institutional knowledge. A university with many validated robotic protocols owns more than hardware. It owns executable scientific practice.

Protocol intelligence may become one of the most valuable assets in biomedical research. It includes knowing how to express tacit bench work as parameters, how to handle errors, how to measure quality, and how to transfer a method between humans and machines.

Software will decide whether the robots work as a lab

A room with 10 robots is not automatically a robotic laboratory. The robots must be scheduled, monitored, coordinated, and connected to instruments. Samples must be tracked. Protocols must be versioned. Results must be linked to operations. Failures must be flagged. Users must know what happened. This is a software problem as much as a robotics problem.

Life-science scheduling is especially hard. Some steps must happen within narrow time windows. Reagents degrade. Cells cannot sit too long outside incubators. Instruments are shared. Some workflows take hours; others take weeks. Some samples are rare or patient-derived and cannot be repeated easily. A scheduler must understand biological constraints, not only machine availability.
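As one example of a biological constraint a scheduler has to respect, the sketch below checks how long each plate has spent outside an incubator. The event format (`"out"` and `"in"` markers per plate) and the exposure limit are assumptions made for illustration.

```python
from __future__ import annotations


def longest_exposures(events: list[tuple[float, str, str]], now_s: float) -> dict[str, float]:
    """Longest continuous time each plate spent outside an incubator.

    events holds (timestamp_s, plate_id, kind) tuples, kind being "out" or "in".
    Plates still outside at now_s are measured up to now_s.
    """
    out_since: dict[str, float] = {}
    longest: dict[str, float] = {}
    for t, plate, kind in sorted(events):
        if kind == "out":
            out_since[plate] = t
        elif kind == "in" and plate in out_since:
            longest[plate] = max(longest.get(plate, 0.0), t - out_since.pop(plate))
    for plate, t in out_since.items():
        longest[plate] = max(longest.get(plate, 0.0), now_s - t)
    return longest


def violations(events: list[tuple[float, str, str]], now_s: float,
               max_exposure_s: float) -> list[str]:
    """Plate IDs whose longest exposure exceeded the allowed limit."""
    exposures = longest_exposures(events, now_s)
    return sorted(p for p, seconds in exposures.items() if seconds > max_exposure_s)
```

A real scheduler would use a check like this predictively, refusing to start a step if the queue ahead would push a plate past its limit, rather than only reporting violations after the fact.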

The RSC review on self-driving laboratories in Japan discusses the need to consider constraints such as reaction times and degradation of living cells and reagents. It also describes work on monitoring labware status and converting natural-language procedures into robot-operating code.

This is where AI becomes practical. A useful AI layer may not look like a chatbot. It may be a scheduler that prevents a culture plate from waiting too long, a vision model that detects whether a tip failed to pick up liquid, a growth predictor that triggers passage timing, or an optimizer that chooses the next set of culture conditions.

The software must also support traceability. If a result is surprising, researchers need to reconstruct the run. Which robot performed the operation? Which protocol version was used? Which reagent lot? Which incubator? Which plate position? Was there an alarm? Did a device retry a failed step? Was a sample outside controlled conditions longer than expected?
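The questions above can be answered mechanically if each logged step carries the right fields. The following sketch assumes a flat list of step dictionaries with hypothetical keys (`run_id`, `robot_id`, `protocol_version`, `reagent_lot`, `step`, `alarm`) and treats a step name that appears more than once in a run as a retry.

```python
from __future__ import annotations

from collections import Counter


def summarize_run(log: list[dict], run_id: str) -> dict:
    """Collect the traceability facts for one run from a flat step log."""
    steps = [e for e in log if e["run_id"] == run_id]
    step_counts = Counter(e["step"] for e in steps)
    versions = sorted({e["protocol_version"] for e in steps})
    return {
        "robots": sorted({e["robot_id"] for e in steps}),
        "protocol_versions": versions,
        "reagent_lots": sorted({e["reagent_lot"] for e in steps if e.get("reagent_lot")}),
        "alarms": [e["step"] for e in steps if e.get("alarm")],
        # A step logged more than once is treated as retried (an assumption).
        "retried_steps": [name for name, n in step_counts.items() if n > 1],
        # More than one protocol version inside a single run is a red flag.
        "mixed_versions": len(versions) > 1,
    }
```

The summary is only as good as the log. If a device does not report retries or alarms, the corresponding entries stay empty, which is an absence of evidence and not proof that nothing went wrong.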

Without that traceability, automation loses much of its scientific value. A robot-run experiment with poor records may be as opaque as a poorly documented manual experiment. A robot-run experiment with full records becomes a stronger scientific object.

Scale makes this harder. Ten robots can be managed with close supervision. Two thousand robots require operational discipline closer to cloud infrastructure, semiconductor manufacturing, or automated logistics. Software updates, device status, queue conflicts, access control, emergency stops, and maintenance schedules become part of scientific reliability.

A robot lab is a cyber-physical research system. The “cyber” part (software, data, security, scheduling, version control) is not support work. It is part of the experiment.

Cloud operation could widen access, but biology remains physical

Science Tokyo says the Robotics Innovation Center aims to develop next-generation research infrastructure that integrates autonomous experimentation and cloud-based operation. That phrase points to a major change in who might access advanced laboratory systems.

Cloud-based operation suggests that researchers may be able to submit protocols, monitor work, and receive data without standing next to the robot. This would make a robotic laboratory more like a research platform. A small academic group, hospital, startup, or external collaborator might use advanced robotic workflows without buying the machines.

The idea is not new. Robotic crowd biology, proposed in 2017, imagined many humanoid robots and instruments in a large laboratory space operated remotely through online systems. The University of Tokyo’s RCAST summary and Robotic Biology Institute materials described a model in which researchers submit protocols and receive experimental results from a shared robotic system.

The cloud analogy is useful, but it has limits. Biology is not software. Samples must be collected, shipped, received, stored, tracked, and sometimes processed quickly. Human-derived materials may be subject to consent, privacy, institutional review, biosafety, and cross-border restrictions. Reagents expire. Cells die. Organoids mature. Patient samples vary.

A cloud robotic lab can widen access, but it cannot make biology weightless. It must solve logistics, governance, sample identity, and chain of custody. It must also define what users are allowed to control remotely. No serious medical lab should let external users send arbitrary robot commands into a sterile workspace. Remote operation needs guardrails, approved protocols, simulation checks, and facility review.

The most plausible near-term model is not open remote control. It is structured access. Users select validated workflows or work with facility staff to adapt protocols. The facility reviews safety and feasibility. Robots execute approved runs. Users receive data, logs, and quality notes. Custom protocols become possible once they pass validation.
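The structured-access flow described above can be sketched as a small state machine in which a run cannot reach the robots without passing facility review. The state names and allowed transitions are assumptions chosen to match the paragraph, not a documented Science Tokyo workflow.

```python
from enum import Enum


class RunState(Enum):
    SUBMITTED = "submitted"
    UNDER_REVIEW = "under_review"
    APPROVED = "approved"
    REJECTED = "rejected"
    EXECUTING = "executing"
    COMPLETED = "completed"


# Hypothetical transition table: execution is reachable only through approval.
ALLOWED = {
    RunState.SUBMITTED: {RunState.UNDER_REVIEW},
    RunState.UNDER_REVIEW: {RunState.APPROVED, RunState.REJECTED},
    RunState.APPROVED: {RunState.EXECUTING},
    RunState.EXECUTING: {RunState.COMPLETED},
    RunState.REJECTED: set(),
    RunState.COMPLETED: set(),
}


def advance(current: RunState, target: RunState) -> RunState:
    """Move a run request to the next state, refusing any shortcut."""
    if target not in ALLOWED[current]:
        raise ValueError(f"Cannot move from {current.value} to {target.value}")
    return target
```

Encoding the rule in the transition table, instead of in scattered checks, makes the guardrail easy to audit: there is no path from `SUBMITTED` to `EXECUTING` that skips review.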

That model could still change biomedical research. Many institutions cannot afford advanced robotic infrastructure. A shared facility could let more researchers run high-quality automated workflows. The center becomes not only a local lab but a regional or national research service.

Standards will decide whether the 2040 vision scales

A 2,000-robot research infrastructure would collapse without standards. Every robot, instrument, protocol, sample, and data output must be named, tracked, versioned, and connected. If each workflow is custom-built with incompatible formats, the system becomes impossible to scale.

The RSC review of self-driving laboratories in Japan notes that Japan has active laboratory automation communities, including the Laboratory Automation Suppliers’ Association, established in 2019, and broader activity linking researchers, engineers, industry, government, and media around automated science.

That community work matters because standards often start as practical agreements before they become formal documents. How should labware be described? How should deviations be logged? How should protocol versions be named? How should sample IDs travel across instruments? How should microscopy outputs connect to robot actions? How should quality flags be encoded? How should users export records for publication or regulatory review?

These questions sound mundane. They are decisive. Without consistent metadata, AI cannot learn across experiments. Without versioned protocols, replication becomes confused. Without common device interfaces, adding instruments becomes costly. Without sample identity standards, medical research becomes unsafe.

Standards also affect competition. If a facility uses closed formats and vendor-specific scripts, it may become dependent on one provider. If protocols and metadata are portable, the center can combine different robot types and instruments. That flexibility matters because no single vendor will solve every biomedical automation task.

A large robot lab also needs simulation. Before a protocol runs physically, it should be tested for collisions, timing conflicts, labware compatibility, and schedule feasibility. Simulation becomes more important as protocols grow more complex and as multiple robots share space.
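Of the checks listed above, timing conflicts are the simplest to illustrate. This sketch takes planned instrument reservations and reports any two that overlap on the same instrument. The reservation tuple format is an assumption, and collision or labware checks would need geometric models that are well beyond this example.

```python
from __future__ import annotations

from itertools import combinations

# (instrument, start_s, end_s, owner); the tuple layout is illustrative.
Reservation = tuple[str, float, float, str]


def find_conflicts(reservations: list[Reservation]) -> list[tuple[str, str, str]]:
    """Return (instrument, owner_a, owner_b) for every overlapping pair.

    Intervals are half-open: a reservation ending at t does not conflict
    with one starting at t on the same instrument.
    """
    conflicts = []
    for a, b in combinations(reservations, 2):
        same_instrument = a[0] == b[0]
        overlaps = a[1] < b[2] and b[1] < a[2]
        if same_instrument and overlaps:
            conflicts.append((a[0], a[3], b[3]))
    return conflicts
```

The pairwise comparison is quadratic in the number of reservations, which is fine for a dry run of a few protocols. A facility planning thousands of steps would more likely sort intervals per instrument first.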

Scale requires boring interoperability. The public sees robot arms. The facility succeeds or fails on identifiers, formats, validation rules, maintenance logs, and shared language across biology, robotics, and data systems.

Japan’s angle differs from the global automation race

The global race in self-driving laboratories is not limited to Japan. Researchers in North America, Europe, China, South Korea, and other regions are building automated systems for chemistry, materials science, biology, and drug discovery. Some focus on flow chemistry. Some use microfluidics. Some build modular liquid-handling systems. Some create mobile robots that move plates between instruments. Some companies tie robotic labs to AI drug design.

Japan’s distinctive angle is the combination of humanoid laboratory robotics and a strong industrial robotics base. The RSC review describes Maholo LabDroid as a versatile humanoid used across fundamental and clinical research, and it notes Japan’s robot and instrument ecosystem, including companies such as Yaskawa and FANUC.

The humanoid strategy has strengths and weaknesses. Its strength is compatibility with existing human-designed equipment. Many biology labs cannot rebuild everything around new automation lines. A humanoid robot that uses common instruments may reduce the redesign burden. Its weakness is cost and complexity. Humanoid systems are not always the fastest or simplest way to perform a high-volume task.

Other countries may choose different architectures. A materials lab may benefit from modular synthesis stations. A drug-screening lab may use high-throughput liquid handlers. A chemistry lab may use flow reactors. A biology lab may need mobile robots, incubator integration, imaging, and flexible manipulation. There will be no single winning form.

Insilico Medicine’s 2025 announcement of a bipedal humanoid called Supervisor in an AI-powered robotic drug discovery laboratory shows that embodied AI and humanoid supervision are also entering commercial drug discovery narratives. The point is not that every lab needs a humanoid. The point is that companies and universities are testing physical AI systems beyond purely digital models.

The Tokyo center will be watched because it is public, institutional, and medical. A private company may show impressive automation but keep many details closed. A university center, if it publishes protocols and results, can shape the field more openly. It can train people, set benchmarks, and show which workflows are actually ready.

The global race will probably produce hybrid laboratories. Humanoid robots will handle flexible tasks. Specialized machines will handle high-throughput tasks. AI will schedule, select, classify, and monitor. Human researchers will supervise the scientific frame. The Tokyo lab is one prominent version of that future, not the only one.

Open and low-cost automation will shape the field from below

High-end systems like Maholo get attention, but laboratory automation will not grow only through expensive flagship centers. Lower-cost and open systems also matter because they train people, lower barriers, and let small labs prototype automated workflows.

Hokkaido University announced FLUID in 2025 as an open-source, 3D-printed robotic platform for automated materials synthesis. The system is not a direct competitor to Maholo because it targets materials synthesis rather than medical cell culture. It still shows a broader pattern: researchers want automation that is cheaper, modifiable, and accessible outside elite facilities.

This lower-cost layer matters for education. Students who build, modify, and repair simple automation platforms learn the physical logic of robotic science. They learn why alignment matters, why calibration drifts, why liquid handling is difficult, why metadata matters, and why a protocol is not truly automated until it can recover from errors.

High-end centers and open platforms may reinforce each other. A flagship robotic medical lab can validate complex workflows and set quality expectations. Low-cost platforms can broaden the user base and train future automation scientists. Mid-tier instruments can automate common tasks inside individual labs. The field needs all three layers.

This matters for Science Tokyo’s 2040 vision. A country cannot operate thousands of research robots without a workforce that understands automation. That workforce will not be trained only on million-dollar systems. It needs hands-on exposure at many levels.

Open systems also put pressure on closed vendors. If researchers learn to expect programmable, modifiable, documented platforms, proprietary systems must justify their limits. In medical research, full openness is not always possible because validated systems need control. Still, transparent interfaces and data export will become more important.

The future of lab automation will be shaped from both ends: expensive shared infrastructure at the top and accessible experimental platforms at the bottom. Tokyo’s robotic medical lab belongs to the first category. It will be stronger if the wider ecosystem develops the second.

Pharma will care about decision quality, not robot theater

Pharma and biotech will watch the Tokyo center because medical research automation touches their hardest problem: decision-making under uncertainty. Drug discovery is expensive not only because experiments cost money, but because weak assumptions often survive too long. A robot lab that generates cleaner, more reproducible, better-documented experimental evidence could change when companies stop, redirect, or expand programs.

The business case is strongest where workflows are repetitive, sensitive, and data-rich. Cell-based assays, stem-cell models, organoids, sample preparation, biomarker testing, assay development, and protocol search fit this pattern. A robot that reduces manual variation may make early signals easier to interpret. A closed-loop system may test conditions faster. A strong audit trail may make collaboration easier.

The Tokyo center’s industry links are visible in the opening ceremony program, which included Astellas Pharma and Yaskawa Electric among listed participants. That does not mean the center is a pharma lab. It means the project sits in a network where industry demand is likely.

The broader market is also moving. Reuters reported in March 2026 that Eli Lilly expanded a partnership with Insilico Medicine in a deal worth up to $2.75 billion for AI-powered drug discovery. That kind of deal shows that pharma is willing to invest heavily in AI-assisted discovery platforms. Robotic labs are the physical counterpart to that digital push.

AI drug discovery often faces a gap between prediction and experiment. A model may suggest a compound, target, or mechanism, but biology must still confirm it. Robotic labs make the feedback loop faster and more systematic. If an AI model proposes ideas but experiments remain slow, noisy, and manual, the system bottleneck remains physical. Robot labs attack that bottleneck.

Still, pharma will not pay for robot theater. It will pay for better decisions: stronger assay quality, cleaner reproducibility, faster protocol search, more reliable cell models, better documentation, and evidence that weak candidates can be killed earlier. A robot center that produces those outcomes will matter. A center that only produces impressive videos will not.

The real commercial question is whether robotic experimentation improves the quality of go/no-go decisions. If it does, pharma will keep investing. If it only shifts labor costs without improving evidence, adoption will stay limited.

Tokyo robot lab facts and cautions

| Item | Current status | Editorial caution |
|---|---|---|
| Institution | Institute of Science Tokyo, Yushima Campus | Some reports loosely describe the site in ways that can confuse it with other Tokyo universities |
| Facility | Robotics Innovation Center | Official timeline says established Oct. 1, 2025; full robotic facility operation Apr. 1, 2026 |
| Main robot | Maholo LabDroid and other robotic systems | Maholo is not new; the center-scale deployment is the news |
| Current robot count | Reported as 10 robots | Public English materials do not fully detail every system in the count |
| Long-range target | About 2,000 robots by 2040 | This is a strategic target, not proof of guaranteed deployment |
| Research scope | Life science, cell culture, organoids, proteomics, next-generation sequencing | “Medical lab” does not mean a hospital ward treating patients directly |
| Autonomy level | Programmed robotic workflows with AI development pathway | Humans still define aims, approve work, validate results, and carry accountability |

The caution column matters because the story is important without exaggeration. The center represents a real shift in research infrastructure, but it should not be described as independent robot scientists replacing medical researchers.

Regulation will shape the pace more than hype

Medical research automation sits near regulated territory even when it is not itself a regulated medical product. If robots handle stem cells, patient-derived samples, human tissue, organoids, or therapy-adjacent workflows, institutions must manage biosafety, consent, privacy, sample identity, access control, and audit records.

A robot does not remove liability. It redistributes it. If a robot contaminates a sample, executes the wrong protocol version, uses the wrong reagent, or mislabels a plate, responsibility must be clear. The principal investigator, facility director, vendor, software provider, and institution may all have roles. Contracts and governance must define them before failures occur.

The closer a workflow moves to clinical translation, the stricter the expectations become. Research automation can explore conditions and refine methods. Clinical manufacturing requires formal validation, quality management, deviation handling, chain of custody, release criteria, and regulatory review. A research robot does not become a clinical manufacturing system merely because it handles cells.

The 2023 retinal cell processing facility paper is important because it evaluated cleanliness and aseptic performance in a clinical research setting rather than only robot motion. That is the kind of evidence regulators and clinical collaborators will expect.

AI adds another layer. If AI schedules a maintenance step based on predefined thresholds, the governance burden is manageable. If AI proposes a change that affects clinical-grade cell production, review requirements rise. The more AI influences experiment choice, the more institutions need validation, review gates, and documentation.

Regulation may slow adoption. It may also improve the field. Medical robot labs cannot rely on vague claims. They need evidence that workflows are controlled, records are complete, and errors are investigated. The best centers will treat regulation not as a burden after the fact but as a design principle.

Trust in medical automation will come from documented control. A robot lab must show not only that it can perform operations, but that it can prove what happened, detect problems, and assign responsibility.

Cybersecurity becomes laboratory safety

A cloud-connected robot lab is a cybersecurity system with physical consequences. The risk is not only stolen data. A malicious or careless change to a protocol could damage samples, waste expensive reagents, compromise results, or create safety hazards. If human-derived samples or omics data are involved, privacy risk rises as well.

Laboratory cybersecurity usually sounds less dramatic than robot hardware, but it may decide whether remote operation is safe. Users should not be able to send arbitrary commands into a wet lab. Protocol changes should be versioned, reviewed, and tested. Remote access should use strong authentication. Device networks should be segmented. Logs should be protected from tampering. Software updates should be controlled.

A robot lab also needs protection against accidental harm. A badly written script may not be malicious, but it can still break a workflow. It may leave cells outside the incubator too long, use the wrong volume, collide with equipment, or repeat an operation after a sample has already moved. Simulation and pre-run validation reduce those risks.
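What pre-run validation might look like can be shown with a small sketch. It is an illustration only, not a description of any system used at Science Tokyo or by any vendor. The `Step` type, the action names, and both limits are hypothetical, and the check assumes steps run strictly one after another.

```python
from dataclasses import dataclass

# Hypothetical limits, chosen only for illustration.
MAX_OUT_OF_INCUBATOR_S = 600.0
MAX_WELL_VOLUME_UL = 200.0


@dataclass(frozen=True)
class Step:
    action: str             # "remove_from_incubator", "dispense", "return_to_incubator"
    plate: str              # plate identifier
    duration_s: float = 0.0
    volume_ul: float = 0.0  # only meaningful for "dispense"


def validate(steps):
    """Dry-run a protocol and return a list of problems; empty means no issue found."""
    errors = []
    outside = {}     # plate -> seconds spent outside the incubator so far
    flagged = set()  # plates already reported for exceeding the time limit
    for i, step in enumerate(steps):
        if step.action == "remove_from_incubator":
            if step.plate in outside:
                errors.append(f"step {i}: {step.plate} is already outside the incubator")
            else:
                outside[step.plate] = 0.0
        elif step.action == "dispense":
            if step.plate not in outside:
                errors.append(f"step {i}: {step.plate} is not on the deck")
            if not 0 < step.volume_ul <= MAX_WELL_VOLUME_UL:
                errors.append(f"step {i}: volume {step.volume_ul} uL is out of range")
        elif step.action == "return_to_incubator":
            if step.plate not in outside:
                errors.append(f"step {i}: {step.plate} was never removed")
        else:
            errors.append(f"step {i}: unknown action {step.action!r}")

        # Steps are sequential, so every plate on the deck waits through this one.
        for plate in outside:
            outside[plate] += step.duration_s
            if outside[plate] > MAX_OUT_OF_INCUBATOR_S and plate not in flagged:
                flagged.add(plate)
                errors.append(f"step {i}: {plate} exceeds the out-of-incubator limit")

        if step.action == "return_to_incubator":
            outside.pop(step.plate, None)

    for plate in outside:
        errors.append(f"end of protocol: {plate} was never returned to the incubator")
    return errors


protocol = [
    Step("remove_from_incubator", "P1", duration_s=30),
    Step("dispense", "P1", duration_s=60, volume_ul=50),
    Step("return_to_incubator", "P1", duration_s=30),
]
problems = validate(protocol)
```

A real facility would check far more, including collisions, reagent inventory, and instrument state. The point is only that a script can be rejected before it touches a sample.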

Scale increases exposure. A bug in one robot script may damage one experiment. A bug in a shared protocol library may damage many. A scheduling failure may cascade across instruments. A compromised model may influence many experimental choices. The more programmable the lab becomes, the more software governance becomes part of safety.

Cybersecurity also affects scientific integrity. If logs can be altered, the audit trail loses value. If protocol versions are unclear, results become hard to trust. If external users can access data beyond their project, collaboration becomes risky. If sample identifiers leak, privacy may be compromised.
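One common way to make log tampering detectable is a hash chain, in which each entry stores a hash that depends on the entry before it. The sketch below is a minimal illustration under assumed names (`append_entry`, `verify_log`, and the record fields are hypothetical) and does not describe how any particular lab or vendor stores its records.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def entry_hash(prev_hash, record):
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + payload).hexdigest()


def append_entry(log, record):
    """Append a record, chaining it to the current end of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash,
                "hash": entry_hash(prev_hash, record)})


def verify_log(log):
    """Return the index of the first inconsistent entry, or None if the chain is intact."""
    prev_hash = GENESIS
    for i, entry in enumerate(log):
        if entry["prev"] != prev_hash or entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return i
        prev_hash = entry["hash"]
    return None


log = []
append_entry(log, {"step": "dispense", "plate": "P-001", "volume_ul": 50})
append_entry(log, {"step": "incubate", "plate": "P-001", "minutes": 30})
append_entry(log, {"step": "image", "plate": "P-001"})
intact = verify_log(log)

# Simulate someone quietly editing an earlier record.
log[1]["record"]["minutes"] = 5
first_bad = verify_log(log)
```

A chain like this only detects edits. It does not prevent them, and an attacker who can rewrite the whole log can rebuild every hash, so in practice the latest hash would also need to be anchored somewhere the lab software cannot modify.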

A robot lab is not safe because robots are precise. It is safe only if the whole cyber-physical system is controlled. That includes hardware, software, users, data, protocols, and emergency procedures.

Ethics begins with access, consent, and accountability

The idea of robots and AI generating scientific knowledge invites philosophical questions. Who gets credit when an AI suggests a condition and a robot runs the experiment? Can a machine be an inventor? Should AI influence research priorities? Those questions matter, but the first ethical layer is more practical.

Access is one issue. If robotic labs serve only wealthy institutions and companies, they may widen research inequality. If they operate as shared infrastructure with clear access rules, they may give smaller groups access to experimental capacity they could never build alone. Science Tokyo’s stated plan to support outside users will be worth watching for that reason.

Consent is another issue. Medical research often uses human-derived samples, genomic data, imaging data, or clinical metadata. A robotic workflow does not reduce the obligation to respect consent limits. If samples are processed through cloud-operated systems or shared facilities, institutions must make sure data and material use remain within approved boundaries.

Bias can enter through protocols and data. If a robotic center automates only methods from elite labs, it may encode their assumptions. If AI search methods chase easy metrics, they may neglect endpoints that matter clinically. If cell models or patient-derived samples lack diversity, the automated results may not generalize.

Accountability must be explicit. When a robot runs the experiment and AI helps choose the condition, humans still sign off on the work. The facility must define who approved the protocol, who reviewed the output, who owns the data, who handles deviations, and how contributions are credited.

Credit may become contentious. Facility staff who translate protocols into robot workflows may make intellectual contributions, not merely technical ones. AI systems may propose conditions that lead to publishable findings. Current authorship norms may not fit every case. Institutions will need policies before disputes appear.

The ethical argument for the Tokyo lab is strongest when automation is tied to transparency, shared access, better records, and safer reproducibility. It is weakest when automation is sold as a replacement for human judgment.

The public story should avoid two mistakes

The first mistake is to claim that robots now do science alone. They do not. They execute protocols, monitor conditions, and in some cases choose among defined experimental options. They do not replace the full human chain of scientific reasoning: problem selection, disease relevance, causal interpretation, ethical review, and accountability.

The second mistake is to dismiss the lab as fancy pipetting. That undersells it. A robot-run medical lab is not only a mechanical assistant. It is a system for turning experimental work into programmable infrastructure. Its value lies in repeatable execution, operational metadata, protocol libraries, closed-loop search, remote access, and auditability.

The accurate claim is stronger than either exaggeration: Science Tokyo is building a human-supervised robotic research infrastructure that could shift parts of medical science from manual bench work toward machine-executed, data-rich experimental systems.

That statement does not require science fiction. It does not claim that robots are curing disease on their own. It says the operating layer of biomedical research is changing. That is already enough.

Careful language is especially important because medical AI and robotics face public distrust. People worry about safety, jobs, accountability, and loss of human control. Inflated claims attract attention but weaken trust. A realistic account allows readers to see both the opportunity and the limits.

Science Tokyo’s own public materials provide a better model than many headlines. They emphasize reproducibility, research infrastructure, demographic pressure, AI-equipped robots, user support, and collaboration between humans and machines. That framing should guide the broader discussion.

The lab technician role will change before it disappears

The idea that robots will simply replace lab technicians misses the structure of laboratory work. Many tasks remain difficult to automate: unusual sample handling, troubleshooting, cleaning, maintenance, safety response, improvised inspection, reagent preparation, protocol validation, and exception handling. Robots need people around them, even if humans are not inside the active workspace during operations.

What changes is the center of gravity. Repetitive, documented, time-sensitive tasks may move to robots. Human staff may focus more on protocol setup, quality review, equipment maintenance, data checking, deviation handling, and user support. In advanced centers, technical work may become more skilled rather than less.

This transition may be hard for workers whose identity is tied to hands-on bench skill. It may also create new opportunities for people who combine biology, robotics, and data skills. A technician who understands sterile technique and robot logs may become more valuable, not less.

Training will be decisive. Institutions cannot assume that biologists will automatically become automation users or that engineers will automatically understand biological constraints. The facility must teach people how to design robot-ready protocols, interpret machine logs, and recognize when an automated result is biologically suspicious.

Science Tokyo’s commitment to user support and education is therefore not a side service. It is part of the center’s scientific value.

There is also a labor ethics question. Automation should not be used as a vague excuse to devalue technicians. Many labs depend on technicians’ tacit knowledge. A successful robot center should capture that knowledge respectfully and turn it into documented, reusable workflows. The people who know the protocols best should be part of the automation process.

The best version of the robot lab does not erase laboratory expertise. It changes where that expertise is applied.

Boring operations will decide whether the center succeeds