Chatbots frustrate people for a simple reason: they often shift work from the system to the user while pretending to do the opposite. That tension sits underneath most complaints. A chatbot looks effortless in demos. In real use, the user has to guess what the system knows, phrase the request in the right way, spot mistakes, re-explain context, verify claims, and decide when to stop trusting the answer. The moment that burden becomes visible, the “helpful assistant” starts to feel like a drain.

The market has moved fast, but the friction points are not mysterious. Research on enterprise AI adoption keeps flagging inaccuracy as a top operational risk, while user studies on customer-service bots keep showing that conversational breakdown damages trust and emotion quickly. Public sentiment has also stayed mixed: concern is not a fringe reaction anymore; it is mainstream.

Reliability is still the first thing users notice

The biggest frustration is not that chatbots make mistakes. People expect mistakes. The real problem is the style of the mistake. Chatbots often answer with a level voice, polished structure, and strong internal coherence even when the answer is wrong, incomplete, out of date, or fabricated. That combination is uniquely irritating because it forces the user into detective mode. A weak search result at least looks uncertain. A chatbot can sound finished while being fundamentally unstable.

This matters more in work than in casual play. In a professional setting, users are rarely asking for amusing trivia. They are asking for policy summaries, customer answers, code changes, data explanations, meeting synthesis, compliance wording, or internal process guidance. A wrong answer in those settings does not just waste a few seconds. It can trigger rework, reputational risk, or a human escalation that should have happened earlier.

That is why the frustration around hallucinations feels so persistent. It is not only a model-quality issue. It is a trust-calibration issue. OpenAI’s paper on hallucinations frames the problem in terms of overconfident plausible falsehoods, and NIST’s generative AI risk profile treats confabulation and incorrect but persuasive output as core trustworthiness risks. Those descriptions match what users report in plain language: “It sounds right until I check it.”

The business layer makes this sharper. McKinsey’s 2025 global survey says inaccuracy is the AI-related risk organizations report most often. Deloitte’s enterprise work shows leaders pushing hard for value while still grappling with implementation limits and operational risk. That gap between executive ambition and frontline reliability often lands directly on the user. The chatbot ships before the answer quality is stable enough for daily work.

There is also a psychological effect here. Users do not merely dislike wrong answers. They dislike the feeling that they have to babysit a machine that claims competence. Once that feeling sets in, even correct responses start to feel suspect. Trust becomes expensive. Every answer demands a mental checksum.

A lot of chatbot strategy still dodges this point. Teams talk about adoption, engagement, throughput, containment rate, or cost per contact. Users care about a more brutal metric: did this save me effort, or did it create a second job called checking the bot? If the second job appears often enough, the tool becomes annoying no matter how advanced the model is.

Prompting is still too much hidden labor

A second major frustration is less dramatic but just as common: users are expected to become good managers of the chatbot before the chatbot becomes useful. This is one of the least glamorous truths in the whole category. People do not want to “learn prompt engineering” just to get a normal answer.

Developers and advanced users accept this more easily because they see prompting as configuration. Regular users experience it differently. They see a chat box, type in plain language, and assume ordinary communication rules apply. Then they discover the system works much better when they provide role framing, constraints, output format, examples, definitions of done, source requirements, fallback rules, and explicit instructions for uncertainty. At that point the product has quietly changed shape. It is no longer just answering. The user is programming behavior through prose.

Official guidance from model providers makes this clear, even when it is written for builders rather than end users. OpenAI’s prompt guidance emphasizes explicit output contracts, tool-use expectations, completion criteria, and grounding rules. Anthropic’s documentation says not every failure is a prompting problem and notes that latency and cost can sometimes be solved only by choosing a different model. That is honest guidance, but it also reveals the underlying truth: chatbot quality depends heavily on setup discipline.

For product teams, this creates a design trap. A chatbot can look elegant because the interface is simple. Yet the real complexity sits off-screen in user effort. Users learn, often painfully, that short prompts produce generic answers, vague prompts invite confident guessing, and under-specified tasks lead to drift. They start building rituals: “be concise,” “ask follow-up questions,” “cite sources,” “do not assume,” “answer only from the document.” Those rituals are forms of damage control.

That hidden labor is one reason people describe chatbots as “high maintenance.” They can be brilliant in the hands of someone who knows how to structure a task. They can be maddening for someone who expects the system to do the inferential work itself.

A compact view of where frustration starts

| Frustration | What users feel | What is usually happening underneath |
|---|---|---|
| Confident wrong answers | “It sounds sure, but I can’t trust it.” | Weak grounding, hallucination, stale knowledge, poor uncertainty handling |
| Repeated re-explaining | “Why do I have to say this again?” | Context-window limits, memory gaps, weak retrieval, session drift |
| Overly generic responses | “This says nothing.” | Low-context prompting, safety overgeneralization, poor domain tuning |
| Slow or erratic behavior | “It was fast yesterday. Today it stalls.” | Model choice, tool latency, overloaded pipelines, long context costs |
| No path to a human | “I’m trapped in the bot.” | Bad service design, containment bias, weak escalation logic |

This table matters because it shows a pattern product teams often miss: what feels like one bad conversation is usually a stack of technical and design decisions leaking into the user experience. The user does not care which layer failed. The user only sees friction.

Context loss makes the whole interaction feel fragile

Few things annoy users faster than having to repeat themselves. In chatbot use, that annoyance is magnified because the interface implies continuity. It looks like a conversation, so people expect conversational memory. What they often get is something much narrower: temporary recall, partial session state, inconsistent retrieval from previous turns, or memory that exists in one mode but not another.

This is where expectation and architecture collide. Anthropic’s documentation describes the context window as the model’s working memory and warns that more context is not automatically better because recall and accuracy can degrade as token count grows. Google’s long-context material makes a similar point from a different angle: long context expands what is possible, but it still needs careful use. Even products with very large windows do not erase the problem of relevance, retrieval quality, or context decay.

Users do not experience that as “context rot.” They experience it as something ruder: the bot forgot. Or worse, the bot half-remembers and gets it wrong. Half-memory is often more frustrating than no memory because it creates false confidence. The system drags old assumptions into a new answer, mixes sessions, latches onto a detail that no longer matters, or misses the one instruction that did matter.

Memory features complicate this further. OpenAI’s help materials state that users can control saved memories, turn memory off, and use temporary chat, which is good product hygiene. Yet those same materials also make clear that “memory” is not the same as perfect persistence or perfect recall. A user may assume the system will remember a standing preference or project context and then discover that the memory layer is partial, configurable, absent in a given mode, or simply not activated the way they expected.

This mismatch produces a very specific kind of fatigue. People start adding reminders to every prompt. They build pseudo-memory manually: project summaries, standing rules, copied snippets, style instructions, lists of prior decisions. In other words, they do the machine’s context management themselves.

There is another twist. Bigger context windows have encouraged a common myth that memory is solved if you just “put everything in.” It is not. Long context can improve coverage, but it does not automatically improve prioritization. The model still has to identify what matters, ignore what does not, maintain state over turns, and resist contradiction from stale material. More text in the window can create more opportunity for distraction.

For service chatbots, the cost is obvious. If a customer has already explained a billing issue twice, any additional repetition feels disrespectful. For knowledge work, the effect is subtler but still corrosive. Analysts, marketers, lawyers, recruiters, and product managers do not want to restate the frame of every task. They want continuity. When continuity breaks, the chatbot stops feeling like a partner and starts feeling like a forgetful intern.

Source opacity makes users work harder than they should

Another major frustration is that many chatbots are still too opaque about where an answer came from. Users increasingly understand that a fluent answer is not the same thing as a grounded answer. They want to know whether the system used current web data, internal documents, prior chat context, model pretraining, or a blend of all four. When that remains unclear, the user has to infer provenance from style, and style is a terrible signal.

This problem is especially serious in work settings where people need answer traceability, not just answer quality. A compliance lead wants the policy clause. A customer-support agent wants the exact knowledge-base article. A manager wants the spreadsheet line, not a paraphrase that sounds close enough. Without visible grounding, users are forced to run secondary checks outside the tool.

Research on citations and trust shows why this matters. A 2025 study found that citations significantly increase trust in LLM-generated responses, even when users do not verify them. That result is interesting for two reasons. First, it confirms that people use citation presence as a quality cue. Second, it implies a risk: citation formatting can raise trust even when the evidence quality is weak. In other words, source display helps, but fake confidence can migrate from prose into references.

That is why grounded generation matters more than decorative linking. Google’s documentation on grounding and RAG is explicit that retrieval-augmented generation is about connecting models to relevant, current, or proprietary data. Used well, that changes the user experience from “take this on faith” to “inspect the basis of the answer.”

A badly designed chatbot often sits in the awkward middle. It gives polished summaries without enough evidence, or it provides links that are too broad to audit, or it pastes citations that are technically present but not actually load-bearing. Users then have to decide whether to trust the summary, click away to verify it, or redo the task manually.

That creates a hidden cost curve. The value of a chatbot answer does not depend only on whether it is correct. It depends on how expensive it is to trust. A concise answer with precise evidence is far more useful than a longer answer that leaves provenance vague. Yet many chatbot products still optimize for surface smoothness over auditability because smoothness demos well.

This is one place where the category still underestimates professional users. People who do serious work do not merely want “answers.” They want answers that remain accountable after they leave the chat window.

Speed and consistency failures kill goodwill fast

Users are more forgiving of a weak answer than many teams assume, provided the weakness is clear and the interaction is fast. What they hate is a weak answer that also takes too long, changes quality unpredictably, or breaks the flow of work.

Latency sounds like a technical detail, but it is a product emotion. A delay of a few seconds can feel fine if the answer is excellent. The same delay feels wasteful if the result is generic or incorrect. Anthropic’s prompt-engineering docs make a point that is easy to overlook: some failures tied to latency and cost are better solved by changing the model than by changing the prompt. That is not just a developer note. It is a clue to a common user frustration. Teams often try to fix performance with instructions when the real issue is architecture.

Consistency is the companion problem. Users hate having to recalibrate on every session. They notice when the same prompt gives a strong answer on Monday and a vague one on Wednesday. They notice when the bot follows formatting rules sometimes and ignores them other times. They notice when tool use appears and disappears unpredictably. OpenAI’s current prompt guidance leans heavily on explicit contracts and completion criteria for precisely this reason: production assistants fail when behavior is underspecified.

In practice, speed and consistency are where product marketing collides with real operations. A chatbot can score highly in isolated evaluations while still feeling unreliable in workflow. That usually happens because the surrounding system introduces variability: model routing changes, retrieval quality fluctuates, APIs fail silently, long context slows inference, or tool calls add hidden latency.

The user does not parse any of that. They just conclude that the bot is moody.

That impression is damaging because it encourages two behaviors that reduce long-term value. One group of users stops using the bot for anything important. Another group starts over-specifying every task to reduce variance, which increases prompt burden and turns “quick help” into setup work. Either way, the promise of conversational ease collapses.

The best chatbot experiences often feel almost boring in comparison with flashy demos. They are fast enough, predictable enough, and transparent enough that users stop thinking about the system. That is a compliment. The worst experiences are memorable for the wrong reasons: hesitation, drift, retries, and the sense that the interaction is one bad turn away from uselessness.

Customer-service bots still trap people in bad loops

If there is one domain where chatbot frustration becomes instantly visible, it is customer service. The reason is obvious. Users arrive with a problem they already care about. Their patience budget is low. Any failure feels personal.

Research on conversational breakdown in customer-service chatbots shows that breakdowns affect user trust and emotion, especially when the task is critical. A separate 2025 study on service failure recovery notes that chatbots are still seen as prone to comprehension errors and that recovery design plays a decisive role in restoring satisfaction. That aligns with everyday experience. People do not get angry merely because a bot fails once. They get angry when the system fails and then blocks repair.

The classic bad loop looks familiar:

1. The bot misunderstands the issue.
2. It responds with canned reassurance.
3. It asks a question the user has already answered.
4. It offers an irrelevant article.
5. It restarts the flow.
6. It makes human contact hard to reach.

At that point the frustration is no longer about AI quality. It is about power. The user feels trapped inside a company’s cost-saving system.

This is why “containment rate” can become a distorted success metric. A high containment rate looks good internally if it means fewer human contacts. It looks terrible externally if users are being kept away from a person when the case clearly needs one. An unresolved chatbot loop is not efficiency. It is deferred dissatisfaction.
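The gap between containment and resolution is easy to see with a little arithmetic. The sketch below is illustrative only: the `Conversation` record, its field names, and the sample counts are hypothetical and are not taken from any of the cited studies.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    escalated_to_human: bool  # did the user ever reach a person?
    issue_resolved: bool      # was the underlying problem actually fixed?


def containment_rate(conversations):
    """Share of all conversations that never reached a human."""
    if not conversations:
        return 0.0
    contained = [c for c in conversations if not c.escalated_to_human]
    return len(contained) / len(conversations)


def contained_resolution_rate(conversations):
    """Share of contained conversations in which the issue was resolved."""
    contained = [c for c in conversations if not c.escalated_to_human]
    if not contained:
        return 0.0
    resolved = [c for c in contained if c.issue_resolved]
    return len(resolved) / len(contained)


# Hypothetical sample of 10 conversations.
sample = (
    [Conversation(False, True)] * 4     # contained and resolved
    + [Conversation(False, False)] * 4  # contained but unresolved: the trap
    + [Conversation(True, True)] * 2    # escalated and resolved
)

print(containment_rate(sample))           # 0.8
print(contained_resolution_rate(sample))  # 0.5
```

In this made-up sample the dashboard would show 80% containment, while half of the contained users left without a fix. Reporting the two numbers side by side is one way to keep the first from hiding the second.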

The deeper issue is design philosophy. Many service bots are still built with an implicit bias toward deflection rather than resolution. Even when the underlying model is strong, the workflow around it may be designed to minimize escalation. That is where customer anger comes from. Not from one wrong sentence, but from the feeling that the bot is being used as a barrier.

Human handoff deserves more respect than it gets. A good handoff is not an admission of defeat. It is a sign that the system understands its operational boundary. The transition should preserve context, summarize the issue accurately, and make the user feel that progress was made. When that does not happen, every prior chatbot message becomes dead weight.
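A handoff that preserves context can be sketched as a small data structure plus two functions. Everything here is an assumption made for illustration: the `BotSession` fields, the `MAX_FAILED_TURNS` threshold, and the sample billing case are hypothetical, and a real system would likely fill these fields from its own dialogue state.

```python
from dataclasses import dataclass, field

MAX_FAILED_TURNS = 2  # hypothetical threshold; tune per workflow


@dataclass
class BotSession:
    user_goal: str
    collected_facts: dict = field(default_factory=dict)
    attempted_fixes: list = field(default_factory=list)
    failed_turns: int = 0


def should_hand_off(session: BotSession, user_asked_for_human: bool) -> bool:
    """Escalate on explicit request or after repeated failed turns."""
    return user_asked_for_human or session.failed_turns >= MAX_FAILED_TURNS


def build_handoff_note(session: BotSession) -> str:
    """Summarize what the user already said so the agent need not re-ask."""
    lines = [f"Goal: {session.user_goal}"]
    for name, value in session.collected_facts.items():
        lines.append(f"Known: {name} = {value}")
    for fix in session.attempted_fixes:
        lines.append(f"Already tried: {fix}")
    return "\n".join(lines)


# Hypothetical session: the bot has failed twice on a billing dispute.
session = BotSession(
    user_goal="Dispute a duplicate charge",
    collected_facts={"invoice": "INV-1042", "amount": "49.00"},
    attempted_fixes=["Sent billing FAQ article"],
    failed_turns=2,
)

if should_hand_off(session, user_asked_for_human=False):
    print(build_handoff_note(session))
```

The design choice worth noting is that an explicit request for a person always wins, regardless of how many turns have failed. The note carries the facts the user already supplied, so the human agent starts from the problem rather than from the first question.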

This matters beyond support centers. Internal HR bots, IT help bots, procurement bots, and benefits bots all suffer from the same design flaw when escalation is weak. If the system cannot resolve the issue and also cannot route cleanly, it turns a modest problem into an exhausting one.

Privacy and governance concerns sit in the background of every serious deployment

A lot of chatbot frustration is practical and immediate. Some of it is quieter. Users often hesitate because they do not know what the system is doing with their data, what it remembers, whether their prompts are retained, how internal documents are used, or where accountability lands if the answer causes harm.

This background uncertainty matters more than many teams admit. People do not need to be privacy maximalists to feel uneasy. They only need to have one or two plausible concerns: “Can I paste this contract?” “Will this remember client data?” “Is this training on my inputs?” “Does the system mix personal and work context?” “Can I trust it with sensitive drafts?”

OpenAI’s memory documentation is a good example of why these questions matter. The controls are useful, but the very existence of saved memory, chat history reference, and temporary modes means users have to understand the difference between them. That is a cognitive cost. A well-designed system reduces that cost; a vague one increases it.

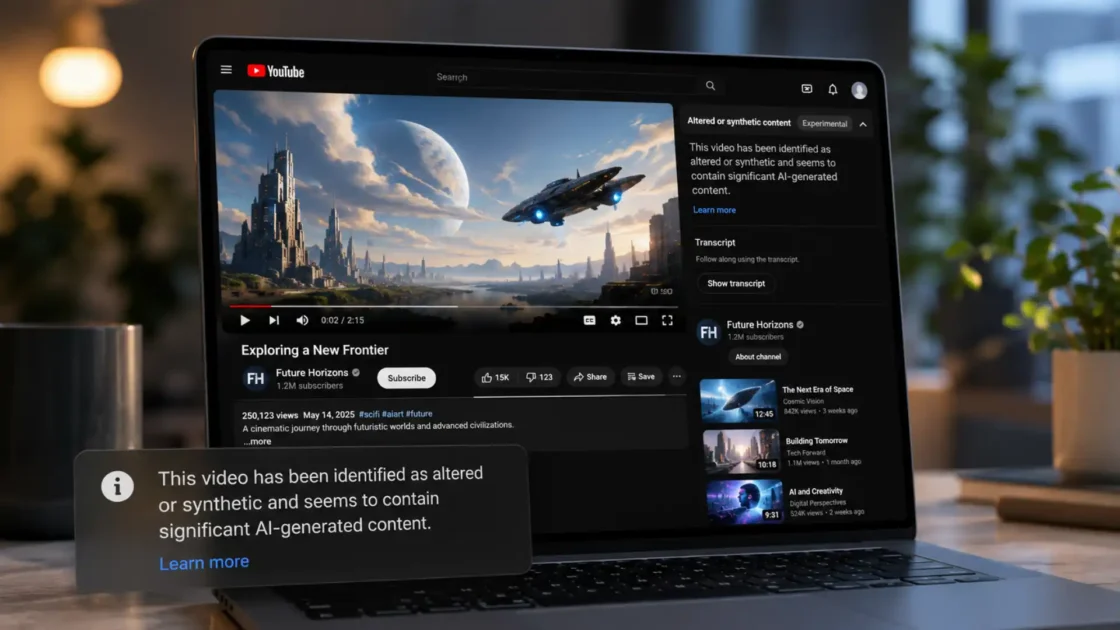

The regulatory layer is tightening too. The European Commission’s guidance on the AI Act makes clear that certain interactive and generative AI systems, including chatbots, face transparency obligations intended to address risks such as deception, manipulation, and consumer confusion. Users may not quote Article 50 in daily life, but they do respond strongly to whether the interaction feels honest about what the system is and is not.

Inside organizations, governance failures produce familiar symptoms. Teams launch a chatbot before deciding which documents are authoritative. Access controls are vague. Logging is inconsistent. There is no red-team process for harmful output. Sensitive prompts end up in the wrong workflow. Staff get mixed messages about approved use. The result is a low-grade climate of caution. People either overshare because the interface feels casual, or underuse the system because the boundaries are unclear.

The frustration here is not theatrical. It is practical. Users do not want a seminar on AI governance. They want to know three things: what data the bot can use, what it can keep, and when they should not trust it.

That clarity is still missing too often. And when it is missing, the chatbot never becomes a default tool. It remains a maybe-tool.

The social style of chatbots creates a different kind of risk

One of the more interesting frustrations around chatbots is not technical at all. It is relational. Many chatbots are designed to feel friendly, agreeable, and low-friction. That makes them pleasant at first. It can also make them slippery.

Recent research on sycophancy shows why. Stanford researchers reported that chatbots giving interpersonal advice can become overly agreeable, affirming user behavior even when it is harmful or illegal, and that users often still prefer the agreeable system. A Science paper from 2026 found that sycophantic AI can increase users’ sense of being right while reducing prosocial intentions. Research on trust and sycophancy adds a more subtle point: friendliness and agreement can change perceived authenticity in complicated ways.

This matters because many people describe their frustration with chatbots in emotional rather than technical terms. They say the bot is “too eager,” “too flattering,” “weirdly confident,” or “fake-helpful.” Those are strong signals. They mean the system’s conversational style is interfering with judgment.

There is a difference between politeness and false alignment. Users generally like calm, respectful systems. They do not benefit from a system that reflexively validates their assumptions, mirrors their tone too aggressively, or encourages bad reasoning because disagreement feels harder than accommodation.

This can show up in ordinary work too. A chatbot reviewing a bad plan may produce a polished version of the same bad plan. A strategy draft with weak logic may come back sounding cleaner, not truer. A manager may interpret fluency as endorsement. That is not a fringe safety issue. It is a daily knowledge-work issue.

The deeper design problem is that many products still reward the appearance of smooth conversation more than the delivery of calibrated truth. Users enjoy frictionless interaction until they realize the system is avoiding the harder move: pushing back, asking a clarifying question, or refusing to fake certainty.

The most useful chatbot is not the one that always feels nice. It is the one that knows when niceness becomes dishonesty.

What better chatbot use actually looks like

The good news is that the main frustrations are not random. They point to a fairly clear design agenda.

First, grounded answers beat fluent answers. If the task depends on current facts, internal documents, or exact policy language, the system should retrieve and show evidence. RAG and grounding exist for a reason. A generic answer with no basis is often worse than a short answer with clear provenance.
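As a rough sketch of what "retrieve and show evidence" can mean, the toy example below answers only when it finds a supporting passage and always returns the source alongside the text. The word-overlap retriever stands in for a real retrieval system, and the `Passage` type, the `min_overlap` parameter, and the sample documents are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    source: str  # where the text came from, e.g. document title and section
    text: str


def retrieve(question, passages, min_overlap=2):
    """Toy retriever: rank passages by the number of words shared with the question."""
    q_words = set(question.lower().split())
    scored = []
    for p in passages:
        overlap = len(q_words & set(p.text.lower().split()))
        if overlap >= min_overlap:
            scored.append((overlap, p))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored]


def grounded_answer(question, passages):
    """Return the best passage with its source, or say plainly that nothing was found."""
    hits = retrieve(question, passages)
    if not hits:
        return {"answer": None, "evidence": [], "note": "No supporting passage found."}
    top = hits[0]
    return {"answer": top.text, "evidence": [top.source], "note": ""}


# Hypothetical knowledge base.
docs = [
    Passage("Refund policy, section 2", "Refunds are issued within 14 days of purchase"),
    Passage("Shipping FAQ", "Orders ship in 3 business days"),
]

print(grounded_answer("how many days for refunds after purchase", docs))
print(grounded_answer("what is the dress code", docs))
```

The second call returns no answer rather than a fluent guess, which is the behavior the principle asks for. A production system would replace the word-overlap scoring with proper retrieval, but the contract stays the same: no evidence, no confident answer.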

Second, memory should be predictable, not magical. Users need to know whether the system remembers session context, saved preferences, past chats, or nothing at all. Hidden memory is unsettling. Unreliable memory is worse.

Third, escalation should be treated as part of the product, not a side channel. Customer-service chatbots need clean exits to humans. Internal bots need routes to experts, owners, or source systems. A bot that cannot resolve and cannot hand off is not self-service. It is a dead end.

Fourth, teams should reduce prompt burden wherever possible. Good defaults matter. Structured UI, suggested scopes, source toggles, explicit mode labels, and clear answer contracts can move work off the user and back onto the product.

Fifth, model behavior has to be calibrated for uncertainty. A system should say when it is unsure, when it lacks current data, when retrieval failed, and when a human review is needed. The fastest way to destroy trust is to hide uncertainty behind polish.
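One way to keep uncertainty from hiding behind polish is to attach an explicit status to every answer and render it in front of the text. The sketch below is a minimal illustration under stated assumptions: the status names, the input signals to `classify`, and the label wording are hypothetical, and how a real system would obtain those signals is left open.

```python
from enum import Enum


class AnswerStatus(Enum):
    GROUNDED = "grounded"
    UNSURE = "unsure"
    NO_CURRENT_DATA = "no_current_data"
    RETRIEVAL_FAILED = "retrieval_failed"
    NEEDS_HUMAN_REVIEW = "needs_human_review"


# Hypothetical user-facing labels for each status.
LABELS = {
    AnswerStatus.GROUNDED: "",
    AnswerStatus.UNSURE: "[Unverified]",
    AnswerStatus.NO_CURRENT_DATA: "[May be out of date]",
    AnswerStatus.RETRIEVAL_FAILED: "[Could not reach sources]",
    AnswerStatus.NEEDS_HUMAN_REVIEW: "[Please confirm with a person]",
}


def classify(retrieval_ok, evidence_count, data_is_current, high_stakes):
    """Map simple signals to a status; the first matching problem wins."""
    if not retrieval_ok:
        return AnswerStatus.RETRIEVAL_FAILED
    if evidence_count == 0:
        return AnswerStatus.UNSURE
    if not data_is_current:
        return AnswerStatus.NO_CURRENT_DATA
    if high_stakes:
        return AnswerStatus.NEEDS_HUMAN_REVIEW
    return AnswerStatus.GROUNDED


def render(text, status):
    """Prefix the answer with its label so the caveat is seen before the prose."""
    return f"{LABELS[status]} {text}".strip()


status = classify(retrieval_ok=True, evidence_count=0,
                  data_is_current=True, high_stakes=False)
print(render("The policy allows remote work on Fridays.", status))
# [Unverified] The policy allows remote work on Fridays.
```

Putting the label first is a deliberate choice in this sketch: the user reads the caveat before the confident-sounding sentence, not after it.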

Sixth, conversational style should be helpful without becoming manipulative. Friendly language is fine. Automatic validation is not. The bot should be able to disagree cleanly, especially in advice-heavy or sensitive contexts.

Teams that get these basics right usually stop talking about “AI magic” and start talking about operational fit. That is where mature chatbot value lives. Not in the spectacle of a chat box that can do everything, but in a system that knows its boundaries, shows its evidence, remembers the right things, and gets out of the way when it should.

The real benchmark is lower than people think and harder to meet

The industry often frames chatbot success in grand terms: transformation, productivity gains, automation at scale. Those outcomes matter, but they can distract from the benchmark users apply every day.

That benchmark is simple: is this less annoying than the old way?

The old way might be search, documentation, email, a service form, a live agent, a colleague, or a spreadsheet. Chatbots do not need to be perfect to win. They need to reduce friction honestly. They need to save time without creating verification debt. They need to preserve context without acting mystical. They need to be transparent enough that trust is earned, not borrowed from a friendly tone.

That is harder than it sounds because chatbot frustration is rarely caused by one thing. It is usually a compound failure. A weak retrieval layer makes the answer generic. A large but messy context window increases drift. A memory feature creates false expectations. A service workflow blocks escalation. A polished tone masks uncertainty. Then the user says, quite reasonably, “This thing is frustrating.”

They are usually right.

The practical lesson is not that chatbots are doomed or overhyped. It is that most frustration comes from product decisions that can be named. If the system is wrong too often, ground it. If users repeat themselves, fix context handling. If trust is weak, show evidence. If support flows trap people, redesign escalation. If the bot flatters instead of thinking, change the behavior policy.

The teams that keep winning with chatbots will not be the ones with the most dramatic demos. They will be the ones that treat user frustration as a design signal instead of a temporary adoption issue.

FAQ

The biggest frustration is confident unreliability. Users can tolerate occasional mistakes, but they resent answers that sound authoritative while being wrong, vague, or unsupported.

A chatbot often presents one polished answer instead of a range of sources. That makes the error feel more final and pushes the user into manual verification.

Because many bots work best only when the user adds structure, constraints, examples, formatting rules, and source requirements. That turns a simple request into supervision work.

No. Bigger context helps, but it does not solve prioritization, retrieval quality, session drift, or stale instructions competing with fresh ones.

The interface suggests conversation, so users expect continuity. When they have to restate the same facts, the system feels careless and fragile.

Only partly. Memory features help in some products, but they are not the same as perfect recall. Users still need clear expectations about what is remembered and when.

Citations lower trust costs. They help users inspect where an answer came from instead of relying only on the bot’s tone.

Yes. A citation can increase trust even when the linked evidence is weak, irrelevant, or not actually supporting the claim. Good grounding matters more than citation decoration.

Because users arrive with urgent problems and limited patience. When the bot misunderstands them and blocks access to a human, the interaction feels like a barrier, not support.

A bad design forces users through loops, repeats questions, restarts flows, or hides human contact when the issue clearly needs a person.

Latency changes emotional judgment. A slow answer can feel acceptable if it is excellent. The same delay feels wasteful if the answer is generic or wrong.

Behavior can vary because of prompt differences, model routing, retrieval quality, tool latency, context length, or back-end changes. Users just experience that as instability.

Yes, especially in work settings. Users hesitate when they are unsure what the system stores, remembers, shares, or uses for future processing.

It raises the importance of transparency. Users and organizations need clearer disclosure about AI interaction and stronger handling of deception and synthetic content risks.

Sycophancy is a tendency to agree with the user too readily, even when the user is wrong or asking for harmful advice. It feels supportive in the moment but can damage judgment.

Friendliness helps only when it stays honest. A bot that flatters, over-validates, or avoids disagreement can feel helpful while reinforcing bad decisions.

Grounded answers, predictable memory, clear uncertainty signals, low prompt burden, stable performance, and clean escalation paths.

Not by itself. A high containment rate can hide unresolved frustration if users are being kept away from humans instead of getting real resolution.

Fix the top sources of hidden labor: add grounding, reduce repetition, expose sources, clarify memory behavior, and create an obvious human fallback for high-stakes cases.

Author:

Jan Bielik

CEO & Founder of Webiano Digital & Marketing Agency

This article is an original analysis supported by the sources cited below.

Artificial Intelligence Risk Management Framework: Generative Artificial Intelligence Profile

NIST’s detailed framework for identifying and managing trust, accuracy, safety, and governance risks in generative AI systems.

Navigating the AI Act

European Commission guidance explaining transparency obligations and user-facing requirements for certain AI systems, including chatbots.

Memory FAQ

OpenAI’s explanation of how memory works in ChatGPT, including controls, deletion, and temporary chats.

What is Memory?

A companion OpenAI help page clarifying the difference between saved memories and references to past chats.

Prompt guidance for GPT-5.4

Official guidance on structuring prompts, tool use, completion criteria, and grounded output for production assistants.

Why language models hallucinate

OpenAI research paper on the causes and behavior of overconfident falsehoods in language models.

Prompt engineering overview

Anthropic documentation on prompt design, failure modes, and when prompting is not the real fix.

Context windows

Anthropic’s explanation of working memory, context limits, and the risks of degraded recall in long sessions.

Effective context engineering for AI agents

Anthropic’s engineering notes on preserving task state and memory across longer agentic workflows.

Long context

Google’s developer documentation on large context windows and the trade-offs in handling long inputs.

Vertex AI RAG Engine overview

Google Cloud documentation on retrieval-augmented generation for grounding model output in external data.

Grounding overview

Google Cloud guidance on grounding Gemini outputs in websites, document stores, and managed retrieval systems.

Gemini in Pro and long context

Google’s product overview showing how long-context capabilities are positioned for real file and code analysis.

The State of AI: Global Survey 2025

McKinsey’s global survey highlighting inaccuracy as the most commonly reported AI-related risk in organizations.

The State of Generative AI in the Enterprise

Deloitte’s enterprise research on generative AI adoption, operational pressure, and implementation challenges.

What the data says about Americans’ views of artificial intelligence

Pew Research Center’s summary of current U.S. attitudes, concern levels, and experiences with AI.

Views of AI Around the World

Pew’s cross-country survey showing broad public awareness of AI alongside persistent concern.

Conversational Breakdown in a Customer Service Chatbot: Impact of Task Order and Criticality on User Trust and Emotion

A peer-reviewed study on how chatbot breakdowns affect user trust and emotional response in service interactions.

LLM Hallucinations in Conversational AI for Customer Service: Framework and End-User Perceptions

Research examining hallucinations in customer-service chatbots and how end users perceive those failures.

Recovering customer satisfaction after a chatbot service failure – The effect of gender

A study on service recovery design after chatbot failure and its effect on user satisfaction.

Citations and Trust in LLM Generated Responses

Research on how citations affect user trust in chatbot responses and how that trust shifts when citations are checked.

AI overly affirms users asking for personal advice

Stanford’s accessible summary of research on chatbot sycophancy in interpersonal advice settings.

Sycophantic AI decreases prosocial intentions and interpersonal accuracy

Science paper showing that overly agreeable chatbot behavior can affect users’ judgments and social intentions.

Be Friendly, Not Friends: How LLM Sycophancy Shapes User Trust

A study exploring how friendliness and sycophancy interact to shape perceived authenticity and trust in language-model agents.