The public story about AI is still too neat. It usually sounds like a single curve: adoption rises, tools improve, people adjust, the future arrives. That is not what is happening. AI is spreading fast while beliefs about it are splitting apart. In 2024, 78% of organizations reported using AI, up from 55% a year earlier. Half of employed Americans now say they use AI in their role at least a few times a year. Yet half of U.S. adults say the growing use of AI in daily life leaves them more concerned than excited, and McKinsey found that only 1% of business leaders describe their organizations as truly mature in deployment. The technology moved into daily use faster than a shared public understanding ever formed around it.

That split has produced three broad camps. Doubters still picture AI as a glitchy chatbot that writes wooden copy, makes up facts, and embarrasses itself on cue. Power users have moved well past that stage. They use models as drafting partners, research assistants, code collaborators, workflow routers, and increasingly as agents that can act across software, files, and tools. Resisters are different from doubters. They know the systems are getting stronger. They read the papers, watch the product launches, understand the labor politics, and still want distance. They are not confused. They have decided that a lot of this bargain is bad.

That three-way split matters more than the usual adoption chart. It shapes who experiments, who gets left behind, who keeps craft intact, who gives up judgment too early, and who builds the operating norms that everyone else later inherits. AI is no longer just a technology story. It is a social sorting story.

A split hiding in plain sight

The cleanest sign of the divide is that usage, confidence, and trust no longer move together. A lot of technologies follow a simpler pattern. As people use them more, suspicion fades, routines settle, and the thing becomes boring. AI has done the first part and stalled on the rest. Pew found that 95% of U.S. adults had heard at least a little about AI by mid-2025, and 62% said they interact with AI at least several times a week. Awareness is high. Exposure is high. Comfort is patchy. A majority still say they have little or no control over whether AI is used in their lives, and about six in ten want more control.

That is the environment in which the three groups formed. People are not sorting themselves mainly by age or technical literacy. They are sorting by what counts as acceptable risk, what kind of work they do, how much they trust machine output, and whether they believe cognitive offloading is a gain or a loss. Gallup’s 2026 workplace research shows the same pattern from the job side. Half of employed American adults report using AI at least a few times a year, but frequent use is still a minority behavior, and the difference between an occasional user and a daily user is not cosmetic. Daily users report more productivity gains, more concrete use cases, and a stronger sense that the tools belong inside their job. Non-users are far more likely to say they are ethically opposed or simply do not believe AI can help with what they do.

That gap is already visible inside companies. Leaders often assume the workforce is either broadly hesitant or broadly ready. Neither picture is right. McKinsey found that employees report much heavier present and near-term AI use than executives think. Google Workspace’s survey of younger leaders found something even sharper: the early adopters are not just accepting AI outputs, they are becoming “AI architects” for their own workflows, shaping tone, process, and task flow around the systems. The distance between someone who opens a chatbot twice a month and someone who builds work around a model is huge. Those two people are not using the same tool in any meaningful sense.

This is why the public debate feels confused. Commentators keep asking whether “people” trust AI, whether “workers” use AI, whether “the public” is ready. Those categories are now too blunt. The central fact is fragmentation. AI has become ordinary enough to be everywhere and unstable enough to mean radically different things to different users. The doubter sees low-grade synthetic slop. The power user sees compound time savings. The resister sees a system that wants too much access to judgment, language, and labor. All three are looking at something real.

Doubters are judging AI by the wrong evidence

Doubters are easy to dismiss, which is a mistake. They are often reacting to the worst version of the technology they actually encountered. Maybe it was a chatbot that fabricated a source, wrote stiff prose, broke a spreadsheet, or answered with the cheery confidence of somebody who had not understood the question. Maybe it was a viral clip where a model confidently failed at a simple task. Maybe it was company-wide pressure to “use AI” with no training, no workflow fit, and no clue about what good use looks like. Once that is the first impression, the entire category hardens into a joke.

That reaction is not irrational. Hallucination remains a live problem, not a relic from the early ChatGPT era. In OpenAI’s April 2025 system card for o3 and o4-mini, the models showed meaningful hallucination rates on SimpleQA and PersonQA, with o4-mini performing worse than o1 and o3 on PersonQA and OpenAI explicitly noting that more research was needed to understand why. Stanford’s 2025 AI Index also reports that AI-related incidents are rising sharply even as adoption and capability continue to surge. Doubters are not imagining the failure surface. It exists.

Where doubters go wrong is in treating those failures as the whole product. Most people outside the heavy-use tier still encounter AI as a general-purpose answer box. Power users do not. They narrow the task, shape the prompt, verify the output, use the model inside a tool chain, and hand it work that benefits from iteration more than certainty. A doubter often judges AI by one bad answer. A power user judges it by whether it shortened a process, surfaced options, or handled the first rough pass. Those are different standards.

The social cost of this mismatch is large. A person who sees AI only as an unreliable oracle misses what it is already good at: drafting, summarizing, coding assistance, research triage, rewriting, classification, formatting, cross-tool coordination, and repetitive knowledge work where speed matters and verification is possible. That blind spot matters most in white-collar jobs, where the tool is not replacing an entire role in one move but quietly capturing chunks of time. The St. Louis Fed’s survey work found that generative AI users in November 2024 reported average time savings equal to 5.4% of their work hours, about 2.2 hours per week for a 40-hour worker. NBER research on customer support found a 14% productivity increase on average and a 34% jump for novice and lower-skilled workers. A person can dismiss AI as mediocre and still lose ground to a colleague using it for the right slices of the job.
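The conversion from a percentage of work hours to hours per week is simple enough to check by hand, and a minimal sketch makes the scale concrete. The function name `weekly_hours_saved` is hypothetical, and the 40-hour week is the same assumption the figure above uses; nothing here comes from the St. Louis Fed's own methodology.

```python
def weekly_hours_saved(share_of_hours_saved: float, weekly_hours: float = 40.0) -> float:
    """Convert a fractional time saving into hours per week.

    share_of_hours_saved: fraction of work hours saved (0.054 means 5.4%).
    weekly_hours: assumed length of the work week (hypothetical default of 40).
    """
    return share_of_hours_saved * weekly_hours


# The 5.4% figure quoted in the text, applied to a 40-hour week.
saved = weekly_hours_saved(0.054)
print(f"{saved:.2f} hours per week")  # prints "2.16 hours per week"
```

That 2.16 hours rounds to the "about 2.2 hours" cited above. Changing `weekly_hours` shows how the same percentage translates for part-time or longer schedules.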

So the doubter’s problem is not stupidity. It is bad evidence selection. They are watching for errors in a category where the strongest gains often look boring: less administrative drag, faster first drafts, cleaner handoffs, fewer blank-page starts, more time spent on the core of the task. Those gains rarely go viral.

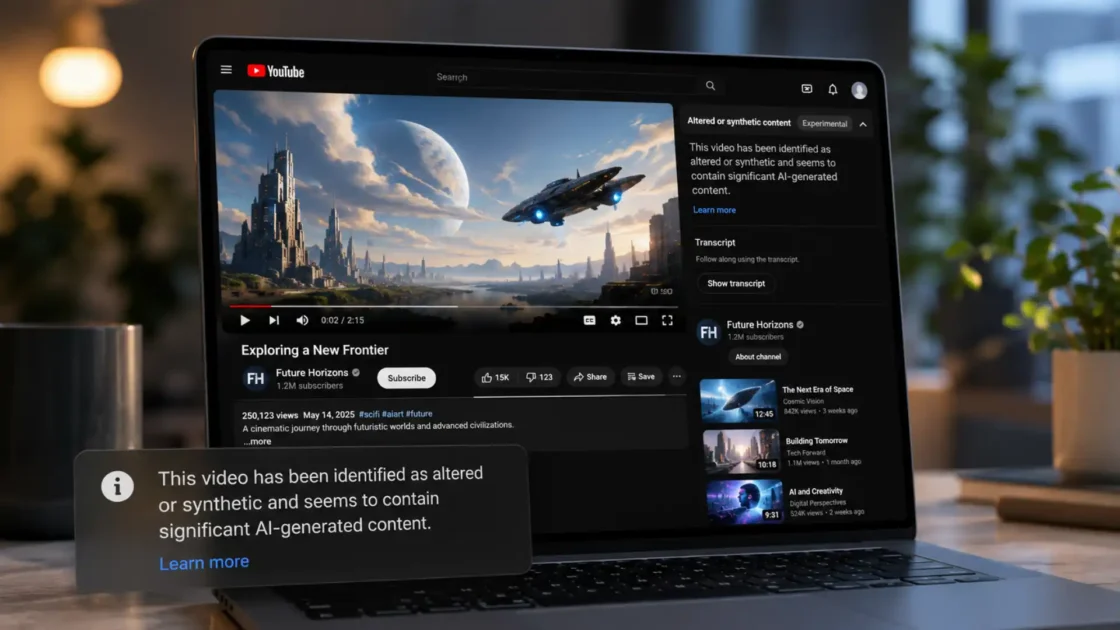

Failure clips keep winning the public argument

AI’s reputation is being built in a media environment that rewards spectacular mistakes. A model writes fake law citations, invents a medical source, answers with cartoon confidence, or produces an image that feels eerie and wrong. That clip travels because it is funny, threatening, or morally loaded. A far more common outcome, such as a worker saving 40 minutes cleaning notes or drafting a client update, has almost no cultural drama. The public sees the blooper reel and misses the quiet compounding effect.

Pew’s work on attitudes toward AI helps explain why those failures stick. Half of Americans say the increased use of AI in daily life makes them more concerned than excited, and 57% rate AI’s societal risks as high. The most common concern they named was not job loss in the abstract. It was the fear that AI weakens human skills and human connections. That kind of concern is sticky because it is moral, not technical. A better benchmark score does not answer it. Faster output does not answer it. A person who worries that AI will dull judgment or flatten relationships will interpret every public mistake as evidence that dependence itself is corrosive.

Distrust extends into domains where language and credibility matter most. The Reuters Institute Digital News Report 2024, as covered by Reuters, found strong public discomfort with news produced mostly by AI, especially around sensitive subjects such as politics. That discomfort is narrower than “fear of AI” and more useful. It says people do not object only to capability. They object to machine mediation in domains where trust, intent, and accountability are hard to separate from the output itself.

This is why viral failures do more than bruise product perception. They reinforce a deeper suspicion that AI is a machine for fake fluency. Once that frame settles, good use becomes harder to see even when it is right in front of people. A model that helps draft a clear project brief is invisible. A model that lies in a headline is memorable. A worker who used AI well usually disappears into the finished work. A worker who used it badly leaves a visible fingerprint.

None of this makes the doubter right about everything. It explains why the public argument keeps tilting their way. Failure is legible. Competent assistance often is not. Until AI products become much better at uncertainty, citation, controllability, and clear boundaries, the cultural advantage will stay with the people pointing at the wreckage.

Power users stopped asking for answers and started building systems

The power-user tier is not defined by enthusiasm. It is defined by operational thinking. These users stopped treating AI like a magic answer machine and started treating it like a component. They write their own prompt libraries, wire models into notes and calendars, run summarization across meetings, generate drafts into templates, connect models to code repositories, and set up routines that hand the model the first pass while they keep review authority. The shift is subtle but decisive: the model is no longer the event. It becomes one moving part in a recurring workflow.

The workplace data keeps pointing in that direction. McKinsey found that employees are already using generative AI much more deeply than executives assume, with 13% of employees saying they already use gen AI for more than 30% of their daily tasks while C-suite leaders estimated only 4%. Google Workspace’s survey of workers aged 22 to 39 reports that younger leaders are taking a hands-on approach and becoming “AI architects” for their workflows. That phrase matters because it captures the real change: good users are designing around the model, not just querying it.

Anthropic’s Economic Index shows where a lot of this power-user activity still clusters. Claude usage remains heavily concentrated in a small set of tasks, especially computer and mathematical work. In November 2025, computer and mathematical tasks represented about a third of conversations on Claude.ai and nearly half of first-party API traffic. The most prevalent task was modifying software to correct errors. That is not the profile of a public using AI mostly for party tricks. It is the profile of a public using it for targeted, repeatable, high-frequency work where iteration pays off.

Power users also understand where models are weak. That is part of what makes them powerful. They know that a model can draft a memo faster than it can judge the politics around sending it. They know that a code suggestion may need heavy review. They know that a summary can hide the one clause that matters. Good use is not blind trust. It is bounded delegation. Many of the best users are careful precisely because they have seen enough output to know what breaks.

This is why power users tend to sound strange to everyone else. When they talk about AI, they rarely argue about whether it is “good” in general. They talk about latency, handoff quality, prompt reuse, tool access, verification paths, memory, evaluation, and where human judgment belongs in the loop. The doubter hears hype. The resister hears a new management language creeping into everything. Yet the power user is usually describing something concrete: a change in work rhythm. Less time gathering, formatting, restating, and reorganizing. More time picking, editing, deciding, and shipping.

The move from chat windows to agents

The biggest gap between ordinary users and power users sits here. Most people still imagine AI as a chat interface. The leading edge has already moved to agents: systems that can plan, act across steps, use tools, access files, call other systems, and continue working without a human manually prompting every move. The term “agent” gets abused, but the underlying shift is real. AI is moving from response generation toward process execution.

McKinsey’s 2025 State of AI survey found that 23% of respondents report their organizations are scaling an agentic AI system somewhere in the enterprise, while another 39% say they have begun experimenting with AI agents. That does not mean most firms have agent-heavy operations. McKinsey is explicit that scaling is still limited and often confined to one or two functions. It does mean the concept is no longer speculative inside business settings. It is being tested in IT, knowledge management, and other functions where digital tasks are modular enough to hand off in chunks.

Anthropic’s Claude 4 launch makes the same turn visible on the model side. Anthropic frames Opus 4 as a model built for complex, long-running tasks and agent workflows, with the ability to work continuously for several hours. The company cites a seven-hour open-source refactor run and emphasizes improved memory behavior when local file access is available. Those are not consumer novelty demos. They point to a design target: models that can stay coherent over long arcs of work rather than merely answer one query well.

Microsoft’s 2025 Work Trend Index adds the organizational angle. Its “Frontier Firm” category describes workplaces where AI is already being used to expand capacity, not just shave minutes off isolated tasks. Leaders in those firms are more likely to say their company is thriving, more likely to say they can take on more work, and less likely to fear AI will take their jobs. That does not prove they are right about the future. It does show that once AI becomes part of operating design instead of occasional assistance, user psychology changes with it. The tool stops feeling like an outsider and starts feeling like infrastructure.

Anthropic’s Economic Index hints at where that leads. Office and administrative support tasks in API usage rose to 13% of records in November 2025, which Anthropic interprets as growing automation of back-office workflows such as email management, document processing, scheduling, and customer relationship management. That is the exact layer of work where an agent can matter even if it is not brilliant. It only needs to be reliable enough, constrained enough, and cheap enough to absorb repetitive digital coordination.

A compact map of the three groups

| Group | Core belief | Typical behavior | Hidden risk |
|---|---|---|---|
| Doubters | AI is mostly unreliable theater | Avoid regular use or keep it shallow | They may miss quiet productivity gains already compounding around them |
| Power users | AI is a system component, not a magic box | Build workflows, reuse prompts, test tools, delegate bounded tasks | They may normalize dependence before strong guardrails exist |
| Resisters | Capability is rising, but the trade-off is bad | Limit or reject use on ethical, professional, cognitive, or political grounds | They may lose influence if they refuse every form of participation |

This map is useful because it separates disbelief from refusal. Doubters often underestimate the current utility. Resisters often understand the utility and reject the direction anyway. Power users see the most immediate upside, yet they are also the most exposed to creeping dependence if they stop noticing what parts of thinking they have handed away.

Productivity is real, but it lands unevenly

The strongest correction to the doubter’s worldview is the research literature. It has been saying, with growing consistency, that generative AI often improves output and speed on well-matched tasks. The catch is that “well-matched” does a lot of work. The gains are real. They are not universal. And the distribution matters more than the average.

NBER’s field study of more than 5,000 customer-support agents found that access to a generative AI assistant raised productivity by 14% on average, with a 34% improvement for novice and lower-skilled workers and little effect for experienced high performers. Harvard Business School’s widely discussed “jagged technological frontier” work with BCG consultants found a similar pattern from a different angle. On tasks inside AI’s competence frontier, consultants using AI completed more work faster and with much higher quality. On harder tasks outside that frontier, performance fell. The tool was not uniformly good or uniformly bad. It was excellent in some zones and dangerous in others.

That unevenness matters because it changes who benefits first. AI often helps newer workers and less skilled workers more on certain tasks, which sounds democratic until you ask what happens to learning. If the system gives a junior worker a faster route to acceptable output, the firm may get efficiency while the worker loses some of the struggle that once built mastery. MIT Sloan’s coverage of research on GitHub Copilot makes that tension concrete. Developers with access to Copilot spent more time on core coding and less on project-management tasks, and junior developers saw the largest impact. MIT’s caution is plain: employers need workers to use AI to learn, not to bypass foundational skill formation.

The economy-wide numbers echo this but stay modest. The St. Louis Fed’s survey-based work found that generative AI users saved 5.4% of work hours on average in late 2024, which translated to an estimated 1.1% increase in aggregate productivity when rolled across the whole workforce. The paper “The Rapid Adoption of Generative AI” found that by late 2024 nearly 40% of working-age Americans used generative AI, with 23% of employed respondents using it for work in the previous week and 9% using it every workday. That is broad uptake, but not yet saturation, and the share of total work hours assisted by AI remained limited. The gap between “many people use it” and “the economy has been remade by it” is still large.

Power users understand this better than almost anyone. They do not need AI to be a genius. They need it to be a consistent helper on the right slices of work: drafting, summarizing, classifying, checking, reformatting, generating options, writing code scaffolds, explaining unfamiliar material, searching a local file set, turning rough notes into something usable. Those gains stack, but only when the user knows where the frontier is jagged. The person who hands AI the wrong task does not get a small performance penalty. Sometimes they get a confident wrong turn.

Workplaces have changed less than the hype suggests

There is a reason the doubter still feels culturally visible even while serious users keep multiplying: many organizations have not redesigned work around AI yet. They bought tools, ran pilots, encouraged experimentation, and maybe nudged employees to “use AI more.” That is not the same thing as changing operating structure. Gallup’s 2026 research captures the gap sharply. Within organizations implementing AI, 65% of employees say it has improved productivity and efficiency. Yet Gallup also says evidence that AI has fundamentally changed how work gets done across organizations remains more limited. Useful? Often. Transformational? Far less common.

McKinsey reaches a similar conclusion from the executive side. Almost all companies are investing in AI, 92% say they plan to increase those investments over the next three years, and still only 1% describe their organizations as mature. In the separate 2025 global survey, McKinsey reports that 88% of respondents say their organizations use AI in at least one business function, yet only about one-third say their companies have begun to scale AI programs across the enterprise. The pilot stage is crowded. The scaled stage is still narrow.

This is where hype can mislead both believers and critics. If you listen only to product launches, you would think every knowledge workplace has already become agentic. If you listen only to skeptical employees, you would think almost nothing has changed. The truth sits in the messier middle. Worker-level adoption moved fast. Organizational redesign moved slower. Formal governance, workflow integration, role redesign, and clear evaluation lagged behind. The St. Louis Fed notes that worker adoption has outpaced formal firm adoption, which helps explain why many of the time savings are still showing up as local efficiencies instead of obvious firm-level transformation.

That lag is not trivial. It shapes the political future of AI inside companies. When employees see pressure to use AI without a clean theory of where it fits, they experience the tool as management fashion. When executives hear that productivity is up but headcount logic is unclear, they hesitate. When teams get benefits at the task level but not in staffing, process, or compensation, resentment grows. The middle period is the dangerous period. AI is good enough to change expectations and not yet reliable or integrated enough to settle them.

Resisters are not simply behind

The most misunderstood group in this whole story is the resister. Public discourse keeps trying to sort people into “adopters” and “laggards,” which works badly here. A resister may be perfectly informed. They may have tested several models, read system cards, watched firms rush into shallow automation, and decided that the costs are too high. That refusal can come from ethics, professional standards, politics, privacy concerns, creative identity, or a sober view of labor bargaining. None of that is the same thing as being left behind.

Gallup’s holdout research shows that non-use often has structure. Among employees in organizations where AI is available, 43% of non-users say they are ethically opposed to using AI, and 39% say they do not believe AI can assist with the work they do. A large share also prefer to keep doing work the way it is currently done, while privacy, security, and compliance worries remain widespread. Those are not fringe objections. They are workplace-level reasons to refuse a tool that might otherwise look useful.

Pew’s public-attitudes work supports the same picture. Most Americans want more control over AI in their own lives. Many fear erosion of creativity, relationship quality, and human thinking. NIST’s Generative AI Profile does not read like a manifesto for refusal, but its existence matters here. It treats generative AI as a system class that requires explicit trustworthiness, evaluation, and risk management rather than casual deployment. A resister often hears the product hype and notices the same thing NIST does: these systems generate new governance problems because they combine fluency, scale, opacity, and easy misuse.

Some resisters are defending craft. Some are defending institutional trust. Some are defending their own thinking. A lawyer may resist because unsupported language is intolerable in their domain. A teacher may resist because shortcutting the thinking process injures the actual work of education. A writer or designer may resist because the use of models shifts the center of authorship in ways they dislike. A manager may resist because they do not trust vendor promises around privacy or model retention. A worker may resist because they hear “productivity tool” and correctly infer “headcount pressure.”

None of those positions will vanish just because models improve. Capability can shrink some objections and sharpen others. A stronger model may reduce some error complaints while increasing fears about dependency, surveillance, devaluation, or labor substitution. Resisters matter because they keep forcing the question that power users often glide past: not “can this be done with AI?” but “what gets cheaper, weaker, or less accountable when it is?”

The strongest case against routine AI use

A serious article on this topic has to grant the strongest version of the resisting argument. It is not “AI is fake.” That case gets weaker by the quarter. The stronger case is that routine AI use can erode the very faculties that many jobs and relationships depend on, especially when the system is good enough to be seductive and bad enough to stay wrong in important ways.

Pew’s 2025 work found that many Americans expect AI to worsen creativity and meaningful relationships, and the most common reason people gave for rating AI’s societal risks as high was concern about weakening human skills and connections. Gallup’s 2026 Gen Z study deepens that anxiety. Weekly use among Gen Z stayed high, yet excitement fell, anger rose, and eight in ten said it is likely that AI use will make it harder for them to learn in the future. Those numbers matter because Gen Z is not observing AI from afar. It is using the tools and still worrying about what habitual use does to cognition.

The HBS work with consultants adds a subtler warning. On tasks inside AI’s frontier, performance improved. Yet the research also found narrower variety in solutions and worse performance on more difficult judgment-heavy work. That is the nightmare version of soft dependence: people become faster and more polished while their independent range shrinks. The work looks better on the surface. The thinking underneath gets thinner.

MIT Sloan’s discussion of GitHub Copilot lands on a related point. Junior developers benefited strongly, which is good news for assistance and bad news for any firm that imagines it can cut the bottom of the ladder. Juniors still need to build foundational knowledge. If AI shortens the route to competent-looking output, organizations need to know whether the worker is learning the structure of the task or just surfing the system’s fluency. A profession can become more efficient while making its future practitioners weaker.

This is where resisters often sound most convincing. They are not claiming every use is corrupt. They are saying that a culture of constant assistance changes the baseline. Writing with help changes writing. Reading summaries changes reading. Asking a model to generate options changes how options are formed in your own head. Offloading administrative work may be harmless. Offloading interpretation, evaluation, and early-stage thought is a different category. Once the habit forms, it is very hard to measure what disappeared.

Trust breaks faster than capability improves

AI companies can improve benchmarks, add tool use, lower latency, and widen context windows. Trust still breaks on older rules. A single visible failure in a sensitive domain can undo months of product progress for a whole audience. That is not irrational. It is how trust works in medicine, law, finance, journalism, and education. These fields do not ask whether a system is impressive on average. They ask whether it is dependable under pressure.

The current evidence supports both sides of that tension. Capability is rising fast. Stanford’s AI Index records sharp progress on major benchmarks, rapid cost declines, lower inference barriers, and accelerating use across business and everyday life. The same report says AI-related incidents are rising sharply. OpenAI’s own system card acknowledges meaningful hallucination behavior. NIST frames generative AI as a category requiring deliberate evaluation and risk controls. The message is blunt: better systems are still systems that need governance.

That gap between capability and trust explains why some skepticism survives even in high-use environments. Gallup found that frequent users report stronger productivity gains, yet many employees still do not believe AI has fundamentally changed how work gets done. The Reuters Institute found suspicion about AI-made news. Pew found that most Americans want more control over AI in their lives. Those are not contradictory findings. They describe a public willing to use assistance while withholding full legitimacy.

Resisters understand this gap instinctively. They do not need proof that AI can do useful work. They need a reason to believe that dependency will not outrun accountability. Right now, companies keep asking for that trust ahead of the evidence. System cards are improving. Public risk frameworks are improving. Yet product marketing still tends to speak in the language of ease, scale, and delegation long before most institutions have clear norms for review, disclosure, retention, consent, or contestability.

That is why trust often moves slower than the models. It is easier to ship a stronger system than to settle the social rules around it. The resister lives inside that delay and refuses to pretend it is minor.

Managers are becoming the real swing group

If the future were determined only by users, power users would win quickly. The missing force is management. Managers decide whether AI becomes personal assistance, team infrastructure, or institutional friction. Gallup’s 2026 research says managerial support and careful consideration of how new tools fit existing workflows play a critical role in whether employees become adopters or holdouts. That finding is more important than it sounds. It means usage is not just a matter of curiosity or fear. It is shaped by whether the tool arrives inside a sane process.

McKinsey’s 2025 workplace report says leaders are underestimating employee use. Employees already report deeper current use and much faster future uptake than executives expect. That is a warning. A manager who assumes AI is still peripheral may accidentally create shadow workflows where employees use it heavily without shared standards. A manager who pushes it recklessly can create the opposite problem: coerced adoption with no trust. Neither path is stable.

Microsoft’s “Frontier Firm” framing shows what happens when leadership embraces AI as operating design rather than novelty. Workers in those firms are more likely to say the company is thriving, more likely to report meaningful work, and less likely to fear job loss from AI. That does not prove those firms are morally superior or even strategically correct in the long run. It does show that leadership can change the emotional climate around AI by deciding whether it is used as a threat, a toy, or a serious capacity tool.

This is why managers are the swing group among the three camps. They can turn doubters into competent users by making tasks and guardrails specific. They can force resisters into sabotage or exit if they confuse refusal with ignorance. They can restrain power users before local experimentation turns into invisible dependence. The next two years will probably be decided less by model capability than by whether middle and senior managers learn how to govern use without either panicking or surrendering judgment.

The next divide will be organizational

A lot of commentary still treats AI adoption as a personal choice. That frame is fading. The more important divide is becoming organizational. Some firms will build strong workflows, training, review structures, and clear boundaries around model use. Others will buy tools, cut corners, and let the burden fall on individuals. The same person can look like a power user in one workplace and a resister in another.

The labor market signals are already moving. The World Economic Forum says employers expect 39% of workers’ core skills to change by 2030, with AI and big data, networks and cybersecurity, and technological literacy among the fastest-rising skill areas. The report also says analytical thinking, resilience, creative thinking, and lifelong learning remain central. That mix matters. The future is not “human skills or AI skills.” It is workplaces that can combine technical fluency with judgment, adaptability, and social intelligence.

PwC’s 2025 AI Jobs Barometer pushes the point further. It says wages are rising twice as fast in the most AI-exposed industries as in the least exposed, skills for AI-exposed jobs are changing 66% faster than for other jobs, and workers with AI skills command a 56% wage premium. Those figures do not prove that every worker will gain from AI. They do show that firms are beginning to reward fluency where the work can absorb it. The risk is not only displacement. It is a widening gap between organizations that teach bounded AI competence and organizations that leave workers to improvise.

The OECD adds an important corrective. Its 2025 outlook describes labor markets as still strong by historical standards, with record-high employment and low unemployment across the OECD, while also warning that support and guidance are needed because much depends on how AI is used and because gaps in regulation leave workers exposed. That is a better frame than either euphoria or apocalypse. The economy has not collapsed under AI. It is being reorganized unevenly, and governance quality will decide a lot of the distributional outcome.

So the next divide is not just between people who “like AI” and people who do not. It is between institutions that know where AI belongs, institutions that deploy it sloppily, and institutions that refuse it until trust improves. Workers will feel those worlds very differently even if they use the same underlying models.

What doubters get right

Doubters are right about more than enthusiasts admit. They are right that model failures still show up in ordinary use. They are right that hype routinely outruns reliability. They are right that a lot of public AI writing confuses benchmark gains with human usefulness. They are right that executives often announce transformation long before work has actually been redesigned. Gallup, McKinsey, and Stanford all point to some version of that mismatch between expansion, experimentation, and real operational maturity.

They are also right that the cultural presentation of AI is full of empty theater. Much of what gets sold as progress is really interface novelty, investor signaling, or management pressure wrapped in futuristic language. A worker forced to use AI for tasks where it adds little value is not resisting the future. They are resisting bad implementation. A teacher who sees students use chatbots to bypass the hard part of thinking is not romantic. They are reacting to a real pedagogical loss. A lawyer who distrusts synthetic authority is doing their job.

Where doubters fail is in assuming that because the hype is inflated, the utility must be trivial. That does not follow. Some of the most over-marketed technologies still become decisive under the right constraints. AI fits that pattern. You can laugh at the hype and still be wrong about the economics. The worker who treats AI as a clown show may lose to a peer who uses it for research triage, documentation, drafting, meeting synthesis, and repetitive client communication. The firm that sneers at all AI may later find that competitors quietly shifted cost, speed, and responsiveness in ways that now feel structural.

So doubters deserve respect, not flattery. Their skepticism is often healthier than corporate boosterism. It becomes costly only when it hardens into refusal to learn the boundaries of real utility.

What power users get right

Power users are right about the basic shape of the opportunity. The value of AI is not one spectacular answer. It is the compounding of many small delegations. That is why the most serious users sound less impressed than casual observers expect. They are not in love with the machine. They are in love with the workflow improvement.

Microsoft’s Frontier Firm research, McKinsey’s workplace findings, Anthropic’s task-concentration data, and Google’s survey of young leaders all point to the same pattern: the users extracting the most value are the ones who treat AI as part of a broader work system. They specify tasks, keep review authority, reuse successful structures, and know that good delegation depends on context. Anthropic’s distinction between automation and augmentation is useful here. A lot of current high-value use is still collaborative rather than fully autonomous. The best users often want the model near the work, not in total control of it.

Power users are also right that many critics underestimate boring gains. The St. Louis Fed, NBER, HBS, MIT Sloan, and Gallup all document performance improvements, time savings, or stronger task fit under real conditions. Those gains are not fantasy. They are already large enough to change internal status, wage premiums, skill demand, and perceived capacity in some firms and sectors. PwC’s finding that AI-exposed jobs are seeing much faster skill change and significant wage premiums reinforces the same point from the labor-market side.

Still, power users often underrate the cultural cost of normalizing machine mediation. They are so used to bounded delegation that they can forget how strange their assumptions sound to everyone else. To a resister, “I let the model draft most of it and then I edit” may sound like a degradation of authorship. To a doubter, it may sound like dependence masquerading as skill. Power users are usually ahead on the utility and behind on the politics.

What resisters will force everyone else to confront

Resisters are going to shape the future even if they never become heavy users. They are the group most likely to keep asking the questions that expansionary institutions would rather skip. Who is accountable for machine-shaped output? What parts of thinking should not be externalized? When does assistance become de-skilling? What rights do workers have if AI use changes performance expectations? What forms of disclosure are owed to clients, students, readers, or patients? What gets trained on whose labor? What happens when tool access becomes a condition of keeping up? Those questions do not disappear because the model got better at coding.

NIST’s risk framework, Pew’s control findings, OECD’s governance warnings, and the public suspicion captured in journalism research all say the same thing in different languages: deployment without legitimacy is unstable. The future does not belong only to the fastest adopters. It belongs to the institutions that can convince people the bargain is fair, bounded, and accountable.

That is why the resister is not a side character. They are the stress test. They force enthusiasts to separate real value from managerial fantasy. They force builders to think about control, verification, and consent. They force organizations to admit that productivity is not the only metric that matters. A school, newsroom, law firm, hospital, or design studio is not just a bundle of tasks waiting to be compressed. It is a place where standards, learning, trust, and status are reproduced.

The public will keep talking about AI as if one side will win: the believers or the skeptics. That is too simple. The more likely future is messier. Doubters will learn more than they expected. Power users will discover limits they ignored. Resisters will block some uses and improve others by forcing sharper boundaries. The people who matter most will be the ones who can hold all three truths in view at once: AI is already useful, already overhyped, and already changing the terms of work faster than most institutions are prepared to handle.

FAQ

They are doubters, power users, and resisters. Doubters still see AI mainly through visible failures, power users build it into recurring workflows, and resisters understand the trajectory and still reject much of the bargain. The categories describe social behavior and judgment, not fixed personality types.

No. Many doubters have seen real failures and are reacting to them rationally. The problem is that they often judge AI by public bloopers rather than by the narrower, quieter tasks where it is already useful.

A power user does not just ask a chatbot random questions. They build repeatable workflows around models, connect them to tools or files, reuse successful prompt patterns, and keep human review where it matters.

A resister is someone who understands AI’s growing capability and still wants distance. Their reasons can include ethics, labor politics, privacy, professional standards, or fear of cognitive erosion.

Yes. Stanford reports that 78% of organizations used AI in 2024, and Gallup says half of employed American adults now use AI in their role at least a few times a year. Usage is broad even though deep, high-frequency use remains more concentrated.

Because trust is lagging behind capability. Pew found that half of Americans feel more concerned than excited about AI in daily life, and most want more control over how AI is used in their lives.

Yes, though they are still early. McKinsey says 23% of respondents report scaling agentic AI somewhere in the enterprise and another 39% are experimenting with it.

The phrase points to systems that can keep working across many steps, tools, or files without a human manually guiding each action. Anthropic’s Claude 4 launch describes sustained performance on long-running tasks and agent workflows lasting for hours.

Yes. NBER found a 14% productivity lift in customer support from AI assistance, and the St. Louis Fed found self-reported time savings equal to 5.4% of work hours for users in late 2024.

No. The benefits are uneven. NBER found much larger gains for novice and lower-skilled workers, while HBS found strong gains on some tasks and meaningful performance drops on harder tasks outside AI’s frontier.

Because local task gains are easier to achieve than organizational redesign. Gallup says employees often report productivity benefits, yet firm-wide transformation remains limited, and McKinsey says only 1% of leaders call their organizations mature in AI deployment.

Not only. Gallup found that many non-users cite ethical opposition, privacy or compliance concerns, and a preference for existing ways of working. Utility is only part of the story.

Gallup’s Gen Z research found that weekly use stayed high while excitement fell and anger rose. Many young users worry that AI may hurt learning, creativity, and long-term skill development even while it saves time in the short run.

The strongest case is not that AI is fake. It is that routine assistance may weaken judgment, learning, authorship, and trust before institutions know how to manage the trade-offs. Pew, HBS, and MIT Sloan all point to parts of that concern.

Usually no. Strong users often succeed because they do not trust it completely. They give the model bounded tasks, verify results, and keep authority over decisions that carry real risk.

Gallup found that managerial support and workflow fit strongly shape whether employees become adopters or holdouts. Leaders also tend to underestimate current employee use, which can produce poor policy and shadow adoption.

More organizational than personal. The firms that train workers, set boundaries, and build credible review structures will look very different from firms that buy tools and improvise. WEF, PwC, and OECD all suggest that skill change, governance, and institutional design will matter heavily.

Right now the evidence points more strongly to uneven task change than total replacement. Gallup, McKinsey, and Anthropic each show expanding use, local automation, and shifting task composition, while large-scale enterprise redesign is still incomplete.

Author: Jan Bielik, CEO & Founder of Webiano Digital & Marketing Agency

This article is an original analysis supported by the sources cited below.

The 2025 AI Index Report

Stanford HAI’s annual benchmark report on AI adoption, investment, safety, and public sentiment.

How Americans View AI and Its Impact on Human Abilities, Society

Pew research on concern, excitement, perceived risks, and AI’s effect on human abilities.

Americans’ awareness of AI and views of use in daily life, control over it

Pew’s detailed survey on AI awareness, interaction frequency, willingness to use AI, and perceived control.

What the data says about Americans’ views of artificial intelligence

A concise Pew synthesis of recent U.S. attitudes, adoption patterns, and use cases.

Rising AI Adoption Spurs Workforce Changes

Gallup’s 2026 workplace survey on AI usage, productivity, staffing effects, and employee perceptions.

AI in the Workplace: What Separates Adopters and Holdouts

Gallup’s analysis of why some workers adopt AI and why others refuse or avoid it.

Gen Z’s AI Adoption Steady, but Skepticism Climbs

Gallup research on Gen Z usage, sentiment, learning concerns, and workplace attitudes toward AI.

Superagency in the workplace: Empowering people to unlock AI’s full potential

McKinsey’s report on AI maturity, employee readiness, executive underestimation, and workplace change.

The state of AI in 2025

McKinsey’s global survey on enterprise AI use, scaling, risks, and agentic AI experimentation.

2025: The year the Frontier Firm is born

Microsoft WorkLab’s view of AI-native organizations, employee sentiment, and capacity expansion.

Anthropic Economic Index report: Economic primitives

Anthropic’s analysis of real-world Claude usage, task concentration, automation, and augmentation patterns.

Introducing Claude 4

Anthropic’s product launch describing long-running tasks, agent workflows, memory, and tool use.

OpenAI o3 and o4-mini System Card

OpenAI’s system card overview for o3 and o4-mini, including model behavior and safety framing.

Artificial Intelligence Risk Management Framework: Generative Artificial Intelligence Profile

NIST’s official framework for evaluating and managing generative AI risks.

Generative AI at Work

NBER field evidence on productivity gains from AI assistance in customer support work.

The Rapid Adoption of Generative AI

NBER evidence on how quickly generative AI spread at work and at home in the United States.

The Impact of Generative AI on Work Productivity

St. Louis Fed analysis of work-hour savings, usage intensity, and potential productivity effects.

Humans vs. Machines: Untangling the Tasks AI Can and Can’t Handle

Harvard Business School’s summary of the jagged-frontier research on where AI helps and where it hurts knowledge work.

Generative AI changes how employees spend their time

MIT Sloan’s coverage of research on how AI changes task mix, especially for software developers.

The Fearless Future: 2025 Global AI Jobs Barometer

PwC’s labor-market analysis of wages, skills, and productivity in AI-exposed jobs and industries.

Future of Jobs Report 2025: The jobs of the future and the skills you need to get them

World Economic Forum summary of projected job growth, skill shifts, and rising demand for AI-related capabilities.

The Future of Jobs Report 2025 Skills outlook

WEF’s deeper chapter on skills disruption, training demand, and the limits of GenAI substitution.

Google Workspace and the Harris Poll survey young workers on AI

Google’s report on younger leaders who are actively shaping AI around their own workflows.

Global audiences suspicious of AI-powered newsrooms, report finds

Reuters reporting on public discomfort with AI-produced news and broader trust concerns.

OECD Employment Outlook 2025

OECD’s labor-market outlook, including strong employment conditions and warnings about how AI is governed and used.